Optimizing Long-term Social Welfare in Recommender Systems

Mladenov et al. (ICML 2020)

MLRG, Winter 2021: Responsible ML

Betty Shea, 2021-11-24

*picture from AP

MLRG theme: Responsible ML

- Bias to address

- What is unfair?

- What is fair?

Introduction presentation

- ranking bias

- discrimination

- equal opportunity

MLRG theme: Responsible ML

- Bias to address: Introduce ranking bias

- What is unfair? Social welfare

- What is fair? Unequal opportunity

Today's paper

Classic ethics question

Individual rights

Common good

* picture from AFP

* picture from socialprotection.org

Taxation / social insurance

Main points from paper

- better algorithm for recommender systems

- unrealistic to consider individual utility in isolation

- myopic matching policies lead to suboptimal outcomes

- connection to outcomes that are "fairer in a utilitarian sense"

Notation

Setting

Notation

Process

Notation

Recommender system policy

Notation

Per user

Per provider

Provider abandons RS platform if viability threshold not met.

1-d examples

1-d examples

- Three content providers each with viability = 2

- Six users with utility based on distance to provider

1-d examples

- Myopic RS matching

1-d examples

- Result

- users 1, 2 and 3 --> provider 1

- users 4 and 5 --> provider 2

- user 6 --> provider 3

- Provider 3 goes out of business

- Maximizes first-round total utility: 10 + 2ε, for a small ε > 0

1-d examples

- Result of subsequent rounds

- users 1, 2 and 3 --> provider 1

- users 4, 5 and 6 --> provider 2

- Per round total utility: 8 + 2ε

- user 6's utility goes from 2 to 0 without provider 3

1-d examples

- Non-myopic policy could be

- users 1 and 2 --> provider 1

- users 3 and 4 --> provider 2

- users 5 and 6 --> provider 3

- Per round total utility: 10 - 2ε

- Users 3 and 5 give up 2ε utility per round
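The dynamics of the 1-d example can be sketched in a few lines of Python. This is a minimal illustration, not the paper's code: the utility table, the value of `EPS`, and the function names are all hypothetical, chosen only so that users 3 and 5 sit slightly closer to one provider than to the next.

```python
# Toy version of the 1-d example. All numbers below are hypothetical.
EPS = 0.25      # assumed small utility gap
VIABILITY = 2   # each provider needs at least 2 users per round

# UTILITY[u][p]: utility of user u when matched to provider p (illustrative values)
UTILITY = [
    [2.0, 0.0, 0.0],              # user 1
    [2.0, 0.0, 0.0],              # user 2
    [1.0 + EPS, 1.0 - EPS, 0.0],  # user 3, slightly prefers provider 1
    [0.0, 2.0, 0.0],              # user 4
    [0.0, 1.0 + EPS, 1.0 - EPS],  # user 5, slightly prefers provider 2
    [0.0, 0.0, 2.0],              # user 6, only served well by provider 3
]


def myopic_round(active):
    """Match every user to their best active provider; drop non-viable providers."""
    counts = {p: 0 for p in active}
    total = 0.0
    for row in UTILITY:
        best = max(active, key=lambda p: row[p])
        counts[best] += 1
        total += row[best]
    survivors = [p for p in active if counts[p] >= VIABILITY]
    return total, survivors


def run_myopic(rounds):
    """Per-round total utility when the myopic matching is repeated."""
    active = [0, 1, 2]
    totals = []
    for _ in range(rounds):
        total, active = myopic_round(active)
        totals.append(total)
    return totals


def run_fixed(assignment, rounds):
    """Per-round total utility of a fixed assignment that keeps every provider viable."""
    for p in range(3):
        assert assignment.count(p) >= VIABILITY, "provider would leave"
    per_round = sum(UTILITY[u][p] for u, p in enumerate(assignment))
    return [per_round] * rounds
```

With these assumed numbers, `run_myopic(3)` gives `[10.5, 8.5, 8.5]` (provider 3 leaves after the first round), while `run_fixed([0, 0, 1, 1, 2, 2], 3)` gives `[9.5, 9.5, 9.5]`: lower in the first round, higher in every round after.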

Fairness?

- subsidize provider 3

- slightly lower rewards for users 3 and 5 at every timestep in perpetuity

- much higher reward for user 6 in perpetuity

Social welfare

- group every T timesteps into an epoch

- social welfare at epoch k under a given policy

- long-run social welfare

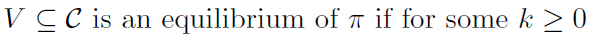

Equilibrium

- subset of providers that are viable in the long run

- many equilibria - find the one that maximizes long-run social welfare

- achieve with a single rule to apply across all epochs

Objective (for any epoch)

(expectation over user query distribution, timesteps within epoch and policy)

Objective (for any epoch)

- maximize social welfare

- ensure that all providers remain viable

- how does this translate to optimality over infinite epochs?
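To make the two requirements concrete, here is a small sketch that maximizes welfare subject to every provider staying viable. It deliberately swaps in brute-force enumeration over deterministic assignments instead of the paper's linear program, and it reuses a hypothetical six-user, three-provider utility table; none of the names or numbers come from the paper.

```python
from itertools import product

VIABILITY = 2     # assumed viability threshold per provider
N_PROVIDERS = 3

# Hypothetical utilities: UTILITY[u][p] is user u's utility at provider p.
UTILITY = [
    [2.0, 0.0, 0.0],
    [2.0, 0.0, 0.0],
    [1.25, 0.75, 0.0],
    [0.0, 2.0, 0.0],
    [0.0, 1.25, 0.75],
    [0.0, 0.0, 2.0],
]


def welfare(assignment):
    """Total user utility of one assignment (tuple of provider indices)."""
    return sum(UTILITY[u][p] for u, p in enumerate(assignment))


def is_viable(assignment):
    """True if every provider receives at least VIABILITY users."""
    return all(assignment.count(p) >= VIABILITY for p in range(N_PROVIDERS))


def best_assignment(require_viability):
    """Exhaustively search all 3**6 assignments for the welfare maximizer."""
    candidates = product(range(N_PROVIDERS), repeat=len(UTILITY))
    if require_viability:
        candidates = (a for a in candidates if is_viable(a))
    return max(candidates, key=welfare)
```

On this toy table, `best_assignment(False)` returns `(0, 0, 0, 1, 1, 2)` with welfare 10.5, which leaves the third provider below threshold, while `best_assignment(True)` returns `(0, 0, 1, 1, 2, 2)` with welfare 9.5. Enumeration only works at this scale; it is here to show the constraint, not to suggest a practical solver.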

Solving the problem

- linear programming

- polynomial time for 1/ approximation

If utility function is additive

For non-linear utility functions

- linearize

Experiments

- linear programming solution to the welfare objective (LP-RS)

- three datasets

- synthetic data

- MovieLens movie ratings

- SNAP 2010 Twitter follower

- myopic policies underperform LP-RS

Model refinements

- fairness to individual users

- incomplete information

- robustness to changes in query distributions

- full RL approach

Fairness to individual users

- long-run utility for a user under a given policy

- take as benchmark the best possible policy for that user

- define fairness in terms of regret

Fairness to individual users

- regret of a user under a given policy

- maximum regret (MR) over users under that policy

- add a penalty term to objective based on MR
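A rough sketch of the regret penalty, under stated assumptions: per-user utilities are given as plain lists, each user's benchmark is simply the highest utility they could get from any provider (assuming some policy could keep that provider viable), and `tradeoff` is a hypothetical name for the regret-tradeoff parameter. The numbers are illustrative only.

```python
def regrets(achieved, best):
    """Per-user regret: benchmark utility minus utility under the policy."""
    return [b - a for a, b in zip(achieved, best)]


def max_regret(achieved, best):
    """Largest regret suffered by any single user."""
    return max(regrets(achieved, best))


def penalized_welfare(achieved, best, tradeoff):
    """Total welfare minus a penalty proportional to maximum regret."""
    return sum(achieved) - tradeoff * max_regret(achieved, best)


# Hypothetical long-run per-round utilities for six users.
BEST = [2.0, 2.0, 1.25, 2.0, 1.25, 2.0]        # each user's own benchmark
MYOPIC = [2.0, 2.0, 1.25, 2.0, 1.25, 0.0]      # one user loses their provider
SUBSIDIZED = [2.0, 2.0, 0.75, 2.0, 0.75, 2.0]  # two users give up a little
```

Here `max_regret(MYOPIC, BEST)` is 2.0 and `max_regret(SUBSIDIZED, BEST)` is 0.5, so with `tradeoff=1.0` the penalized objective is 6.5 for the myopic outcome and 9.0 for the subsidized one. How the ranking changes with `tradeoff` is exactly the tuning question raised about this approach.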

Comments?

- constraint in objective does not take into account relative cost of subsidizing providers

- regret benchmark (optimal policy for individual user) a bit unrealistic

- penalizing maximum regret does not necessarily protect individuals and requires picking regret-tradeoff parameter values
