Diffusion model for full field inference: application to weak lensing

Benjamin Remy

Survey Science Group, KICP

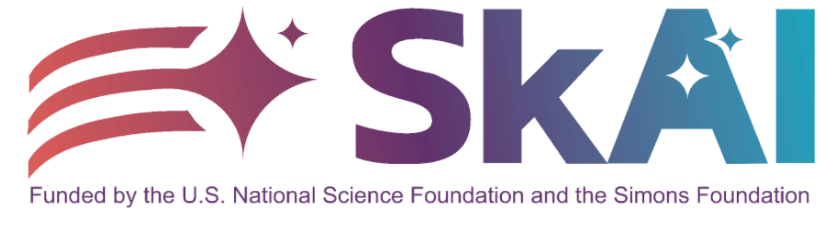

Shear

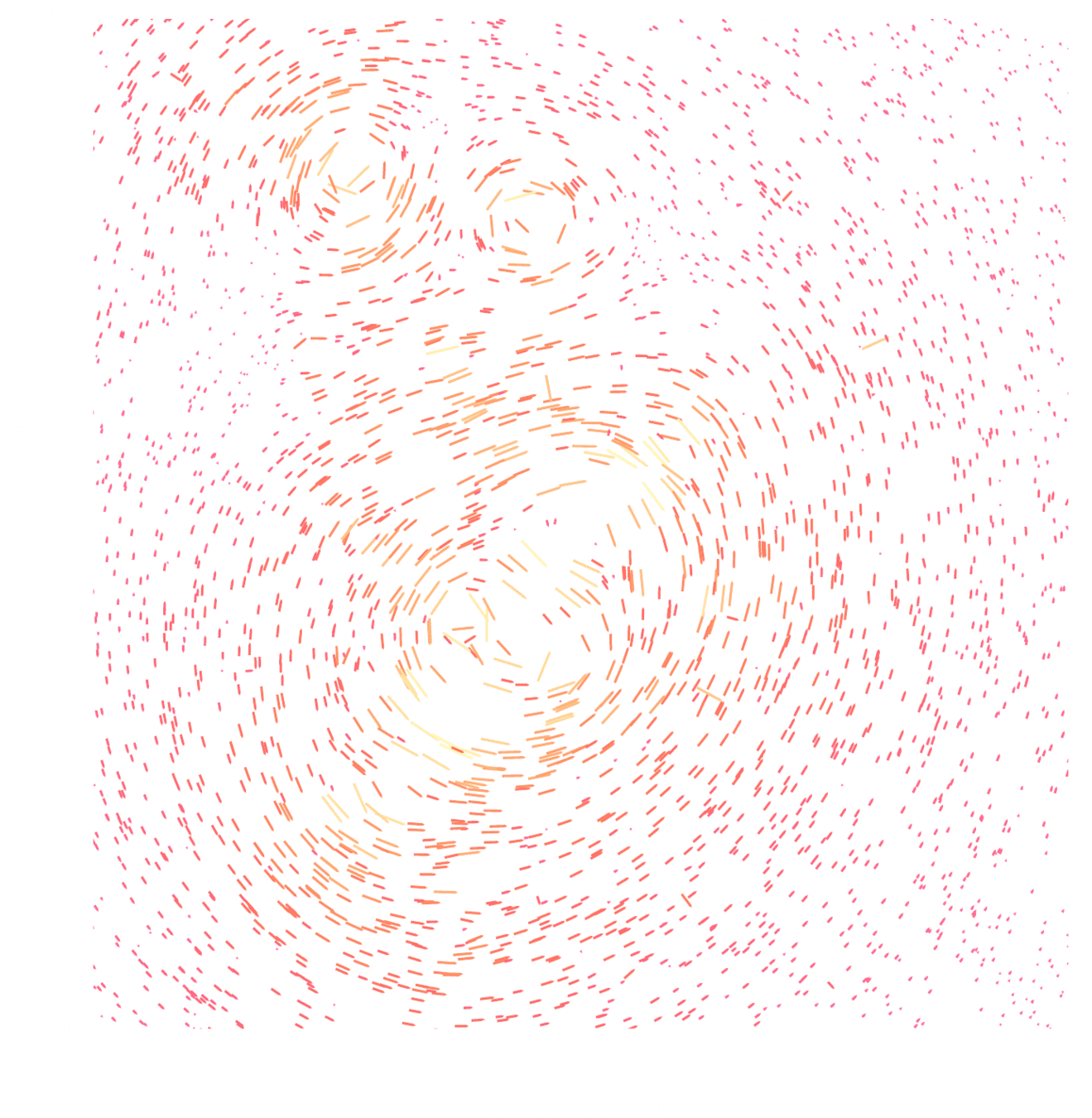

Convergence

Weak lensing mass-mapping as an inverse problem
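To make the link between the two fields concrete, here is a minimal NumPy sketch of the standard flat-sky Kaiser-Squires relation, in which the Fourier transform of the shear equals the convergence multiplied by a unit-modulus kernel. The function names, the periodic-map assumption, and the axis and sign convention are illustrative choices, not taken from the talk.

```python
import numpy as np

def ks_kernel(shape):
    """Kernel D(k) = (k1^2 - k2^2 + 2i k1 k2) / |k|^2 (assumed convention)."""
    k1 = np.fft.fftfreq(shape[0])[:, None]
    k2 = np.fft.fftfreq(shape[1])[None, :]
    k_sq = k1**2 + k2**2
    k_sq[0, 0] = 1.0                       # avoid 0/0 at the mean mode
    kernel = (k1**2 - k2**2 + 2j * k1 * k2) / k_sq
    kernel[0, 0] = 0.0                     # the mean of kappa produces no shear
    return kernel

def shear_from_convergence(kappa):
    """Forward operator: convergence map -> (gamma1, gamma2)."""
    gamma = np.fft.ifft2(ks_kernel(kappa.shape) * np.fft.fft2(kappa))
    return gamma.real, gamma.imag

def convergence_from_shear(gamma1, gamma2):
    """Direct inversion; recovers kappa only up to its mean."""
    gamma_hat = np.fft.fft2(gamma1 + 1j * gamma2)
    kappa = np.fft.ifft2(np.conj(ks_kernel(gamma1.shape)) * gamma_hat)
    return kappa.real

rng = np.random.default_rng(0)
kappa_true = rng.standard_normal((32, 32))   # stand-in for a convergence map
g1, g2 = shear_from_convergence(kappa_true)
kappa_rec = convergence_from_shear(g1, g2)
```

In this noiseless, unmasked, periodic setting the round trip recovers the map up to its mean. With shape noise and survey masks the direct inversion degrades, which is why mass-mapping is treated as an ill-posed inverse problem.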


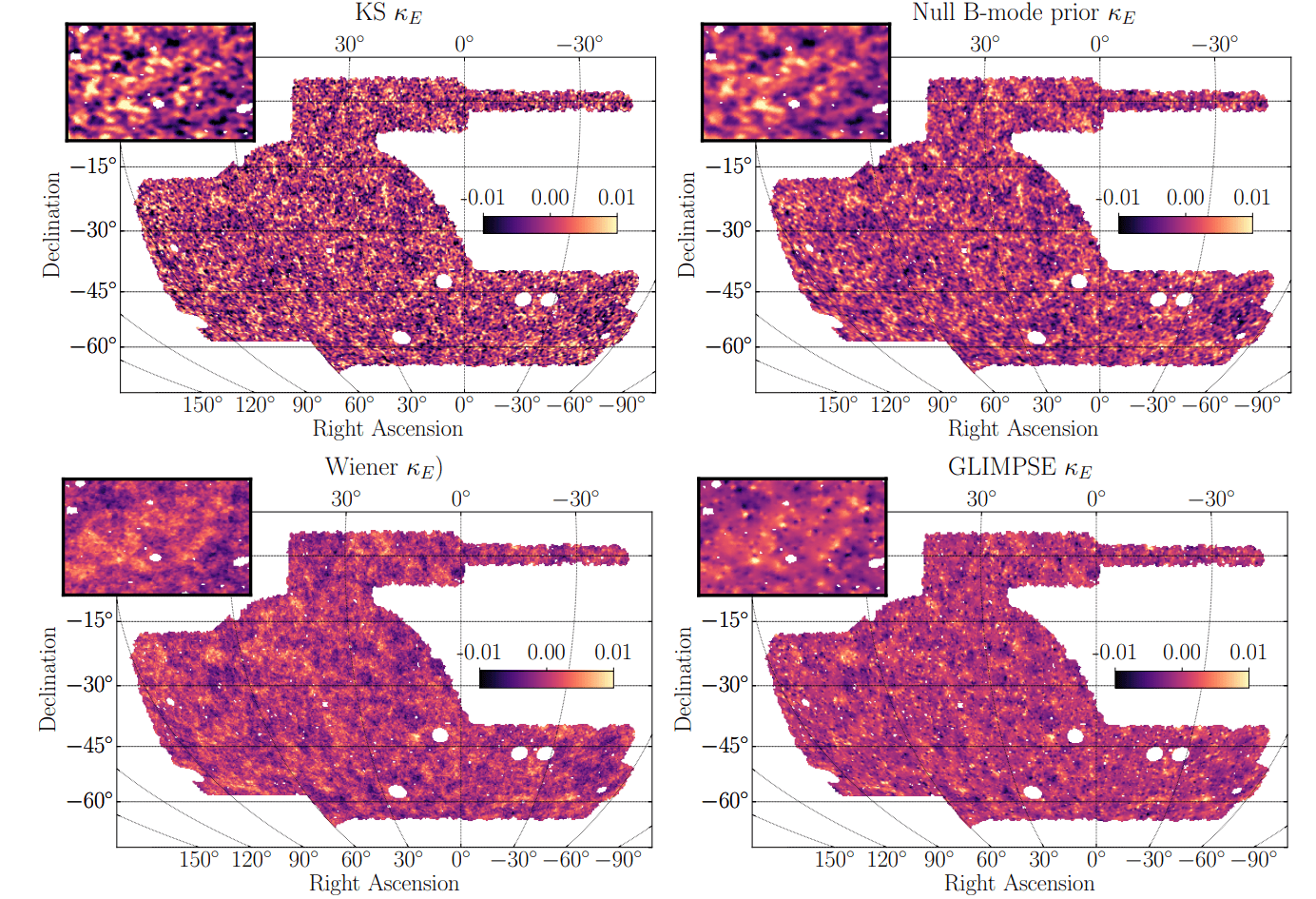

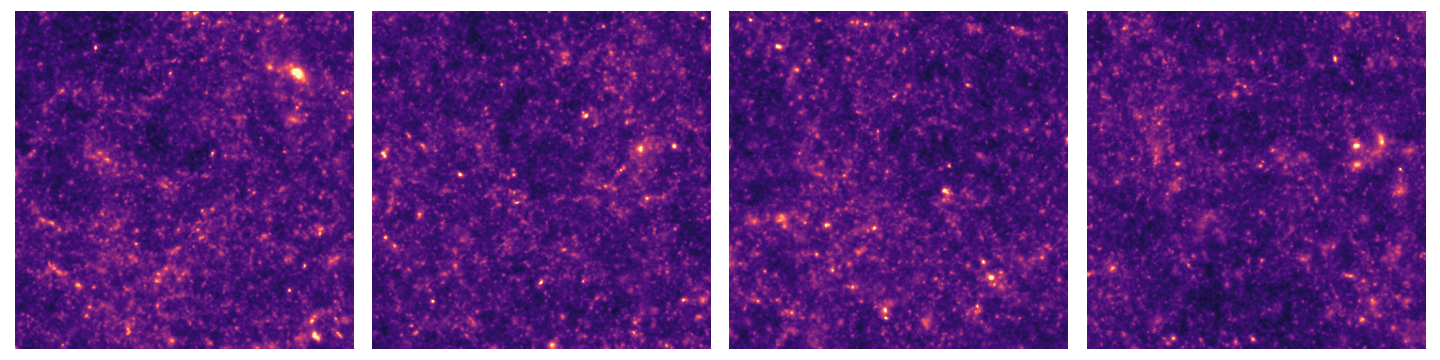

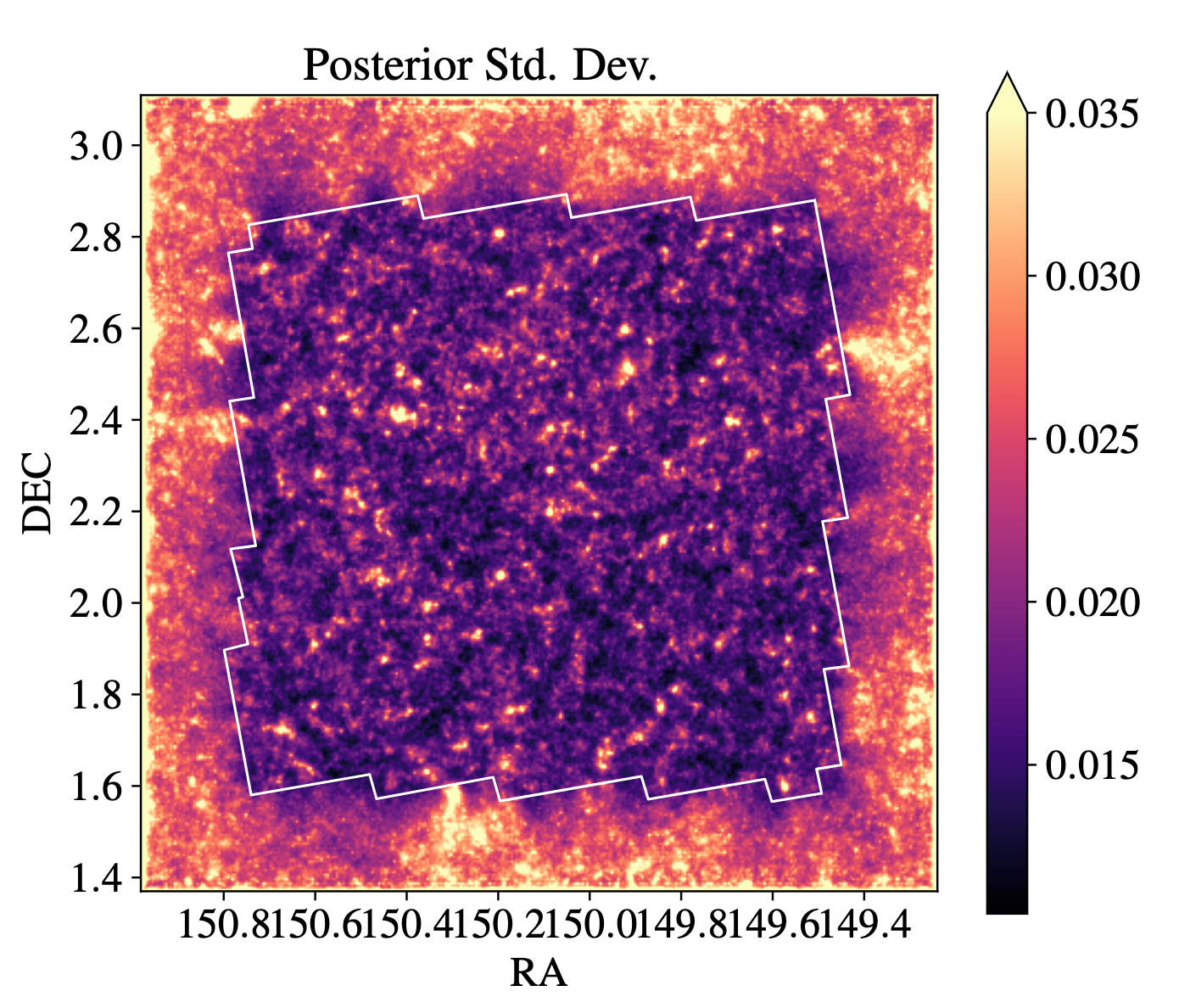

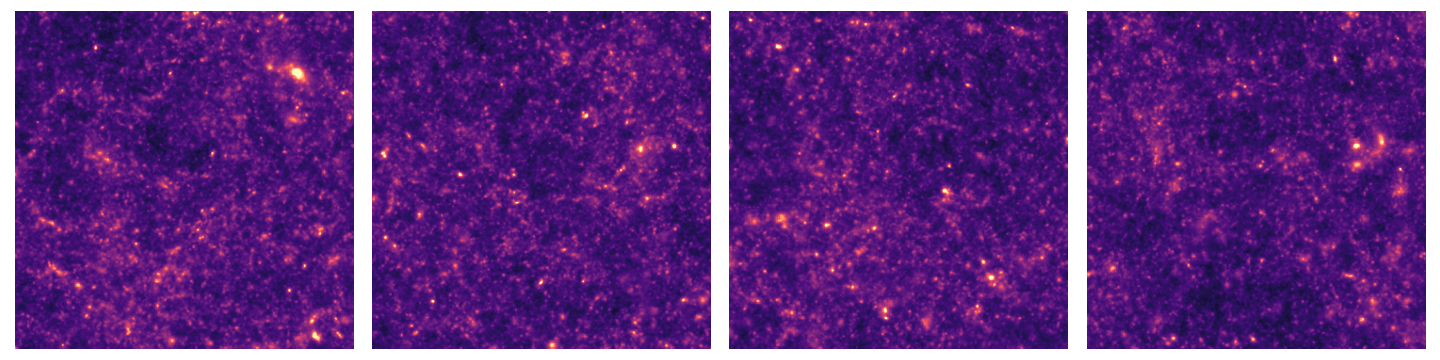

Mass-mapping with the Dark Energy Survey (DES) Y3

Jeffrey, Gatti, et al. 2021

What about using the prior from cosmological simulations?

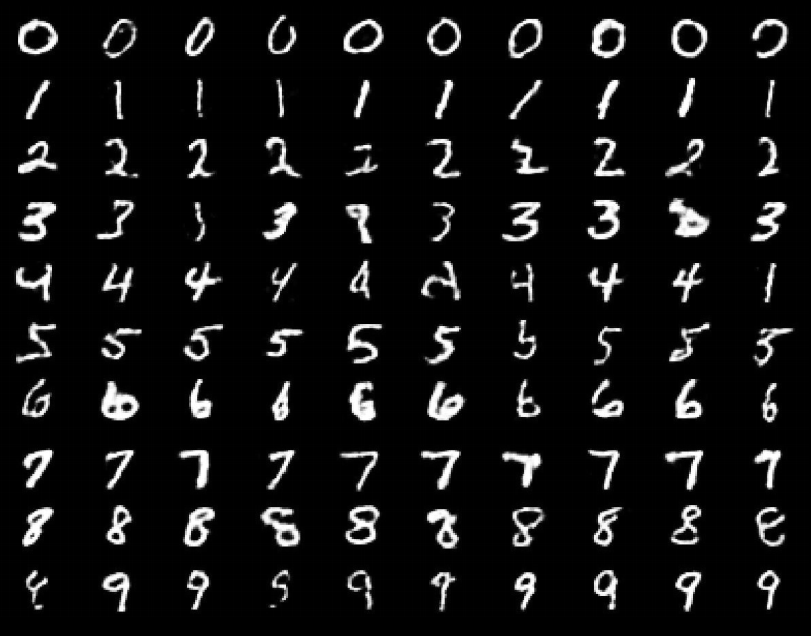

The evolution of generative models

- Deep Belief Network

(Hinton et al. 2006)

- Variational AutoEncoder

(Kingma & Welling 2014)

- Generative Adversarial Network

(Goodfellow et al. 2014), WGAN (2017)

- Diffusion models (Ho et al. 2020, Song et al. 2020)

Midjourney v3

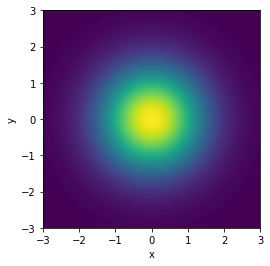

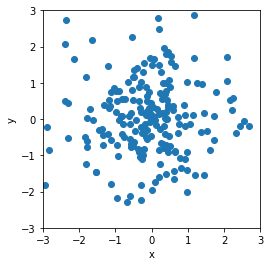

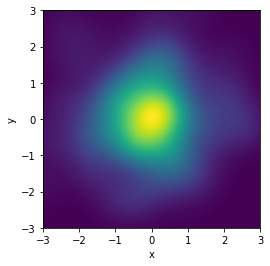

Generative modeling

Generative modeling aims to learn an implicit distribution $p(x)$ from which the training set $X = \{x_1, \ldots, x_N\}$ is drawn.

This usually means building a parametric model $p_\varphi(x)$ that tries to be close to $p(x)$.

Model

Samples

True

Once trained, we can typically sample from $p_\varphi$ and evaluate the density $p_\varphi(x)$.

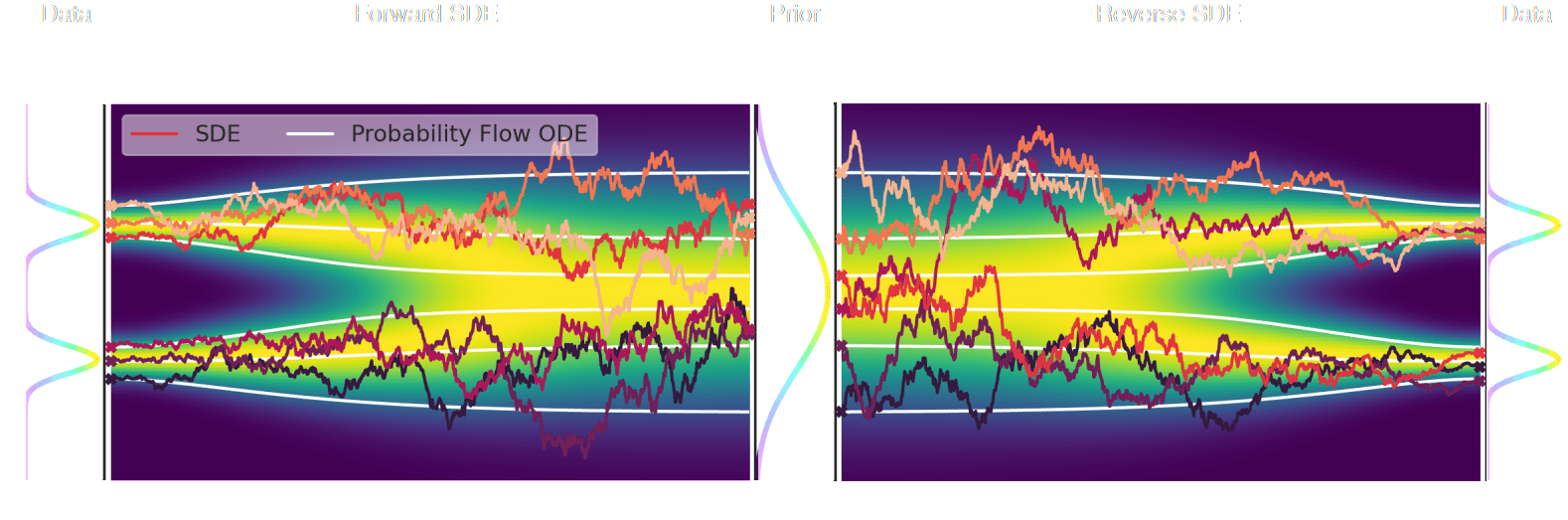

Diffusion models

Once the score $\nabla_x \log p_t(x)$ is learned, draw $x_T$ from the terminal noise distribution and solve the reverse SDE to get samples from the target distribution
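A minimal sketch of this sampling step on a 1D toy problem. It assumes a variance-exploding forward SDE with $\sigma^2(t) = \sigma_{\max}^2 t$ and a Gaussian target, so the score is known in closed form and stands in for a learned network. All names and values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
mu, s = 2.0, 1.0            # toy target distribution N(mu, s^2)
sigma_max = 10.0            # noise std reached at t = 1
n_steps, n_samples = 1000, 20_000
dt = 1.0 / n_steps
g2 = sigma_max**2           # g(t)^2 = d sigma^2(t) / dt for sigma^2(t) = sigma_max^2 * t

def score(x, t):
    """Exact score of p_t = N(mu, s^2 + sigma_max^2 * t); replaces a trained model."""
    return -(x - mu) / (s**2 + g2 * t)

# Draw x_T from the approximate terminal distribution N(0, sigma_max^2).
x = sigma_max * rng.standard_normal(n_samples)

# Euler-Maruyama integration of the reverse SDE from t = 1 down to t = 0.
for i in range(n_steps):
    t = 1.0 - i * dt
    noise = rng.standard_normal(n_samples)
    x = x + g2 * score(x, t) * dt + np.sqrt(g2 * dt) * noise

sample_mean, sample_std = x.mean(), x.std()
```

The final samples should have mean close to `mu` and standard deviation close to `s`, up to discretisation error from the finite number of steps.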

The score is all you need

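We learn the score by denoising our data

If $x$ is corrupted by additive Gaussian noise, $x' = x + n$ with $n \sim \mathcal{N}(0, \sigma^2 I)$

Then a denoiser $r_\varphi(x', \sigma)$ trained under an $\ell_2$ loss

satisfies Tweedie's formula at the optimum: $r_\varphi(x', \sigma) = x' + \sigma^2 \nabla_{x'} \log p_\sigma(x')$, where $p_\sigma$ is the distribution of the noisy data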
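Tweedie's formula can be checked numerically on a toy case. The sketch below assumes a 1D Gaussian prior, for which the $\ell_2$-optimal denoiser is linear and the score of the noisy distribution is known exactly. Variable names and values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
prior_std, sigma = 2.0, 1.0     # assumed toy prior N(0, 4) and noise level

# Clean data and their noisy counterparts.
x = prior_std * rng.standard_normal(200_000)
x_noisy = x + sigma * rng.standard_normal(x.shape)

# l2-optimal linear denoiser r(x') = a * x', fitted by least squares.
a_fit = np.sum(x_noisy * x) / np.sum(x_noisy**2)

def score_noisy(xp):
    """Exact score of the noisy marginal N(0, prior_std^2 + sigma^2)."""
    return -xp / (prior_std**2 + sigma**2)

def tweedie_denoiser(xp):
    """Denoiser predicted by Tweedie's formula from the score."""
    return xp + sigma**2 * score_noisy(xp)

a_tweedie = tweedie_denoiser(1.0)   # slope of the Tweedie denoiser
```

The fitted slope `a_fit` and the Tweedie slope `a_tweedie` should agree up to sampling noise, both being close to `prior_std**2 / (prior_std**2 + sigma**2)`.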

Diffusion models for inverse problems

$y = A x + n$, where the forward operator $A$ is known and encodes our physical understanding of the problem.

⟹ When $A$ is non-invertible or ill-conditioned, the inverse problem is ill-posed, with no unique solution $x$

Deconvolution

Inpainting

Denoising
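A small illustration of why such problems are ill-posed, using inpainting as the example: the forward operator discards some pixels, so two different signals can produce exactly the same data. The mask and signal below are made up for the sketch.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 8
mask = np.array([1, 1, 0, 1, 0, 0, 1, 1], dtype=bool)   # True = observed pixel

# Inpainting operator: keeps only the observed pixels, shape (5, 8).
A = np.eye(n)[mask]
rank = np.linalg.matrix_rank(A)

# Two signals that differ only on the unobserved pixels.
x1 = rng.standard_normal(n)
x2 = x1.copy()
x2[~mask] += 3.0

same_data = np.allclose(A @ x1, A @ x2)
```

Here `rank` is smaller than `n` and `same_data` is true, so the data alone cannot distinguish `x1` from `x2`. Extra information about plausible solutions is needed.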

Diffusion models for inverse problems

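Bayesian view of the problem: $p(x \mid y) \propto p(y \mid x)\, p(x)$

$p(y \mid x)$ is the data likelihood, which contains the physics

$p(x)$ is our prior knowledge on the solution

We can target the Maximum A Posteriori (MAP): $\hat{x} = \arg\max_x \, \log p(y \mid x) + \log p(x)$

For instance, if $y = A x + n$ and the noise $n \sim \mathcal{N}(0, \Sigma)$ is Gaussian: $\hat{x} = \arg\min_x \, \frac{1}{2} (y - A x)^\top \Sigma^{-1} (y - A x) - \log p(x)$

Or, we can aim to sample from the full posterior distribution $p(x \mid y)$ by MCMC techniques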

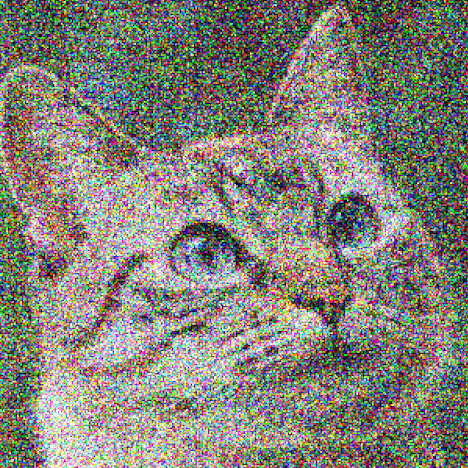

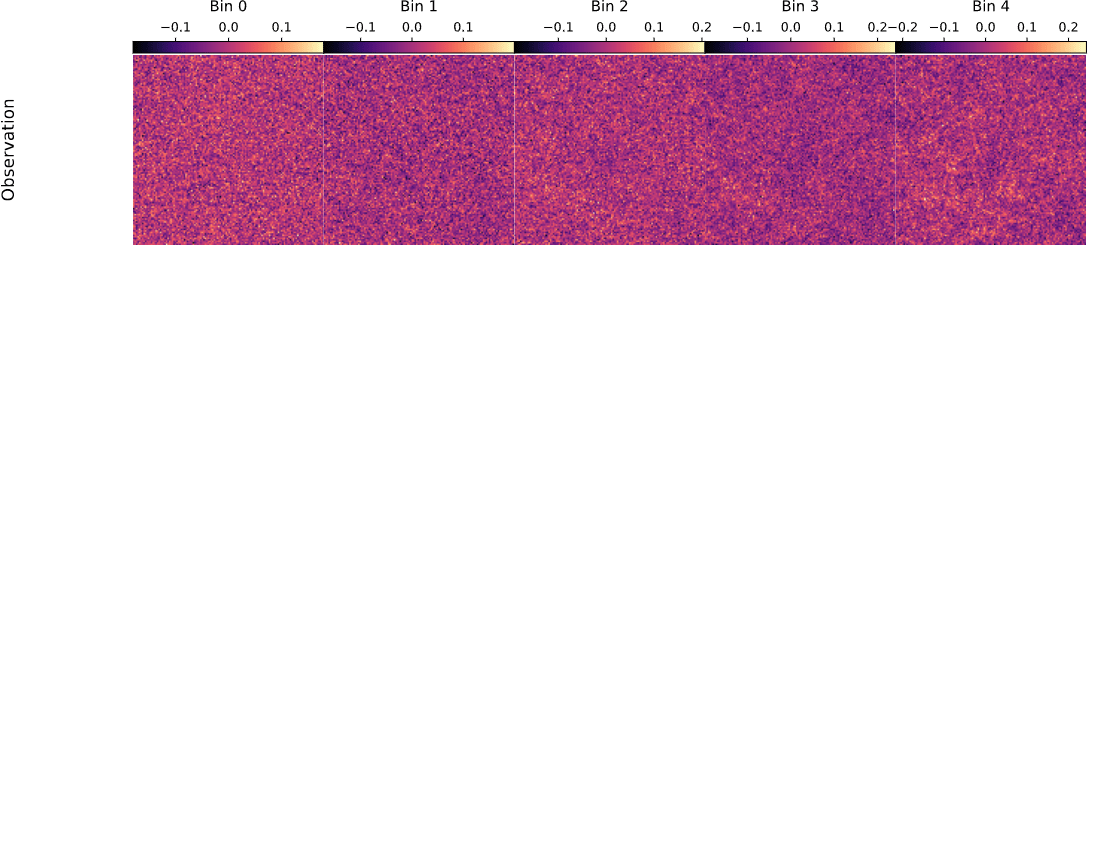

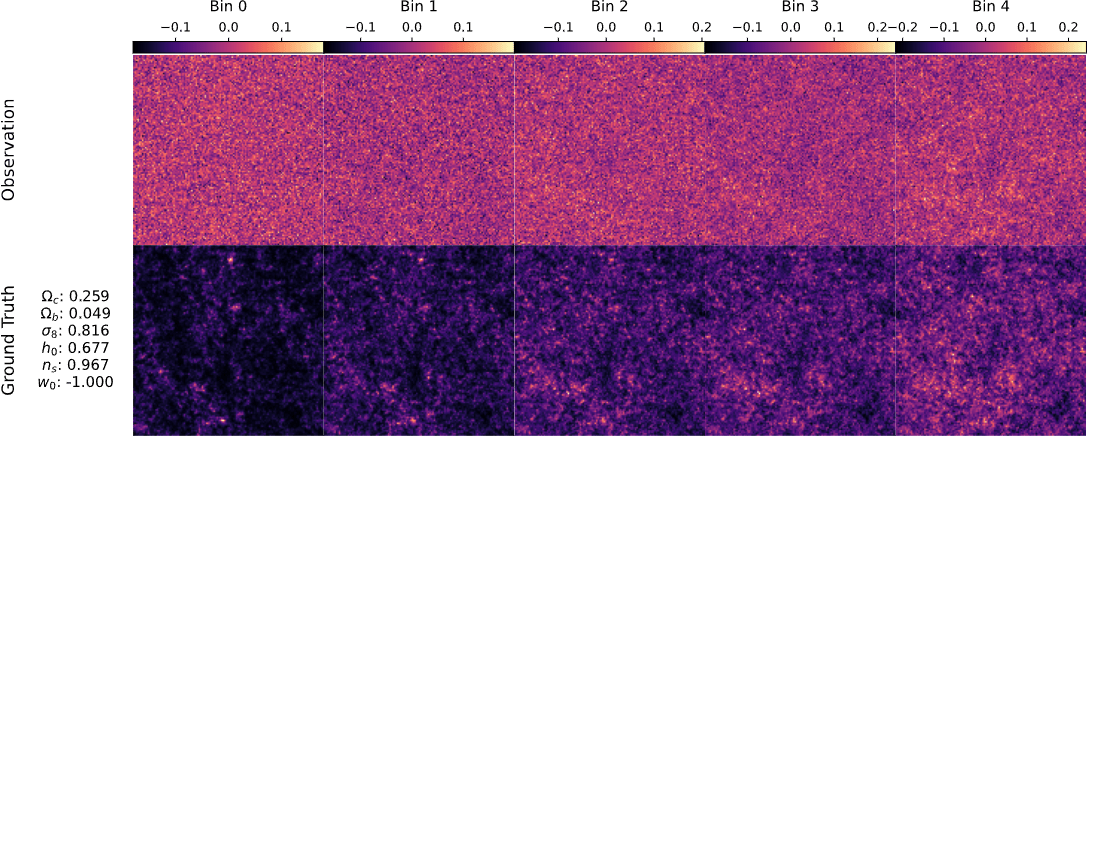

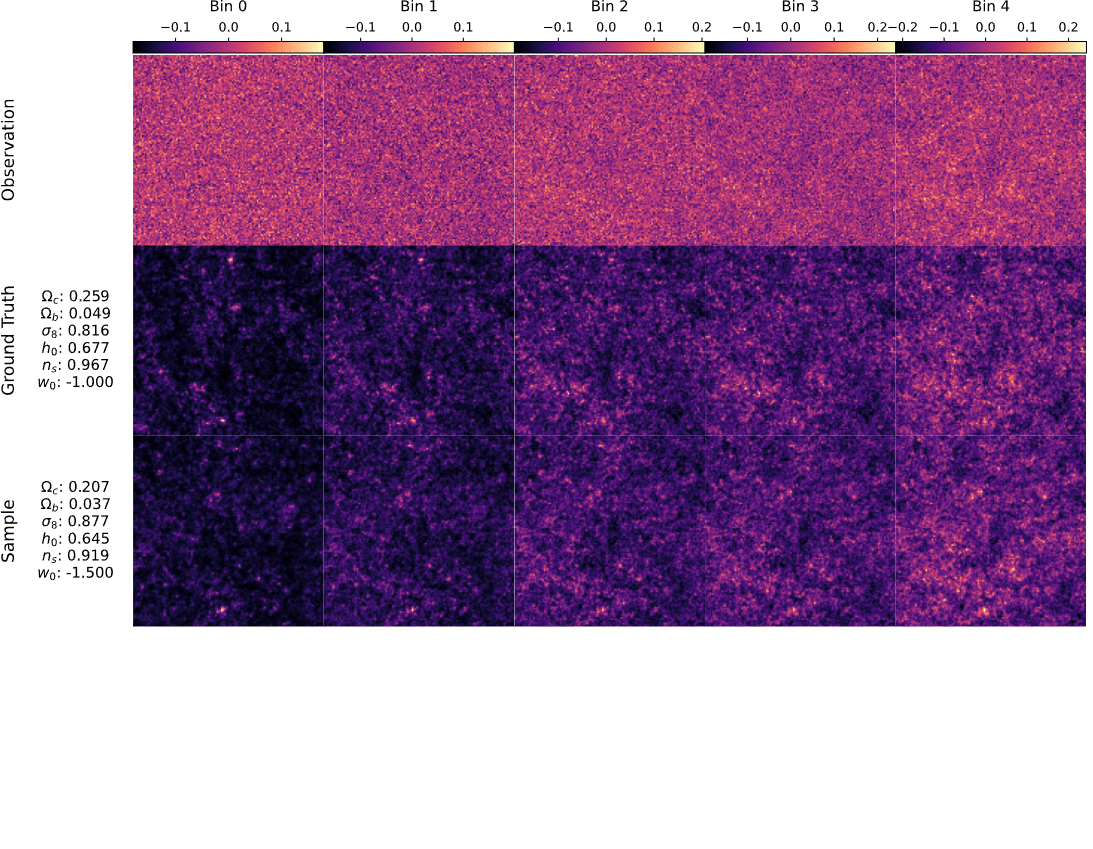
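As a worked example of the MAP estimate, the sketch below solves a toy inpainting problem under a Gaussian prior, where the minimiser has a closed form. The prior covariance, noise level, and mask are assumptions made for illustration only. In the talk the prior is learned from simulations instead.

```python
import numpy as np

rng = np.random.default_rng(0)
n, sigma_n = 16, 0.1

# Assumed Gaussian prior N(0, C) with exponentially decaying pixel correlations.
idx = np.arange(n)
C = np.exp(-np.abs(idx[:, None] - idx[None, :]) / 3.0)
C_inv = np.linalg.inv(C)

# Inpainting operator: only every other pixel is observed.
A = np.eye(n)[::2]

x_true = rng.multivariate_normal(np.zeros(n), C)
y = A @ x_true + sigma_n * rng.standard_normal(A.shape[0])

# MAP = minimiser of 0.5 * ||y - A x||^2 / sigma_n^2 + 0.5 * x^T C^{-1} x.
x_map = np.linalg.solve(A.T @ A / sigma_n**2 + C_inv, A.T @ y / sigma_n**2)

# Gradient of the negative log-posterior, which should vanish at the MAP.
grad = -A.T @ (y - A @ x_map) / sigma_n**2 + C_inv @ x_map
```

The prior term makes the linear system invertible even though `A` alone is rank-deficient, so the unobserved pixels are filled in from their correlations with the observed ones.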

Posterior sampling with diffusion models

Sampling from the prior

Posterior sampling with diffusion models

Sampling from the posterior

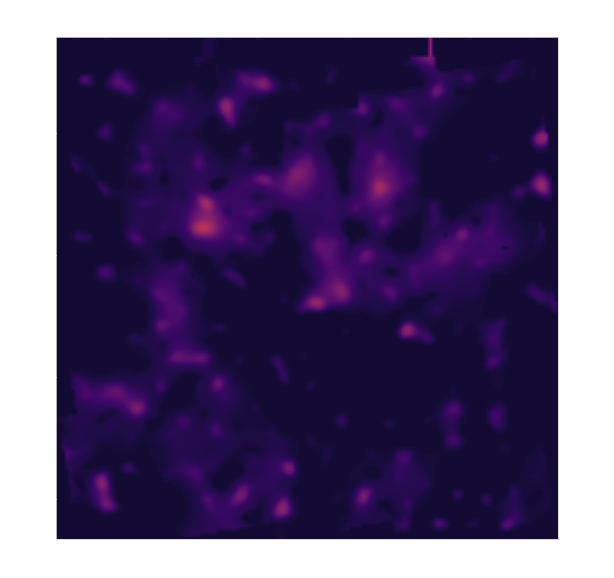

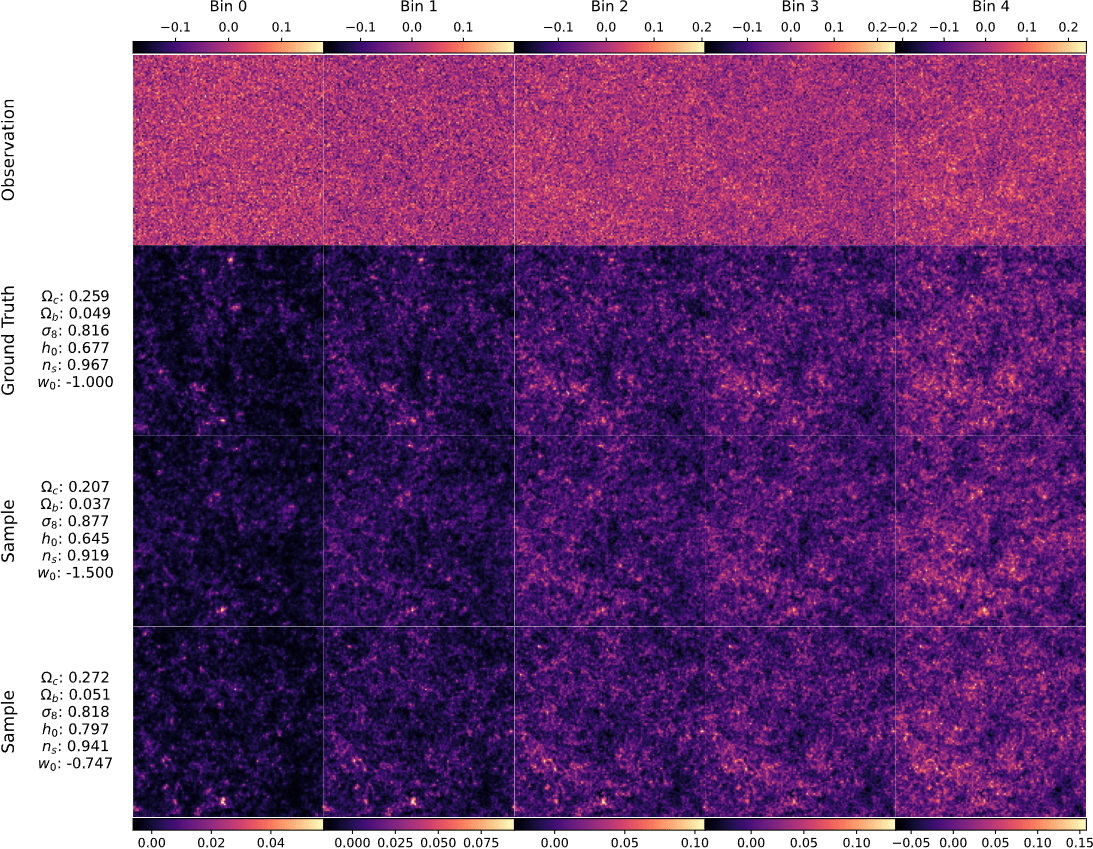

Ground truth simulation

Posterior samples
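A minimal sketch of posterior sampling with a score, on a 1D toy problem where the answer is known analytically. The posterior score is the sum of the prior score and the likelihood score. The sampler here is plain annealed Langevin dynamics, not necessarily the sampler used in the talk. The annealed likelihood variance `sigma_n**2 + sigma**2` and all numerical values are assumptions of this sketch.

```python
import numpy as np

rng = np.random.default_rng(0)
prior_std, sigma_n, y = 2.0, 0.5, 1.5     # toy prior N(0, 4), noise std, observation
n_chains, steps_per_level = 5000, 50

x = 3.0 * rng.standard_normal(n_chains)   # broad initialisation
for sigma in np.geomspace(3.0, 0.01, 30): # decreasing annealing noise levels
    prior_var = prior_std**2 + sigma**2   # variance of the noise-convolved prior
    like_var = sigma_n**2 + sigma**2      # annealed likelihood variance (assumption)
    step = 0.1 / (1.0 / prior_var + 1.0 / like_var)
    for _ in range(steps_per_level):
        prior_score = -x / prior_var      # stands in for the learned score network
        like_score = (y - x) / like_var   # analytic likelihood score
        noise = rng.standard_normal(n_chains)
        x = x + step * (prior_score + like_score) + np.sqrt(2 * step) * noise

# Analytic posterior for comparison.
post_var = 1.0 / (1.0 / prior_std**2 + 1.0 / sigma_n**2)
post_mean = post_var * y / sigma_n**2
```

The chains should end up distributed approximately as the analytic posterior, with a small bias from the finite Langevin step size.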

Posterior sampling with diffusion models

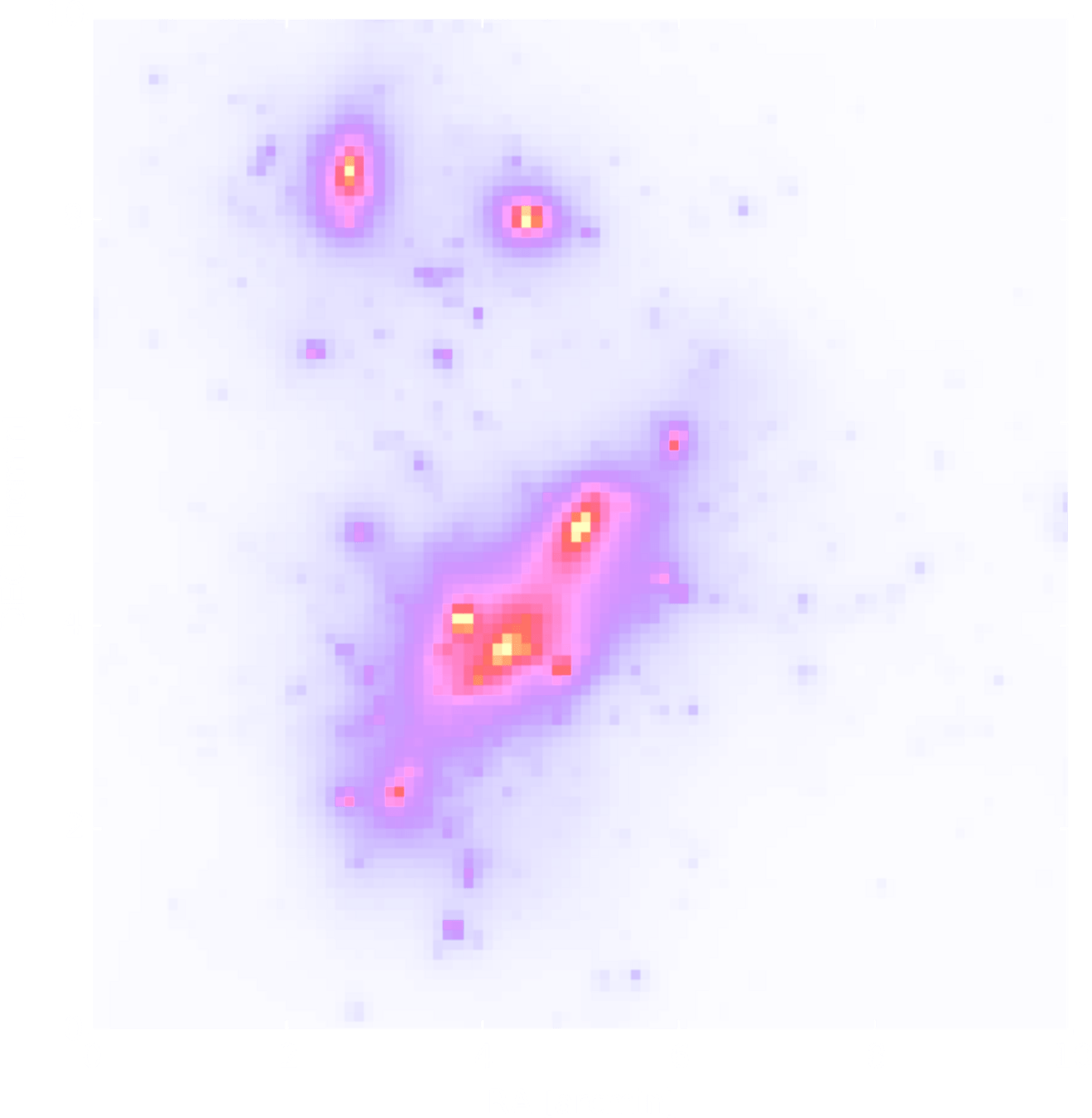

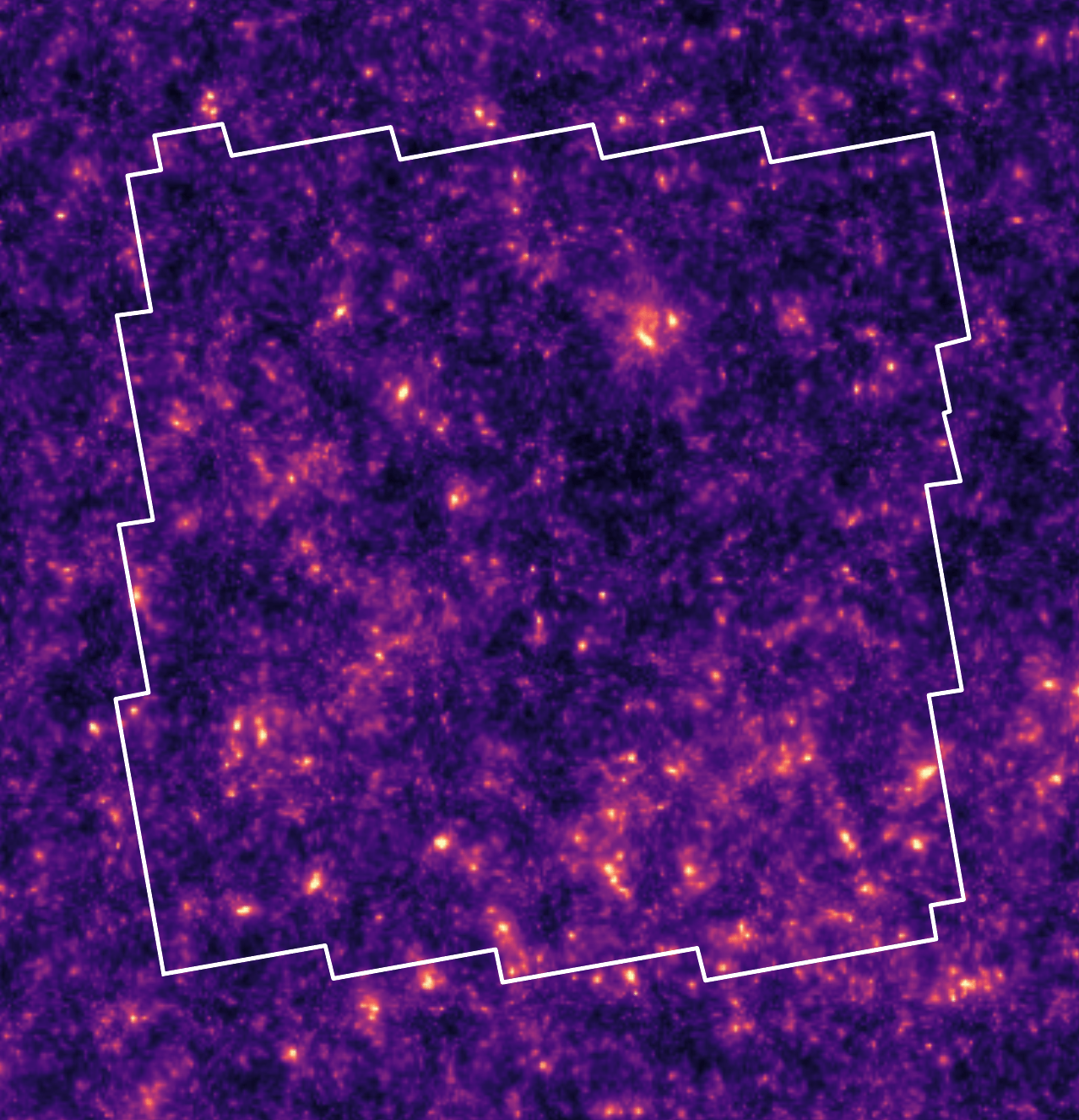

Application to HST/ACS COSMOS field

Massey et al. 2007

Remy et al. 2022 (Posterior mean)

Remy et al. 2022 (Posterior samples)

We built a generative model of mass-maps that we can condition on observations to get the full posterior distribution

But we implicitly assumed a cosmological model when training our prior $p(\kappa)$, i.e. it is really $p(\kappa \mid \theta)$ at a fixed cosmology $\theta$

How to infer jointly the cosmology and the mass map?

Benjamin Remy, Francois Lanusse, Niall Jeffrey, Jia Liu, Jean-Luc Starck, Ken Osato, Tim Schrabback, Probabilistic mass-mapping with neural score estimation, A&A 2022

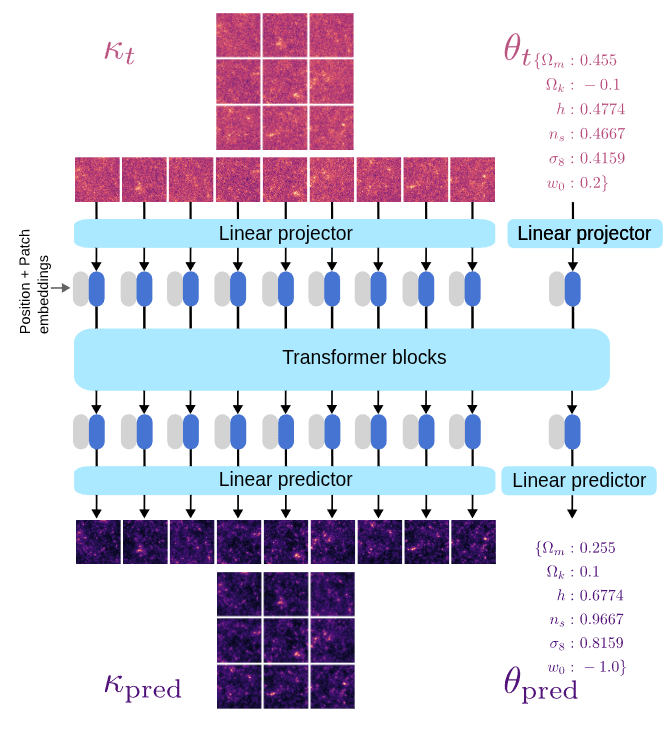

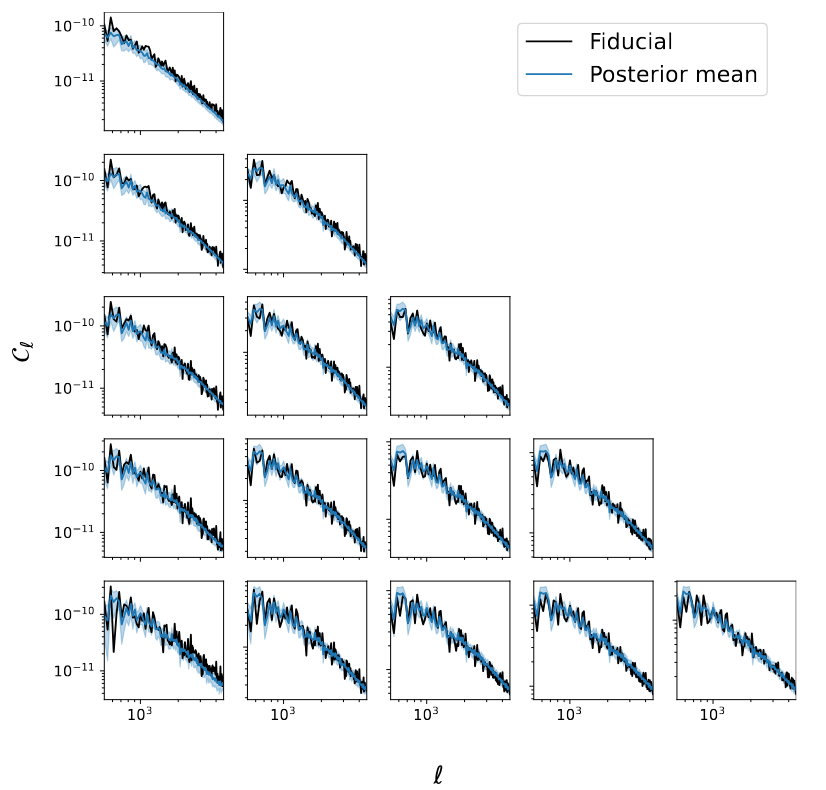

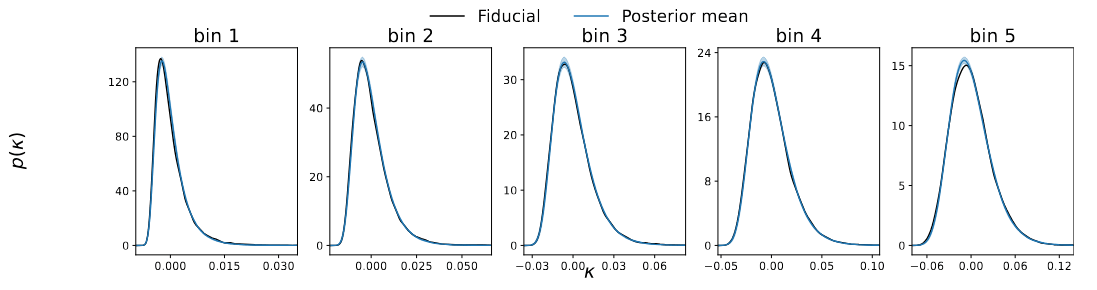

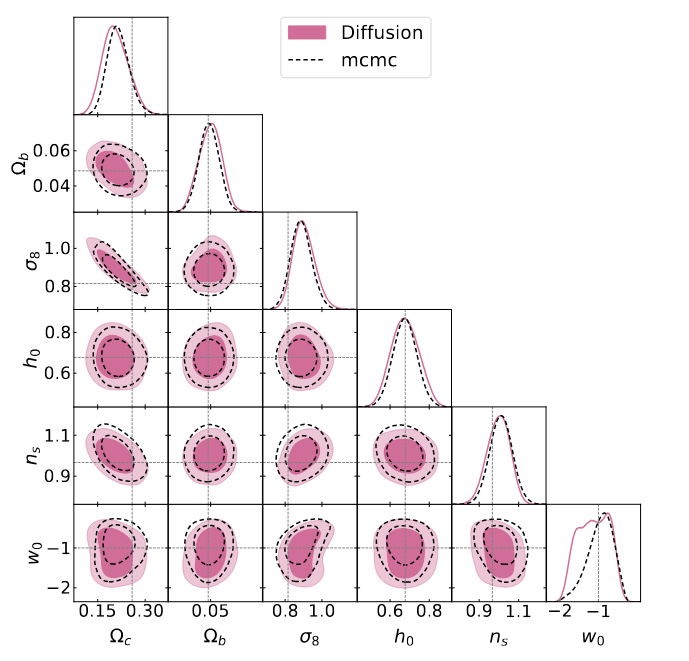

Joint inference of mass-maps and cosmological parameters

with Chihway Chang and Rebecca Willett

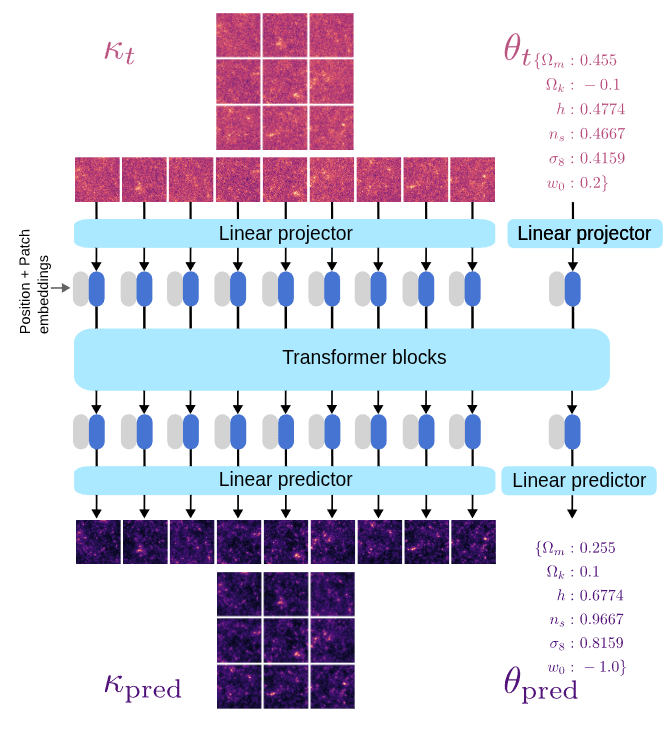

Learning the joint distribution

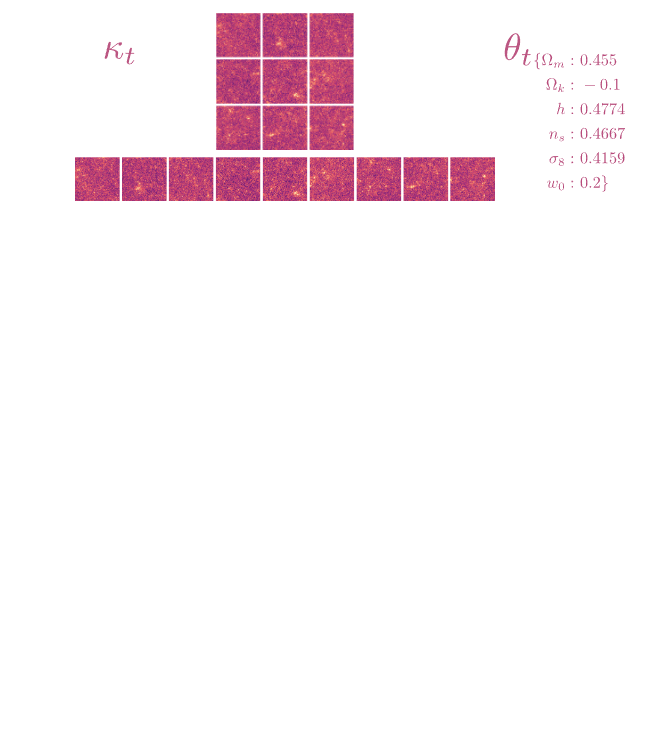

We now have a dataset of pairs $(\kappa, \theta)$: a mass map $\kappa$ and the cosmology $\theta$ it was simulated with

How can we build a diffusion model to learn the joint distribution?

Learning the joint distribution

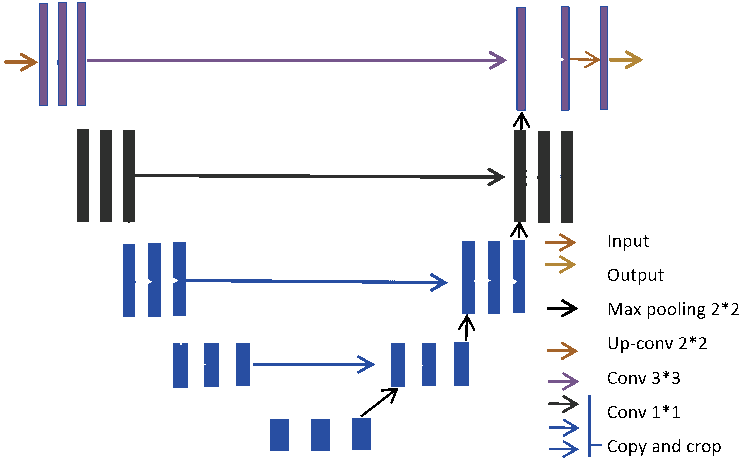

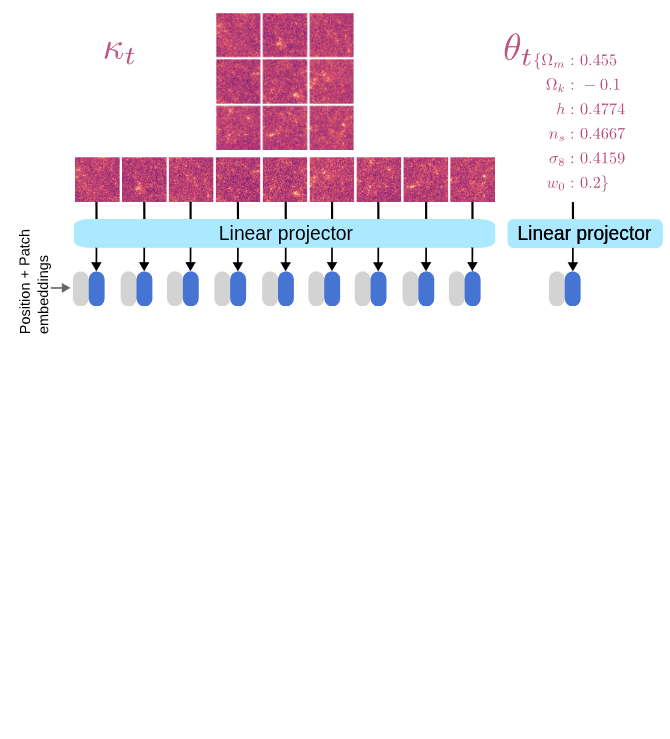

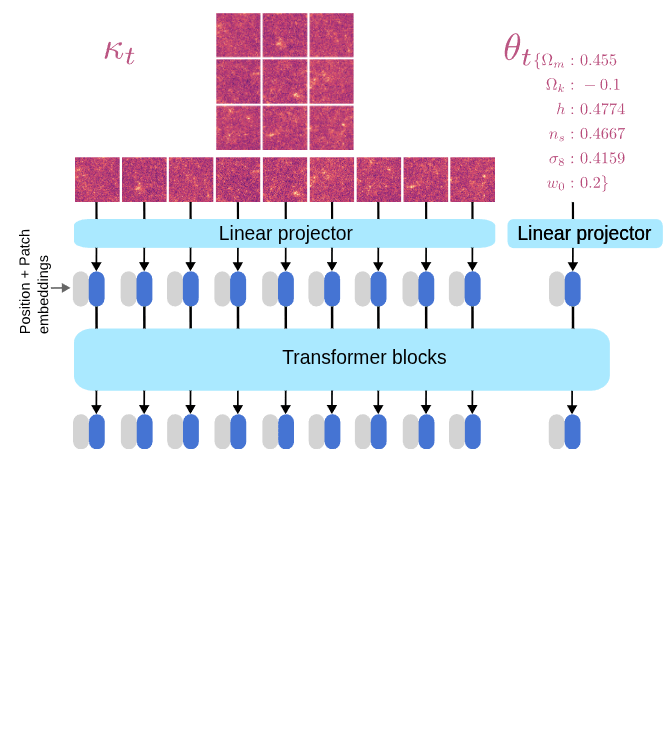

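To model $p(\kappa, \theta)$, we need to design a denoiser architecture that learns the joint score function $\nabla_{\kappa, \theta} \log p_\sigma(\kappa, \theta)$

We want to learn a denoiser acting jointly on the noisy pair $(\kappa, \theta)$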

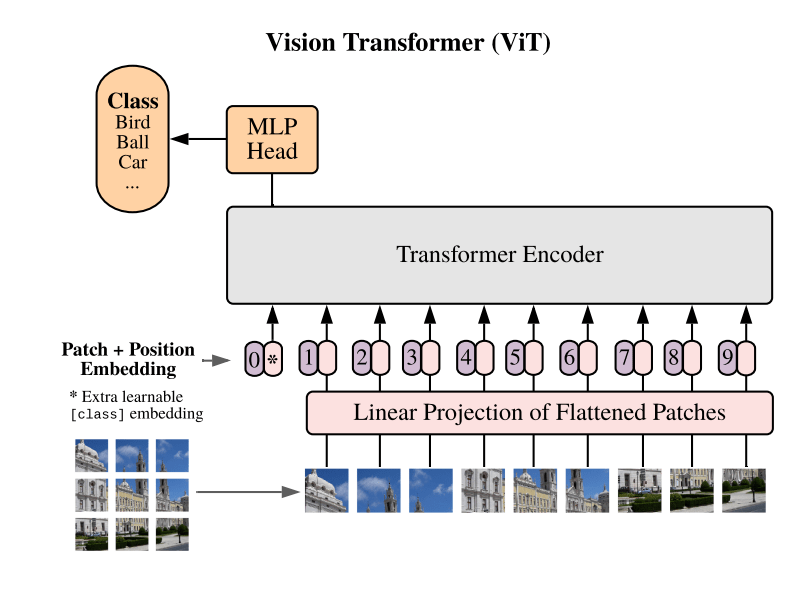

U-Net architecture based on convolutional layers

It only takes images (or volumes) as input and output

How do we add the cosmology?

Use Tweedie's formula to get the joint score from the denoiser

We now have a joint score function!
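To illustrate what a joint score makes possible, the sketch below samples a joint posterior on a two-variable Gaussian toy, where a scalar `x` stands in for the mass map and `theta` for the cosmology. The toy model, the analytic scores, and the use of unadjusted Langevin dynamics are all assumptions of this example, not the architecture or sampler from the talk.

```python
import numpy as np

rng = np.random.default_rng(0)
sigma_n, y = 0.5, 1.8                     # noise std and toy observation y = x + n

# Toy joint prior over z = (x, theta): theta ~ N(0, 1), x | theta ~ N(theta, 1).
joint_cov = np.array([[2.0, 1.0],
                      [1.0, 1.0]])
joint_prec = np.linalg.inv(joint_cov)

def joint_prior_score(z):
    """Gradient of log p(x, theta) for a batch z of shape (n, 2)."""
    return -z @ joint_prec

def likelihood_score(z):
    """Gradient of log p(y | x): the data constrain x only, so the theta part is 0."""
    grad = np.zeros_like(z)
    grad[:, 0] = (y - z[:, 0]) / sigma_n**2
    return grad

# Unadjusted Langevin dynamics on the joint posterior p(x, theta | y).
n_chains, n_steps, step = 4000, 3000, 0.01
z = rng.standard_normal((n_chains, 2))
for _ in range(n_steps):
    score = joint_prior_score(z) + likelihood_score(z)
    z = z + step * score + np.sqrt(2 * step) * rng.standard_normal(z.shape)

theta_samples = z[:, 1]   # marginal posterior of theta: drop the x component
```

Marginalising over the map is free once joint samples are available: keeping only the `theta` column gives samples from the marginal posterior of the cosmology. For this toy the exact answer is a Gaussian with mean `4 * y / 9` and variance `5 / 9`.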

Learning the joint distribution

Marginal

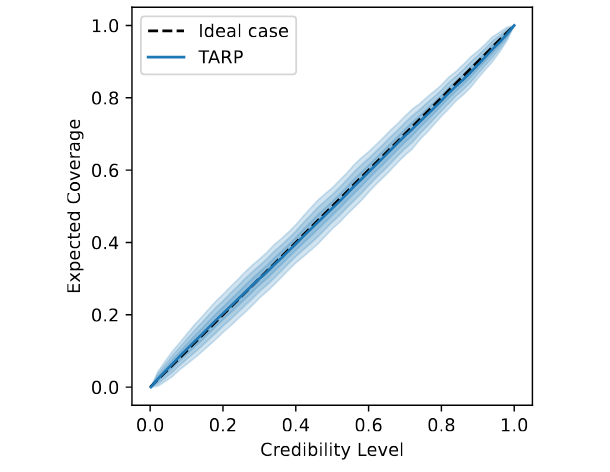

Coverage test
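One common way to run a coverage test is sketched below on a toy model with a known posterior: simulate many (parameter, observation) pairs, build a credible interval from posterior samples for each, and count how often the true parameter falls inside. The toy model and the choice of central intervals are assumptions of this sketch.

```python
import numpy as np

rng = np.random.default_rng(0)
n_sims, n_post = 4000, 500

# Toy model with a known posterior: theta ~ N(0, 1), y = theta + e, e ~ N(0, 1.25).
theta_true = rng.standard_normal(n_sims)
y = theta_true + np.sqrt(1.25) * rng.standard_normal(n_sims)

# Exact posterior theta | y ~ N(y / 2.25, 1.25 / 2.25); in practice these samples
# would come from the generative model conditioned on each simulated observation.
post_mean = y / 2.25
post_std = np.sqrt(1.25 / 2.25)
post_samples = post_mean[:, None] + post_std * rng.standard_normal((n_sims, n_post))

def empirical_coverage(level):
    """Fraction of simulations whose central credible interval contains the truth."""
    lo = np.quantile(post_samples, 0.5 - level / 2, axis=1)
    hi = np.quantile(post_samples, 0.5 + level / 2, axis=1)
    return np.mean((theta_true >= lo) & (theta_true <= hi))

levels = [0.5, 0.68, 0.95]
coverages = [empirical_coverage(level) for level in levels]
```

For a well-calibrated posterior the empirical coverage tracks the nominal level. Coverage below the nominal level points to overconfident posteriors, and coverage above it to underconfident ones.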

We built a generative model of mass-maps and cosmology that we can condition on observations to get the joint posterior distribution

Paper out soon...

Thank you!
