PostgreSQL as a broker for offline task processing

/ DjangoPgPubSub

Text

PG

LISTEN virtual;

NOTIFY virtual;

Asynchronous notification "virtual" received

from server process with PID 8448.

NOTIFY virtual, 'This is the payload';

Asynchronous notification "virtual" with payload

"This is the payload" received from server process with PID 8448.

LISTEN foo;

SELECT pg_notify('fo' || 'o', 'pay' || 'load');

Asynchronous notification "foo" with payload

"payload" received from server process with PID 14728.PUBLISH Hello "Is it me you're looking for"SUBSCRIBE HelloREDIS

Is it me you're looking for@pgpubsub.post_save_listener(MediaTriggerChannel)

def scan_media(old: Media, new: Media):

if not old:

print(f'Perform virus scan on the new media {new}.')

else:

print(f'Media updated; scan {new} all over again.')python manage.py listen@dataclass

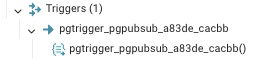

class MediaTriggerChannel(TriggerChannel):

model = Media

lock_notifications = TrueUsage

Signals? Not Quite

CREATE TABLE IF NOT EXISTS public.pgpubsub_notification

(

id integer,

channel character varying(63),

payload jsonb NOT NULL,

created_at timestamp with time zone,

db_version integer,

...

)CREATE OR REPLACE FUNCTION public.pgtrigger_pgpubsub_a83de_cacbb()

...

payload := '{"app": "tests", "model": "Media"}'::jsonb;

payload := jsonb_insert(payload, '{old}', COALESCE(to_jsonb(OLD), 'null'));

payload := jsonb_insert(payload, '{new}', COALESCE(to_jsonb(NEW), 'null'));

SELECT current_setting('pgpubsub.notification_context', True)

INTO notification_context_text;

IF COALESCE(notification_context_text, '') = '' THEN

notification_context_text := '{}';

END IF;

payload := jsonb_insert(payload, '{context}', notification_context_text::jsonb);

INSERT INTO pgpubsub_notification (channel, payload)

VALUES ('pgpubsub_a83de', payload);

perform pg_notify('pgpubsub_a83de', payload::text);

RETURN NEW;

END;

Media Table

Sender

def listen(

channels: Union[List[BaseChannel], List[str]] = None,

recover: bool = False,

autorestart_on_failure: bool = True,

start_method: str = 'spawn',

):

connection_wrapper = listen_to_channels(channels)

logger.info('Listening for notifications... \n')

while POLL:

process_notifications(connection_wrapper)

def listen_to_channels(channels: Union[List[BaseChannel], List[str]] = None):

cursor = connection.cursor()

with transaction.atomic():

for channel in channels:

logger.info(f'Listening on {channel.name()}\n')

cursor.execute(f'LISTEN {channel.listen_safe_name()};')

return ConnectionWrapper(connection.connection)Receiver

You said this replaces Celery?

Media Task

Fixed

What double processing by Multiple Receivers

class LockableNotificationProcessor(NotificationProcessor):

def process(self):

...

notification = (

Notification.objects.select_for_update(

skip_locked=True).filter(

payload_filter,

channel=self.notification.channel,

).first()

)

if notification is None:

logger.info(f'Could not obtain a lock on notification '

f'{self.notification.pid}\n')

else:

logger.info(f'Obtained lock on {notification}')

self.notification = notification

self._execute()

self.notification.delete()skip_locked is your friend

What if the receiver is down?

Re-queue unprocessed notifications from the DB -> The channel

def process_stored_notifications(channels=None):

...

if channels is None:

channels = registry

else:

channels = [locate_channel(channel) for channel in channels]

channels = {

channel: callbacks

for channel, callbacks in registry.items()

if issubclass(channel, tuple(channels))

}

with connection.cursor() as cursor:

lock_channels = [c for c in channels if c.lock_notifications]

for channel_cls in lock_channels:

payload = ''

logger.info(

f'Notifying channel {channel_cls.name()} to recover '

f'previously stored notifications.\n')

cursor.execute(

f"select pg_notify('{channel_cls.listen_safe_name()}', '{payload}');")Pros

- Signals will work with bulk create

- Less machinery / Wont burn a hole in your pocket

Cons

- Will be slower since Redis / RabbitMQ / etc resides in RAM and get their fault tolerance through cluster configs. Should be fine for 99.9% of all cases.

Me

https://au.linkedin.com/pub/iqbal-bhatti/14/63/493

Slides

PostgreSQL as a Broker

By Iqbal Talaat Bhatti

PostgreSQL as a Broker

- 91