From Edge to Gateway

Architecting your OpenTelemetry Collector Deployment

Collector 101

Collection

OpenTelemetry Collector

Receivers

Processors

Exporters

Pipelines

OpenTelemetry Collector

aka otelcol

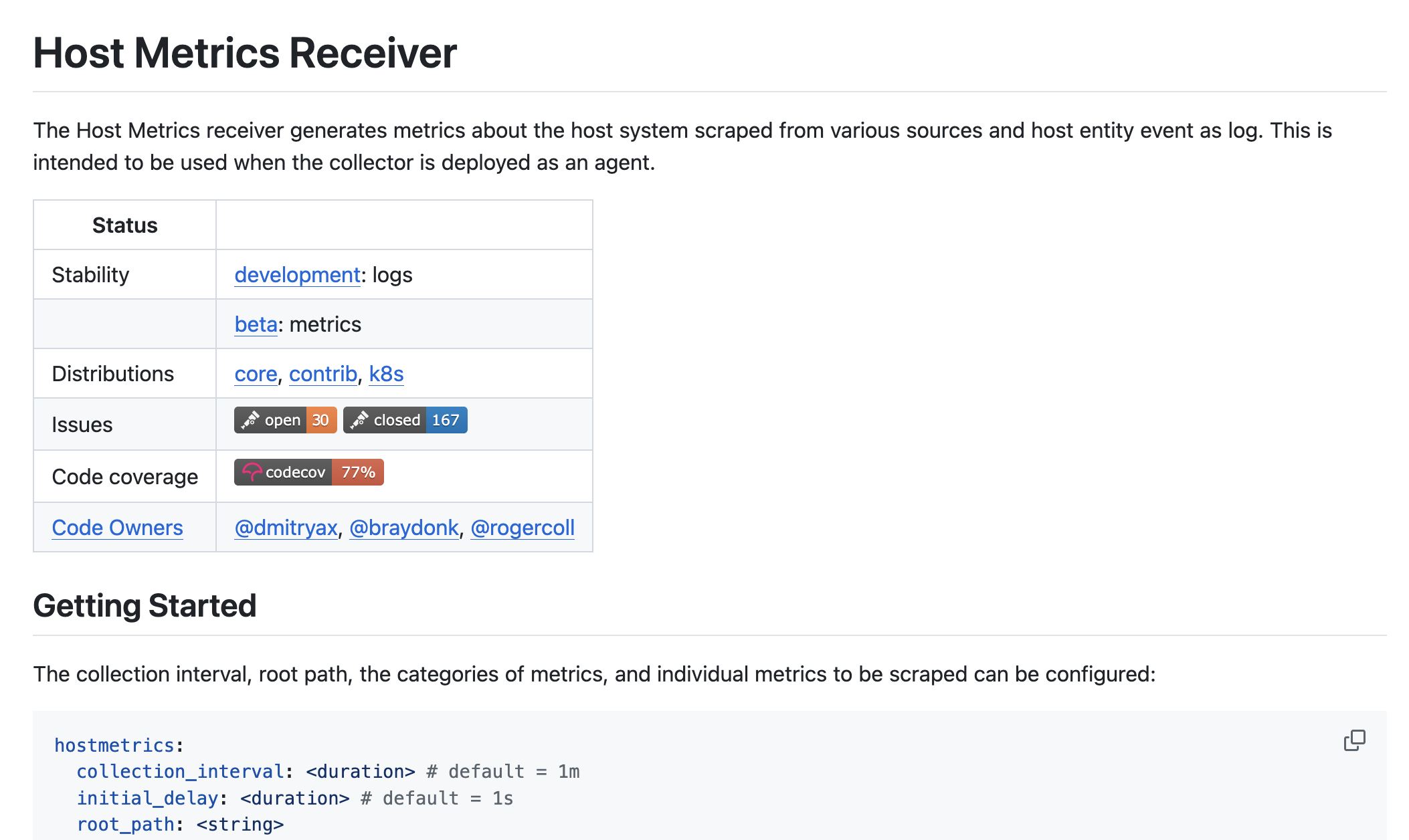

Host Metrics Receiver

Put it all together

    receivers:
      hostmetrics:
        scrapers:
          memory:

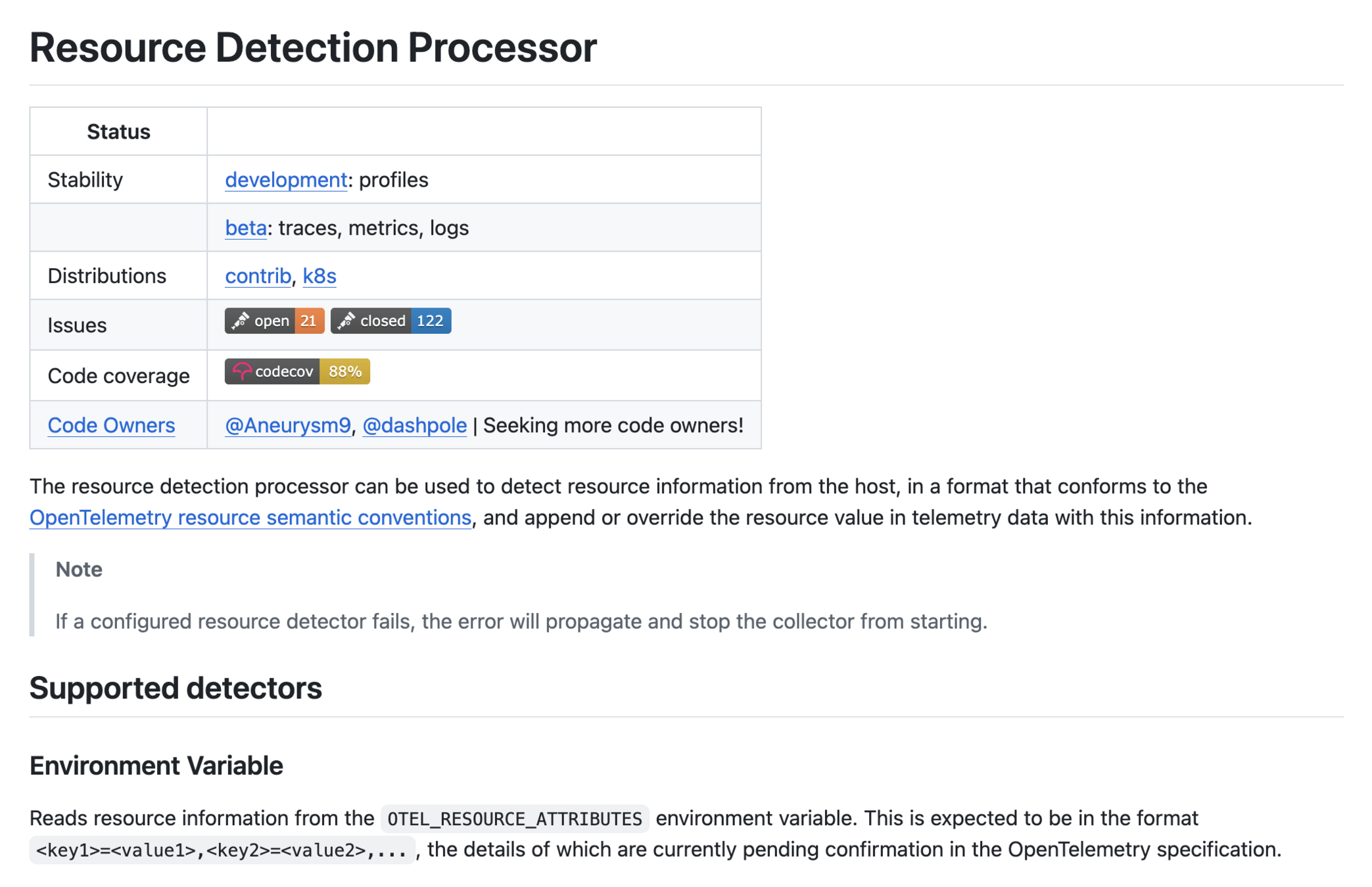

    processors:
      resourcedetection/detect-host-name:
        detectors:
          - system
        system:
          hostname_sources:
            - os

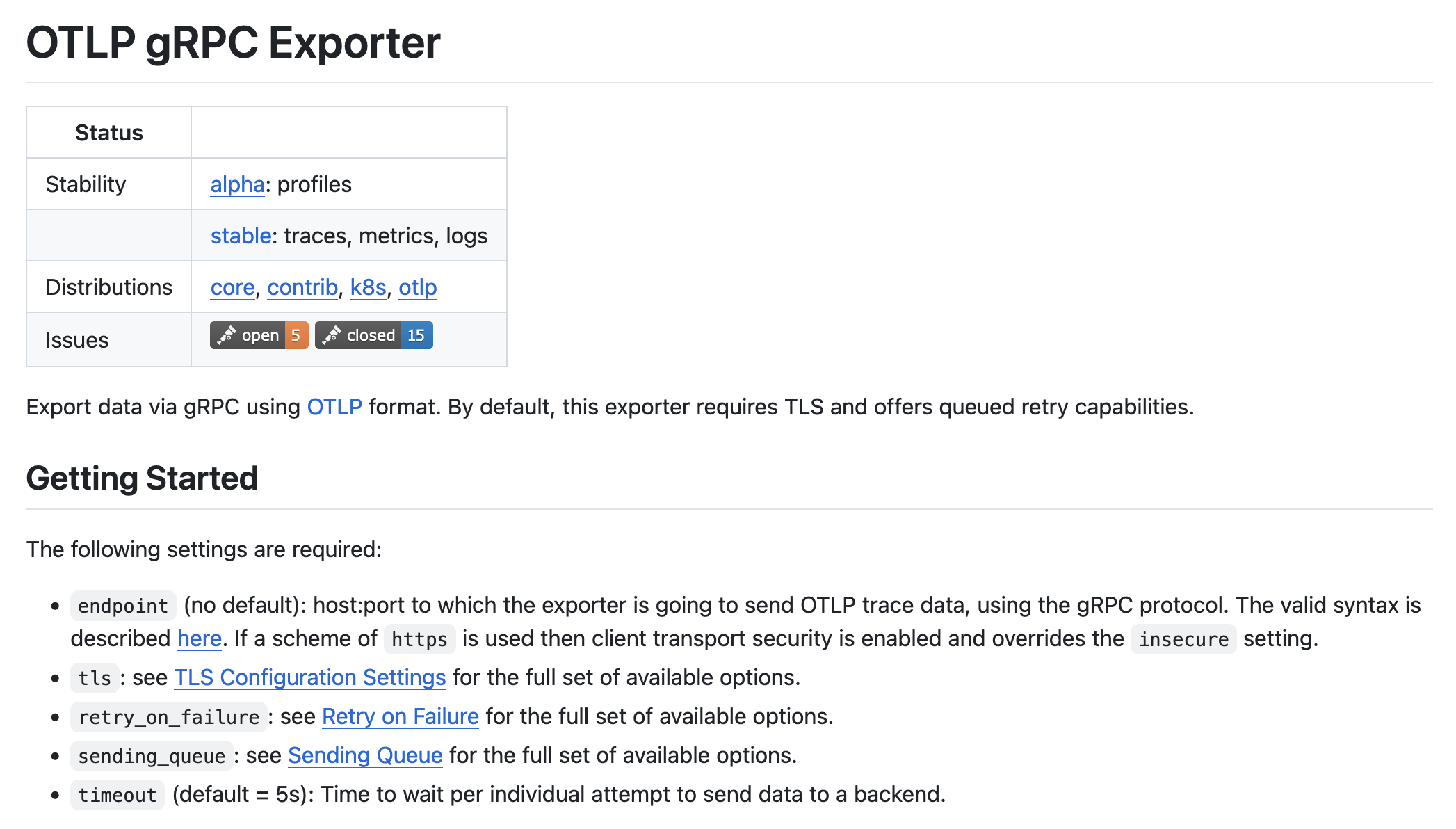

    exporters:
      otlp:
        endpoint: my-otlp-endpoint:4317

    service:
      pipelines:
        metrics:
          receivers:
            - hostmetrics
          processors:
            - resourcedetection/detect-host-name
          exporters:
            - otlp

Data Pipeline

    service:
      pipelines:
        metrics:
          receivers: [hostmetrics]
          processors: [resourcedetection/detect-host-name]
          exporters: [otlp]
        metrics/kafka:
          receivers: [kafka]
          exporters: [otlp/kafka]
        logs:
          receivers: [filelog]
          exporters: [otlp]
        logs/kafka:
          receivers: [kafka]
          exporters: [otlp, otlp/kafka]
        traces:
          receivers: [otlp, kafka]
          processors: [resourcedetection/detect-host-name]
          exporters: [otlp, otlp/kafka]

More Data Pipelines
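The pipelines above reference components by `type` or `type/name`, so `otlp` and `otlp/kafka` are two separately configured instances of the same exporter type. A minimal sketch of how those two exporters might be declared; the second endpoint is a hypothetical placeholder, not taken from the slides:

```yaml
exporters:
  # Default instance, referenced in pipelines as "otlp".
  otlp:
    endpoint: my-otlp-endpoint:4317
  # Second instance of the same exporter type, referenced as "otlp/kafka".
  # The endpoint below is a hypothetical placeholder.
  otlp/kafka:
    endpoint: my-second-otlp-endpoint:4317
```

The same naming rule applies to receivers and processors, which is how `resourcedetection/detect-host-name` gets its descriptive suffix.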

The "What"

OTel Collector Builder

OTel Collector Distros

Third-party Distros

Core distribution

github.com/open-telemetry/opentelemetry-collector - components

Contrib distribution

https://github.com/open-telemetry/opentelemetry-collector-contrib - components

Kubernetes distribution

eBPF Profiling distribution

OTLP distribution

OTel Collector Distros

Should you use contrib?

🤷‍♂️

OTel Collector Builder

OTel Collector Distros

Third-party Distros

OTel Collector based, maintained by third parties.

Not validated by the community.

- ADOT (AWS)

- Alloy (Grafana)

- BDOT (Bindplane)

- DDOT (Datadog)

- DDOT (Dynatrace)

- EDOT (Elastic)

- NRDOT (New Relic)

- RHOSDT (Red Hat)

- SDOT (Splunk)

- Tulip (OllyGarden)

- ...

Third-party Distros

Industry-wide support

Among others:

OTel Collector Builder

Lean binary

- Smaller attack surface

- Smaller footprint

- Custom components

- Self-serve

Use the ocb tool to build your own OTel distro!

OTel Collector Builder

YAML manifest

Go compile

YAML manifest

    dist:
      name: otelcol-dev
      description: Basic OTel Collector distribution for Developers
      output_path: ./otelcol-dev

    exporters:
      - gomod:
          go.opentelemetry.io/collector/exporter/debugexporter v0.150.0
      - gomod:
          go.opentelemetry.io/collector/exporter/otlpexporter v0.150.0

    processors:
      - gomod:
          go.opentelemetry.io/collector/processor/batchprocessor v0.150.0

    receivers:
      - gomod:
          go.opentelemetry.io/collector/receiver/otlpreceiver v0.150.0

    providers:
      - gomod:
          go.opentelemetry.io/collector/confmap/provider/envprovider v1.48.0
      - gomod:
          go.opentelemetry.io/collector/confmap/provider/fileprovider v1.48.0
      - gomod:
          go.opentelemetry.io/collector/confmap/provider/httpprovider v1.48.0
      - gomod:
          go.opentelemetry.io/collector/confmap/provider/httpsprovider v1.48.0
      - gomod:
          go.opentelemetry.io/collector/confmap/provider/yamlprovider v1.48.0

Go compile

OTel Collector Builder

    $ ./ocb --config builder-config.yaml
    2025-06-13T14:25:03.037-0500 INFO internal/command.go:85 OpenTelemetry Collector distribution builder {"version": "0.150.0", "date": "2025-06-03T15:05:37Z"}
    2025-06-13T14:25:03.039-0500 INFO internal/command.go:108 Using config file {"path": "builder-config.yaml"}
    2025-06-13T14:25:03.040-0500 INFO builder/config.go:99 Using go {"go-executable": "/usr/local/go/bin/go"}
    2025-06-13T14:25:03.041-0500 INFO builder/main.go:76 Sources created {"path": "./otelcol-dev"}
    2025-06-13T14:25:03.445-0500 INFO builder/main.go:108 Getting go modules
    2025-06-13T14:25:04.675-0500 INFO builder/main.go:87 Compiling
    2025-06-13T14:25:17.259-0500 INFO builder/main.go:94 Compiled {"binary": "./otelcol-dev/otelcol-dev"}
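A binary built this way only knows the components listed in its manifest, so its configuration has to stay within them. A minimal configuration sketch for the `otelcol-dev` build above, using only the OTLP receiver, batch processor and debug exporter; the listen address and the choice of a traces pipeline are assumptions for illustration:

```yaml
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317  # assumed listen address

processors:
  batch:

exporters:
  debug:
    verbosity: detailed

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [batch]
      exporters: [debug]
```

Saved as, say, `config.yaml`, it could be passed to the new binary with `./otelcol-dev/otelcol-dev --config config.yaml`. Referencing a component that was not compiled in (for example `hostmetrics`) would fail at startup.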

The "Where"

At the Gateway

At the Edge

At the Edge

App to SDK

Sidecar Collector

App to file

Daemonset Collector

Push

Pull

Collection where the workload is

At the Edge

Protect the app

Zero trust and isolation

- Redaction: PII

- Enrichment: K8s Attributes

- Stateless sampling, e.g. errors only
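The three edge responsibilities listed above could be sketched as a processor chain like the following. This is a fragment under assumptions, not the speaker's configuration: it relies on contrib components (`k8sattributes`, `attributes`, `filter`), the attribute name `user.email` is hypothetical, and receiver and exporter definitions are omitted.

```yaml
processors:
  # Enrichment: attach Kubernetes metadata to incoming telemetry.
  k8sattributes:
    extract:
      metadata:
        - k8s.namespace.name
        - k8s.pod.name
        - k8s.deployment.name

  # Redaction: drop an attribute that may carry PII.
  # "user.email" is a hypothetical attribute name.
  attributes/scrub-pii:
    actions:
      - key: user.email
        action: delete

  # Stateless sampling: drop every span whose status is not an error.
  filter/errors-only:
    error_mode: ignore
    traces:
      span:
        - 'status.code != STATUS_CODE_ERROR'

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [k8sattributes, attributes/scrub-pii, filter/errors-only]
      exporters: [otlp]
```

Because the filter decides span by span without any shared state, it can run on every edge Collector independently; the trade-off is that the surviving error spans arrive without the rest of their trace.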

At the Gateway

At the Edge

data flood

At the Gateway

SDK

Collector

Sidecar

Gateway

Protect the data

Secure boundary

- Complex routing

- Stateful sampling, e.g. tail based

- Rate limiting

- Multi-backend fan-out
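As one possible illustration of the gateway duties above, the fragment below combines tail-based sampling with fan-out to two backends. It assumes the contrib `tail_sampling` processor; the policy names, the latency threshold and both backend endpoints are hypothetical.

```yaml
processors:
  tail_sampling:
    # Wait this long for a trace's spans to arrive before deciding.
    decision_wait: 10s
    policies:
      - name: keep-errors
        type: status_code
        status_code:
          status_codes: [ERROR]
      - name: keep-slow
        type: latency
        latency:
          threshold_ms: 2000

exporters:
  # Hypothetical backends; listing both in one pipeline fans data out.
  otlp/backend-a:
    endpoint: backend-a.example.com:4317
  otlp/backend-b:
    endpoint: backend-b.example.com:4317

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [tail_sampling]
      exporters: [otlp/backend-a, otlp/backend-b]
```

Tail sampling is stateful because the decision needs all spans of a trace in one place, which is why it belongs at the gateway rather than at the edge.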

At the Gateway

Gateway security

The secure boundary

- Centralized API keys management

- Data masking and PII scrubbing

- Traffic validation
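Centralized key management can be sketched as follows: only the gateway's exporter carries the vendor credential, read from the gateway's own environment. The header name, environment variable and endpoint are hypothetical placeholders.

```yaml
exporters:
  otlp/vendor:
    endpoint: otlp.vendor.example.com:4317  # hypothetical backend
    headers:
      # Hypothetical header name; the value is resolved from the
      # gateway's environment when the configuration is loaded.
      api-key: ${env:VENDOR_API_KEY}
```

Edge Collectors then export to the gateway without ever holding this key, which matches the "no vendor secrets at the edge" goal.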

Gateway resilience

Protect the data

- Persistent queue

- Retry and backoff strategy

- Stateful load balancing
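A minimal sketch of the first two resilience items, assuming the contrib `file_storage` extension is part of the gateway build. The directory, queue size and retry intervals are illustrative values, not recommendations from the talk.

```yaml
extensions:
  # Backs the sending queue with disk so queued data survives restarts.
  file_storage:
    directory: /var/lib/otelcol/queue  # assumed path

exporters:
  otlp:
    endpoint: my-otlp-endpoint:4317
    retry_on_failure:
      enabled: true
      initial_interval: 5s
      max_interval: 30s
      max_elapsed_time: 300s
    sending_queue:
      enabled: true
      queue_size: 5000
      storage: file_storage

service:
  extensions: [file_storage]
```

Stateful load balancing is usually handled one tier earlier, for example with the contrib `loadbalancing` exporter routing by trace ID so that all spans of a trace reach the same gateway instance.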

Edge security

Zero trust and isolation

- Network isolation

- Least privilege execution

- No vendor secrets at the edge

Edge resilience

Protect the app

- Prevent OOM kills

- Fail fast (drop over block)

- Strict resource limits
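At the edge the priorities invert: the Collector should shed load rather than endanger the workload. A hedged sketch with a memory limiter, a small in-memory queue and a short retry window; all numbers and the gateway endpoint are assumptions.

```yaml
processors:
  # Refuses new data once memory use approaches the limit,
  # instead of growing until the container is OOM-killed.
  memory_limiter:
    check_interval: 1s
    limit_mib: 200
    spike_limit_mib: 50

exporters:
  otlp:
    endpoint: my-gateway:4317  # hypothetical gateway address
    sending_queue:
      queue_size: 500          # small queue: drop rather than pile up
    retry_on_failure:
      max_elapsed_time: 30s    # give up quickly

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [memory_limiter]
      exporters: [otlp]
```

The `limit_mib` value would normally be set below the container or systemd memory limit, so that the limiter reacts before the operating system does.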

The "How"

Day 2 operations

      # manual drift…
      apiVersion: v1
      kind: ConfigMap
      metadata:
        name: otel-gateway
      data:
        config.yaml: |
          processors:
            tail_sampling:
              policies:
    -           - name: keep-errors
    +           - name: keep-errors-and-checkout
                  type: string_attribute
                  ...

    $ ssh ops@edge-482.mgmt
    Connection timed out
    $ ssh ops@edge-483.mgmt
    Connection timed out
    $ for h in $(cat hosts.txt); do
        ssh $h 'sudo systemctl restart otelcol'
      done
    ssh: connect to host edge-511 port 22: Operation timed out
    # still 600 nodes to go…

Manual ConfigMap surgery, hop-by-hop SSH, configuration drift, partial rollouts... What can go wrong?

Fleet management evolution

Static config

GitOps / Helm

Operators

OpAMP

?

Fleet management evolution

Static config

GitOps / Helm

Operators

OpAMP

✓

Why OpAMP?

- The world is not 100% K8s

- Multi-cluster sprawl

- Actual vs desired state monitoring

- Can manage SDKs too!

Meet OpAMP (beta)

Components

- Specification (beta)

- Go reference impl (beta)

- OpAMP Extension (beta)

- OpAMP Supervisor (beta)

- OpAMP Gateway (alpha)

OpAMP in action

OpAMP backend

Telemetry backend

OpenTelemetry Collector

OpAMP Supervisor

OTLP data

OpAMP monitoring

Config

Config
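The flow in the diagram could be wired up with a Supervisor configuration along these lines. This is a sketch under assumptions: the Supervisor is still beta, so key names may change between releases, and the server URL and file paths are hypothetical.

```yaml
# OpAMP Supervisor configuration (not the Collector's own config).
server:
  # Hypothetical OpAMP backend endpoint.
  endpoint: wss://opamp.example.com/v1/opamp

capabilities:
  accepts_remote_config: true      # backend may push Collector config
  reports_effective_config: true   # report what is actually running
  reports_health: true

agent:
  # Collector binary the Supervisor starts and manages (assumed path).
  executable: /usr/local/bin/otelcol

storage:
  # Where the Supervisor keeps its state and received config (assumed path).
  directory: /var/lib/otelcol/supervisor
```

The Supervisor receives configuration from the OpAMP backend, writes it out for the Collector it manages, and reports health and effective configuration back, while OTLP data continues to flow directly to the telemetry backend.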

Recap

Control-plane early

Start with the Operator if you can; design headroom for OpAMP when the fleet spans the platform boundary.
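For the Operator route, a gateway is declared as a custom resource instead of a hand-edited ConfigMap. A minimal sketch assuming the OpenTelemetry Operator and its `v1beta1` API are installed in the cluster; the resource name and endpoints reuse placeholders from earlier slides.

```yaml
apiVersion: opentelemetry.io/v1beta1
kind: OpenTelemetryCollector
metadata:
  name: otel-gateway
spec:
  mode: deployment   # daemonset or sidecar would suit the edge
  config:
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: 0.0.0.0:4317
    exporters:
      otlp:
        endpoint: my-otlp-endpoint:4317
    service:
      pipelines:
        traces:
          receivers: [otlp]
          exporters: [otlp]
```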

Topology

Adopt the edge-to-gateway pattern as the foundation for scale and clear trust boundaries.

Binary on purpose

Prefer OCB or a curated distribution (upstream or vendor) over shipping full contrib to every production node.

Some more #OpenTelemetry

Community

Thank you!

Marcin "Perk" Stożek

@marcinstozek / perk.pl

From Edge to Gateway - architecting your OpenTelemetry Collector deployment

By Marcin Stożek
