Learning mid-level representations for computer vision

Stavros Tsogkas

People don't just "see"

other cars

how far?

Don't run them over!!

which traffic light?

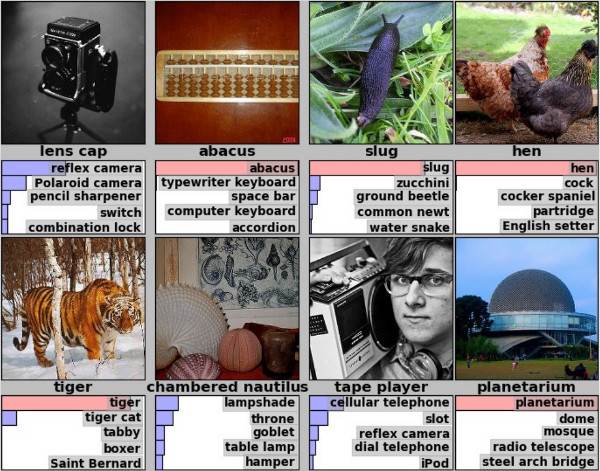

Image classification paradigm

Model

"car"

Input

Discriminative representation

Machine learning algorithm

The deep learning revolution

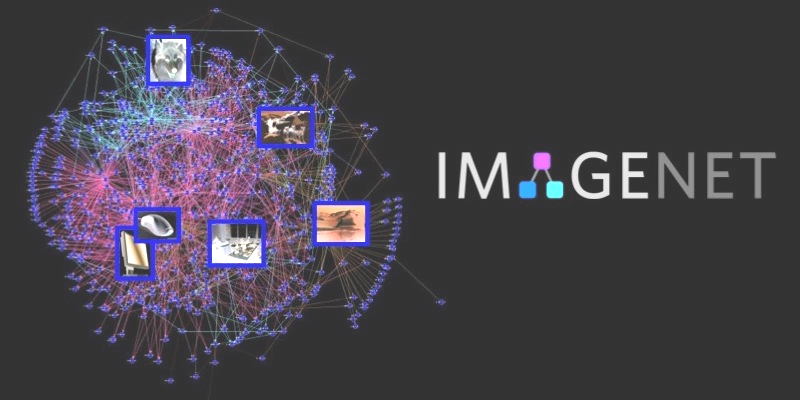

ImageNet top-5 error rate (%)

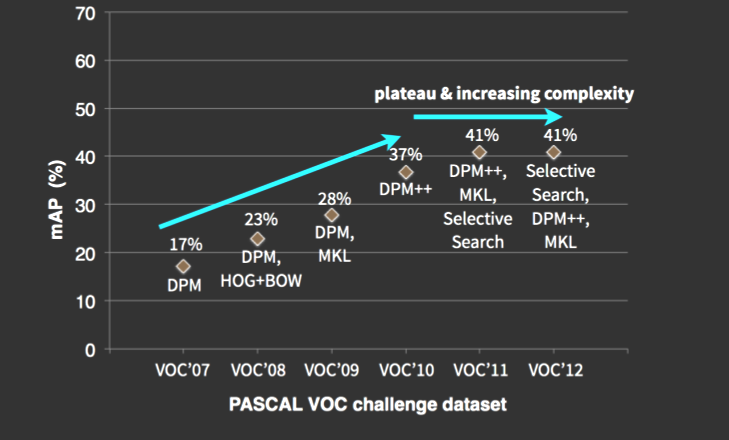

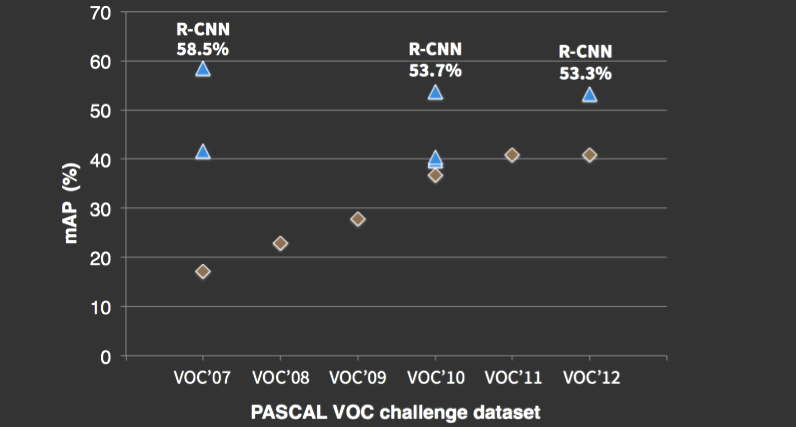

Object detection 2010-2012: performance plateau

Performance: %mAP (mean average precision)

DPM variants

Object detection 2013

Girshick et al., Rich feature hierarchies for accurate object detection and semantic segmentation, CVPR 2014

DPM variants

Deep Learning

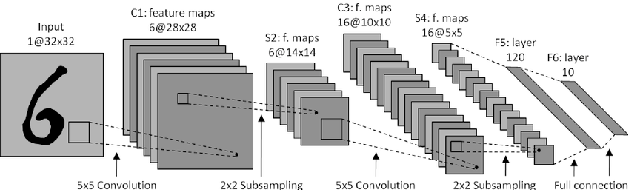

Brief history of learning in vision

Digit recognition (CNNs, 1989)

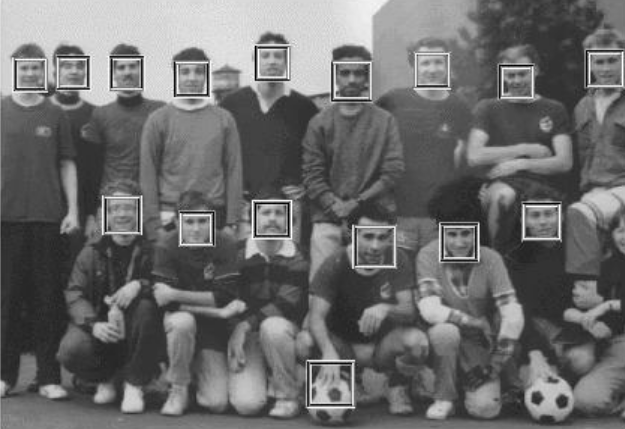

Face detection (Haar features + AdaBoost, 2001)

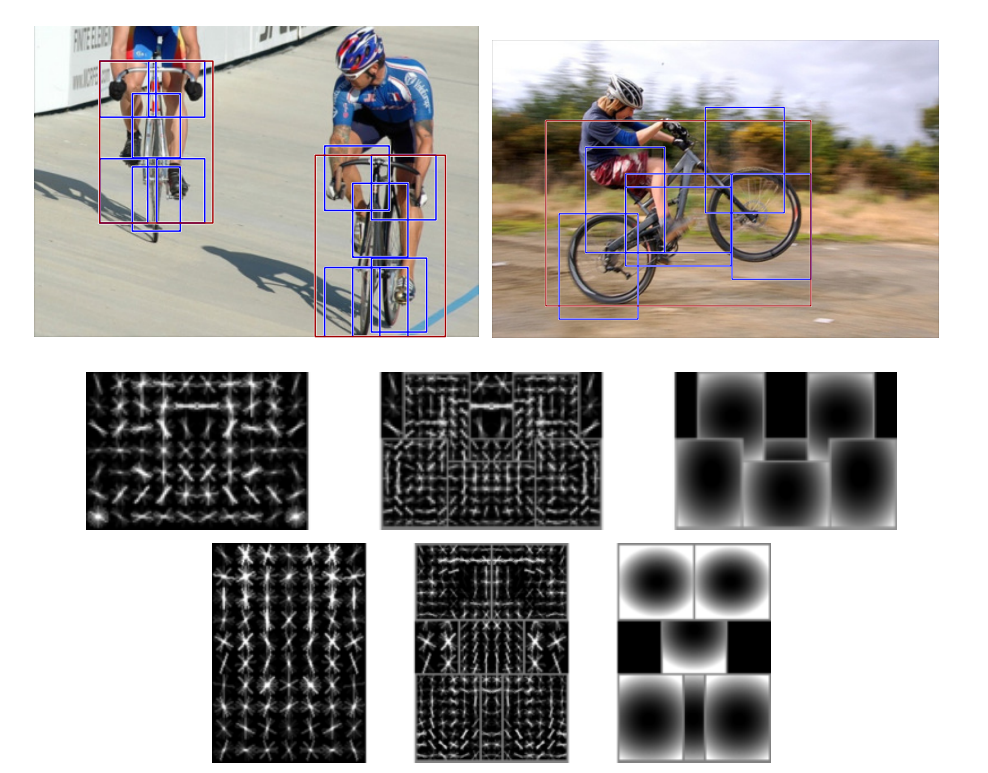

Object detection (HOGs + SVMs, 2005-2010)

Image classification (Deep CNNs, 2012)

DL success stories

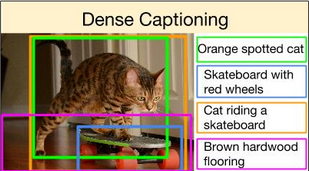

Image captioning

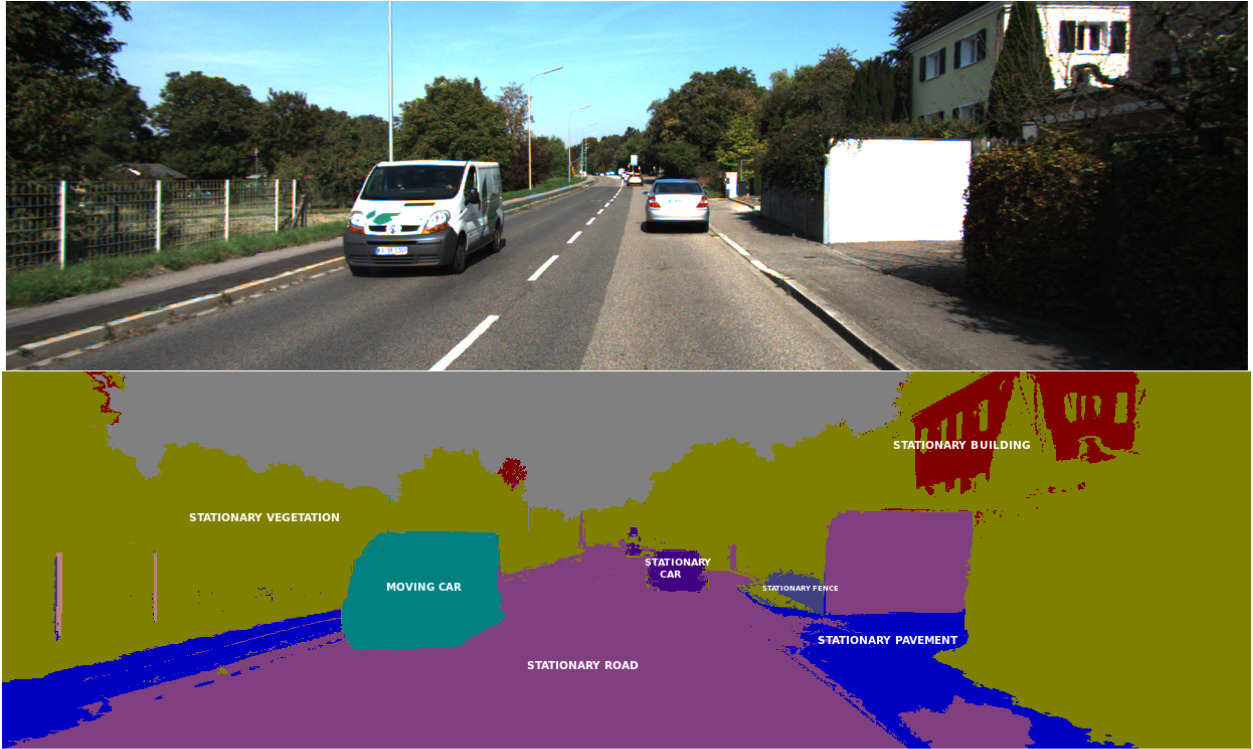

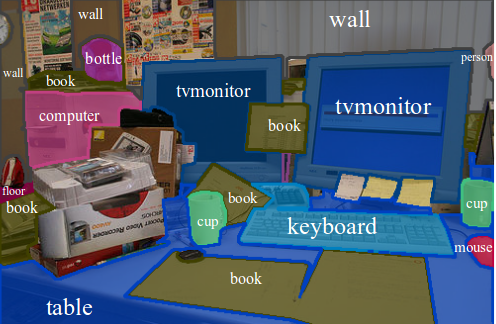

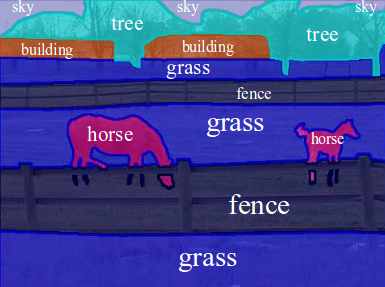

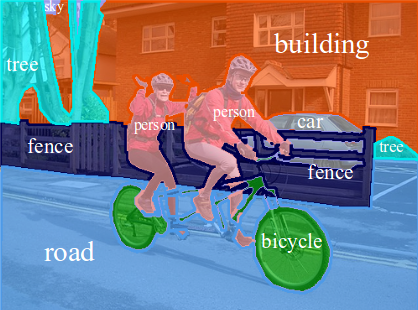

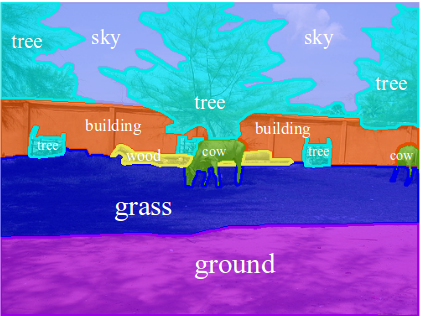

Semantic segmentation

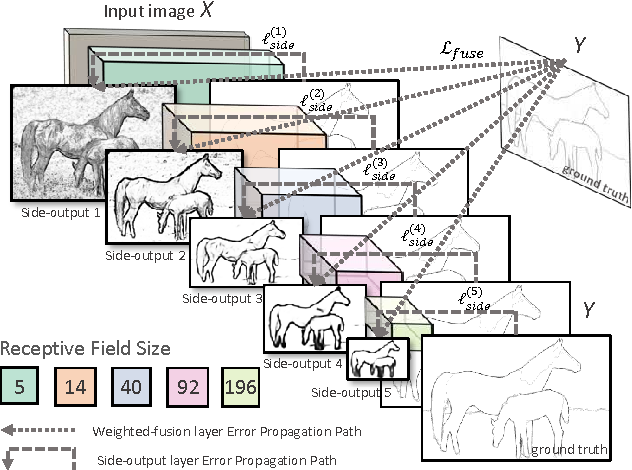

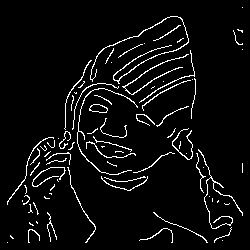

Edge detection

Object detection

Why now?

More data

Better hardware

Support from industry

Better tools

Practical limitations

3-5 minutes per image annotation + $$$

days/weeks to train

low-powered hardware

not enough data

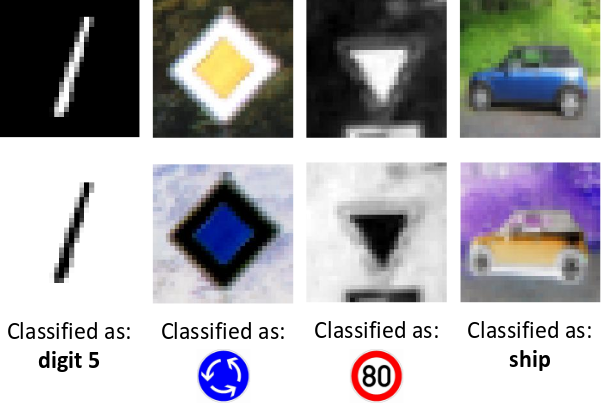

CNNs can be easily fooled...

"panda" (57.7% confidence) + noise → "gibbon" (99.3% confidence)

...and do not generalize well

Correctly classified

Incorrectly classified

Original images

Negative images

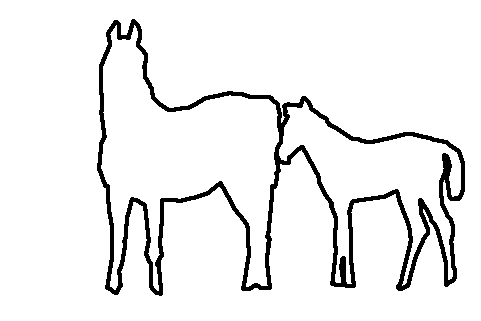

CNNs favour appearance over shape

Zebra or an elephant with stripes?

People learn general rules instead

What makes a table a table?

flat surface

vertical support

Constellation table by Fulo

What are mid-level representations?

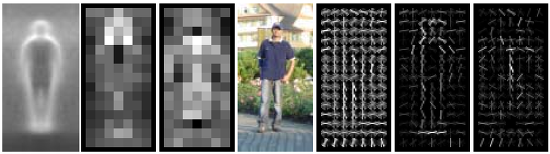

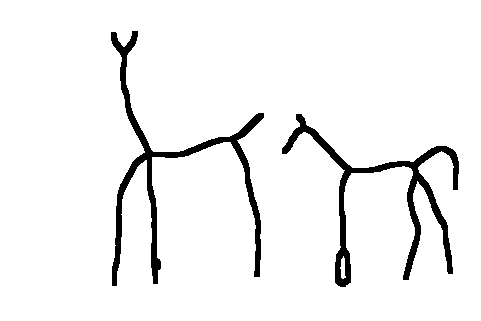

Low-level: Edges

- class agnostic

- not discriminative

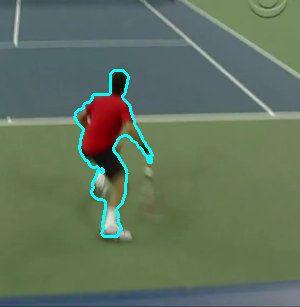

High-level: person segmentation

- class specific

- not generalizable

Mid-level:

- discriminative and robust

- shareable across object categories

- simpler to model, scalable

Mid-level representations include

textures

object parts

symmetries

A "vocabulary" for images

"wheel"

"sand"

- easier to model

- shareable

"rotational symmetry"

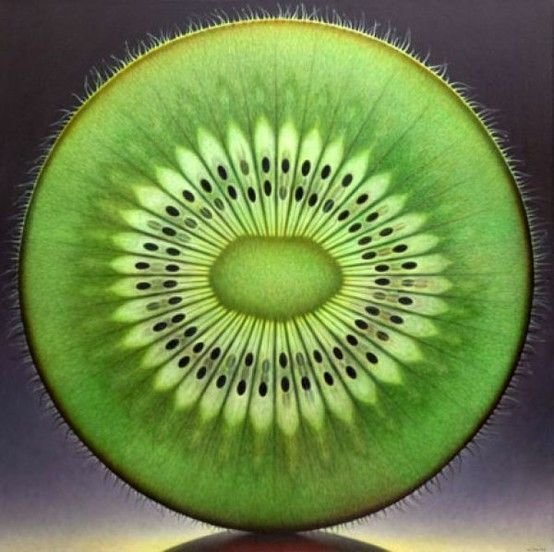

Unsupervised feature learning

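X = (patch, patch), Y = 3: which of the 8 neighbouring positions does the second patch occupy relative to the first?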

Context prediction

Doersch et al., 2015
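The context-prediction pretext task gets its labels for free: sample a centre patch and one of its 8 neighbours, and use the neighbour's position as the class. A minimal NumPy sketch; the patch size, the gap between patches and the 1-8 numbering (reading order) are our own assumptions, not necessarily those of Doersch et al.

```python
import numpy as np

# Neighbour offsets (rows, cols) in reading order; label k refers to OFFSETS[k - 1].
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, -1), (0, 1), (1, -1), (1, 0), (1, 1)]

def sample_pair(img, rng, patch=8, gap=2):
    """Return ((centre patch, neighbour patch), label) with label in 1..8.

    The gap between patches (an assumed value) discourages trivial shortcuts
    such as matching pixels across a shared border.
    """
    step = patch + gap
    H, W = img.shape[:2]
    # top-left corner of the centre patch, chosen so that every neighbour fits
    y = int(rng.integers(step, H - step - patch + 1))
    x = int(rng.integers(step, W - step - patch + 1))
    label = int(rng.integers(1, 9))
    dy, dx = OFFSETS[label - 1]
    centre = img[y:y + patch, x:x + patch]
    ny, nx = y + dy * step, x + dx * step
    neighbour = img[ny:ny + patch, nx:nx + patch]
    return (centre, neighbour), label

rng = np.random.default_rng(0)
img = np.arange(40 * 40).reshape(40, 40)
(centre, neighbour), label = sample_pair(img, rng)
```

A network fed `(centre, neighbour)` and trained to predict `label` never needs a human annotation.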

Jigsaw puzzles

Noroozi and Favaro, 2016


Results comparable to supervised methods!
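The jigsaw pretext task works the same way: cut a 3x3 grid of tiles, shuffle them with one of a fixed set of permutations, and ask the network which permutation was used. The three-permutation set below is a toy assumption; Noroozi and Favaro use a larger, fixed subset of the 9! possible orderings.

```python
import numpy as np

# Toy permutation set (hypothetical); the class label is the index into this list.
PERMS = [
    (0, 1, 2, 3, 4, 5, 6, 7, 8),
    (8, 7, 6, 5, 4, 3, 2, 1, 0),
    (1, 0, 3, 2, 5, 4, 7, 6, 8),
]

def make_puzzle(img, label, tile=4):
    """Cut img into 3x3 tiles (row-major order) and reorder them with PERMS[label]."""
    tiles = [img[r * tile:(r + 1) * tile, c * tile:(c + 1) * tile]
             for r in range(3) for c in range(3)]
    return [tiles[i] for i in PERMS[label]]

def unshuffle(shuffled, label):
    """Invert the permutation: position j holds original tile PERMS[label][j]."""
    perm = PERMS[label]
    return [shuffled[perm.index(k)] for k in range(9)]

img = np.arange(12 * 12).reshape(12, 12)
puzzle = make_puzzle(img, label=1)   # network input; the training target is 1
```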

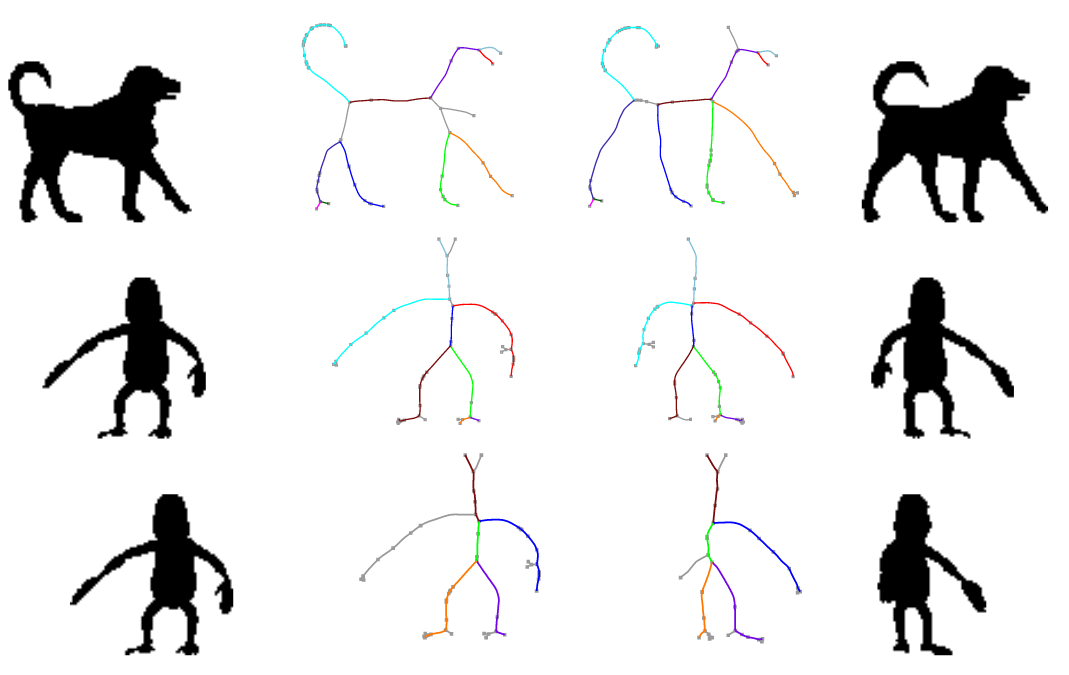

Outline

1. Medial axes

[ECCV 2012, ICCV 2017]

2. Object parts

3. Future research

Learning mid-level representations for shape and texture.

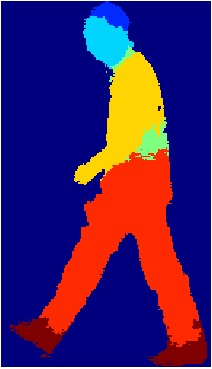

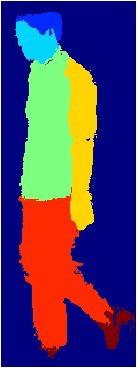

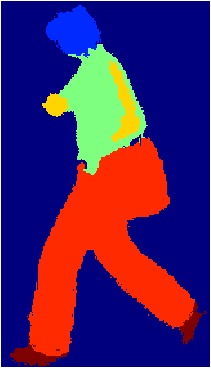

head

torso

arms

legs

hands

[arXiv 2016, ISBI 2016, MICCAI 2016]

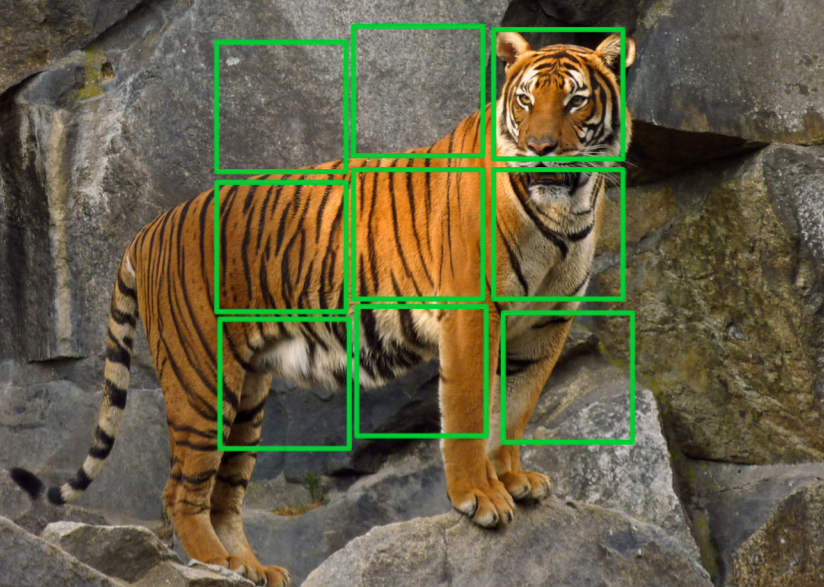

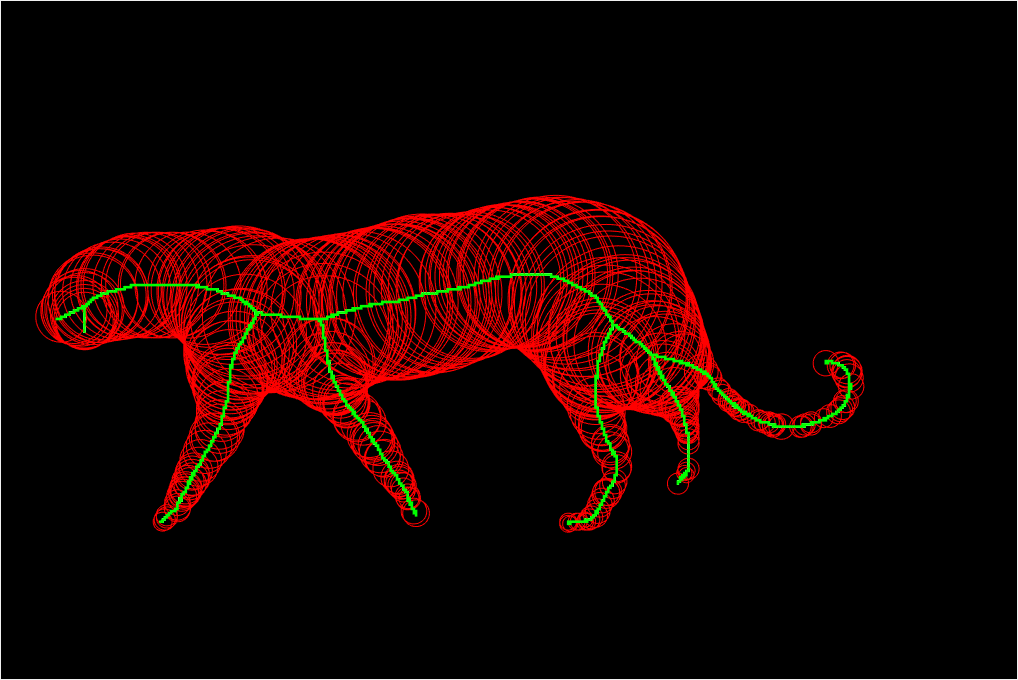

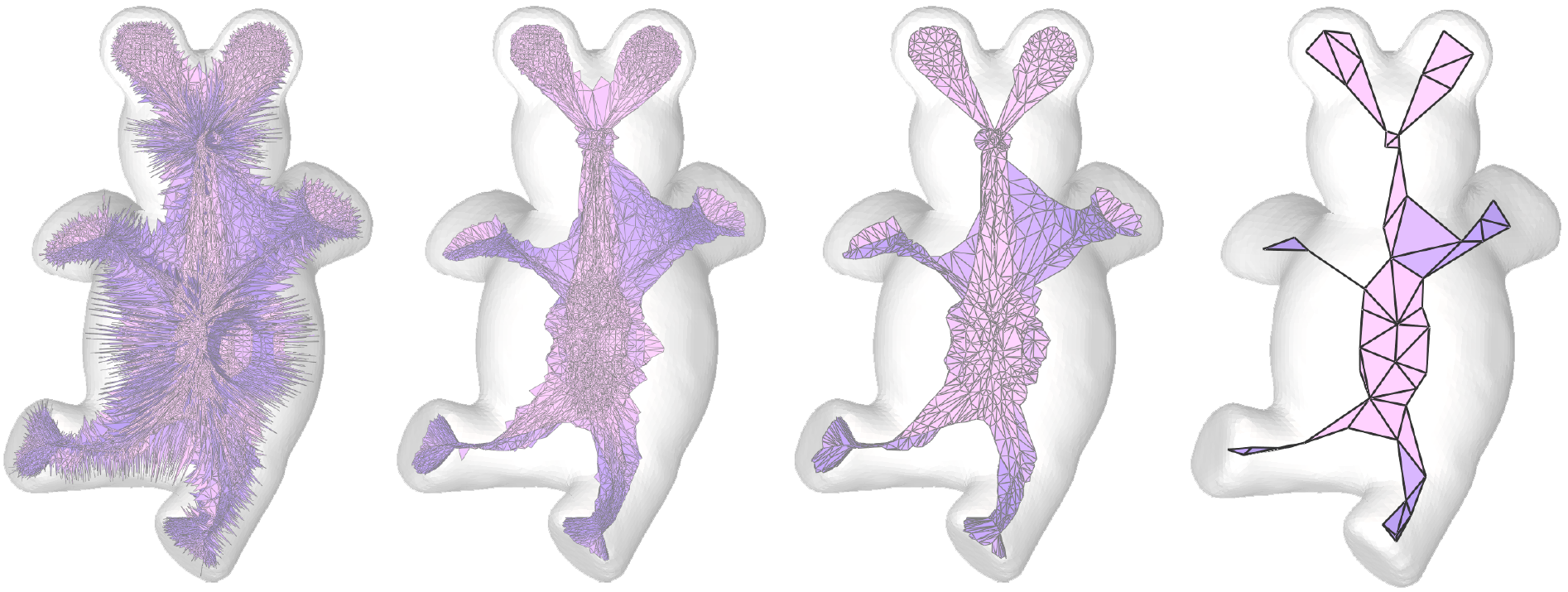

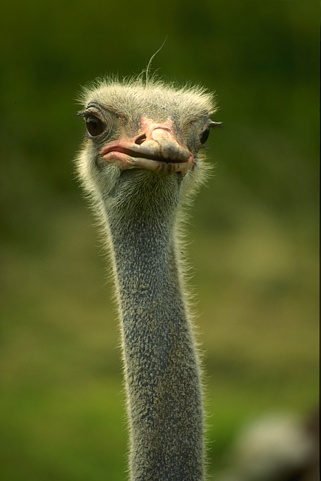

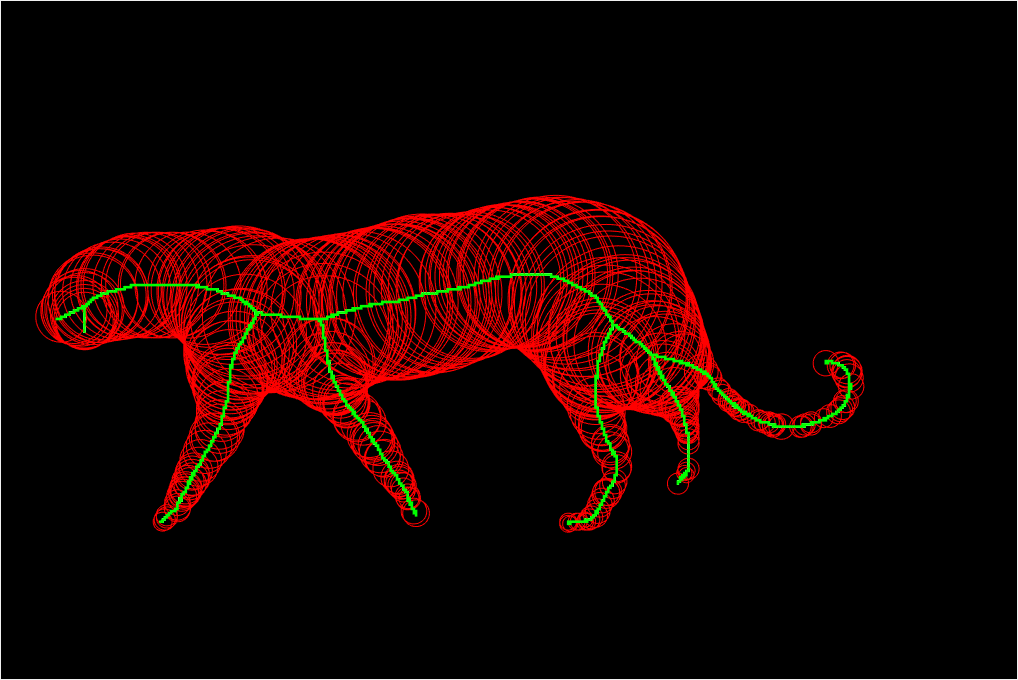

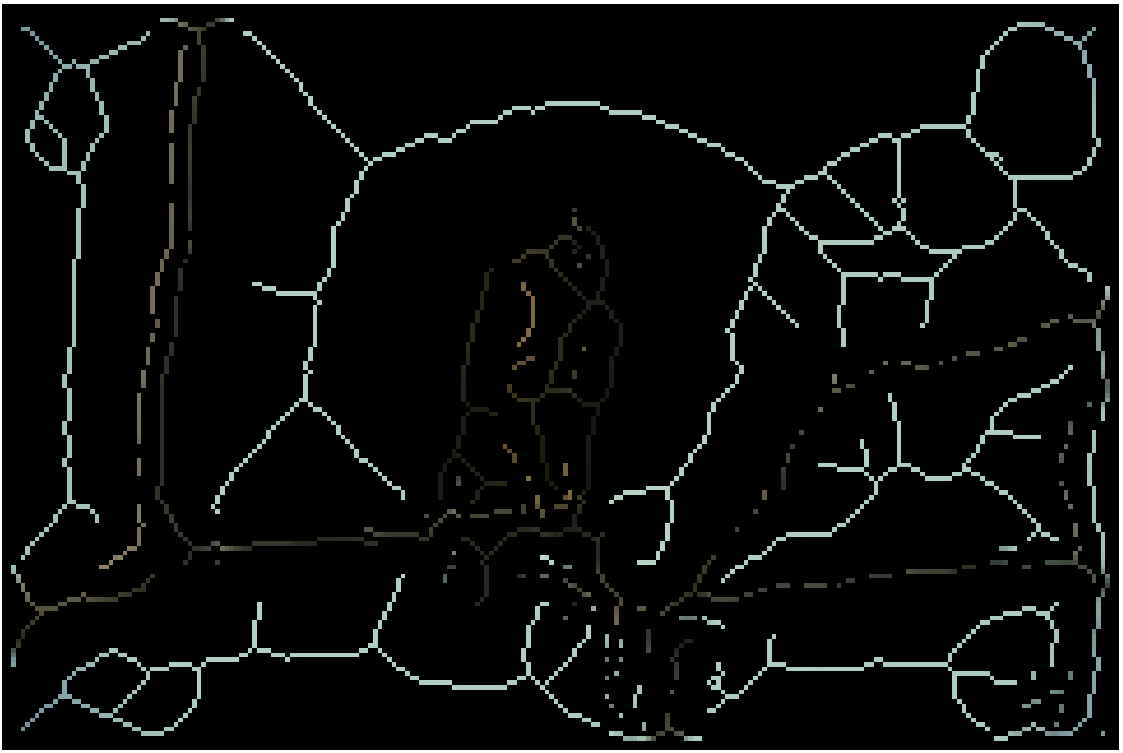

Medial axis detection

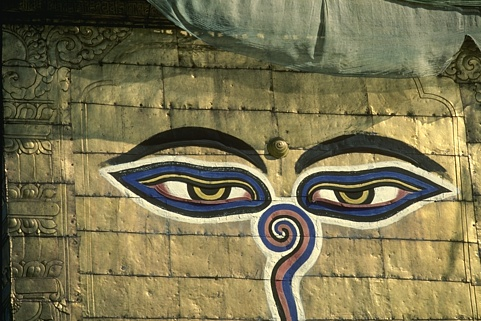

Symmetry is everywhere

Global symmetry is unstable

But local symmetry is more robust

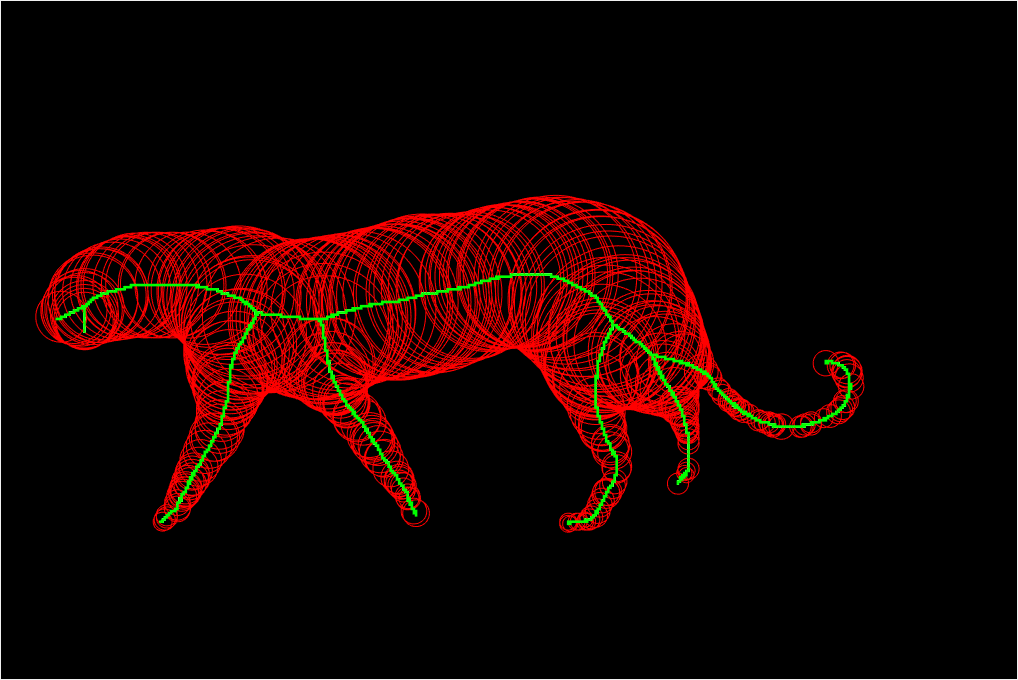

Medial Axis Transform (MAT)

A transformation for extracting new descriptors of shape, H. Blum, Models for the perception of speech and visual form, 1967


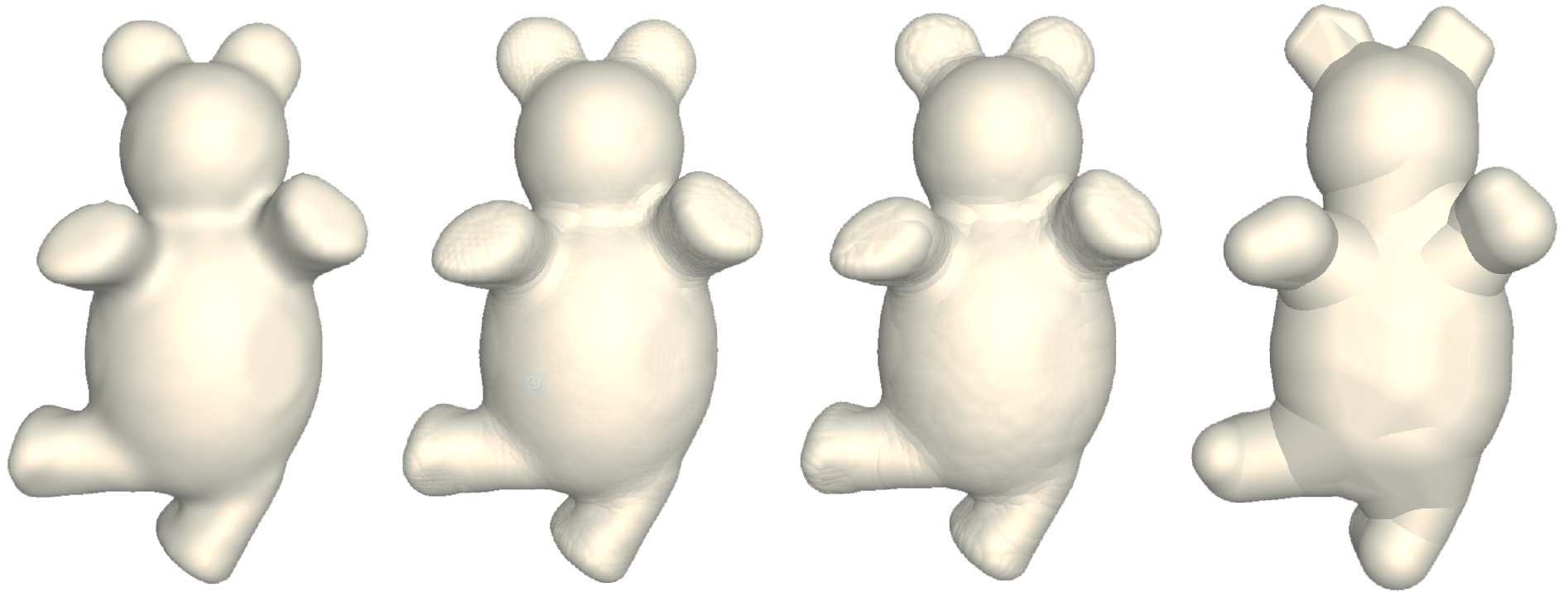
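For a binary shape, the MAT keeps the centres and radii of the maximal inscribed disks, and the shape is recovered exactly as the union of those disks. A brute-force NumPy sketch for small masks (quadratic time, for illustration only); on a pixel grid the resulting axis is somewhat thicker than the continuous one.

```python
import numpy as np

def medial_axis_transform(mask):
    """Return (y, x, r) for the centres and radii of the maximal inscribed disks.

    r is the Euclidean distance from (y, x) to the nearest background pixel, so
    the open disk |z - (y, x)| < r contains foreground pixels only.
    """
    mask = np.pad(np.asarray(mask, dtype=bool), 1)   # ensure a background border
    fg, bg = np.argwhere(mask), np.argwhere(~mask)
    # brute-force Euclidean distance transform (fine for toy masks)
    r = np.array([np.sqrt(((bg - p) ** 2).sum(axis=1).min()) for p in fg])
    medial = []
    for i, p in enumerate(fg):
        gap = np.sqrt(((fg - p) ** 2).sum(axis=1))
        # disk i lies inside disk j if |p_i - p_j| + r_i <= r_j (j != i)
        inside_other = (gap > 0) & (gap + r[i] <= r + 1e-9)
        if not inside_other.any():
            medial.append((int(p[0]) - 1, int(p[1]) - 1, float(r[i])))  # undo padding
    return medial

def reconstruct(medial, shape):
    """Invert the MAT: the shape is the union of its maximal disks."""
    yy, xx = np.mgrid[:shape[0], :shape[1]]
    out = np.zeros(shape, dtype=bool)
    for y, x, r in medial:
        out |= (yy - y) ** 2 + (xx - x) ** 2 < r ** 2 - 1e-9
    return out

mask = np.zeros((9, 13), dtype=bool)
mask[2:7, 2:11] = True                  # a 5x9 rectangle
mat = medial_axis_transform(mask)
```

`reconstruct(mat, mask.shape)` returns the original mask, using fewer disks than there are foreground pixels.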

MAT applications

Shape matching and recognition

Shape simplification

Shape deformation with volume preservation

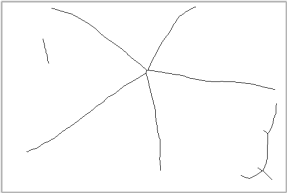

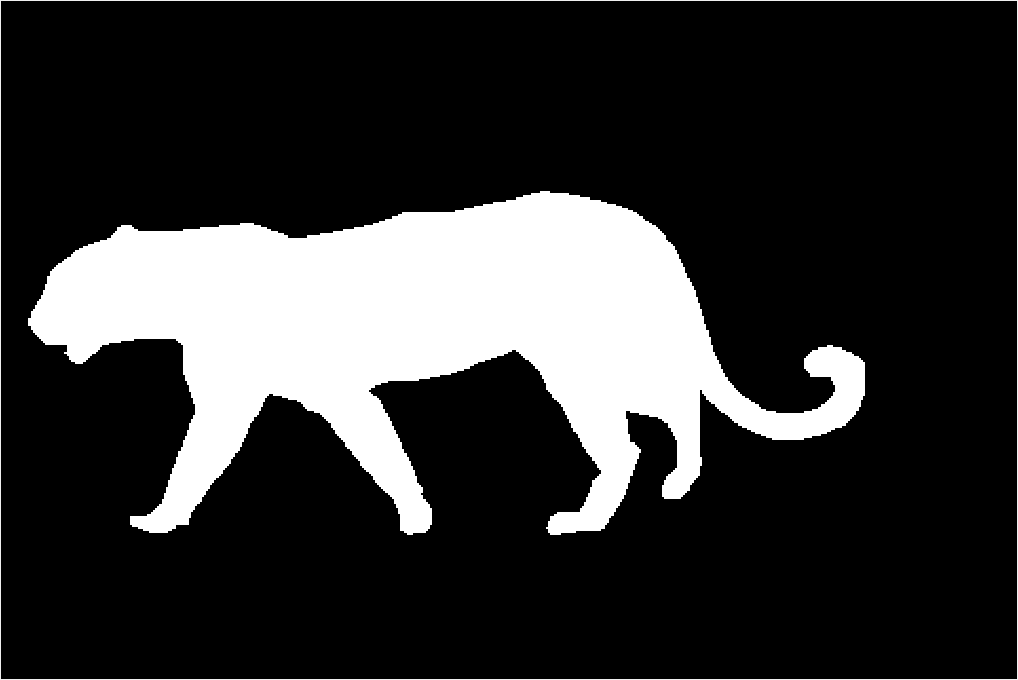

MAT for natural images is not obvious

So let's learn it from data!

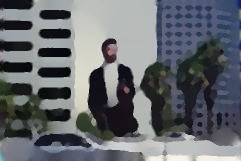

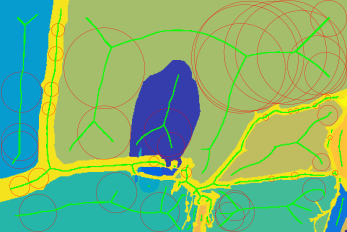

Image from BSDS300

Ground-truth segmentation

Ground-truth skeleton

Medial point detection: binary classification problem

Features designed for bilateral symmetry of image regions

Scale

Orientation

Compute colour and texture histograms for "inside" and "outside" regions, at each scale and orientation:

- High histogram distance \(\chi^2(\cdot,\cdot)\) between "inside" and "outside", for both pairs? High chance of symmetry!

- \(\chi^2(\cdot,\cdot) \sim \chi^2(\cdot,\cdot) \approx 0\)? Low chance of symmetry!
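The inside/outside comparison can be sketched with the chi-squared histogram distance \(\chi^2(g,h) = \frac{1}{2}\sum_i (g_i-h_i)^2/(g_i+h_i)\). This NumPy sketch uses grayscale intensities only, one horizontal orientation and one scale; the region layout and the number of bins are assumptions, and the actual features also use colour and texture cues over many scales and orientations.

```python
import numpy as np

def histogram(values, bins=8):
    """Normalised intensity histogram of a region (intensities in [0, 1])."""
    h, _ = np.histogram(values, bins=bins, range=(0.0, 1.0))
    return h / max(h.sum(), 1)

def chi2(g, h, eps=1e-12):
    """Chi-squared distance between two normalised histograms."""
    return 0.5 * np.sum((g - h) ** 2 / (g + h + eps))

def symmetry_features(img, y, x, s):
    """Distances between an 'inside' square of half-size s centred at (y, x) and
    the 'outside' strips directly above and below it (horizontal axis through
    (y, x); hypothetical layout)."""
    cols = slice(x - s, x + s + 1)
    inside = img[y - s:y + s + 1, cols]
    above = img[y - 2 * s - 1:y - s, cols]
    below = img[y + s + 1:y + 2 * s + 2, cols]
    h_in = histogram(inside)
    return chi2(h_in, histogram(above)), chi2(h_in, histogram(below))

img = np.zeros((20, 20))
img[7:14, :] = 1.0                      # bright horizontal bar, rows 7..13
bar = symmetry_features(img, 10, 10, 3)
flat = symmetry_features(np.zeros((20, 20)), 10, 10, 3)
```

On the bar both distances are close to 1 (likely symmetry axis); on the flat image both are 0.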

Train with multiple instance learning

Symmetry probability:

Goal: learn w

Challenge: no ground-truth annotation for scale and orientation, so each pixel gives a "bag" of instances, one per scale and orientation

The bag is positive if at least one instance is positive

The bag is negative if all instances are negative

Noisy-OR = differentiable "max"
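The noisy-OR bag probability and its training objective can be sketched as below. We assume a logistic instance probability \(p_j = \sigma(\mathbf{w}^\top \mathbf{f}_j)\), one instance per scale and orientation; the toy bags, learning rate and iteration count are made up for illustration.

```python
import numpy as np

def instance_probs(w, feats):
    """p_j = sigmoid(w . f_j) for every instance (row) of a bag."""
    return 1.0 / (1.0 + np.exp(-feats @ w))

def bag_prob(w, feats):
    """Noisy-OR: the bag is negative only if every instance is negative."""
    return 1.0 - np.prod(1.0 - instance_probs(w, feats))

def mil_loss_and_grad(w, bags, labels):
    """Bag-level negative log-likelihood under noisy-OR, and its gradient."""
    loss, grad = 0.0, np.zeros_like(w)
    for feats, y in zip(bags, labels):
        p = instance_probs(w, feats)
        q = np.prod(1.0 - p)                       # probability that all are negative
        P = np.clip(1.0 - q, 1e-12, 1.0 - 1e-12)   # bag probability
        loss -= y * np.log(P) + (1 - y) * np.log(1.0 - P)
        dP = q * (p[:, None] * feats).sum(axis=0)  # dP/dw = q * sum_j p_j f_j
        grad -= (y / P - (1 - y) / (1.0 - P)) * dP
    return loss, grad

# Toy data: feature = (response, bias). Positive bags hide one high-response instance.
bags = [np.array([[-1.0, 1.0], [-2.0, 1.0]]), np.array([[-1.5, 1.0], [3.0, 1.0]]),
        np.array([[-1.0, 1.0], [-1.0, 1.0]]), np.array([[2.0, 1.0], [-2.0, 1.0]])]
labels = [0, 1, 0, 1]
w = np.zeros(2)
for _ in range(2000):                   # plain gradient descent
    _, g = mil_loss_and_grad(w, bags, labels)
    w -= 0.05 * g
```

No instance-level label is used: the positive instance inside each positive bag is found by the optimisation.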

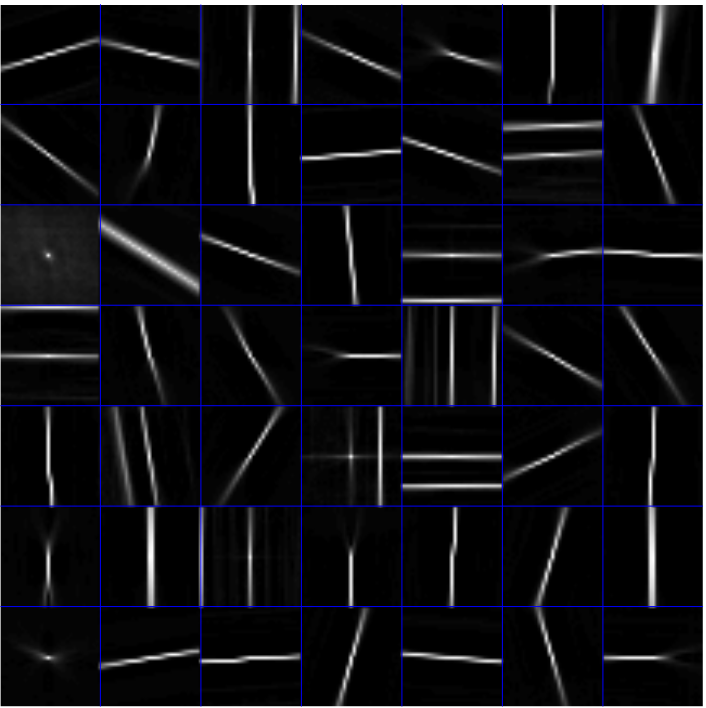

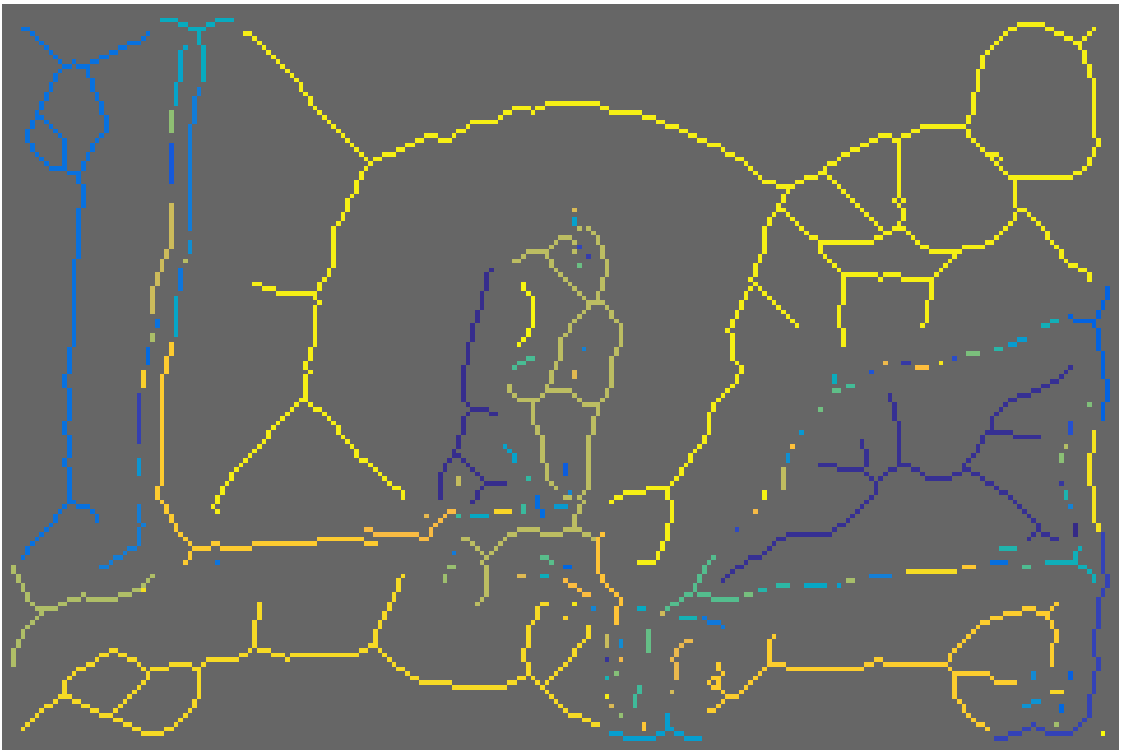

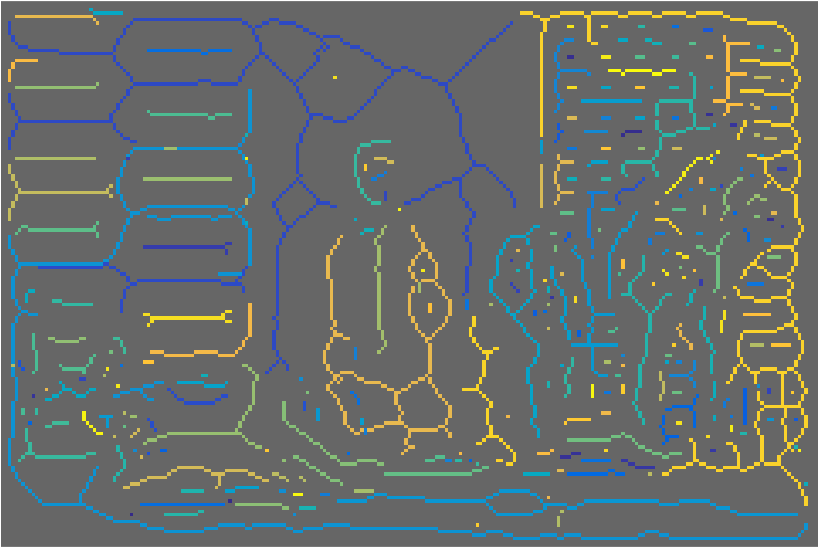

Dense feature extraction

Multiple scales

Multiple orientations

Computing symmetry probabilities

Orientation

Scale

Symmetry probability

Non-maximum suppression
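These steps can be sketched as follows: collapse the per-scale, per-orientation probabilities into one map (keeping the best orientation), then keep only pixels that are maxima across the axis, i.e. along the normal of their orientation. The array layout `P[scale, orientation, y, x]` and the snapping of the normal to the 8-neighbourhood are our assumptions.

```python
import numpy as np

def symmetry_map(P, thetas):
    """Collapse P[scale, orientation, y, x] into a probability map and the
    axis orientation (radians) that achieves it."""
    per_orient = P.max(axis=0)                 # best scale for each orientation
    best = per_orient.argmax(axis=0)           # best orientation per pixel
    return per_orient.max(axis=0), np.asarray(thetas)[best]

def nms(prob, theta):
    """Keep pixels that are maxima across the axis, i.e. along its normal."""
    H, W = prob.shape
    out = np.zeros_like(prob)
    for y in range(1, H - 1):
        for x in range(1, W - 1):
            # normal of an axis at angle theta (x to the right, y down), snapped to the grid
            dx = int(round(-np.sin(theta[y, x])))
            dy = int(round(np.cos(theta[y, x])))
            p = prob[y, x]
            if p >= prob[y + dy, x + dx] and p >= prob[y - dy, x - dx]:
                out[y, x] = p
    return out

H, W = 11, 9
ridge = np.exp(-(np.arange(H)[:, None] - 5.0) ** 2) * np.ones((1, W))  # ridge on row 5
P = np.stack([np.stack([ridge, 0.5 * ridge]),          # scale 1: orientations 0, pi/2
              np.stack([0.8 * ridge, 0.1 * ridge])])   # scale 2
prob, theta = symmetry_map(P, [0.0, np.pi / 2])
thin = nms(prob, theta)
```

The blurry ridge is thinned to a one-pixel-wide horizontal axis on row 5.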

Fast detection with decision forests

Symmetry "tokens"

Clustering

~0.5 sec per image (40-60x faster than MIL)

MAT should be invertible

Generative definition of medial disks

- f: summarizes patch (encoding)

- g: reconstructs patch (decoding)

Appearance MAT (AMAT) definition

...

for all p,r

A trivial solution

Select every pixel as a medial point (disks of radius 1).

Perfect reconstruction quality!

Not very useful in practice...

Goal: balance between sparsity and reconstruction

- Dense representation: low reconstruction error

- Sparse representation: high reconstruction error

Favor the selection of larger disks...

...as long as they do not incur a high reconstruction error.

Add a regularization term to the disk cost: \( c_{\mathbf{p},r} = e_{\mathbf{p},r} + \frac{w}{r} \), where \( e_{\mathbf{p},r} \) is the reconstruction error of disk \( D_{\mathbf{p},r} \). Increasing \( w \) favors larger disks.

AMAT is a weighted geometric set cover (WGSC) problem

- Set we want to cover: the 2D image

- Covering elements (range): disks of radii {1,...,R}

- Set costs: \( c_{\mathbf{p},r} \)

WGSC is NP-hard! Polynomial-time approximation schemes (PTAS) exist

Greedy algorithm

- Compute all costs \( c_{\mathbf{p},r} \).

- While the image has not been completely covered:

  - Select the disk \( D_{\mathbf{p^*},r^*} \) with the lowest cost.

  - Add the point \( (\mathbf{p^*},r^*,\mathbf{f}_{\mathbf{p^*},r^*}) \) to the solution.

  - Mark the disk pixels as covered.

  - Update the costs \( c_{\mathbf{p},r} \).

Approximation algorithms, Vijay V. Vazirani
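The greedy loop can be sketched in NumPy as follows. We assume "update costs" means dividing each disk's cost by the number of still-uncovered pixels it contains (the textbook greedy rule for weighted set cover), and we use a hypothetical encoder/decoder pair on a grayscale image: f is the mean intensity of the disk and g fills the disk with it. The actual AMAT encoding and error terms may differ.

```python
import numpy as np

def disk_mask(shape, p, r):
    """Pixels within distance r - 1 of p, so a radius-1 disk is a single pixel."""
    yy, xx = np.ogrid[:shape[0], :shape[1]]
    return (yy - p[0]) ** 2 + (xx - p[1]) ** 2 <= (r - 1) ** 2

def greedy_amat(img, R=4, w=1.0):
    """Greedy weighted set cover of a grayscale image with disks of radius 1..R.

    Cost of a disk: c = e + w / r, where e is the summed squared error of
    reconstructing the disk by its mean intensity (hypothetical encoding).
    """
    H, W = img.shape
    disks = []
    for y in range(H):
        for x in range(W):
            for r in range(1, R + 1):
                if min(y, x, H - 1 - y, W - 1 - x) < r - 1:
                    continue                    # keep disks fully inside the image
                m = disk_mask(img.shape, (y, x), r)
                f = img[m].mean()
                e = ((img[m] - f) ** 2).sum()
                disks.append(((y, x), r, m, e + w / r, f))
    covered = np.zeros(img.shape, dtype=bool)
    solution = []
    while not covered.all():
        # cost-effectiveness: disk cost divided by the number of newly covered pixels
        best, best_ratio = None, np.inf
        for d in disks:
            new = np.count_nonzero(d[2] & ~covered)
            if new and d[3] / new < best_ratio:
                best, best_ratio = d, d[3] / new
        p, r, m, _, f = best
        solution.append((p, r, f))
        covered |= m
    return solution

def reconstruct(solution, shape):
    """Decode: paint each selected disk with its mean (later disks overwrite overlaps)."""
    out = np.zeros(shape)
    for p, r, f in solution:
        out[disk_mask(shape, p, r)] = f
    return out
```

With `w = 0` every selected disk has zero reconstruction error, so the reconstruction is exact: this is the trivial solution above. Larger `w` trades reconstruction error for fewer, larger disks.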

AMAT Demo

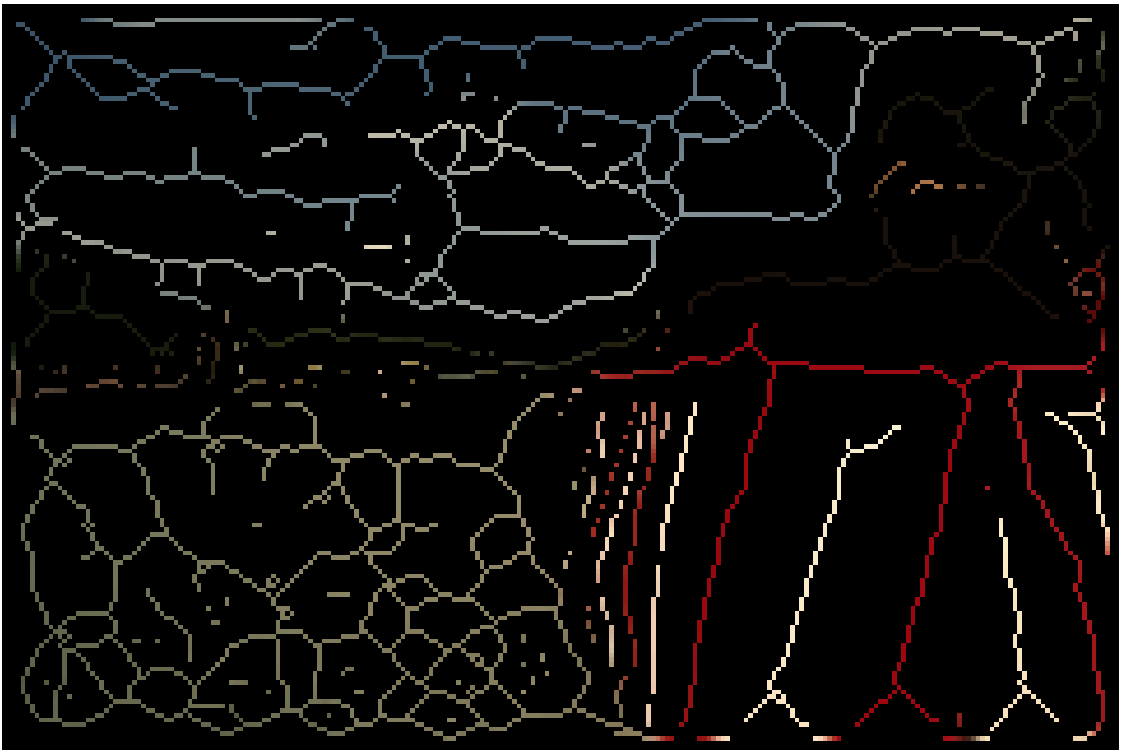

More reconstruction results

Input

MIL

GT-seg

GT-skel

AMAT

Grouping points together...

- space proximity

- smooth scale variation

- color similarity
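One simple way to combine these three cues is to link every pair of medial points that passes all three tests and take connected components with union-find. A sketch only: the thresholds are hypothetical and the actual grouping criteria may be more involved.

```python
import numpy as np

def group_points(points, max_dist=1.5, max_dr=1.0, max_dc=0.1):
    """Group medial points (y, x, radius, colour) into connected components.

    Two points are linked if they are spatial neighbours, their radii differ
    little (smooth scale variation) and their colours are similar.
    """
    parent = list(range(len(points)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving
            i = parent[i]
        return i

    for i, (yi, xi, ri, ci) in enumerate(points):
        for j in range(i + 1, len(points)):
            yj, xj, rj, cj = points[j]
            if (np.hypot(yi - yj, xi - xj) <= max_dist
                    and abs(ri - rj) <= max_dr
                    and np.linalg.norm(np.subtract(ci, cj)) <= max_dc):
                parent[find(i)] = find(j)   # union the two components
    return [find(i) for i in range(len(points))]

points = [(0, 0, 2, (1, 0, 0)), (0, 1, 2, (1, 0, 0)), (0, 2, 3, (1, 0, 0)),
          (0, 3, 3, (0, 0, 1)), (0, 4, 3, (0, 0, 1))]
groups = group_points(points)
```

The first three points form one group; the colour change splits off the last two.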


Input

AMAT

Groups

(color coded)

...opens up possibilities

Thinning

Segmentation

Object proposals

and more...

Qualitative results

Input

AMAT

Groups

Reconstruction

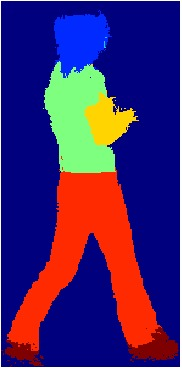

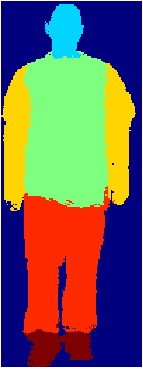

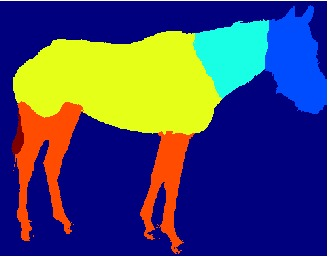

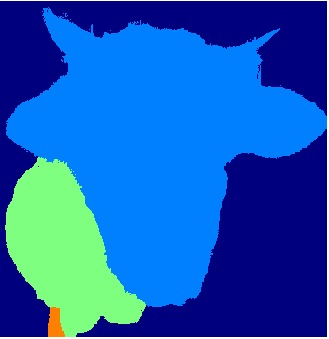

Part segmentation

Fully convolutional neural networks

P(person)

P(horse)

:

P(dog)

dog

person

Fine-tune for part segmentation

head

torso

arms

legs

hands

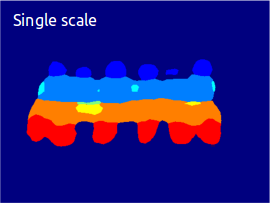

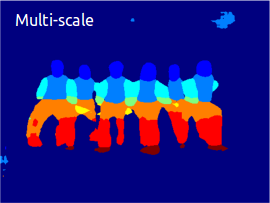

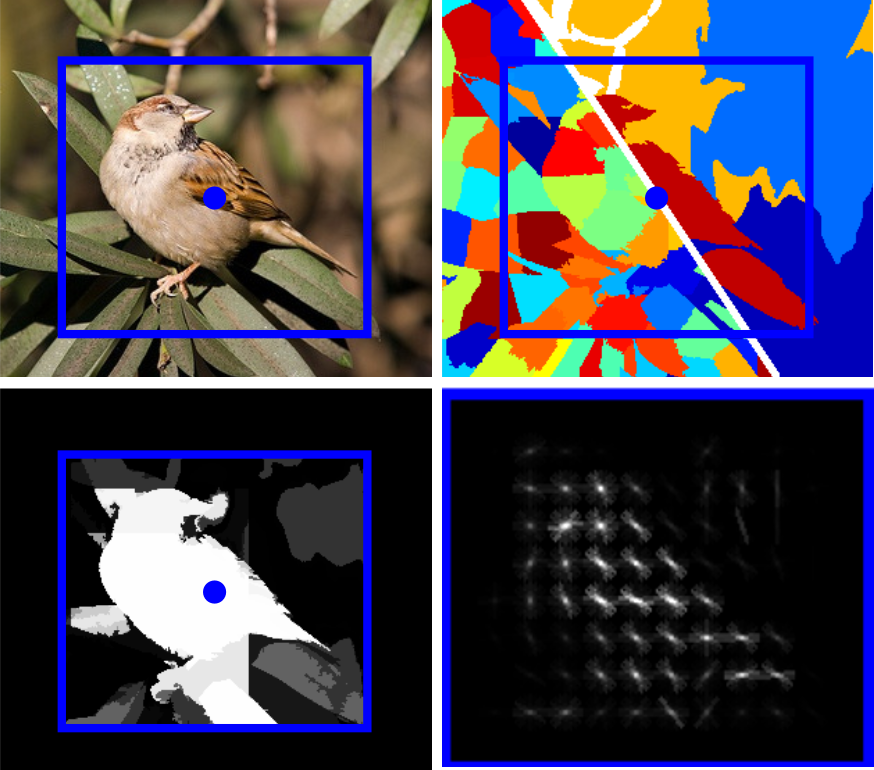

Part segmentation in natural images

Small parts are lost due to downsampling

RGB: 152x152

L1: 142x142

L2: 71x71

L3: 63x63

L4: 55x55

L5: 25x25

L6: 21x21
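The shrinking feature maps follow from the usual output-size formula \(\lfloor (n + 2p - k)/s \rfloor + 1\). The kernel sizes and strides below are hypothetical, chosen only so that they reproduce the sizes listed above; they are not necessarily the network's actual layers.

```python
def conv_out(n, k, s=1, p=0):
    """Spatial size after a convolution or pooling layer."""
    return (n + 2 * p - k) // s + 1

# (name, kernel, stride): hypothetical values that reproduce the sizes above
LAYERS = [("L1", 11, 1), ("L2", 2, 2), ("L3", 9, 1),
          ("L4", 9, 1), ("L5", 7, 2), ("L6", 5, 1)]

def feature_sizes(n, layers=LAYERS):
    """Spatial size of every layer's output, starting from an n x n input."""
    sizes = []
    for _, k, s in layers:
        n = conv_out(n, k, s)
        sizes.append(n)
    return sizes

print(feature_sizes(152))  # [142, 71, 63, 55, 25, 21]
```

The L6 map is about 152/21 ≈ 7 times coarser than the input, so a part only a few pixels wide barely registers.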

Extract features at multiple scales

Scale 1x

Scale 1.5x

Scale 2x

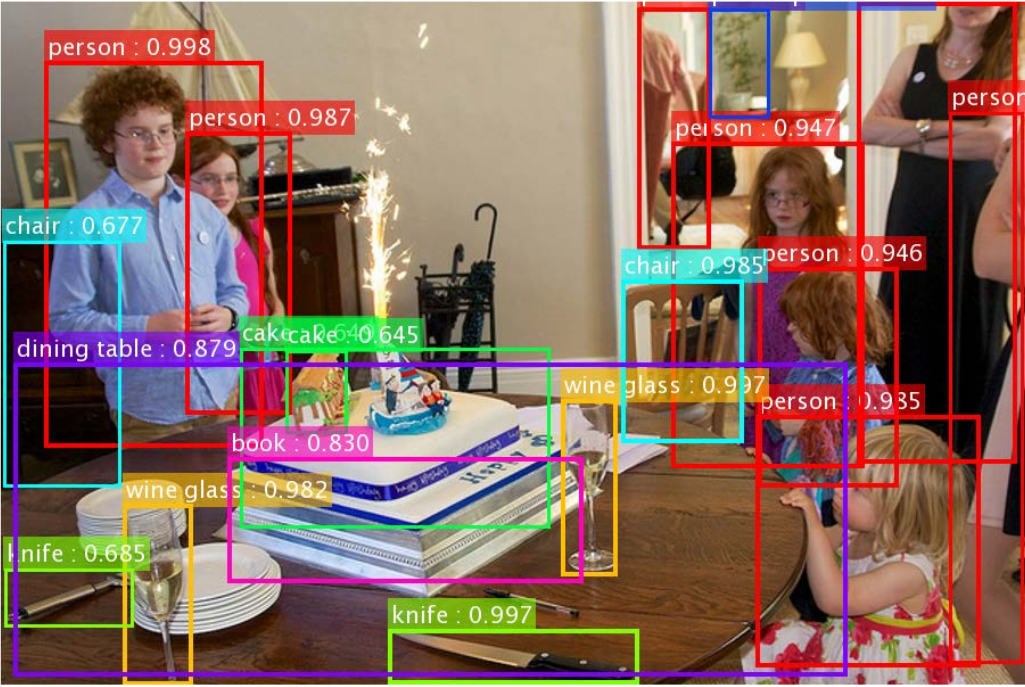

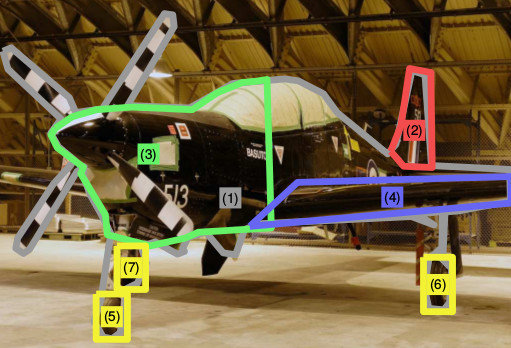

Combine with an object detector

Faster R-CNN: Towards real-time object detection with region proposal networks, S. Ren et al., NIPS 2015

Find the scale at which the bounding box (adjusted for scale) is closest to the network's nominal scale, i.e. the default size of the network's input

Use features from the ideal scale
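The scale-selection rule can be sketched as below. We assume the box size is its longer side and that the candidate scales are the 1x, 1.5x and 2x used above; `nominal` stands for the network's default input size, which is not specified here.

```python
def pick_scale(box, nominal, scales=(1.0, 1.5, 2.0)):
    """Return the scale at which box (x0, y0, x1, y1) best matches the nominal size."""
    size = max(box[2] - box[0], box[3] - box[1])      # longer side (assumption)
    return min(scales, key=lambda s: abs(s * size - nominal))

def assign_scales(boxes, nominal, scales=(1.0, 1.5, 2.0)):
    """Group boxes by their ideal scale: each scale's features are computed once
    and shared by all the objects assigned to it."""
    groups = {s: [] for s in scales}
    for box in boxes:
        groups[pick_scale(box, nominal, scales)].append(box)
    return groups
```

Because the features at each scale are shared, adding more objects only adds cheap lookups, not new forward passes.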

Multi-scale analysis improves results...

...and is efficient for images with many objects

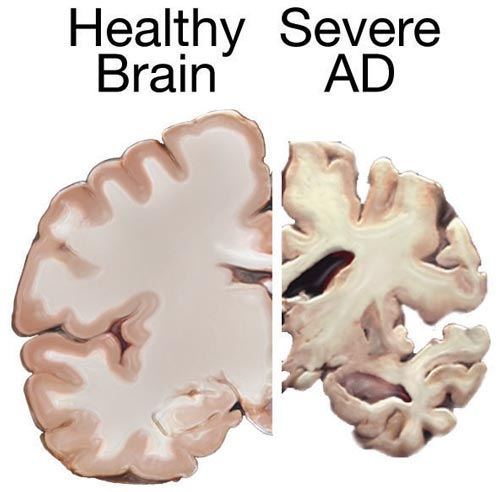

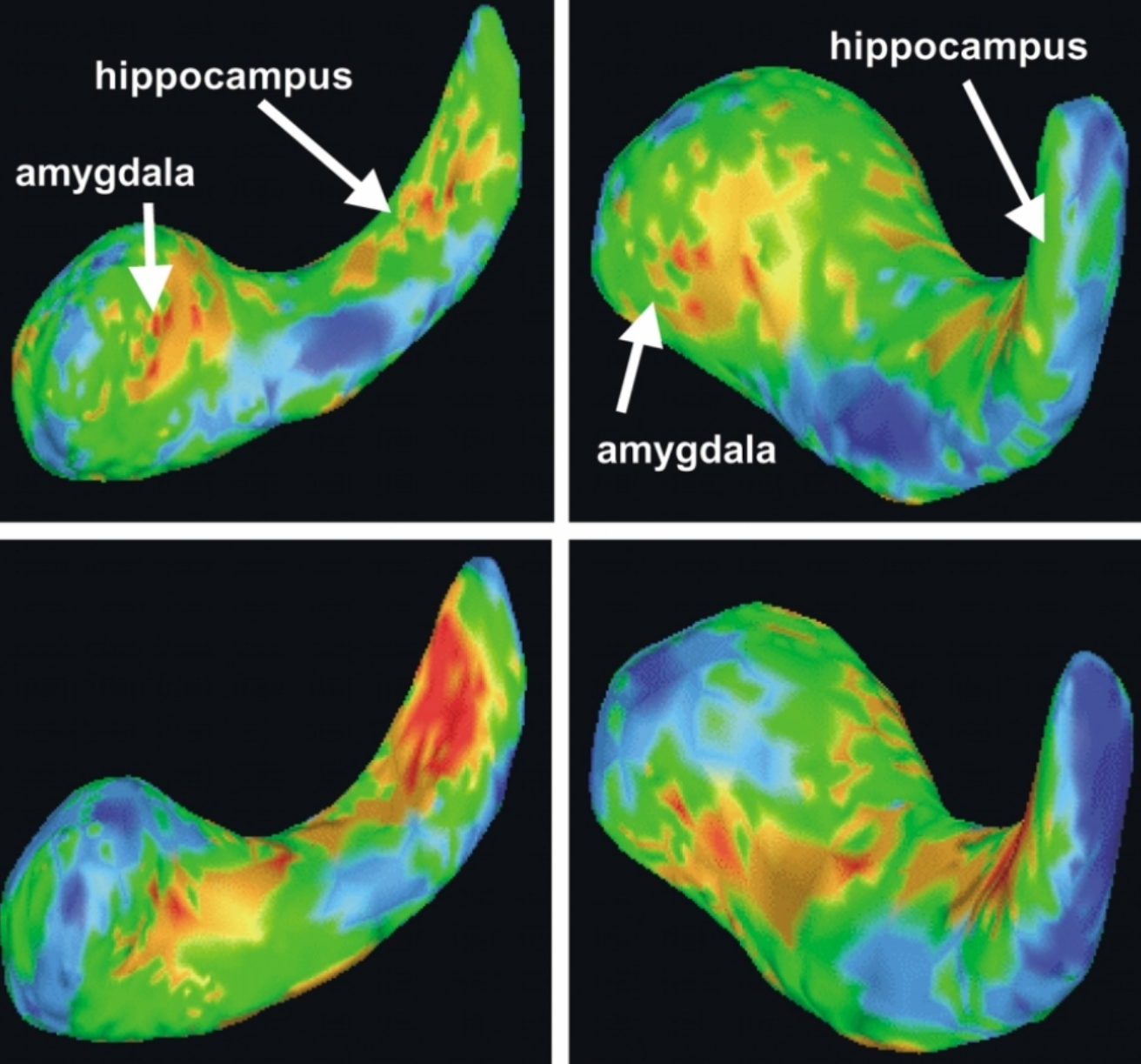

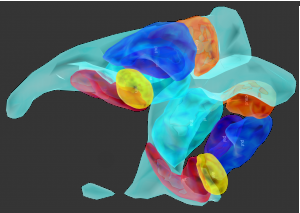

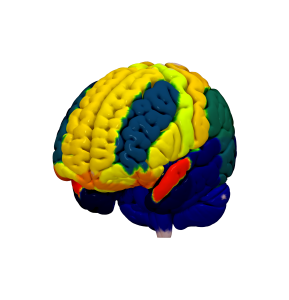

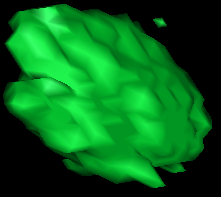

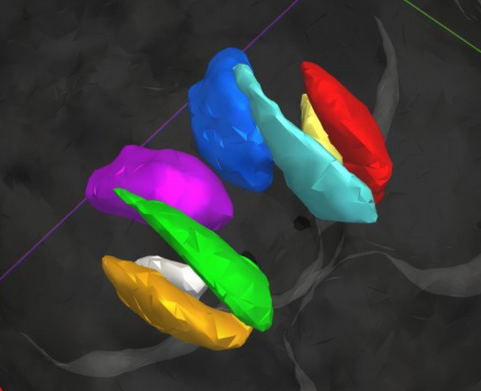

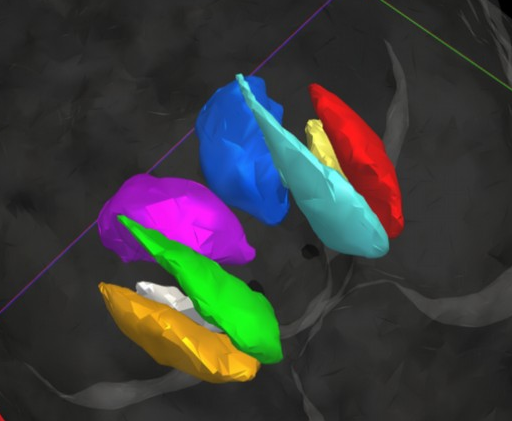

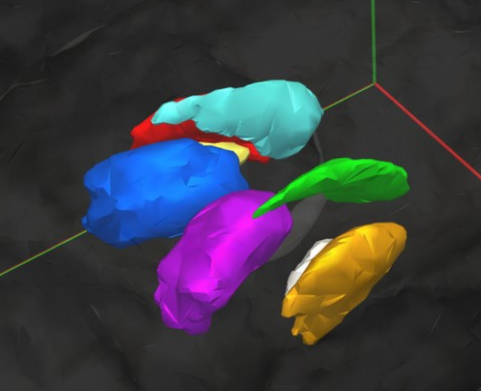

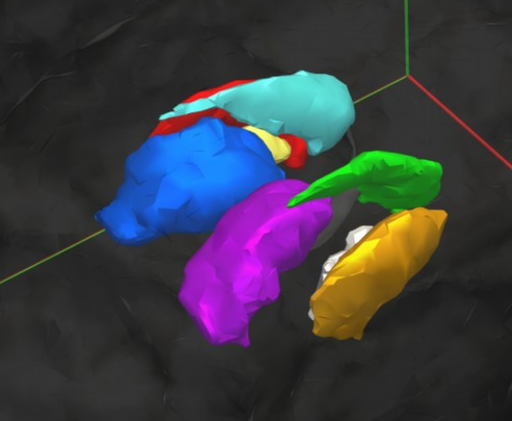

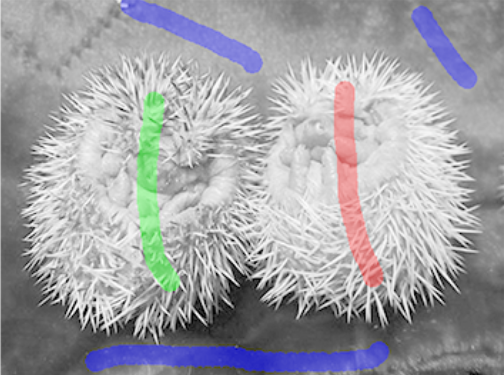

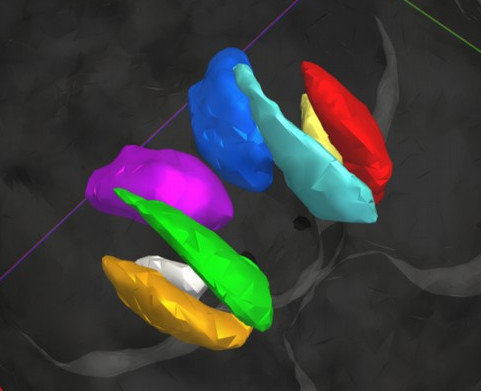

Segmenting brain "parts" is also important

Alzheimer's: structure degeneration

Schizophrenia: volume abnormalities

[Shenton M.E. et al., Psychiatry Res. 2002]

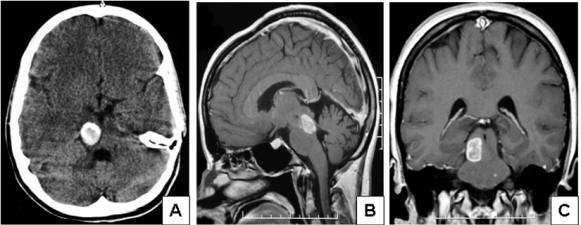

Tumors: avoid radiation on sensitive regions

[Hoehn D. et al., Journal of Medical Cases, 2012]

Why automatic segmentation?

Putamen

Ventricle

Caudate

Amygdala

Hippocampus

Visualization and inspection

No need for manual annotation (time-consuming, requires experts, limited reproducibility)

Non-invasive diagnosis and treatment

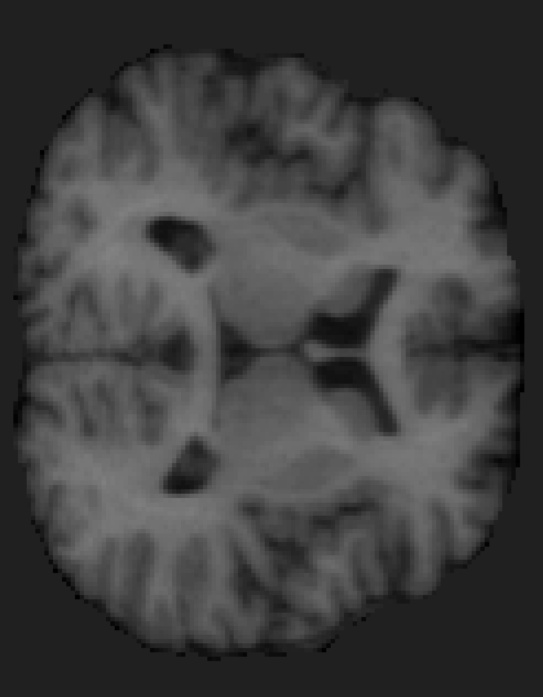

Intensity in MRI is not enough

Spatial arrangement patterns matter

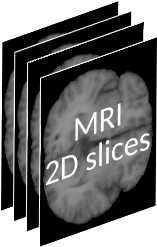

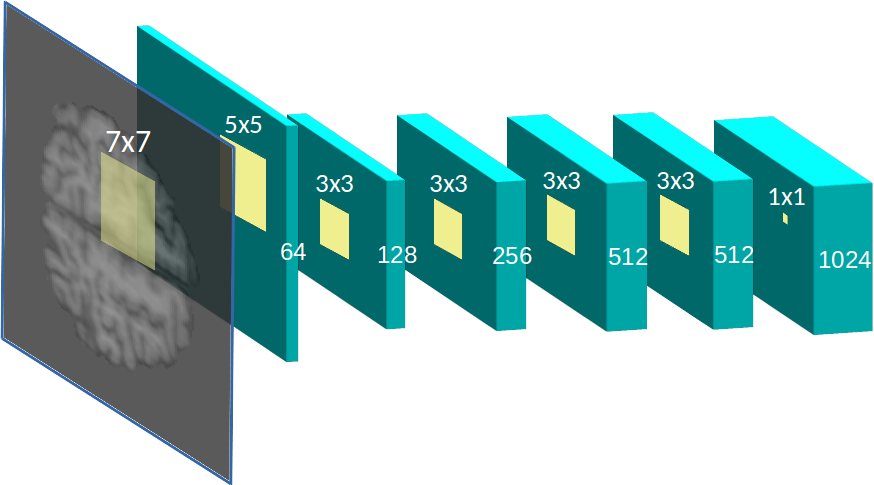

Segmenting subcortical structures in 2D MRI slices with FCNNs

P(thalamus)

P(putamen)

:

P(caudate)

:

P(white matter)

2D slice

thalamus

white matter

From 2D slice to 3D volume segmentation

CNN architecture

- 16 layers including max-pooling and dropout.

- Dilated convolutions for higher resolution.

- Compact architecture (~4GB GPU RAM)
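Dilated convolutions enlarge the receptive field without pooling, so with suitable padding the output keeps the input's resolution. A 1D NumPy sketch of a dilated ("valid") convolution and of the receptive field of stacked stride-1 layers, \(1 + \sum_l (k_l - 1)\,d_l\); the kernel sizes and dilation rates are illustrative, not the network's.

```python
import numpy as np

def dilated_conv1d(x, w, d):
    """'Valid' 1D cross-correlation with dilation d: y[i] = sum_j w[j] * x[i + j*d]."""
    span = (len(w) - 1) * d + 1
    return np.array([sum(w[j] * x[i + j * d] for j in range(len(w)))
                     for i in range(len(x) - span + 1)])

def receptive_field(kernels, dilations):
    """Receptive field of stacked stride-1 (dilated) convolutions."""
    return 1 + sum((k - 1) * d for k, d in zip(kernels, dilations))

print(receptive_field([3, 3, 3], [1, 1, 1]))  # 7
print(receptive_field([3, 3, 3], [1, 2, 4]))  # 15
```

Three 3-tap layers see 15 inputs instead of 7 when the dilation doubles per layer, with no downsampling.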

MRF enforces volume homogeneity

f(CNN output)

d(intensities)

Solve with \(\alpha\)-expansion
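One common form of such an energy, which we assume here, uses \(f = -\log p_v(\ell_v)\) from the CNN probabilities as the unary term and a contrast-sensitive Potts term as \(d\), whose weight drops across intensity edges. The sketch evaluates that energy on a 3D volume. α-expansion needs a max-flow solver, so we swap in iterated conditional modes (ICM), a much simpler local optimiser, only to illustrate how the MRF removes an isolated spurious label.

```python
import numpy as np

def mrf_energy(labels, probs, vol, lam=1.0, sigma=0.1):
    """E = sum_v -log p_v(l_v) + lam * sum_{u~v} exp(-(I_u - I_v)^2 / 2 sigma^2) [l_u != l_v].

    labels, vol: (Z, Y, X); probs: (K, Z, Y, X) CNN class probabilities.
    """
    p = np.take_along_axis(probs, labels[None], axis=0)[0]
    unary = -np.log(p + 1e-12).sum()
    pairwise = 0.0
    for axis in range(3):                      # 6-connected neighbourhood
        cut = np.diff(labels, axis=axis) != 0
        weight = np.exp(-np.diff(vol, axis=axis) ** 2 / (2 * sigma ** 2))
        pairwise += (weight * cut).sum()
    return unary + lam * pairwise

def icm(probs, vol, lam=1.0, sigma=0.1, sweeps=3):
    """Iterated conditional modes (a simple stand-in for alpha-expansion).
    Recomputes the full energy for every candidate label, for clarity only."""
    labels = probs.argmax(axis=0)
    for _ in range(sweeps):
        for idx in np.ndindex(labels.shape):
            energies = []
            for k in range(probs.shape[0]):
                labels[idx] = k
                energies.append(mrf_energy(labels, probs, vol, lam, sigma))
            labels[idx] = int(np.argmin(energies))
    return labels

# Toy volume: class 0 everywhere, plus one spurious class-1 voxel from the CNN.
p1 = np.full((1, 5, 5), 0.1)
p1[0, 2, 2] = 0.6
probs = np.stack([1.0 - p1, p1])
vol = np.zeros((1, 5, 5))
out = icm(probs, vol)
```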

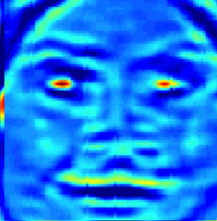

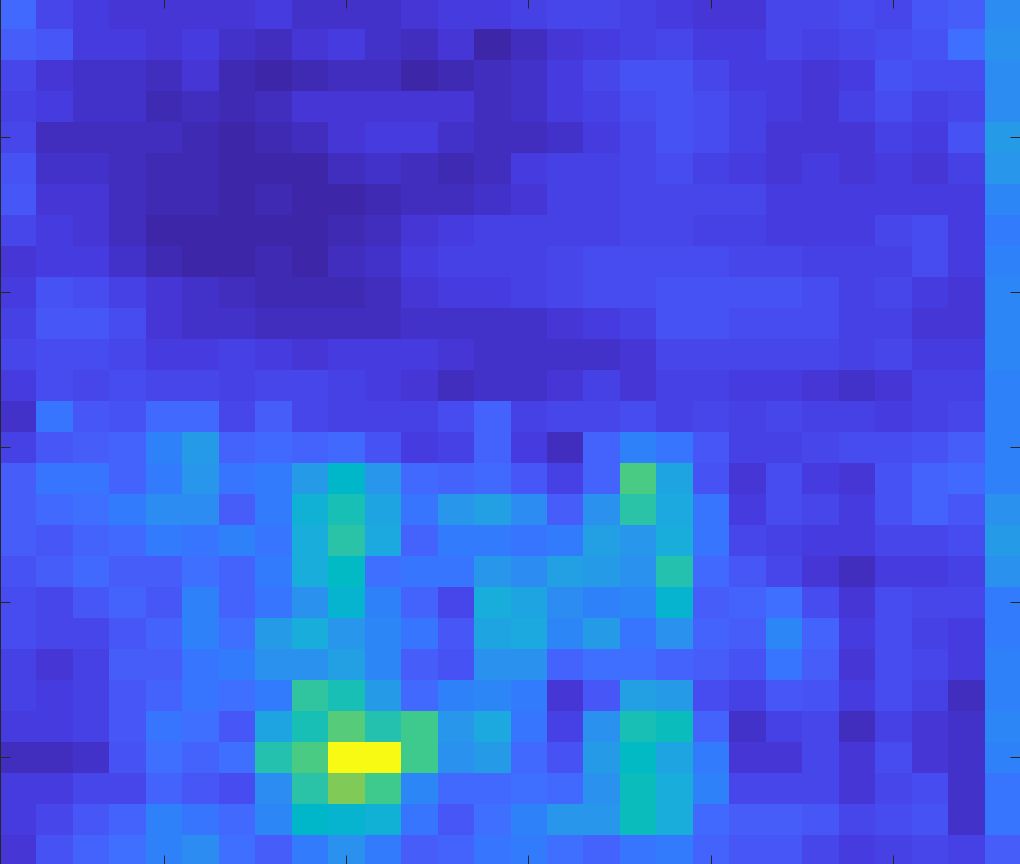

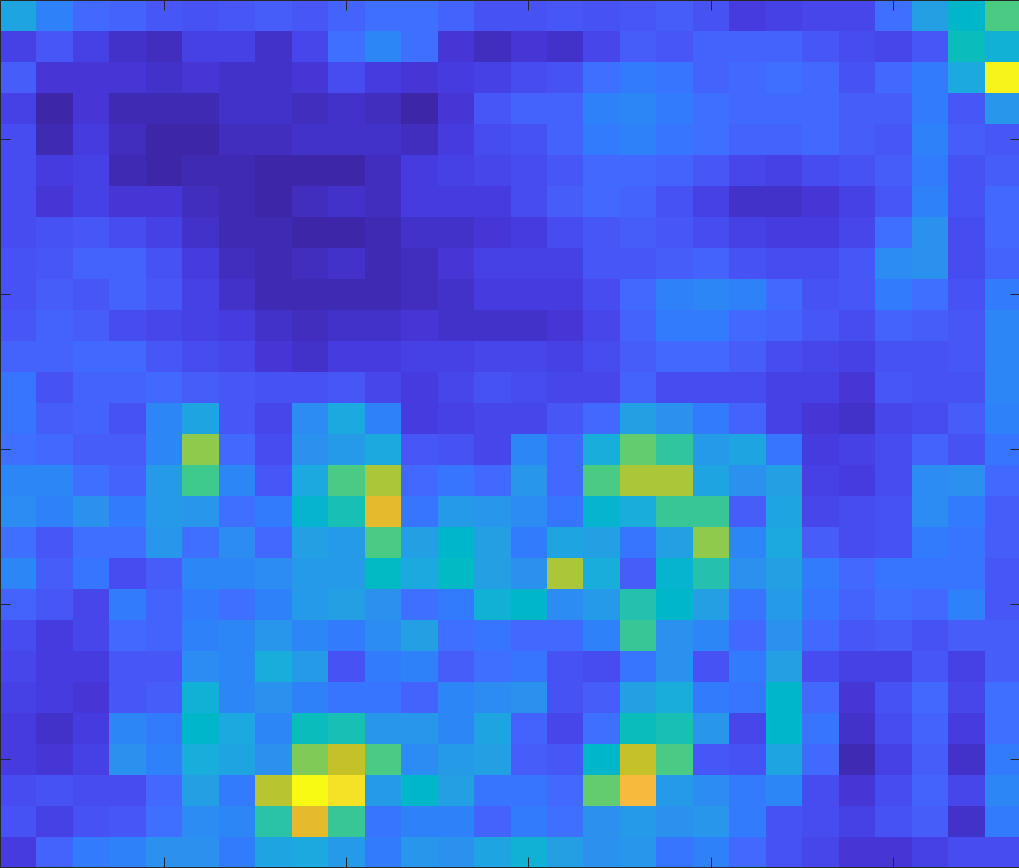

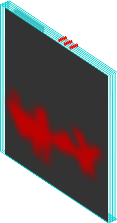

MRF removes spurious responses

CNN

CNN+MRF

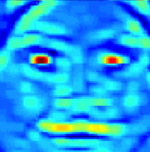

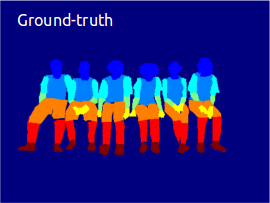

3D segmentation results

Our results

Ground truth

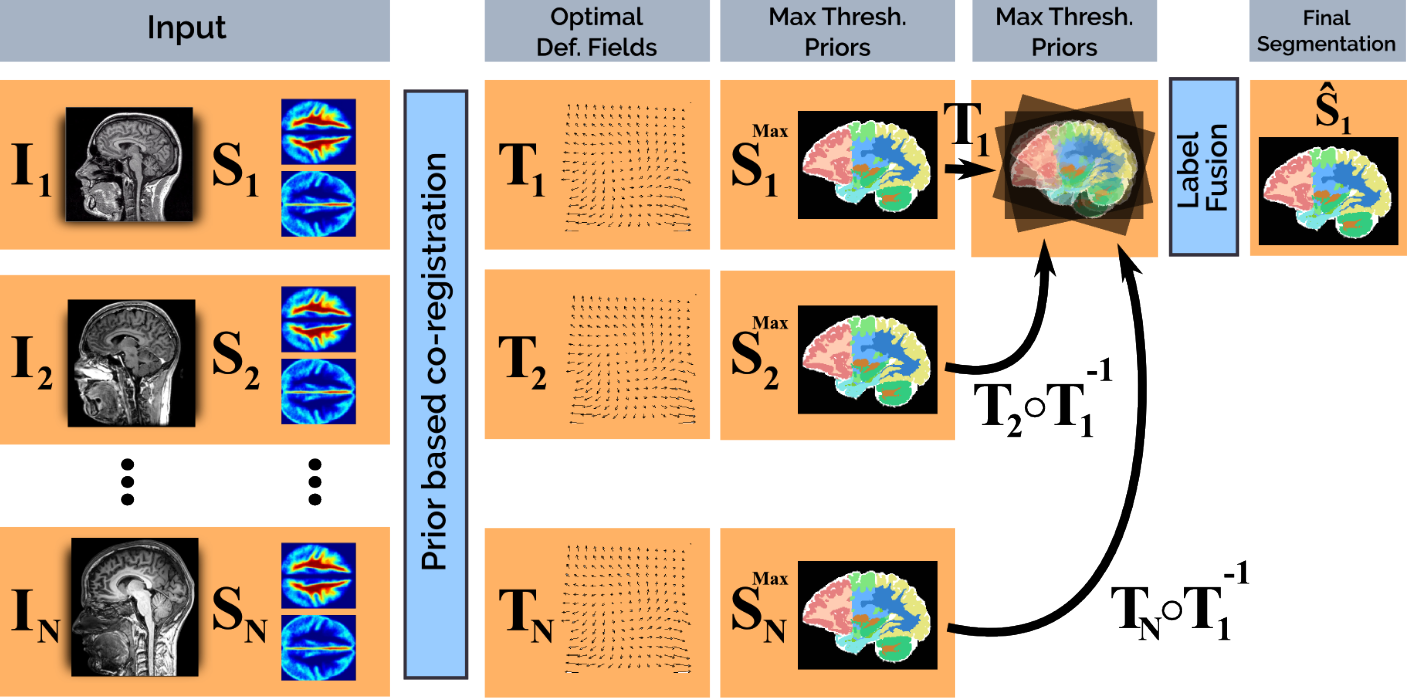

Deep priors for coregistration and cosegmentation

Future work

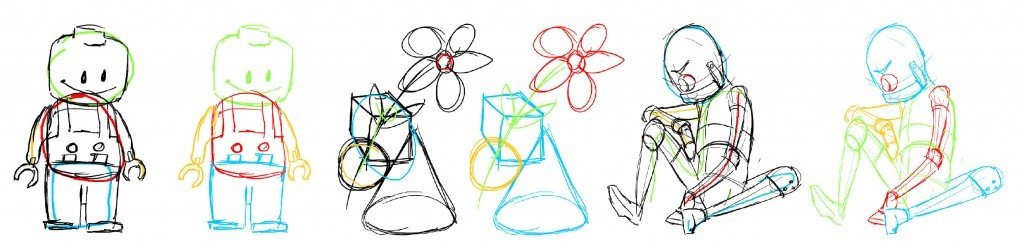

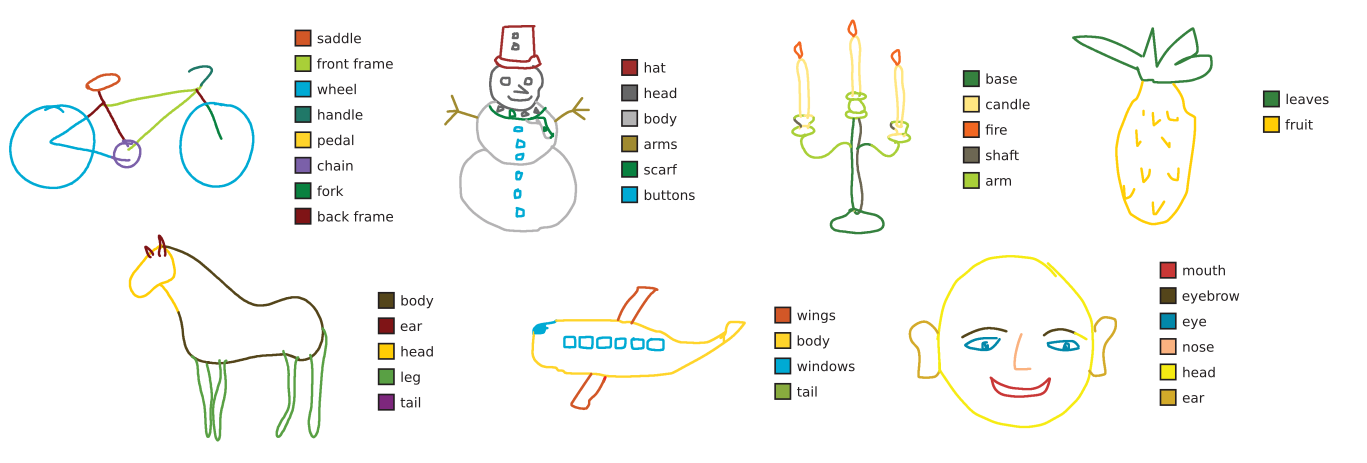

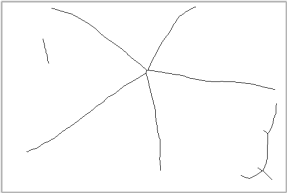

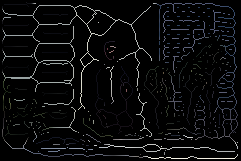

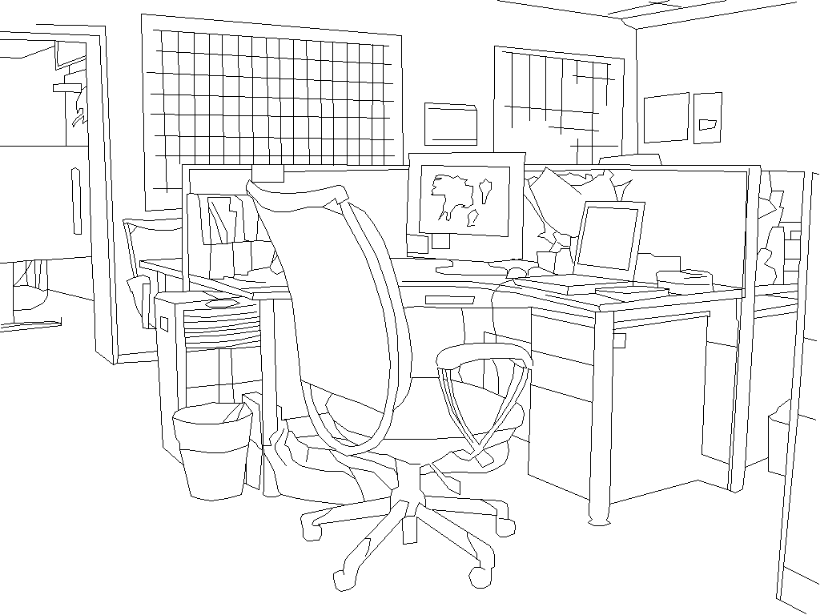

Recognition in line drawings

Chair

Monitor

Basket

Office

Intuitive form of communication

Sketch-based image retrieval

3D models from sketches

Grouping is key

Smart scribbles for sketch segmentation, Noris et al., Computer Graphics Forum

Example-based sketch segmentation and labelling using CRFs, Schneider et al., TOG 2016

Gestalt grouping principles

Proximity

Parallelism

Continuity

Closure

Learn to group from synthetic data

Use CNN to extract point embeddings

Points on the same shape have similar embeddings

Points on different shapes have dissimilar embeddings

Cluster embeddings to obtain groups
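The embedding objective and the final clustering can be sketched as below. The pairwise contrastive loss with a margin and the greedy radius-based clustering are our assumptions, not necessarily the losses used here; in practice the embeddings come from the CNN, while here they are hand-made 2D points.

```python
import numpy as np

def embedding_loss(emb, ids, margin=1.0):
    """Pairwise contrastive loss (assumed form): pull points of the same shape
    together, push points of different shapes at least `margin` apart."""
    d = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
    same = ids[:, None] == ids[None, :]
    np.fill_diagonal(same, False)               # ignore self-pairs
    diff = ids[:, None] != ids[None, :]
    terms = same * d ** 2 + diff * np.maximum(0.0, margin - d) ** 2
    n = len(ids)
    return terms.sum() / (n * (n - 1))

def cluster(emb, radius=0.5):
    """Greedy clustering: join the nearest existing centre within `radius`,
    otherwise start a new group."""
    centres, labels = [], []
    for e in emb:
        dists = [np.linalg.norm(e - c) for c in centres]
        if dists and min(dists) < radius:
            labels.append(int(np.argmin(dists)))
        else:
            centres.append(e)
            labels.append(len(centres) - 1)
    return labels

emb = np.array([[0.0, 0.0], [0.1, 0.0], [2.0, 2.0], [2.1, 2.0]])
```

With the correct shape assignment `[0, 0, 1, 1]` the loss is small, and `cluster(emb)` recovers the same two groups.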

RNNs for shape embeddings


triangle

square

circle

- grouping

- classification

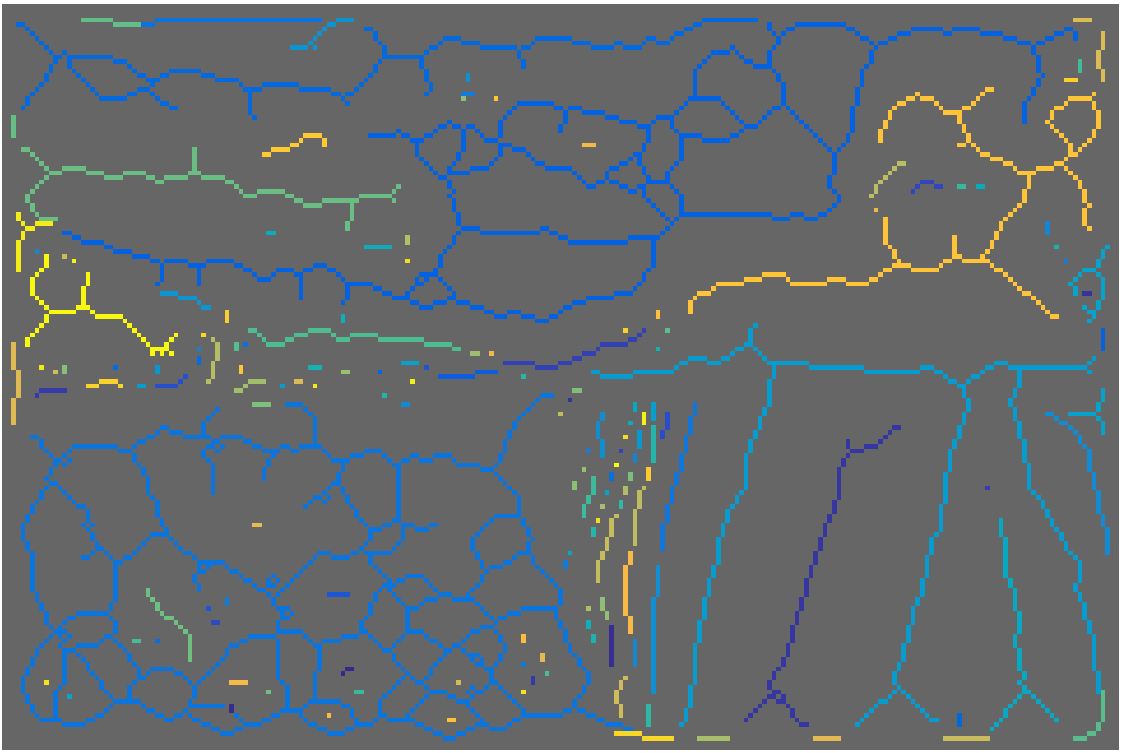

Application to complex scenes?

Edge detector result

Boundaries and medial axes are dual representations of objects

edge loss \(l_e\)

skeleton loss \(l_s\)

Edge detection network

Skeleton detection network

But they are usually extracted independently

Exploit duality to jointly learn boundaries and skeletons

edge loss \(l_e\)

skeleton loss \(l_s\)

consistency loss \(l_c\)

- Single network (more efficient)

- Joint optimization should improve accuracy

Autoencoders for image patches

Input patch \(P\)

Reconstruction \(\tilde P\)

Reconstruction loss \(L(P, \tilde{P})\)

Self-supervised task

encoder

decoder

Learn homogeneity for textured patches from segmentation data

Rank loss: \(L(P_h, \tilde{P}_h) < L(P_l, \tilde{P}_l)\), for a patch \(P_h\) of high homogeneity and a patch \(P_l\) of low homogeneity
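A hinge-style ranking loss is one way to express this constraint: it is zero once the homogeneous patch is reconstructed better, by a margin, than the inhomogeneous one. The encoder and decoder below are fixed toy functions (mean colour in, constant patch out), hypothetical stand-ins for the trained networks, used only to show how the loss is computed.

```python
import numpy as np

def encode(patch):
    """Toy encoder (hypothetical): summarise an (H, W, C) patch by its mean colour."""
    return patch.reshape(-1, patch.shape[-1]).mean(axis=0)

def decode(code, shape):
    """Toy decoder (hypothetical): fill the whole patch with the code."""
    return np.broadcast_to(code, shape)

def recon_loss(patch):
    """Mean squared reconstruction error L(P, P~)."""
    return float(np.mean((patch - decode(encode(patch), patch.shape)) ** 2))

def rank_loss(p_high, p_low, margin=0.01):
    """Hinge rank loss: zero once L(P_h) + margin <= L(P_l)."""
    return max(0.0, margin + recon_loss(p_high) - recon_loss(p_low))

flat = np.full((8, 8, 3), 0.5)                                   # high homogeneity
checker = np.repeat((np.indices((8, 8)).sum(axis=0) % 2)[..., None], 3, axis=2).astype(float)
```

`rank_loss(flat, checker)` is 0 (ordering already satisfied), while swapping the arguments gives a positive loss that training would push down.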

Applications

Painterly rendering

Interactive segmentation

Constrained image editing

Acknowledgements

Mahsa Shakeri

Enzo Ferrante

Siddhartha Chandra

Eduard Trulls

P.A. Savalle

George Papandreou

Sven Dickinson

Nikos Paragios

Iasonas Kokkinos

Andrea Vedaldi

Thank you for your attention!

Symmetry

Medical imaging

Segmentation and parts

By tsogkas