Ensemble Learning

and Random Forests

Tzu-Li Tai @ NCKU TechOrange

Learning Path to Data Science - Machine Learning Session #4

Outline

- What is ensemble learning?

- Why is ensemble useful?

- Random Forests

- Hands-on Orange practice

First things first...

Who can give me a brief recap of what we have learnt already?

- Logistic Regression

- K-Nearest Neighbour

- Classification Tree (Decision Tree)

- SVM

Use 1 sentence to explain what a classifier is!

Given a set of training examples, a classification algorithm outputs a classifier.

The classifier is an hypothesis about the true function

The Hypothesis space, of a training set

: the true function

(optimal classifier)

: different solutions

of the classifier

Ensemble Classification

- A popular technique to increase the accuracy of classifiers

- An ensemble classifier is a set of classifiers whose individual decision are combined in some way (typically by voting)

whole training data ( instances)

Classification learning algorithm

Classifier

manipulate

training data

What makes a good ensemble?

The overall ensemble classifier will be more accurate than a single individual classifier if ...

- The base models are diverse

- The base models are accurate

Why Diverse?

=> Ideal if

make different predictions

Why Accurate?

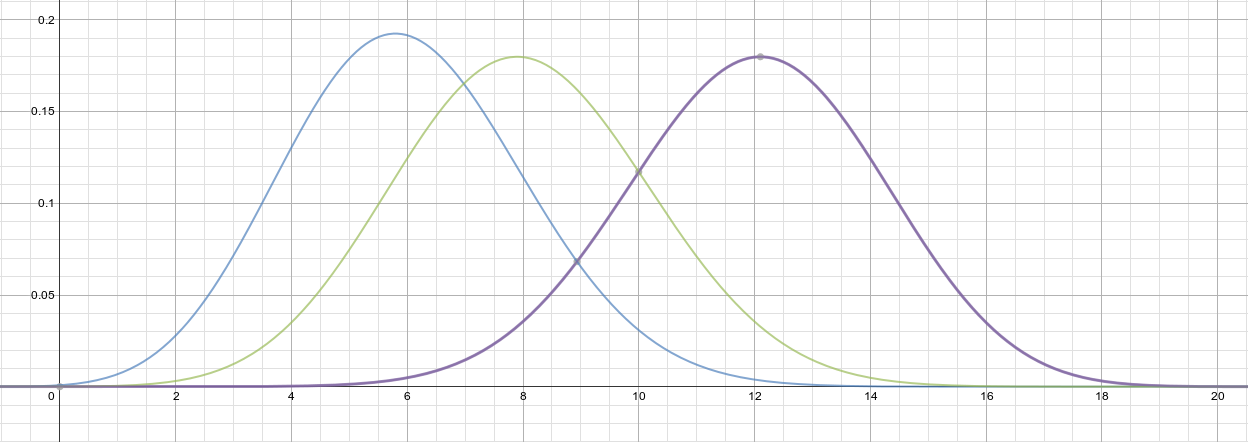

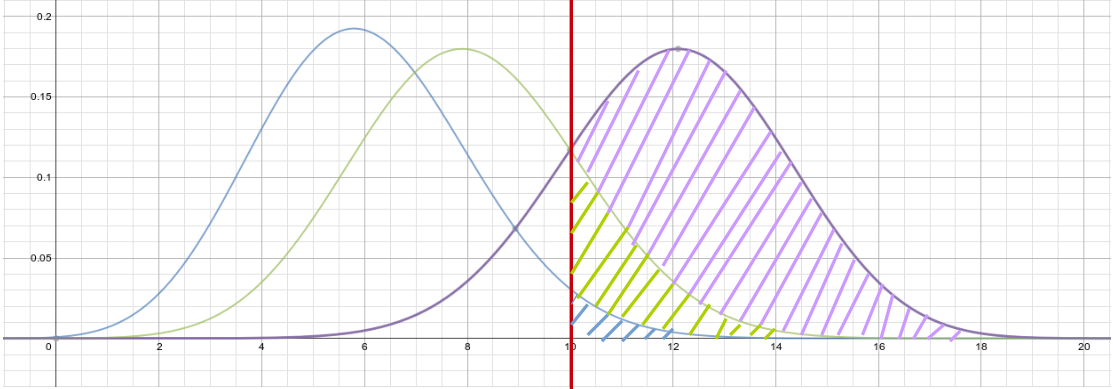

- Suppose m=20 base classifiers

- Each classifier has error rate

- If majority voting is used, > 10 wrong predictions will result in overall wrong classification result

=> Binomial distribution!

--- : p = 0.3, m = 20

--- : p = 0.4, m = 20

--- : p = 0.6, m = 20

--- : Cumulative prob. P(x>10) = 0.01714

--- : Cumulative prob. P(x>10) = 0.12752

--- : Cumulative prob. P(x>10) = 0.75534

Why is ensemble better?

This is going to be kinda vague...

but we can put it into two categories:

- Statistical

- Computational

Statistical property

"Average"

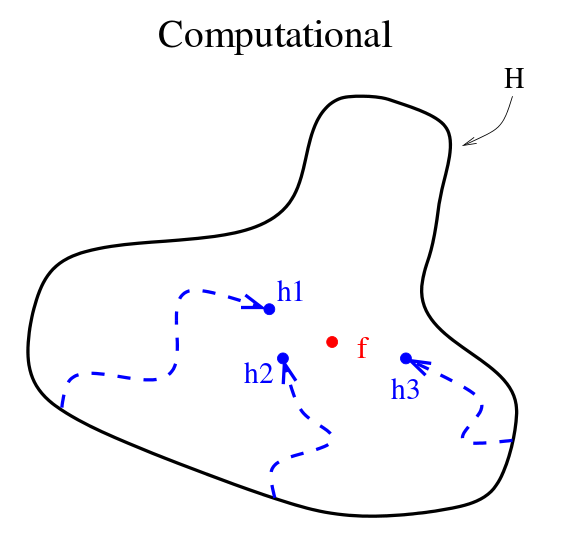

Computational property

Different local search

starting points

Methods to constructing ensembles

=> manipulate training data to form m subsets to

generate m hypotheses

- Bagging

- Boosting

Bagging

"Bootstrap Aggregating"

training instances =>

randomly select

instances with replacement for each subset

=> each subset is expected to have the fraction

of unique instances in the original training set

Boosting

=> iteratively learn from the constructed base classifiers

AdaBoost

the most famous boosting algorithm...

"Adaptive Boosting"

=> weak to strong

Adaboost

- Maintains a weight for all instances in the training data

- In each iteration , hypothesis is calculated to minimize the weighted error on the training set.

- The weighted error of is applied to the training set to update all weights of all instances in the training data

- Overall effect: Place more weight on training examples that were previously misclassified

Adaboost

Input:

- Training data

- Initial weights

- Error function

Procedure:

1. for t in (1 to m iterations):

2. Apply learning algorithm to find such that

3. is minimized.

4. Calculate weighted error of ,

5. Update

6. Final ensemble classifier

Adaboost

Random Forests

- A Random Forest is an ensemble classifier that uses decision trees build on bagged training data as base classifiers

- The only difference between a Random Forest and a typical bagged tree classifier is that the base trees are randomly split at each node by randomly selecting features.

Random Forests

Procedure RandomForest():

1. Sample bootstraps from the training data

2. for each bootstrap sample in :

3. BuildRandomizedTree()

4.

Procedure BuildRandomizedTree():

1. Initialize root node of tree to be the whole space

2. At each node newly split:

3. Select random feature space of features

4. From , find a feature that best splits current node

Ensemble Learning and Random Forests

By Tzu-Li Tai

Ensemble Learning and Random Forests

Ensemble Learning and Random Forests, Machine Learning Lecture @ NCKU TechOrange, Date: 11/23

- 467