DeepMind Robotics (formerly known as Brain)

Foundation Models for Robotics

Andy Zeng

Round-table discussion with Science Robotics

Manipulation

TossingBot

Transporter Nets

Implicit Behavior Cloning

with machine learning from pixels

closed-loop vision-based policies

Interact with the physical world to learn bottom-up commonsense

i.e. "how the world works"

On the quest for shared priors

Interact with the physical world to learn bottom-up commonsense

with machine learning

i.e. "how the world works"

# Tasks

Data

Expectation

Reality

Complexity in environment, embodiment, contact, etc.

MARS Reach arm farm '21

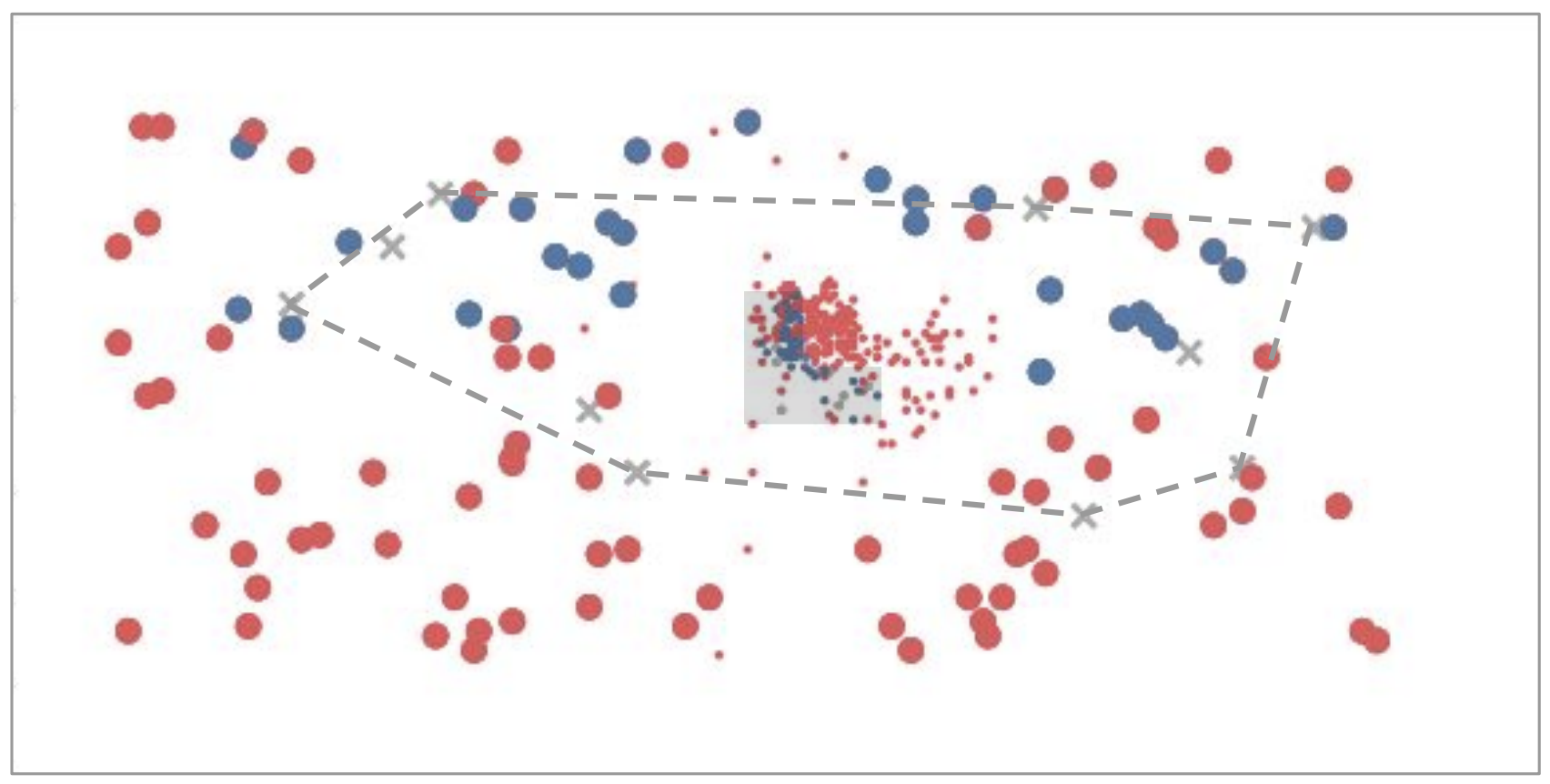

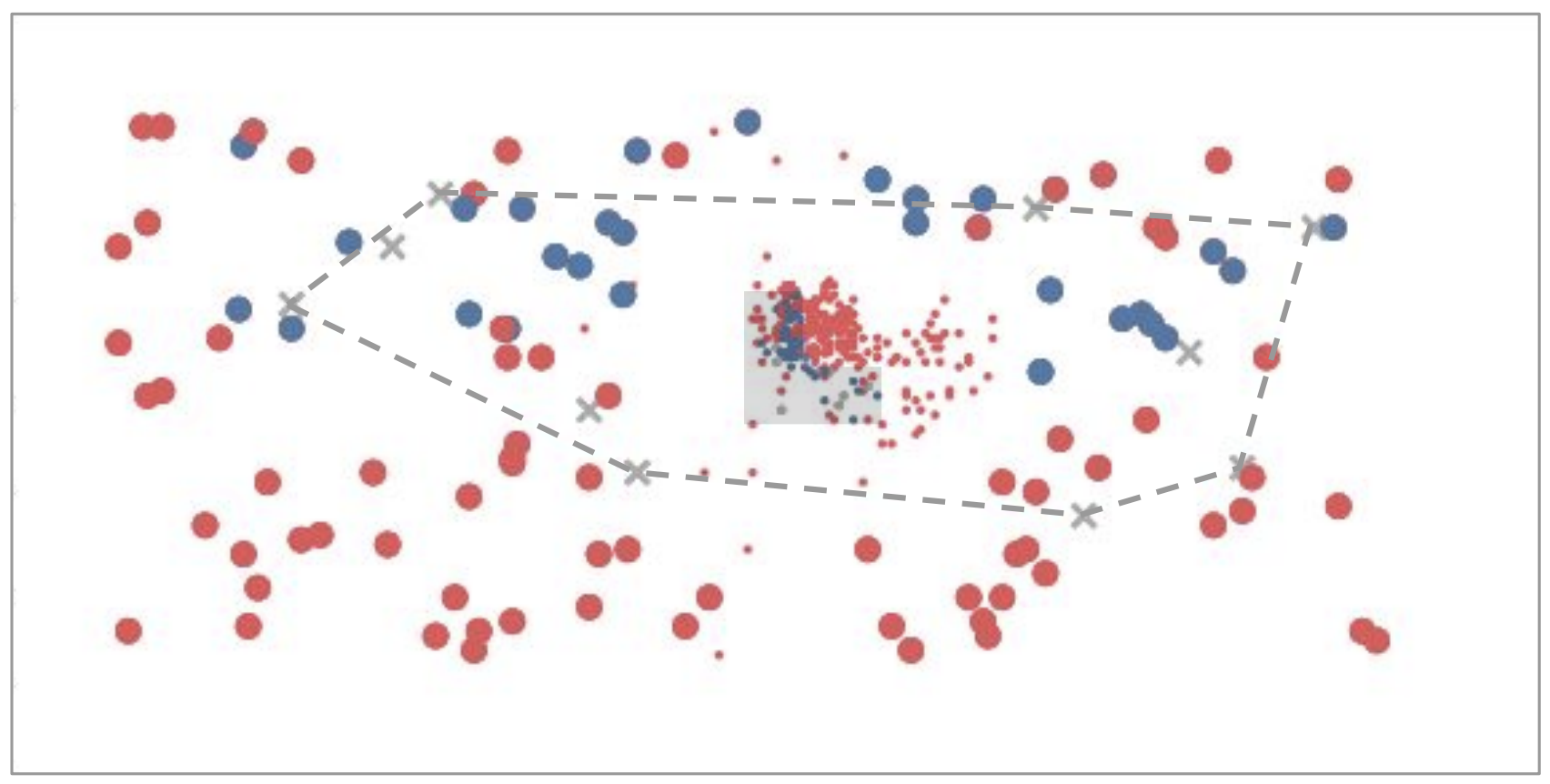

Machine learning is a box

Interpolation

Extrapolation

adapted from Tomás Lozano-Pérez

Internet

Meanwhile in NLP...

Large Language Models

Large Language Models?

Books

Recipes

Code

News

Articles

Dialogue

Demo

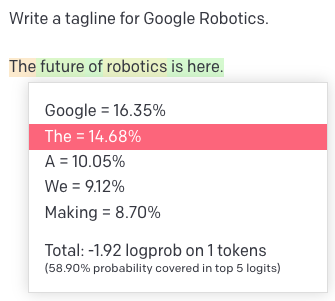

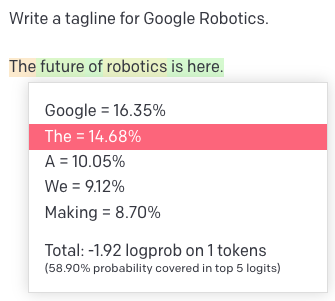

Quick Primer on Language Models

Tokens (inputs & outputs)

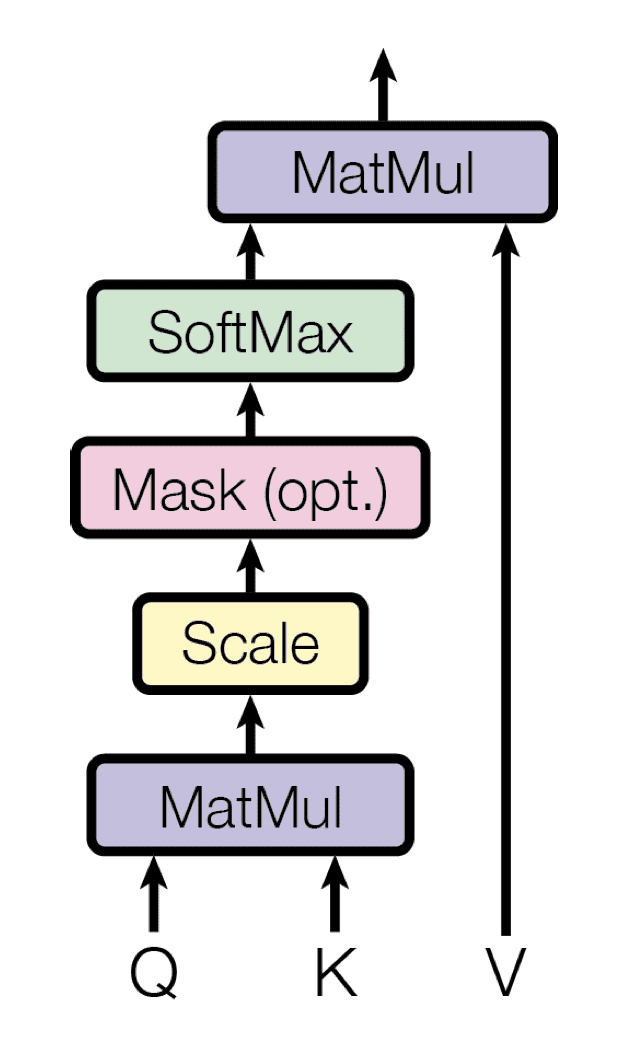

Transformers (models)

Attention Is All You Need, NeurIPS 2017

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, Illia Polosukhin

Pieces of words (BPE encoding)

per word: big, bigger, biggest, small, smaller, smallest

per token: big, er, est, small
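The per-word versus per-token contrast above can be made concrete with a small sketch. This is not the BPE merge-learning procedure itself: it assumes a hand-picked piece vocabulary (`big`, `small`, `er`, `est`) and splits each word by greedy longest match, falling back to single characters. The function name `segment` and the vocabulary are illustrative assumptions, not taken from the slides.

```python
def segment(word, vocab):
    """Split `word` into the longest pieces found in `vocab`.

    Falls back to a single character when no vocabulary piece matches.
    """
    pieces, i = [], 0
    while i < len(word):
        # Try the longest candidate first; j == i + 1 is the 1-char fallback.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces


WORDS = ["big", "bigger", "biggest", "small", "smaller", "smallest"]
PIECES = {"big", "small", "er", "est"}  # hand-picked for illustration

segmented = {w: segment(w, PIECES) for w in WORDS}
used_pieces = {p for parts in segmented.values() for p in parts}

for word, parts in segmented.items():
    print(word, "->", parts)
```

Note that `bigger` splits into `big`, `g`, `er` because of the doubled consonant, so this toy vocabulary needs one extra single-character piece. A learned BPE vocabulary would settle such cases from corpus statistics.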

Self-Attention
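As a rough illustration of self-attention, the sketch below computes scaled dot-product attention in plain Python. It assumes the simplest possible setting: the queries, keys, and values are the token vectors themselves, with no learned projection matrices, no multiple heads, and no masking. The toy vectors are made up.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token vectors
outputs, weights = self_attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1, so every output vector is a convex combination of the inputs.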

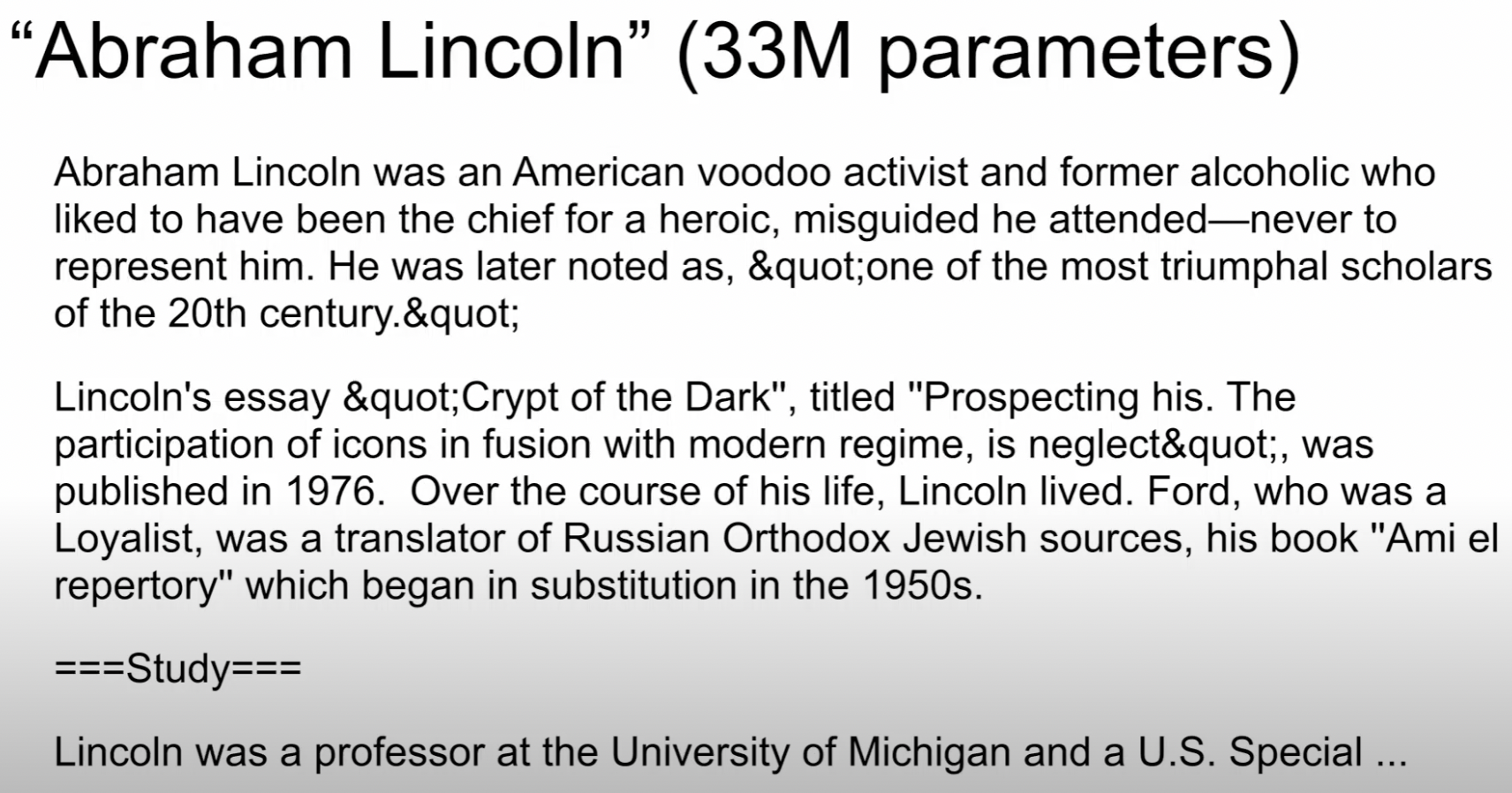

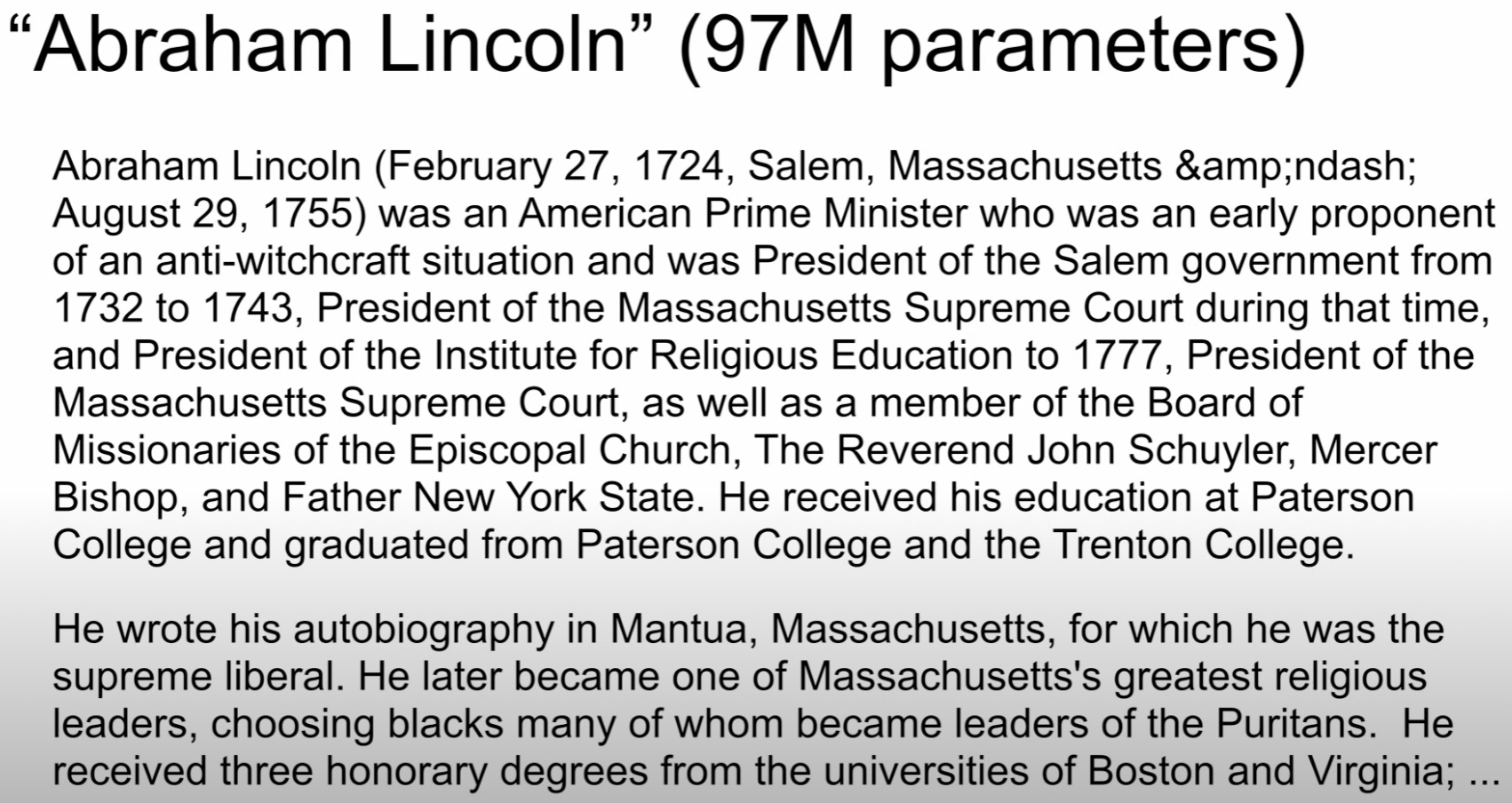

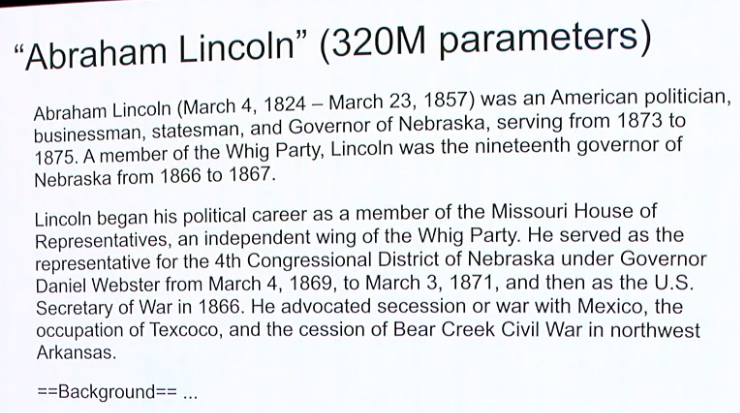

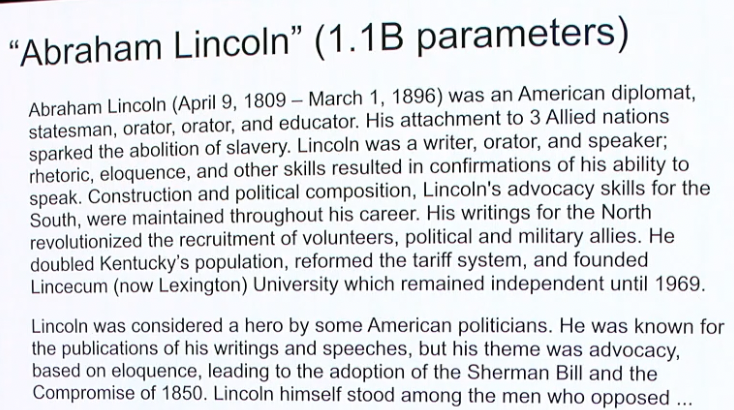

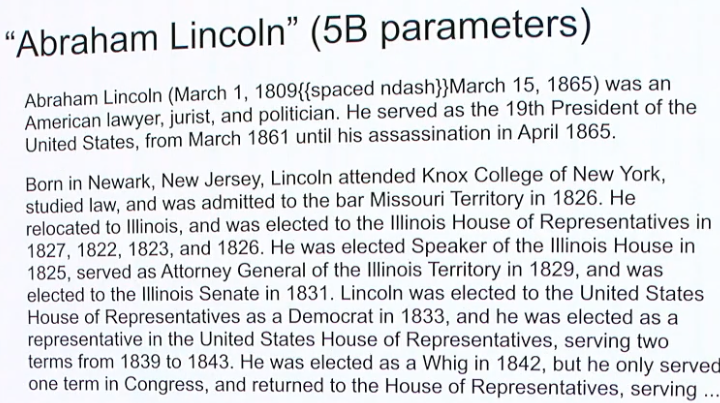

Bigger is Better

Neural Language Models: Bigger is Better, WeCNLP 2018

Noam Shazeer

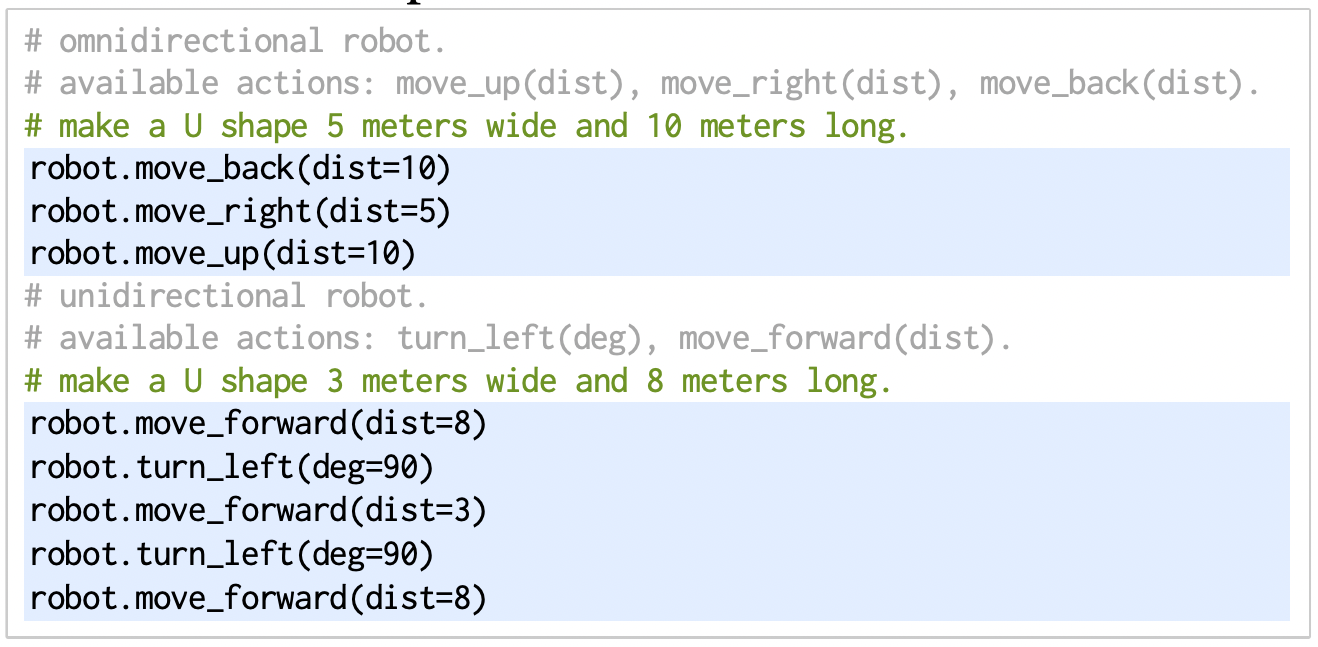

Robot Planning

Visual Commonsense

Robot Programming

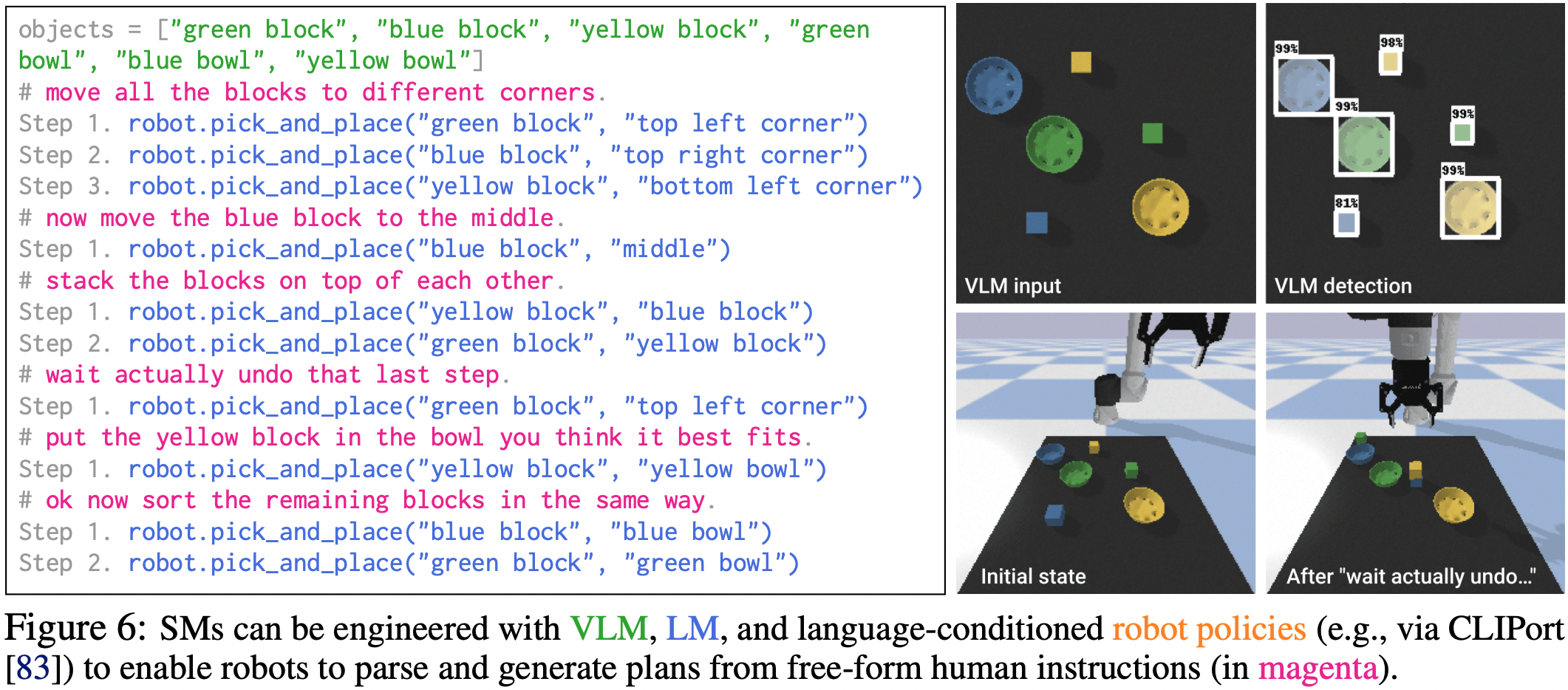

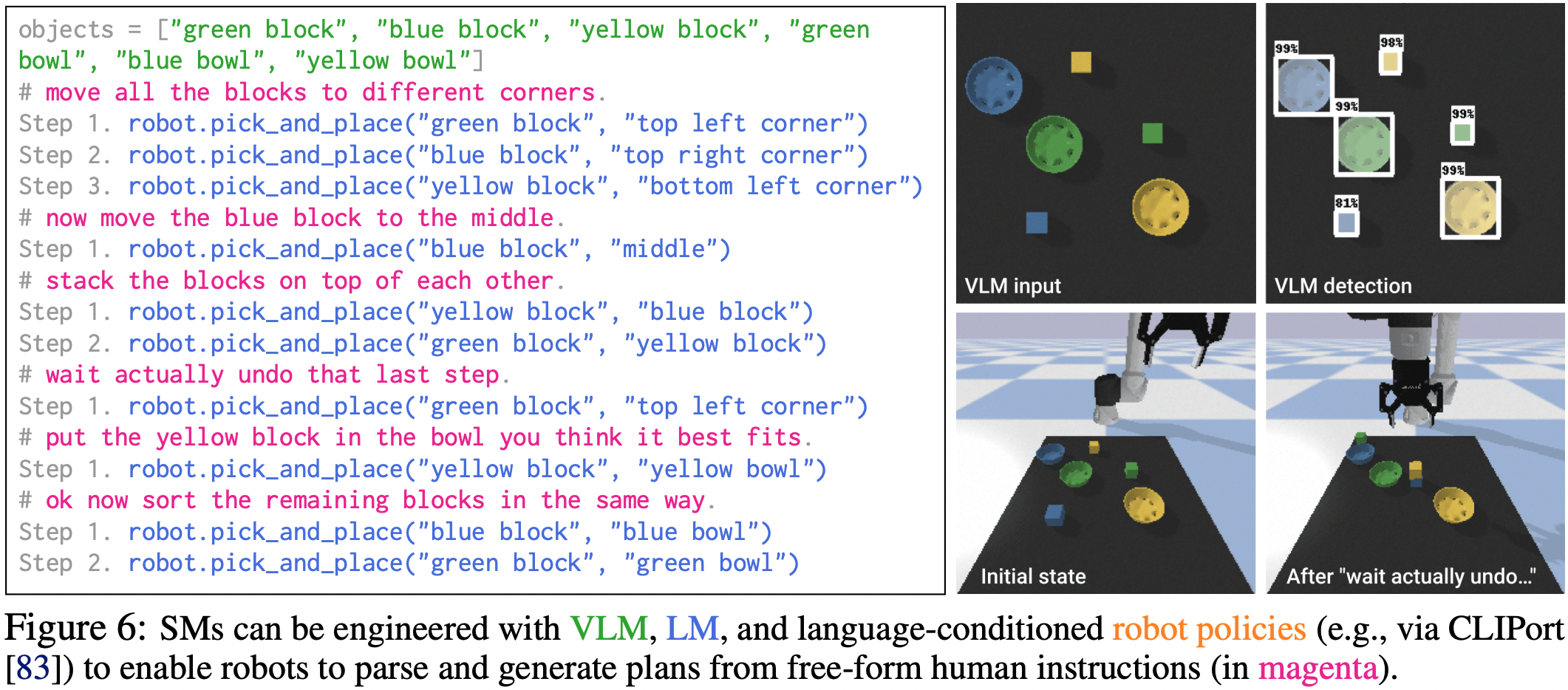

Socratic Models

Code as Policies

PaLM-SayCan

Demo

Somewhere in the space of interpolation

Lives

Socratic Models & PaLM-SayCan

One way to use Foundation Models with "language as middleware"

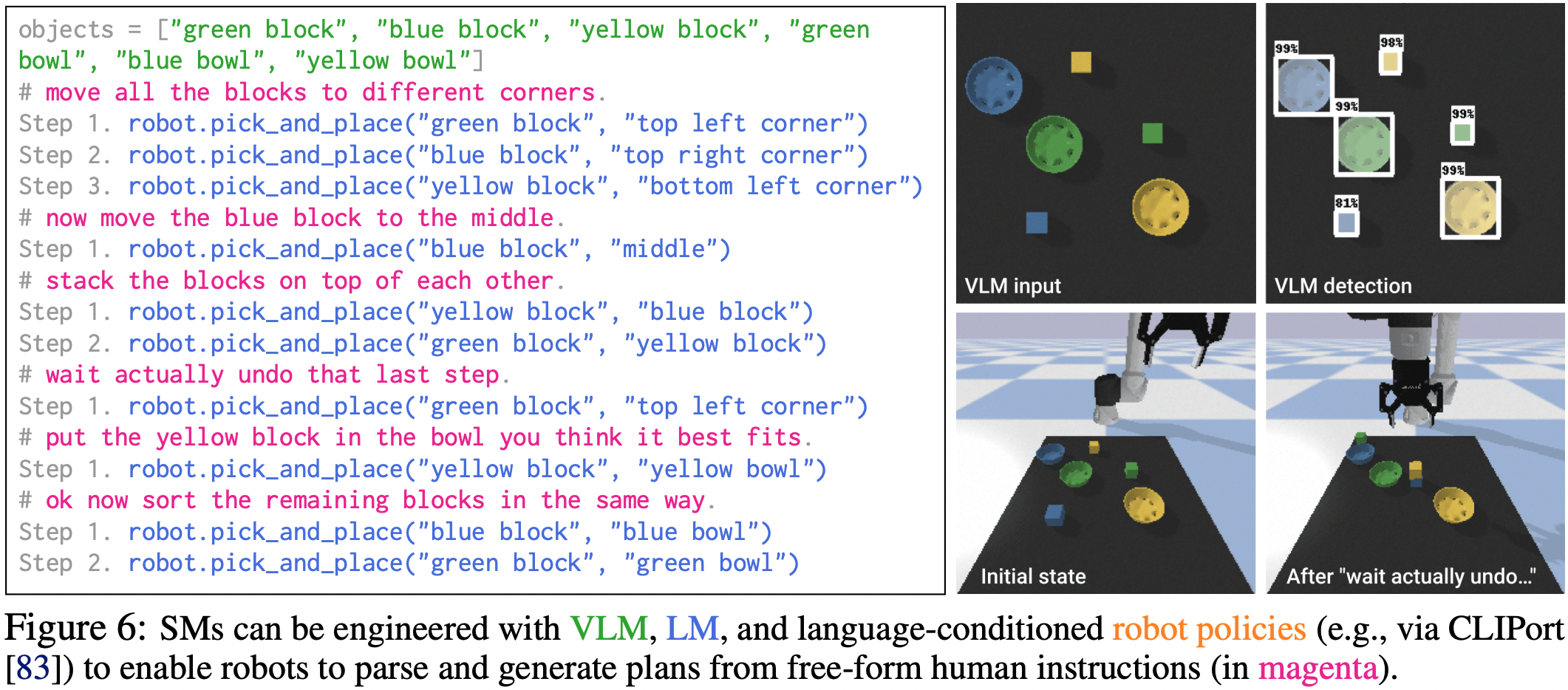

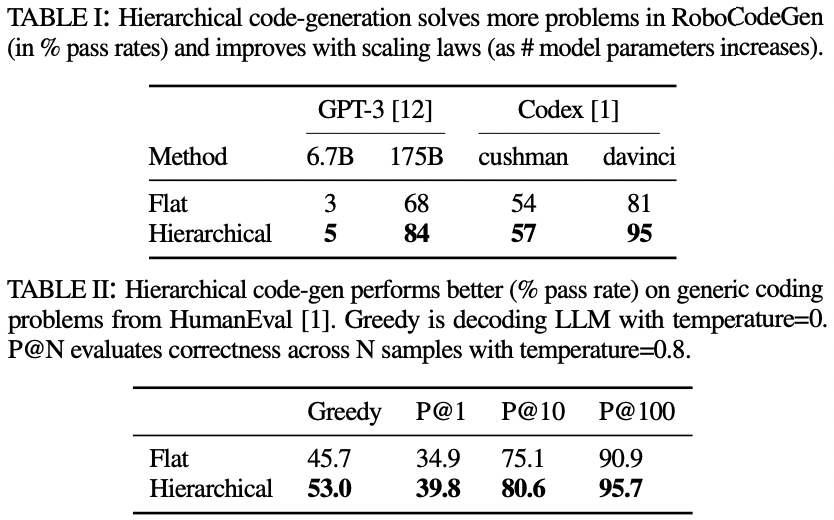

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

https://socraticmodels.github.io

Socratic Models & PaLM-SayCan

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

https://socraticmodels.github.io

Visual Language Model

CLIP, ALIGN, LiT, SimVLM, ViLD, MDETR

Human input (task)

Large Language Models for High-Level Planning

Language-conditioned Policies

Do As I Can, Not As I Say: Grounding Language in Robotic Affordances

say-can.github.io

Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents

wenlong.page/language-planner

One way to use Foundation Models with "language as middleware"
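The grounding idea behind "Do As I Can, Not As I Say" can be sketched as follows: a language model scores how useful each candidate skill is for the instruction, an affordance estimate scores how likely the skill is to succeed in the current state, and the robot picks the skill with the highest product. The function name `select_skill` and all numbers below are made up for illustration; in the real system the scores come from a language model and learned value functions.

```python
def select_skill(llm_scores, affordances):
    """Pick the skill maximizing (language-model score) x (affordance score)."""
    combined = {
        skill: llm_scores[skill] * affordances.get(skill, 0.0)
        for skill in llm_scores
    }
    best = max(combined, key=combined.get)
    return best, combined


# Instruction (hypothetical): "I spilled my drink, can you help?"
llm_scores = {  # how relevant the language model thinks each skill is
    "pick up the sponge": 0.6,
    "find a sponge": 0.3,
    "go to the table": 0.1,
}
affordances = {  # how feasible each skill is right now (no sponge in view)
    "pick up the sponge": 0.05,
    "find a sponge": 0.9,
    "go to the table": 0.9,
}

best_skill, combined = select_skill(llm_scores, affordances)
print(best_skill)
```

Here the language model alone would choose "pick up the sponge", but the low affordance score steers the choice to "find a sponge".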

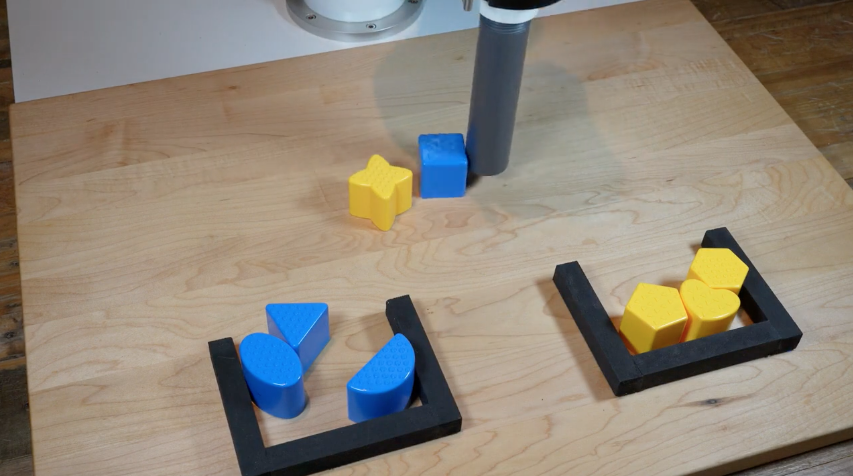

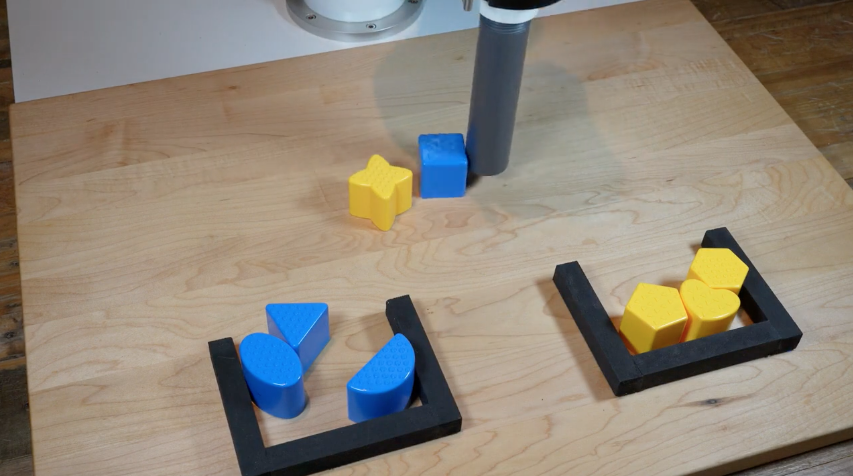

Socratic Models: Robot Pick-and-Place Demo

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

https://socraticmodels.github.io

For each step, predict pick & place:

Socratic Models & PaLM-SayCan

Open research problem, but here's one way to do it

Inner Monologue

Inner Monologue: Embodied Reasoning through Planning with Language Models

https://innermonologue.github.io
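A minimal sketch of the closed-loop idea: after each step, feedback is written back into a text history that the planner reads before choosing the next step. Everything here is a stand-in. The planner is a scripted function rather than a language model, the executor fails once on purpose, and the names `run_episode`, `make_scripted_planner`, and `make_flaky_executor` are hypothetical.

```python
def run_episode(plan_next_step, execute, max_steps=10):
    """Alternate planning and execution, logging feedback as text."""
    history = []
    for _ in range(max_steps):
        step = plan_next_step(history)
        if step == "done":
            break
        success = execute(step)
        history.append(f"Robot: {step}")
        history.append(f"Success: {success}")
    return history


def make_scripted_planner(steps):
    """Stand-in for a language model: retries a step until it succeeds."""
    def plan_next_step(history):
        # History alternates "Robot: ..." and "Success: ..." lines.
        completed = sum(1 for line in history[1::2] if line == "Success: True")
        return steps[completed] if completed < len(steps) else "done"
    return plan_next_step


def make_flaky_executor(fail_once):
    """Stand-in for the robot: each step in `fail_once` fails the first time."""
    failed = set()

    def execute(step):
        if step in fail_once and step not in failed:
            failed.add(step)
            return False
        return True
    return execute


planner = make_scripted_planner(["pick up the block", "place the block in the bowl"])
executor = make_flaky_executor({"pick up the block"})
history = run_episode(planner, executor)
print("\n".join(history))
```

The failed pick shows up in the history as text, and the planner responds by retrying before moving on.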

Limits of language as information bottleneck?

- Loses spatial (numerical) precision

- Highly (distributional) multimodal

- Not as information-rich as other modalities

- Only for high level? what about control?

Perception

Planning

Control

Socratic Models

Inner Monologue

PaLM-SayCan

Wenlong Huang et al, 2022

Imitation? RL?

Engineered?

Intuition and commonsense is not just a high-level thing

Can we extract these priors from language models?

Applies to low-level behaviors too

- spatial: "move a little bit to the left"

- temporal: "move faster"

- functional: "balance yourself"

Behavioral commonsense is the "dark matter" of robotics:

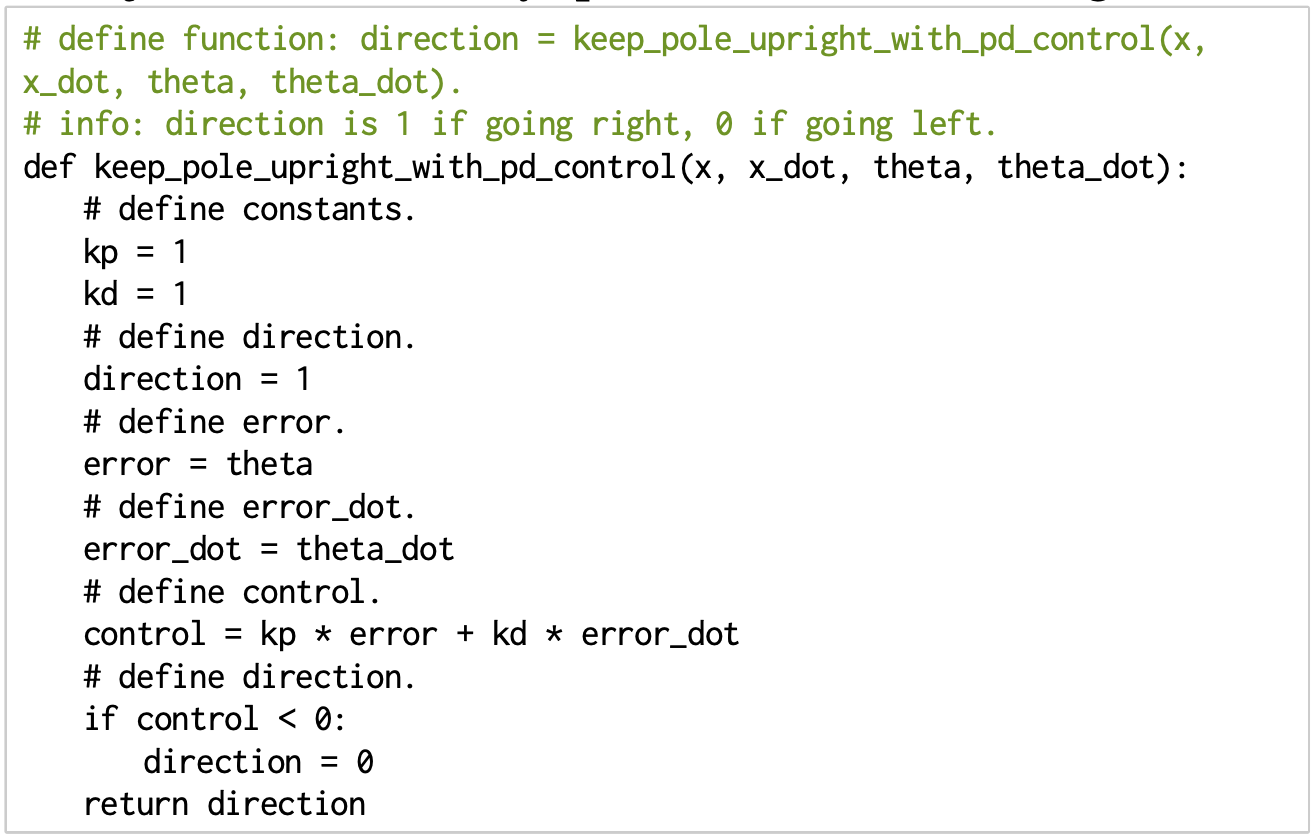

Language models can write code

Code as a medium to express low-level commonsense
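To illustrate how a vague spatial phrase can turn into code, here is a hand-written sketch of the kind of program a language model might produce for "move the red block a little bit to the left". The helpers `get_position` and `move_to` are hypothetical stand-ins for a robot API, the world state is a toy dictionary, and the choices that "a little bit" means 5 cm and "left" means negative x are assumptions of this sketch.

```python
# Toy world state: object name -> (x, y) position in meters (made-up values).
positions = {"red block": (0.40, 0.10)}


def get_position(name):
    """Hypothetical perception call: look up an object's position."""
    return positions[name]


def move_to(name, target):
    """Hypothetical control call: place the object at `target`."""
    positions[name] = target


# Instruction: "move the red block a little bit to the left"
# "a little bit" -> 0.05 m, "left" -> negative x (both assumed here).
x, y = get_position("red block")
move_to("red block", (x - 0.05, y))

print(positions["red block"])
```

The commonsense lives in the constants: nothing in the instruction says 5 cm, yet a sensible program has to commit to some small number.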

Live Demo

In-context learning is supervised meta learning

Trained with autoregressive models via "packing"

Better with non-recurrent autoregressive sequence models

Transformers at a certain scale can generalize to the unseen (i.e. new tasks)

"Data Distributional Properties Drive Emergent In-Context Learning in Transformers" Chan et al., NeurIPS '22

"General-Purpose In-Context Learning by Meta-Learning Transformers" Kirsch et al., NeurIPS '22

"What Can Transformers Learn In-Context? A Case Study of Simple Function Classes" Garg et al., '22

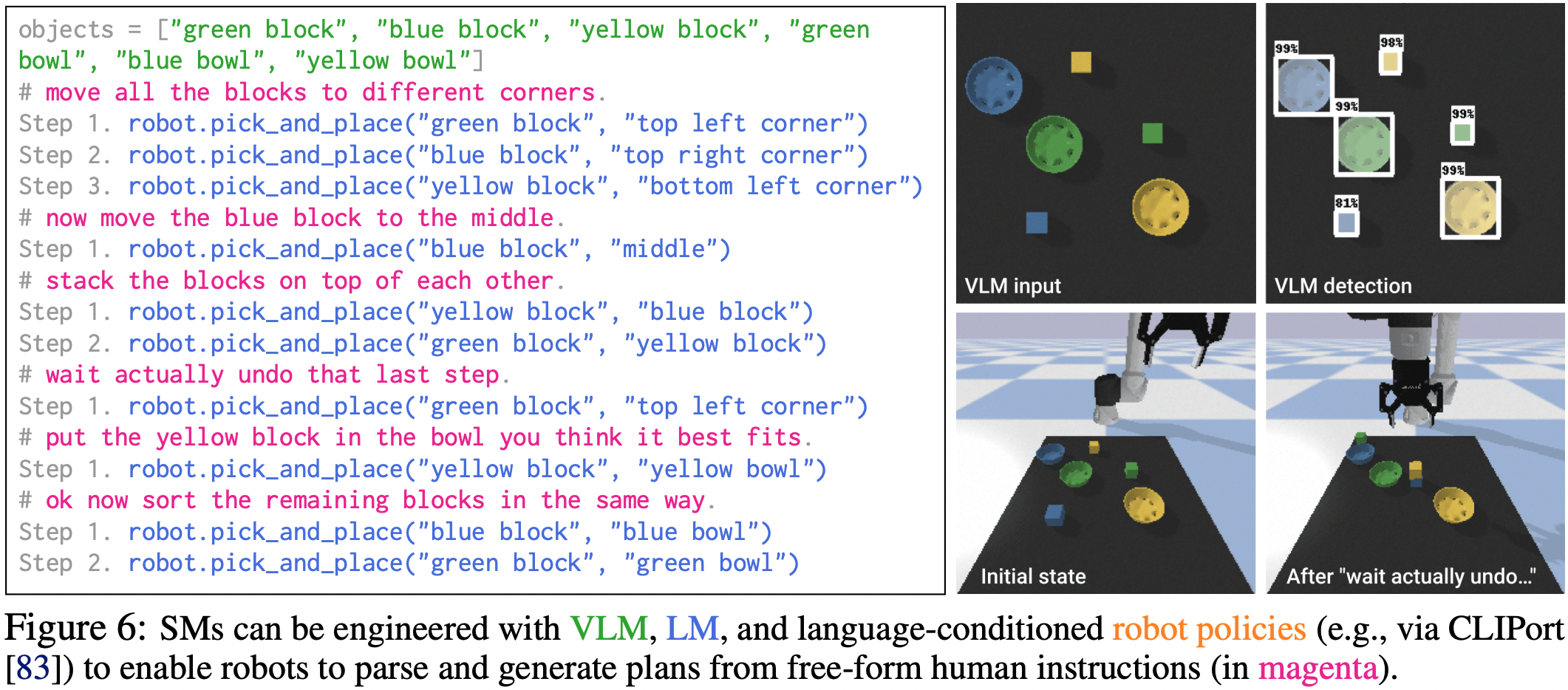

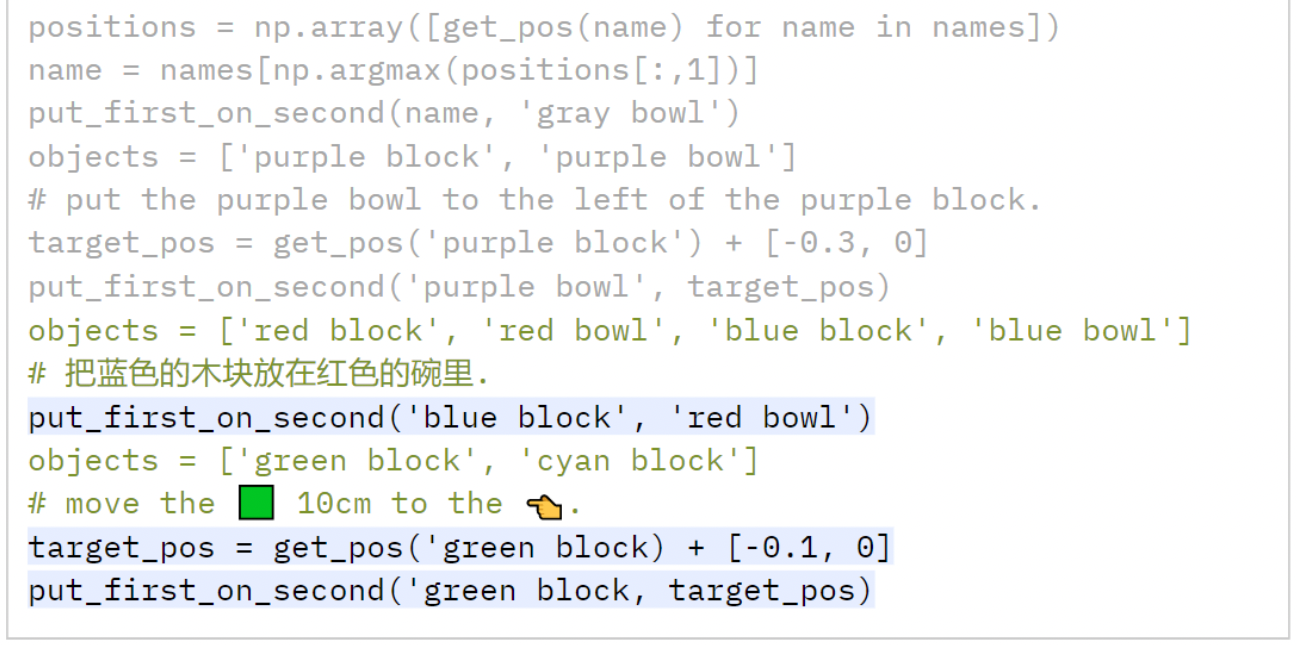

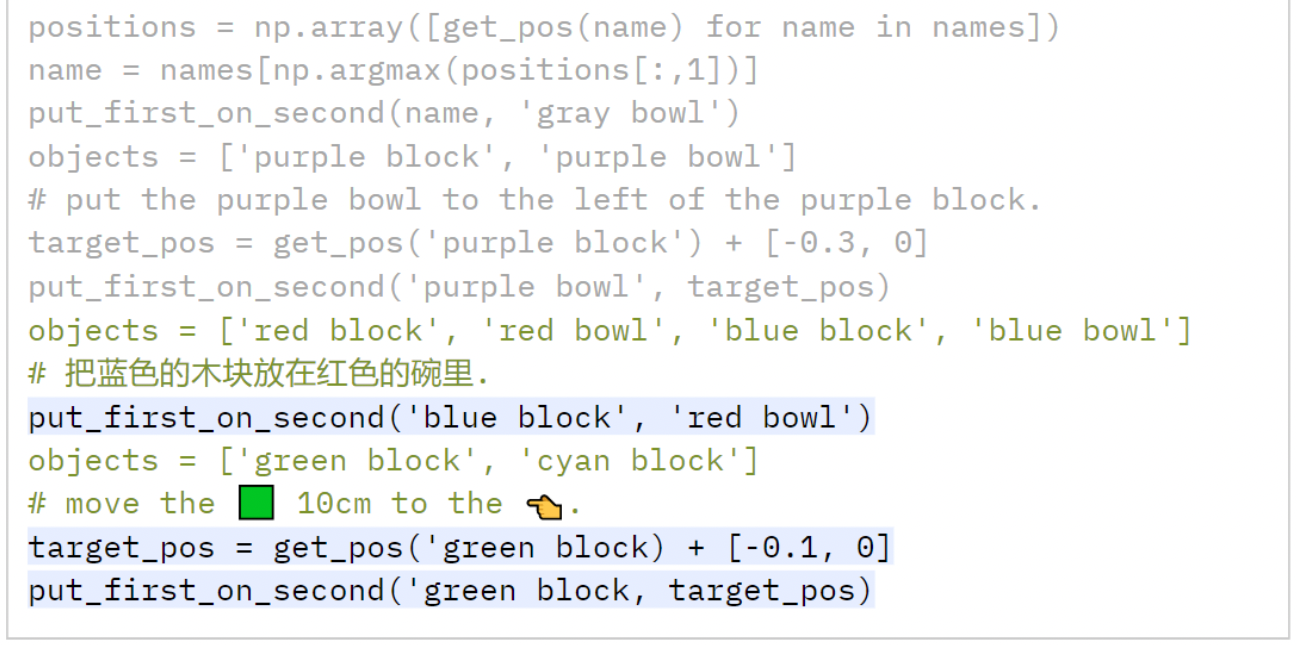
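One reading of "packing" in the in-context learning slide is that several input-output examples from the same task are concatenated into a single sequence, so that ordinary next-token training on each output is conditioned on the earlier examples. The sketch below builds such a sequence and lists the supervised targets. The task (y = 3x), the tagged-tuple token format, and the function names are assumptions for illustration only.

```python
def pack_examples(pairs):
    """Concatenate (x, y) examples from one task into a single sequence."""
    sequence = []
    for x, y in pairs:
        sequence.extend([("x", x), ("y", y)])
    return sequence


def training_targets(sequence):
    """For every y in the sequence, return (context before it, y)."""
    targets = []
    for i, (kind, value) in enumerate(sequence):
        if kind == "y":
            targets.append((sequence[:i], value))
    return targets


# Toy task: y = 3x. Later predictions see more solved examples in context.
pairs = [(1, 3), (2, 6), (4, 12)]
sequence = pack_examples(pairs)
targets = training_targets(sequence)

for context, y in targets:
    print(len(context), "context tokens ->", y)
```

The last target has two solved examples in its context, which is the supervised meta-learning view: each packed sequence is a small task, and the model is trained to use the earlier pairs to predict the later ones.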

Language models can write code

Code as a medium to express more complex plans

Jacky Liang, Wenlong Huang, Fei Xia, Peng Xu, Karol Hausman, Brian Ichter, Pete Florence, Andy Zeng

code-as-policies.github.io

Code as Policies: Language Model Programs for Embodied Control

Live Demo

https://youtu.be/byavpcCRaYI

Outstanding Paper Award in Robot Learning Finalist, ICRA 2023

use NumPy, SciPy code...

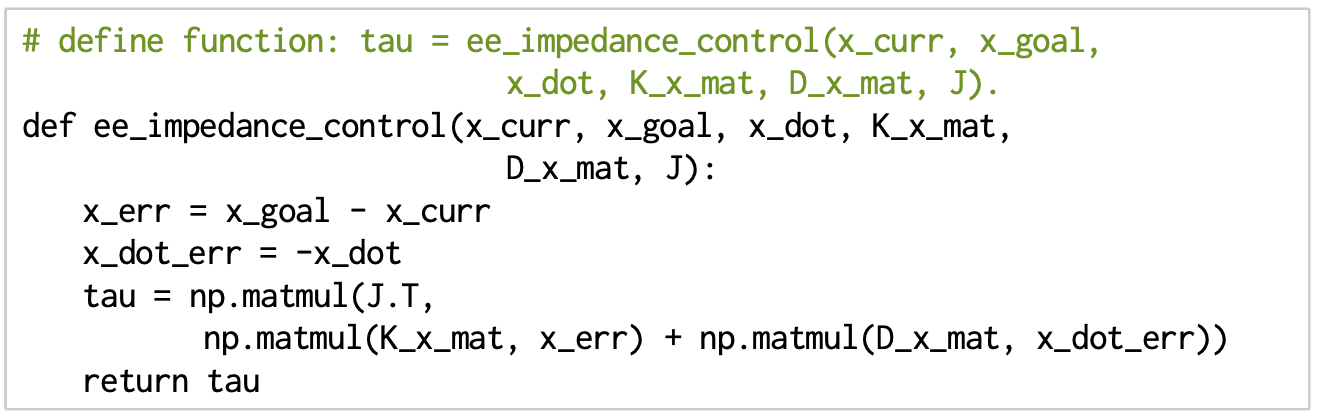

- PD controllers

- impedance controllers
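For readers unfamiliar with the controllers listed above, here is a hand-written sketch of a PD controller, the kind of short program the slide suggests a language model can write. It assumes a one-dimensional unit point mass, made-up gains, and simple Euler integration. It is not code from the paper.

```python
def pd_control(position, velocity, target, kp, kd):
    """PD law: pull toward the target, damp proportionally to velocity."""
    return kp * (target - position) - kd * velocity


def simulate(target=1.0, kp=20.0, kd=8.0, dt=0.01, steps=500):
    """Drive a unit point mass from rest at 0 toward `target`."""
    position, velocity = 0.0, 0.0
    for _ in range(steps):
        accel = pd_control(position, velocity, target, kp, kd)  # mass = 1
        velocity += accel * dt
        position += velocity * dt
    return position


final_position = simulate()
print(round(final_position, 4))
```

With these gains the mass settles at the target well within the simulated five seconds. An impedance controller has the same spring-damper form, applied to the relation between force and motion at the end effector.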

What are the foundation models for robotics?

Extensions to Code as Policies

1. Fuse visual-language features into robot maps

2. Use code as policies to do spatial reasoning for navigation

"Visual Language Maps" Chenguang Huang et al., ICRA 2023

"Audio Visual Language Maps" Chenguang Huang et al., 2023

Fuse all the modalities! (including audio)

(i.e. semantic memory with Foundation Models)
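The two extensions above can be sketched together: store a feature vector per map cell, then answer a language query by finding the cell whose features best match the query embedding. This is a toy under strong assumptions. The 3-dimensional vectors are made up, a real system would take both map features and text embeddings from a visual-language model, and the names `cosine` and `locate` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def locate(feature_map, text_embedding):
    """Return the map cell whose features are most similar to the query."""
    return max(feature_map, key=lambda cell: cosine(feature_map[cell], text_embedding))


# Grid cell (row, col) -> fused visual-language feature (made-up values).
feature_map = {
    (0, 0): [0.9, 0.1, 0.0],
    (0, 1): [0.1, 0.9, 0.0],
    (1, 0): [0.0, 0.2, 0.9],
}
# Made-up text embeddings for two landmark names.
text_embeddings = {"sofa": [1.0, 0.0, 0.0], "sink": [0.0, 0.0, 1.0]}

sofa_cell = locate(feature_map, text_embeddings["sofa"])
sink_cell = locate(feature_map, text_embeddings["sink"])
print(sofa_cell, sink_cell)
```

Generated navigation code could then call a lookup like `locate` and do arithmetic on the returned coordinates, for example to move to a point between two landmarks.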

Scaling robotics with Foundation Models

Robot Learning

Not a lot of robot data

Lots of Internet data

500 expert demos

5000 expert demos

50 expert demos

Scaling robotics with Foundation Models

Foundation Models

PaLM-sized robot dataset = 100 robots for 24 yrs

Robot Learning

Foundation Models

- Finding other sources of data (sim, YouTube)

- Improve data efficiency with prior knowledge

Not a lot of robot data

Lots of Internet data

Embrace foundation models to help close the gap!

256K token vocab w/ word embedding dim = 18,432

PaLM-sized robot dataset = 100 robots for 24 yrs

collecting (mostly) diverse data
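The slides do not show how the "100 robots for 24 yrs" figure was derived. As a back-of-the-envelope check, the sketch below asks what data rate per robot that figure would imply, assuming the target is PaLM's reported training set of 780 billion tokens and that robots collect around the clock. Both assumptions are mine, not the slide's.

```python
PALM_TRAINING_TOKENS = 780e9        # from the PaLM paper, not from the slide
ROBOTS = 100
YEARS = 24
SECONDS_PER_YEAR = 365 * 24 * 3600  # assumes nonstop collection

robot_seconds = ROBOTS * YEARS * SECONDS_PER_YEAR
implied_tokens_per_robot_second = PALM_TRAINING_TOKENS / robot_seconds

print(f"{robot_seconds:.3e} robot-seconds")
print(f"{implied_tokens_per_robot_second:.1f} tokens per robot per second")
```

Under these assumptions the slide's figure corresponds to roughly ten tokens per robot per second, which would be consistent with counting one token per control step at about 10 Hz. That interpretation is a guess.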

Scaling robotics with Foundation Models

Phase 1

Build with off-the-shelf models

< 100 robots

Phase 2

Finetune off-the-shelf models

100 < number of robots < 1000

Phase 3

Train our own models

1000+ robots (over 2+ yrs)

Foundation Models

Embrace foundation models to help close the gap!

Building PaLM-E: An Embodied Multimodal Language Model

Finetuning pre-trained models helps

Larger models retain pre-trained performance

Limited compute budget (1k TPU chips for 10 days), higher learning rates, small batch sizes

Closing Thoughts

Thank you!

Pete Florence

Adrian Wong

Johnny Lee

Vikas Sindhwani

Stefan Welker

Vincent Vanhoucke

Kevin Zakka

Michael Ryoo

Maria Attarian

Brian Ichter

Krzysztof Choromanski

Federico Tombari

Jacky Liang

Aveek Purohit

Wenlong Huang

Fei Xia

Peng Xu

Karol Hausman

and many others!

2023-Science-Robotics-talk

By Andy Zeng
