What This Talk Is

- Practical workflows for agent-assisted testing

- Framework-aware prompting

- BDD as guardrails

- TestBox 7 feedback loops

What This Talk Isn't

- Vibe coding

- Agent-specific advice

- A silver bullet for test quality

- A deep dive into TestBox 7

- A new testing framework to learn

- Letting the intern merge to `main`

AI, make me this awesome application. Don't forget the tests.

The problem with trusting AI to come up with test cases

Tests have two jobs

Confirm behavior

Does the system do what users, maintainers, and the business expect?

Communicate intent

Can the next developer understand what matters without reverse-engineering the implementation?

That next developer may be an AI agent.

Agentic workflows make both jobs more important.

This is our job as developers

Prompt:

Don't forget tests

Prompt:

Implement the body of the specs I added

Prompt:

Here is the business user story. Generate specs based on this user story. Do not start implementation yet.

Prompt:

Scan the codebase for any logic errors. Create test cases that show the failures.

BDD refresher

BDD is not “describe / it” syntax

Observable Behavior

The test cares what happens, not how the object happened to do it.

Shared Language

The test names sound like the domain, not the implementation.

Concrete Examples

Examples clarify boundaries better than abstract requirements.
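As a minimal sketch of those three ideas, here is an example written in plain Python rather than TestBox syntax. It uses the session-submission rule from the demo later in this talk. The `Session` class, its field names, `submit()`, `SubmissionError`, and the `"submitted"` status are all hypothetical. The spec names read in domain language, each spec uses a concrete example, and the assertions check only the observable outcome (the resulting status or the error), never how the object got there.

```python
from dataclasses import dataclass

# Hypothetical rule: these fields must be filled in before submission.
REQUIRED_FIELDS = ("title", "abstract", "speaker", "category")


class SubmissionError(Exception):
    """Raised when a session cannot be submitted (hypothetical)."""


@dataclass
class Session:
    # Hypothetical model: field names mirror the submission rule.
    title: str = ""
    abstract: str = ""
    speaker: str = ""
    category: str = ""
    status: str = "draft"  # every session starts as a draft

    def submit(self) -> None:
        # Collect every required field that is empty or whitespace-only.
        missing = [name for name in REQUIRED_FIELDS if not getattr(self, name).strip()]
        if missing:
            raise SubmissionError("missing: " + ", ".join(missing))
        self.status = "submitted"


# Spec names describe behavior in domain terms; bodies use concrete examples.
def test_a_new_session_starts_as_a_draft():
    assert Session().status == "draft"


def test_a_complete_session_can_be_submitted():
    session = Session("Agentic BDD", "Testing with AI", "Eric Peterson", "Testing")
    session.submit()
    assert session.status == "submitted"


def test_a_session_without_an_abstract_stays_a_draft():
    session = Session("Agentic BDD", "", "Eric Peterson", "Testing")
    try:
        session.submit()
    except SubmissionError as error:
        assert "abstract" in str(error)
    else:
        raise AssertionError("expected SubmissionError")
    # Observable outcome: the status did not change.
    assert session.status == "draft"


if __name__ == "__main__":
    for spec in (
        test_a_new_session_starts_as_a_draft,
        test_a_complete_session_can_be_submitted,
        test_a_session_without_an_abstract_stays_a_draft,
    ):
        spec()
        print("PASS", spec.__name__)
```

Note that nothing here asserts which private method ran or in what order; if the implementation changes, these specs only break when the behavior does.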

BDD Fundamentals

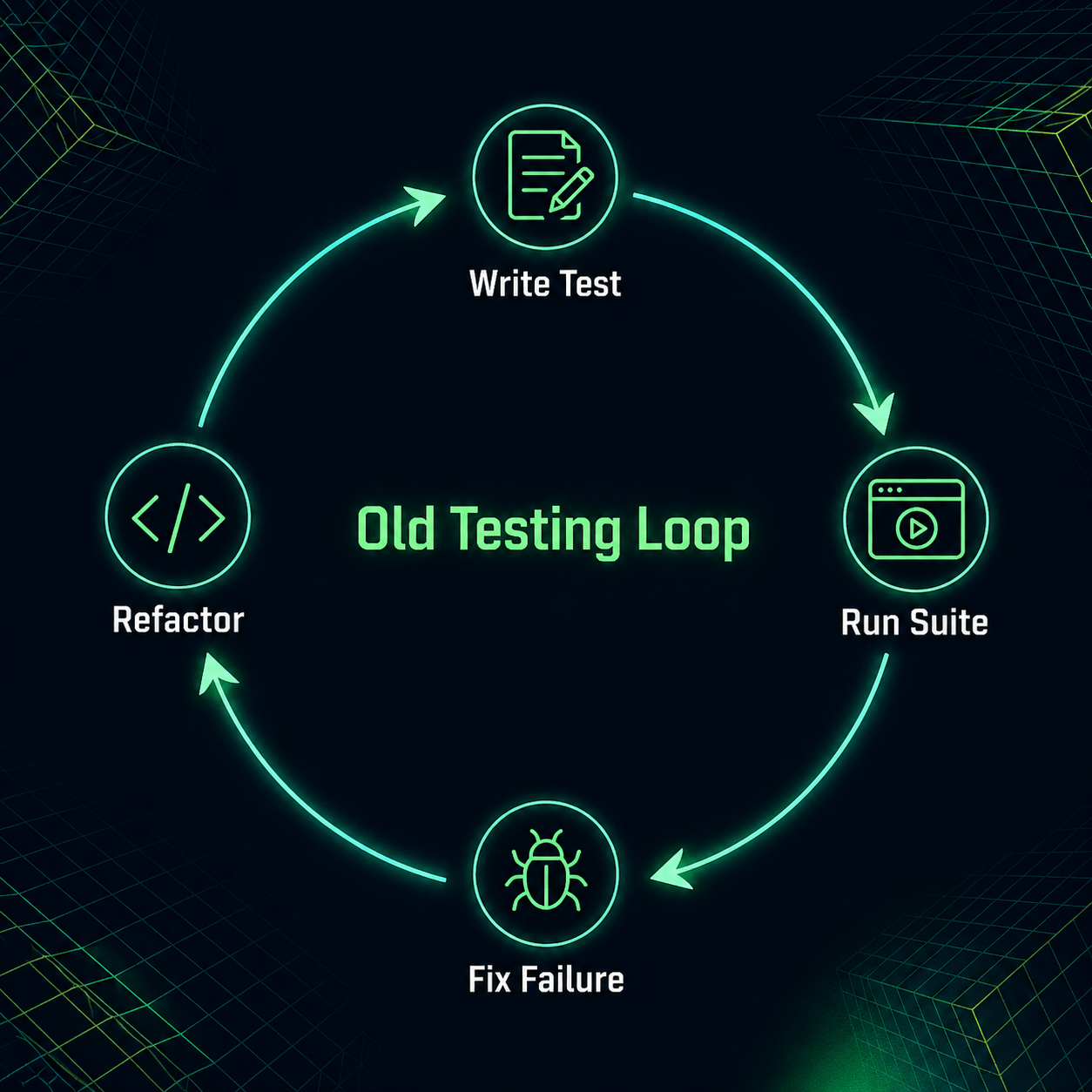

The Old Testing Loop

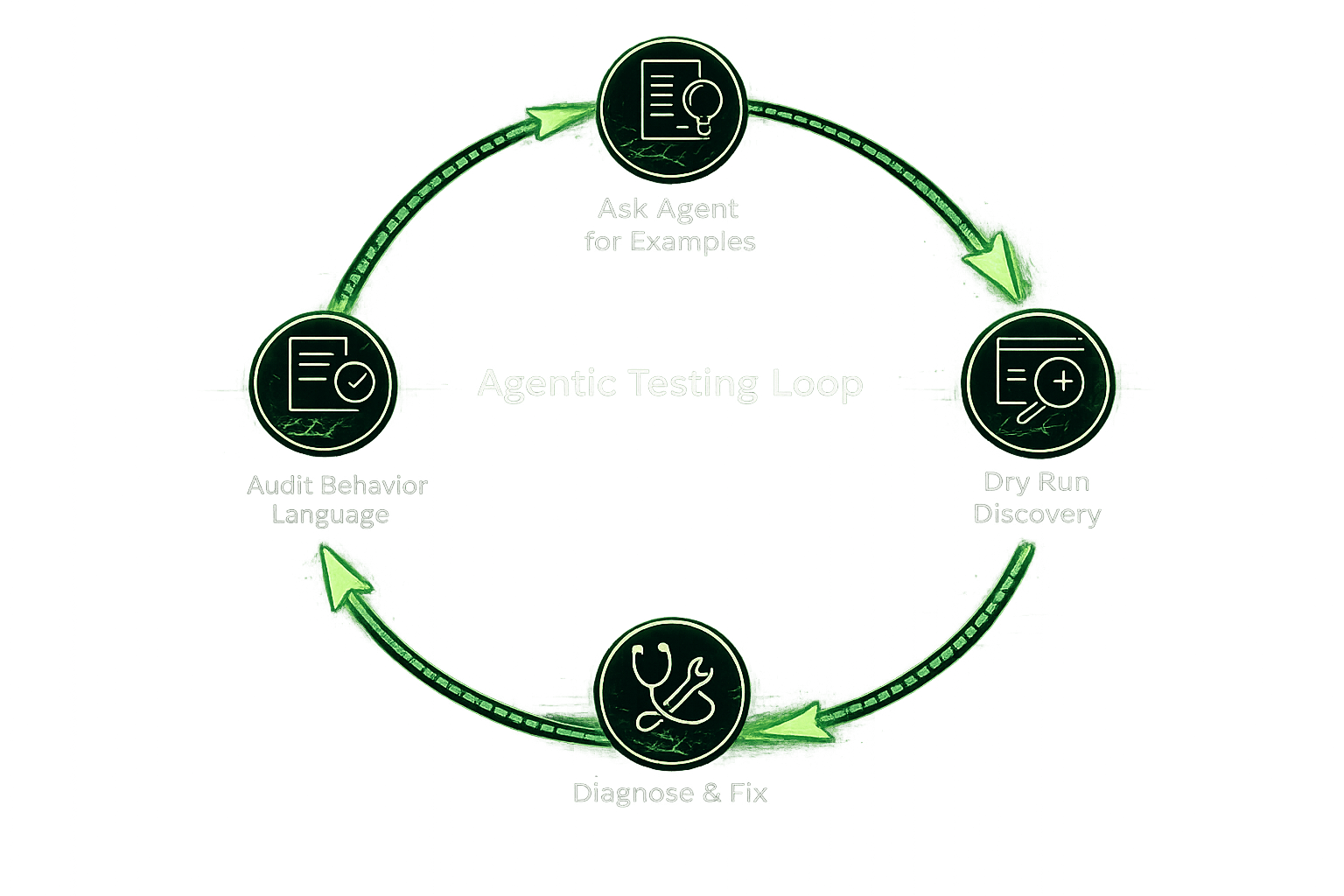

The Agentic Testing Loop

Tools

Test Streaming

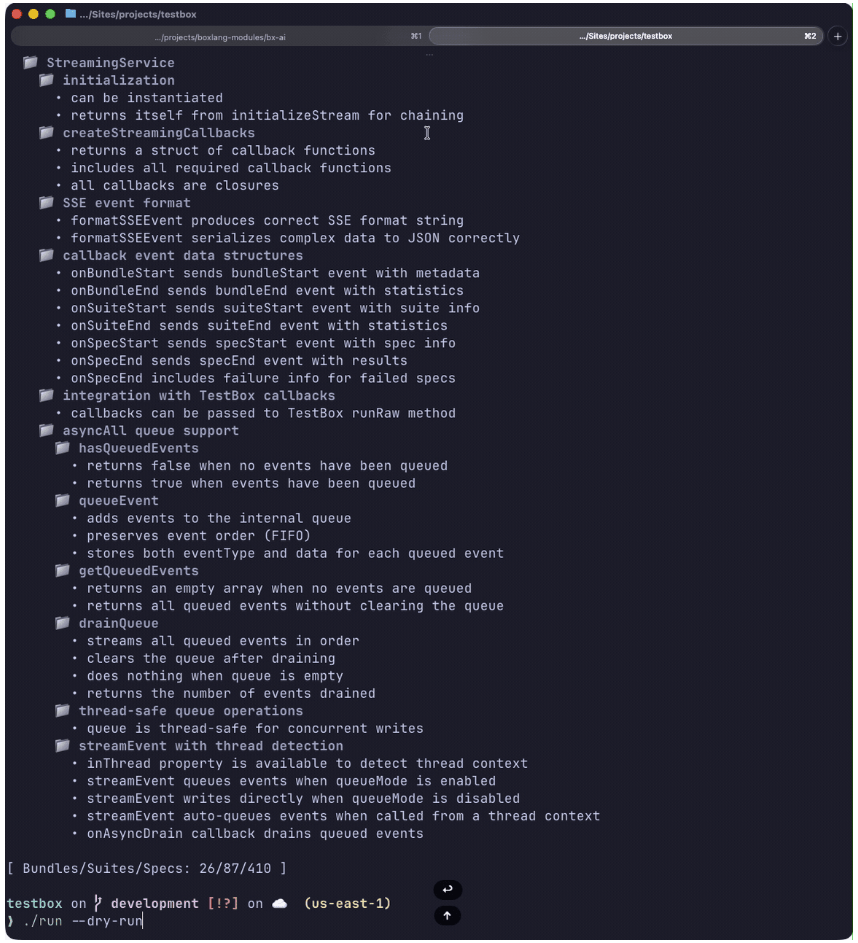

`./testbox/run --stream`

Dry Run

`./testbox/run --dry-run`

Enhanced CLI Filters

Focus on Failures

`./testbox/run --show-failed-only`

Stack Trace Control

`./testbox/run --stacktrace=full`

Output & Performance Flags

```shell
# Suppress passing or skipped specs
./testbox/run --show-passed=false
./testbox/run --show-skipped=false

# Abort after N failures
./testbox/run --max-failures=10

# Flag slow specs
./testbox/run --slow-threshold-ms=500

# Report the N slowest specs at the end
./testbox/run --top-slowest=5
```

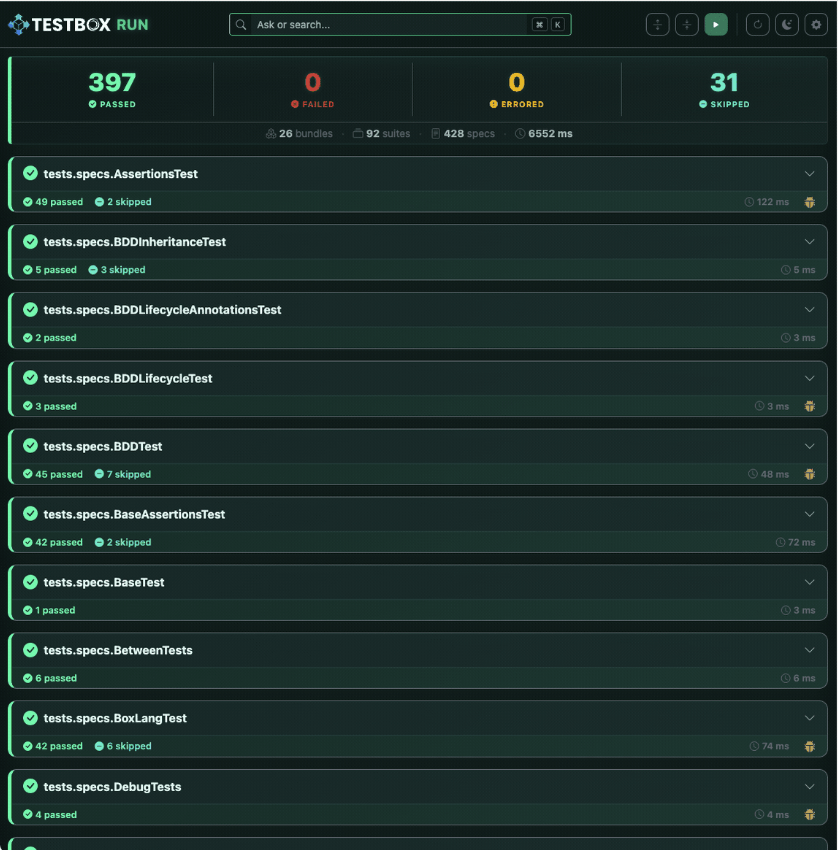

TestBox RUN

- Run and explore tests through a self-hosted web UI

- Real-time streaming results as specs execute (powered by TestBox 7 streaming)

- Navigate and execute individual bundles or specs interactively

- Visual test tree + progress feedback for quick inspection and debugging

Because AI can't be the only thing to get the new shiny

Agentic ColdBox

`box coldbox ai install`

Guidelines

"How to behave"

Global or scoped instructions that shape how the AI should respond.

Agents

"The AI Controller layer"

Uses skills and obeys guidelines to accomplish a goal.

Skills

"What can I do"

Discrete, reusable units of capability. Often include instructions and examples.

Agentic ColdBox

Guidelines

- Use qb, not raw SQL

- Return valid JSON

- Follow ColdBox conventions

Agents

Build a new API endpoint

Skills

- Generate handler

- Create route

- Write TestBox spec

Agentic ColdBox

Agents use skills while following guidelines.

Agentic ColdBox

Add and Create Guidelines

`coldbox ai guidelines add qb`

`coldbox ai guidelines create`

`coldbox ai guidelines override coldbox`

Agentic ColdBox

Install and Create Skills

`coldbox ai skills install ortus-boxlang/skills/boxlang-developer/file-handling`

`coldbox ai skills create`

Agentic ColdBox

cbMCP

Turn your app into a live, AI-queryable backend for building, debugging, and testing.

- Query your live ColdBox app: routes, handlers, WireBox, and config

- Context-aware AI code generation (no more generic guesses)

- Debug with real runtime insight, not just static analysis

- Stay in sync automatically as your code changes

- Expose your app as an MCP server for any AI tool to plug into

`box coldbox ai mcp install`

Demo Overview

Conference Session Review

Key Business Rules

- A session starts as `draft`

- Submission requires title, abstract, speaker, and category

- The average score uses completed reviews only

- Accepted / waitlisted / rejected status depends on score thresholds

- Incomplete reviews must not affect final decisions
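The scoring rules can be pinned down the same way. This Python sketch assumes hypothetical names (`Review`, `average_score`, `decide`) and hypothetical thresholds (accept at an average of 4.0 or above, waitlist at 3.0 or above, otherwise reject); the demo repo defines its own values. Raising an error when no review is complete is also an assumption. The behaviors it encodes come straight from the rules above: only completed reviews count toward the average, so an incomplete review cannot change the decision.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

# Hypothetical thresholds, for illustration only.
ACCEPT_AT = 4.0
WAITLIST_AT = 3.0


@dataclass(frozen=True)
class Review:
    score: int
    completed: bool


def average_score(reviews: Sequence[Review]) -> Optional[float]:
    """Average of completed reviews only; None if none are completed."""
    scores = [review.score for review in reviews if review.completed]
    if not scores:
        return None
    return sum(scores) / len(scores)


def decide(reviews: Sequence[Review]) -> str:
    """Map the completed-review average onto a decision."""
    average = average_score(reviews)
    if average is None:
        # Assumption: no decision is possible until a review is completed.
        raise ValueError("cannot decide without completed reviews")
    if average >= ACCEPT_AT:
        return "accepted"
    if average >= WAITLIST_AT:
        return "waitlisted"
    return "rejected"


if __name__ == "__main__":
    completed = [Review(5, True), Review(4, True)]
    # An incomplete review with a low score must not change the outcome.
    with_incomplete = completed + [Review(1, False)]
    print(decide(completed), decide(with_incomplete))  # accepted accepted
```

Boundary cases such as an average of exactly 4.0 or exactly 3.0, or a session whose reviews are all incomplete, are the concrete examples worth turning into named specs.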

The AI output is pre-generated

During prep

Ran the full Codex workflow and saved the useful prompts and responses.

During this talk

Show the prompts and paste the saved interaction, then run the local repo commands.

Every “AI moment” has a receipt

`.ai/prompts/01-generate-first-spec.md`

`.ai/responses/01-generate-first-spec.md`

`.ai/prompts/02-audit-before-running.md`

`.ai/responses/02-audit-before-running.md`

`.ai/prompts/03-fix-bad-test-smells.md`

`.ai/responses/03-fix-bad-test-smells.md`

Task Runners let us explore step-by-step

`box task run demo`

Grab Bag

- Push back on agents when you know the implementation or process is wrong

- Save your tokens for the tedious work, not the thoughtful work

- Ask your agents to "remember" things, or add guidelines yourself

- If you find yourself writing the same prompts over and over, write them down in a skill

- Pull request reviews are more important than ever

- Make sure the tests make sense to you and the business

- Pit agents against each other: have GitHub Copilot review Claude

- Make a skill to address PR feedback and upload it to skills.boxlang.io

- Have another human review the PR as well when possible

Thank You

Agentic BDD — Testing in the Age of AI Pair Programmers

By Eric Peterson
