Presenter: Jakob

Theory journal club, 07.01.2022

NeurIPS 2020

Do brains and CNNs use the same (or at least similar) decision strategies?

- classification accuracy is not sufficient to decide (in particular when accuracy is high): "two systems may achieve similar accuracy with very different strategies"

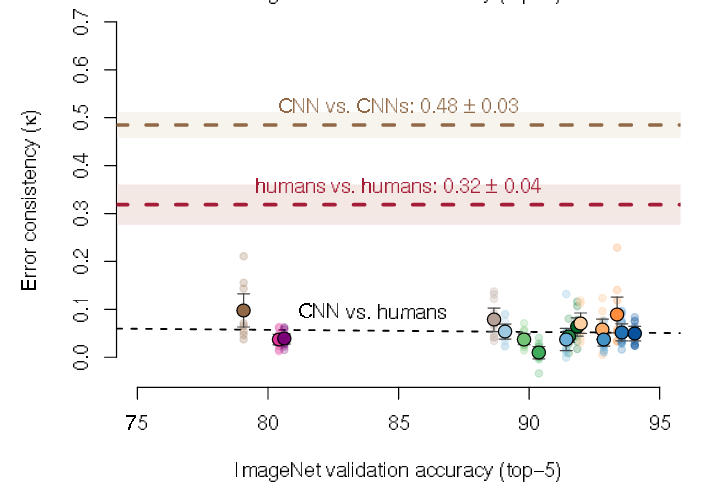

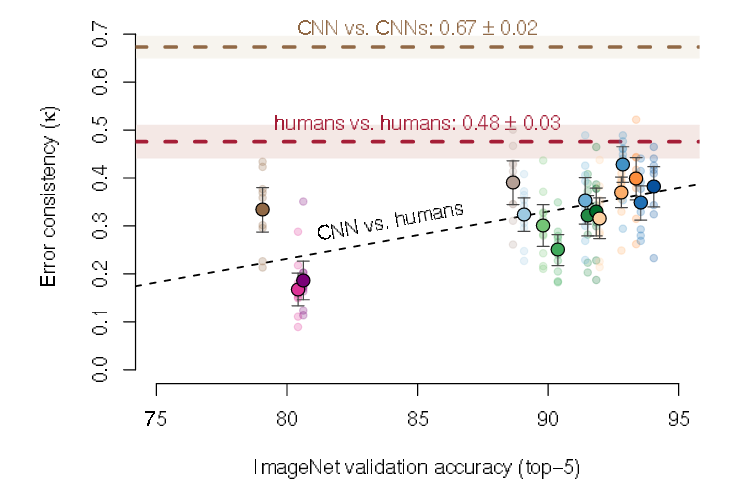

better measure: error consistency

- measures whether two systems not only make a similar number of errors (~accuracy) but also make the same mistakes on individual stimuli (~find the same individual stimuli difficult or easy)

- applicable to: algo-algo, human-human, algo-human

Example accuracies (system1 / system2): 75% / 75%; 100% / 50%; 50% / 100%

Error consistency

Consider two systems i and j:

How much do their decisions overlap?

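observed overlap: $c_{\mathrm{obs}_{i,j}} = \frac{e_{i,j}}{n}$, where $e_{i,j}$ is the number of equal responses (both correct or both wrong) and $n$ is the number of trials

expected overlap (binomial): $c_{\mathrm{exp}_{i,j}} = p_i p_j + (1 - p_i)(1 - p_j)$, where $p_i$ and $p_j$ are the accuracies of the two systems

error consistency: $\kappa_{i,j} = \frac{c_{\mathrm{obs}_{i,j}} - c_{\mathrm{exp}_{i,j}}}{1 - c_{\mathrm{exp}_{i,j}}}$

- numerator: remove expected overlap

- denominator: for high expected overlap additional overlap is more relevant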
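
Error consistency is Cohen's kappa computed on per-trial correctness: observed overlap minus the overlap expected from two independent observers with the same accuracies, divided by one minus that expected overlap. The following is a minimal sketch of that computation, assuming each system's responses are available as correct/incorrect flags on the same stimuli in the same order. The function name `error_consistency` and the toy response vectors are illustrative and not taken from the paper's code.

```python
from typing import Sequence


def error_consistency(correct_i: Sequence[bool], correct_j: Sequence[bool]) -> float:
    """Cohen's kappa on the per-trial correctness of two systems.

    Both sequences must refer to the same stimuli in the same order
    (an assumption of this sketch).
    """
    n = len(correct_i)
    if n == 0 or n != len(correct_j):
        raise ValueError("need two non-empty response vectors of equal length")

    # Observed overlap: fraction of trials on which both systems are
    # correct or both are wrong.
    equal = sum(bool(a) == bool(b) for a, b in zip(correct_i, correct_j))
    c_obs = equal / n

    # Expected overlap of two independent observers with accuracies p_i, p_j.
    p_i = sum(bool(a) for a in correct_i) / n
    p_j = sum(bool(b) for b in correct_j) / n
    c_exp = p_i * p_j + (1 - p_i) * (1 - p_j)

    if c_exp == 1.0:
        # Both systems are always correct (or always wrong): kappa is undefined.
        raise ValueError("error consistency is undefined when expected overlap is 1")

    return (c_obs - c_exp) / (1 - c_exp)


# Toy example: three systems, each with 75% accuracy on four stimuli.
system_a = [True, True, True, False]
system_b = [True, True, True, False]   # fails on the same stimulus as A
system_c = [False, True, True, True]   # fails on a different stimulus

kappa_ab = error_consistency(system_a, system_b)
kappa_ac = error_consistency(system_a, system_c)
print(f"A vs B: {kappa_ab:.3f}")
print(f"A vs C: {kappa_ac:.3f}")
```

In the toy example all three systems have identical accuracy, yet A and B agree on every trial while A and C agree less often than chance would predict, which is the kind of difference that accuracy alone cannot reveal.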

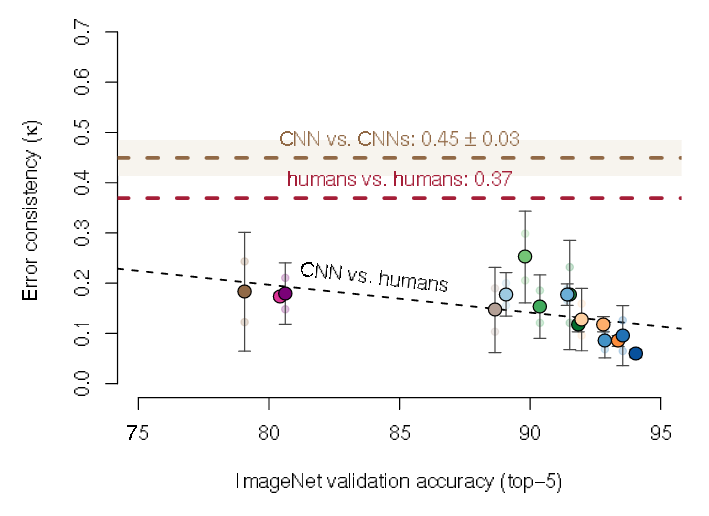

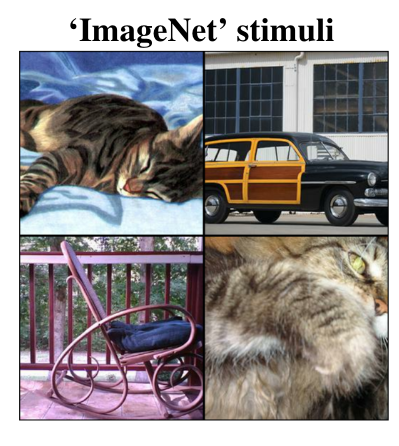

Do better ImageNet models make more human-like errors?

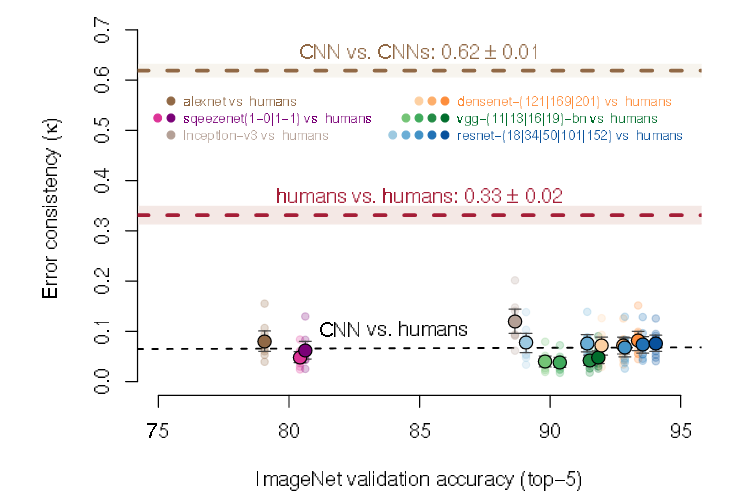

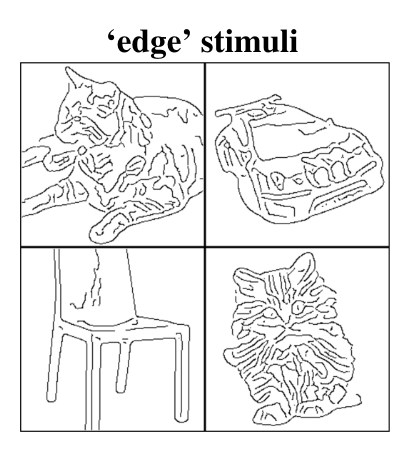

Do better ImageNet models make more human-like errors (o.o.d)?

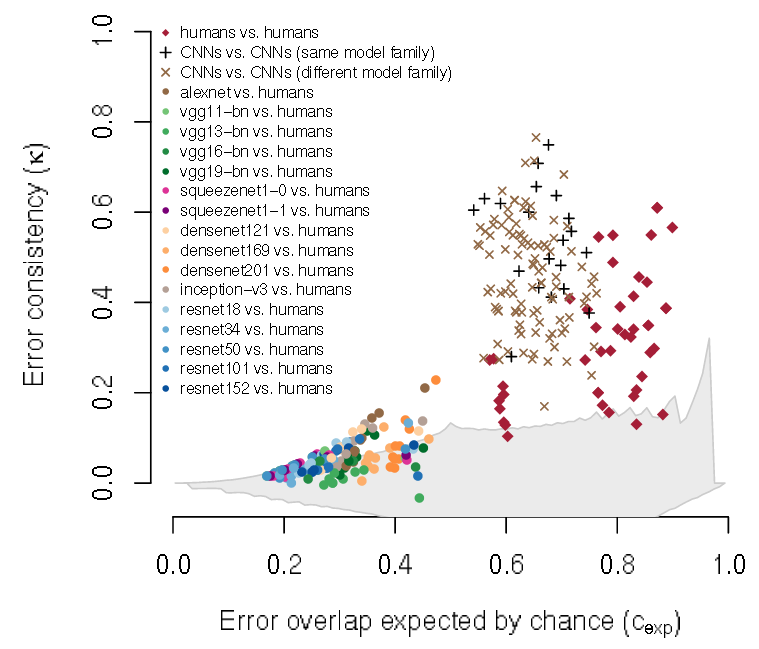

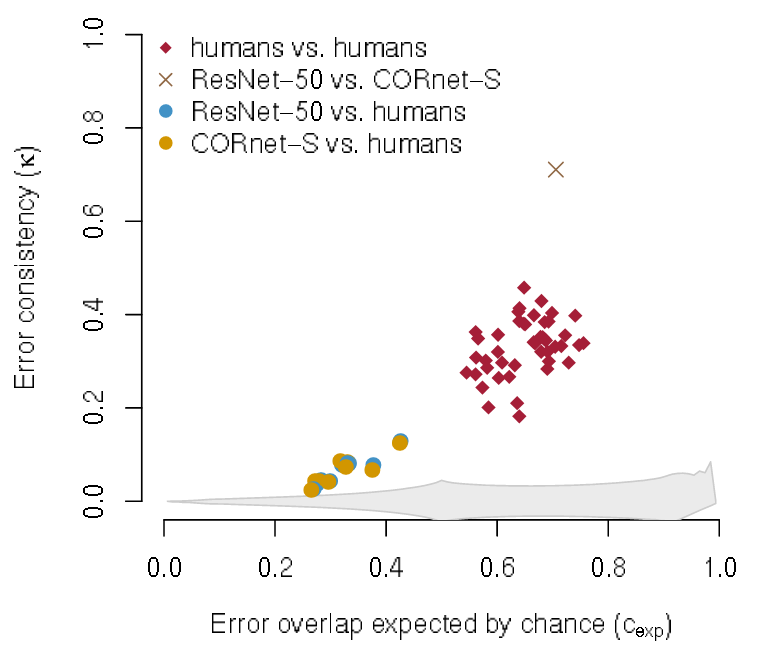

How is error consistency influenced by model architecture?

consistency by chance

Recurrence to the rescue!

"We developed CORnet-S, a shallow ANN with four anatomically mapped areas and recurrent connectivity, guided by Brain-Score, a new large-scale composite of neural and behavioral benchmarks for quantifying the functional fidelity of models of the primate ventral visual stream. Despite being significantly shallower than most models, CORnet-S is the top model on Brain-Score and outperforms similarly compact models on ImageNet. Moreover, our extensive analyses of CORnet-S circuitry variants reveal that recurrence is the main predictive factor of both Brain-Score and ImageNet top-1 performance. Finally, we report that the temporal evolution of the CORnet-S "IT" neural population resembles the actual monkey IT population dynamics. Taken together, these results establish CORnet-S, a compact, recurrent ANN, as the current best model of the primate ventral visual stream."

Kubilius ... DiCarlo: Brain-Like Object Recognition with High-Performing Shallow Recurrent ANNs [NeurIPS 2019 (oral)]

Conclusion

What now?

- don't trust aggregate metrics when comparing mechanisms

- role of recurrence?

- different training paradigms

- different algorithms (e.g., variational inference)

Beyond accuracy

By jakobj
