Machine Learning

Workshop: Part Four

Reinforcement Learning

(Let's dive in now)

@KatharineCodes @JavaFXpert

Using BURLAP for Reinforcement Learning

Learning to Navigate a Grid World with Q-Learning

Rules of this Grid World

- Agent may move left, right, up, or down (actions)

- Reward is 0 for each move

- Reward is 5 for reaching top right corner (terminal state)

- Agent can't move into a wall or off-grid

- Agent doesn't have a model of the grid world. It must discover the world as it interacts with it.

Challenge: Given that there is only one state that gives a reward, how can the agent work out what actions will get it to the reward?

(AKA the credit assignment problem)

Goal of an episode is to maximize total reward
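The rules above can be sketched as a small environment class. This is only an illustration, not BURLAP's API: the class name `GridWorldSketch`, the coordinate convention (origin at the bottom left, y increasing upward), and the choice that a blocked move leaves the agent where it was are all assumptions made for the example.

```java
/** Hypothetical grid world following the rules listed above; not BURLAP's API. */
final class GridWorldSketch {
    static final int LEFT = 0, RIGHT = 1, UP = 2, DOWN = 3;

    final boolean[][] wall;   // wall[x][y] is true where the agent cannot enter
    final int width, height;

    GridWorldSketch(boolean[][] wall) {
        this.wall = wall;
        this.width = wall.length;
        this.height = wall[0].length;
    }

    /** Returns the next position {x, y}. Assumption: a blocked move leaves the agent in place. */
    int[] move(int x, int y, int action) {
        int nx = x, ny = y;
        switch (action) {
            case LEFT:  nx = x - 1; break;
            case RIGHT: nx = x + 1; break;
            case UP:    ny = y + 1; break;   // assumption: y grows upward
            case DOWN:  ny = y - 1; break;
            default: throw new IllegalArgumentException("unknown action " + action);
        }
        boolean offGrid = nx < 0 || nx >= width || ny < 0 || ny >= height;
        if (offGrid || wall[nx][ny]) {
            return new int[] {x, y};
        }
        return new int[] {nx, ny};
    }

    /** The top right corner is the terminal state. */
    boolean isTerminal(int x, int y) {
        return x == width - 1 && y == height - 1;
    }

    /** Reward is 5 for reaching the terminal state and 0 for every other move. */
    double reward(int nextX, int nextY) {
        return isTerminal(nextX, nextY) ? 5.0 : 0.0;
    }

    public static void main(String[] args) {
        boolean[][] wall = new boolean[3][3];
        wall[1][1] = true;                       // one wall cell in the middle
        GridWorldSketch world = new GridWorldSketch(wall);
        int[] next = world.move(2, 1, UP);       // step into the top right corner
        System.out.println(next[0] + "," + next[1] + " reward " + world.reward(next[0], next[1]));
    }
}
```

Note that the agent never reads `wall` directly; it only sees the position and reward that come back from each move, which is why it has to discover the layout by acting.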

Visualizing training episodes

From BasicBehavior example in https://github.com/jmacglashan/burlap_examples

This Grid World's MDP (Markov Decision Process)

In this example, all actions are deterministic

Agent learns optimal policy from interactions with the environment (s, a, r, s')
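To make the (s, a, r, s') loop concrete, here is a minimal sketch of tabular Q-learning on a hypothetical five-cell corridor, which is much smaller than the workshop's grid. The corridor, the class name, the learning rate of 1.0, and the purely random choice of actions are all assumptions for illustration. The reward of 5 at the goal and the discount factor of 0.9 are the values used in this deck.

```java
import java.util.Random;

/** Hypothetical 5-cell corridor, not the workshop's grid: start at cell 0, goal at cell 4. */
final class CorridorQLearningSketch {
    static final int STATES = 5, GOAL = 4;
    static final int LEFT = 0, RIGHT = 1;

    /** Runs tabular Q-learning with purely random exploration and returns the Q-table. */
    static double[][] train(int episodes, double alpha, double gamma, long seed) {
        Random rng = new Random(seed);
        double[][] q = new double[STATES][2];              // q[state][action], all 0 at first
        for (int e = 0; e < episodes; e++) {
            int s = 0;                                     // s: every episode starts at cell 0
            while (s != GOAL) {
                int a = rng.nextInt(2);                    // a: choose an action at random
                int next = (a == LEFT) ? Math.max(0, s - 1) : s + 1;   // s': the wall blocks cell -1
                double r = (next == GOAL) ? 5.0 : 0.0;     // r: only the goal pays
                double best = Math.max(q[next][LEFT], q[next][RIGHT]); // stays 0 for the goal
                q[s][a] += alpha * (r + gamma * best - q[s][a]);       // Q-learning update
                s = next;
            }
        }
        return q;
    }

    public static void main(String[] args) {
        double[][] q = train(500, 1.0, 0.9, 42L);
        for (int s = 0; s < GOAL; s++) {
            System.out.printf("cell %d: left=%.3f right=%.3f%n", s, q[s][LEFT], q[s][RIGHT]);
        }
    }
}
```

Only the last move of an episode earns a reward, yet after a few episodes the value of moving right has spread back to every cell. That backward spread is how the agent deals with the credit assignment problem.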

Expected future discounted rewards, and policies

This example used discount factor 0.9

Low discount factors cause agent to prefer immediate rewards
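A small sketch of why a low discount factor favors immediate rewards. The scenario is hypothetical: the agent compares a reward of 1 available right now with a reward of 5 that arrives three steps later. The class and method names are made up for this example. Only the 0.9 discount factor comes from the slide.

```java
/** Hypothetical comparison of an immediate reward with a larger delayed one. */
final class DiscountFactorSketch {
    /** Present value of a reward that arrives after `delay` further steps. */
    static double presentValue(double reward, double gamma, int delay) {
        return reward * Math.pow(gamma, delay);
    }

    /** True when the delayed reward is worth more today than the immediate one. */
    static boolean prefersDelayed(double immediate, double delayed, int delay, double gamma) {
        return presentValue(delayed, gamma, delay) > immediate;
    }

    public static void main(String[] args) {
        double[] gammas = {0.9, 0.5};
        for (double gamma : gammas) {
            System.out.printf("gamma=%.1f: 5 in three steps is worth %.3f now, waits=%b%n",
                    gamma, presentValue(5.0, gamma, 3), prefersDelayed(1.0, 5.0, 3, gamma));
        }
    }
}
```

With a discount factor of 0.9 the delayed 5 is still worth about 3.645 today, so waiting wins. With 0.5 it shrinks to 0.625, and the immediate reward of 1 looks better.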

How often should the agent try new paths vs. greedily taking known paths?
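One common answer to this question is an epsilon-greedy rule: with a small probability epsilon the agent tries a random action, otherwise it takes the action with the highest known value. The sketch below assumes that rule. The slide does not say which strategy the example uses, and the class name and the epsilon of 0.1 are illustrative choices.

```java
import java.util.Arrays;
import java.util.Random;

/** Hypothetical epsilon-greedy action selection over one row of a Q-table. */
final class EpsilonGreedySketch {
    /** Returns an action index: random with probability epsilon, otherwise the best known. */
    static int chooseAction(double[] qValues, double epsilon, Random rng) {
        if (rng.nextDouble() < epsilon) {
            return rng.nextInt(qValues.length);      // explore: try any action
        }
        int best = 0;
        for (int a = 1; a < qValues.length; a++) {
            if (qValues[a] > qValues[best]) {
                best = a;
            }
        }
        return best;                                 // exploit: ties go to the lowest index
    }

    public static void main(String[] args) {
        double[] row = {2.65, 4.05, 0.00, 3.20};     // example values for Left, Right, Up, Down
        Random rng = new Random(7L);
        int[] counts = new int[row.length];
        for (int i = 0; i < 1000; i++) {
            counts[chooseAction(row, 0.1, rng)]++;
        }
        System.out.println(Arrays.toString(counts)); // Right should dominate the counts
    }
}
```

Setting epsilon to 0 makes the agent purely greedy, and setting it to 1 makes it purely random. Values in between trade off the two.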

Q-Learning approach to reinforcement learning

| State | Left | Right | Up | Down |
|---|---|---|---|---|
| ... | | | | |
| 2, 7 | 2.65 | 4.05 | 0.00 | 3.20 |
| 2, 8 | 3.65 | 4.50 | 4.50 | 3.65 |
| 2, 9 | 4.05 | 5.00 | 5.00 | 4.05 |
| 2, 10 | 4.50 | 4.50 | 5.00 | 3.65 |
| ... | | | | |

Q-Learning table of expected values (cumulative discounted rewards) that result from taking an action from a state and then following an optimal policy. For an explanation of how the calculations in a Q-Learning table are performed, see http://artint.info/html/ArtInt_265.html

Columns are actions; rows are states.
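For reference, the standard Q-learning update can be written as below, followed by a worked check against the numbers in the table. The learning rate α is not stated on the slide, so α = 1 is an assumption here, chosen because this grid world is deterministic. The reward of 0 per move and the discount factor of 0.9 come from the deck.

```latex
\[
Q(s,a) \leftarrow Q(s,a) + \alpha \left[ r + \gamma \max_{a'} Q(s',a') - Q(s,a) \right]
\]
% Assuming \alpha = 1, with r = 0 for an ordinary move and \gamma = 0.9:
\[
0 + 0.9 \times 5.00 = 4.50, \qquad
0 + 0.9 \times 4.50 = 4.05, \qquad
0 + 0.9 \times 4.05 = 3.645 \approx 3.65
\]
```

This is why the table entries step down from 5.00 to 4.50, 4.05, and 3.65: each extra move away from the reward multiplies the value by 0.9.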

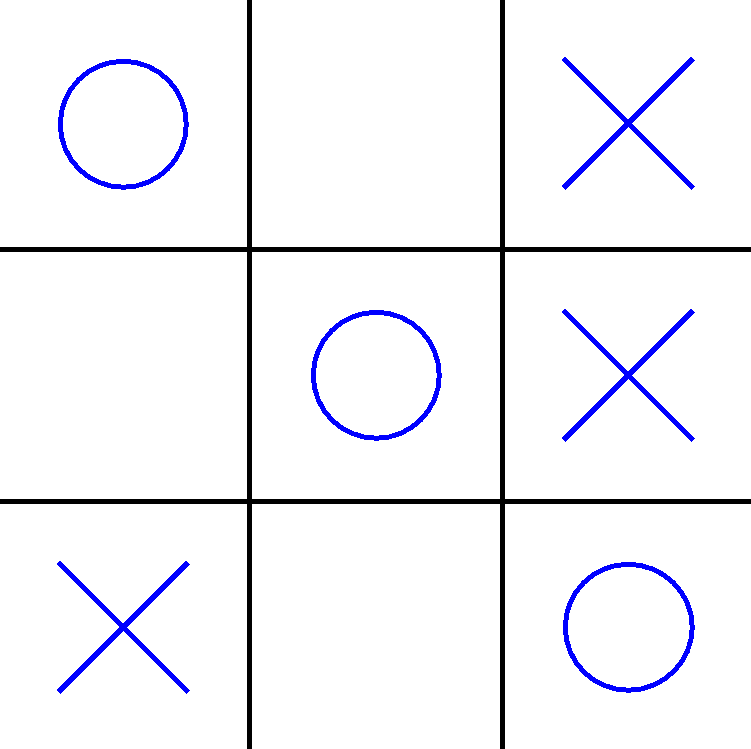

Tic-Tac-Toe with Reinforcement Learning

Learning to win from experience rather than by being trained

Inspired by the Tic-Tac-Toe Example section...

Tic-Tac-Toe Learning Agent and Environment

Our learning agent is the "X" player, receiving +5 for winning, -5 for losing, and -1 for each turn

The "O" player is part of the Environment. The state and reward updates that the Environment gives the Agent take the "O" player's move into account.
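The reward scheme can be sketched as a single function of the game's outcome after the agent's turn. Two points here are assumptions: that the -1 turn cost is added on top of the win or loss reward (which would match the +4.0 and -6.0 entries in the Q-table later in the deck), and that a draw earns only the turn cost. The class and enum names are hypothetical and are not taken from the tic-tac-toe-rl project.

```java
/** Hypothetical reward function for the "X" learning agent. */
final class TicTacToeRewardSketch {
    enum Outcome { IN_PROGRESS, X_WON, O_WON, DRAW }

    /** Reward for one turn, given the outcome once "O" has replied. */
    static double reward(Outcome outcome) {
        double reward = -1.0;                 // every turn costs 1
        if (outcome == Outcome.X_WON) {
            reward += 5.0;                    // the agent won
        } else if (outcome == Outcome.O_WON) {
            reward -= 5.0;                    // the agent lost
        }
        return reward;                        // assumption: a draw earns only the turn cost
    }

    public static void main(String[] args) {
        for (Outcome outcome : Outcome.values()) {
            System.out.println(outcome + " -> " + reward(outcome));
        }
    }
}
```

The per-turn penalty gives the agent a reason to win quickly rather than merely avoid losing.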

Tic-Tac-Toe state is the game board and status

| States | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|
| O I X I O X X I O, O won | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| I I I I I I O I X, in prog | 1.24 | 1.54 | 2.13 | 3.14 | 2.23 | 3.32 | N/A | 1.45 | N/A |
| I I O I I X O I X, in prog | 2.34 | 1.23 | N/A | 0.12 | 2.45 | N/A | N/A | 2.64 | N/A |
| I I O O X X O I X, in prog | +4.0 | -6.0 | N/A | N/A | N/A | N/A | N/A | -6.0 | N/A |
| X I O I I X O I X, X won | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| ... | | | | | | | | | |

Q-Learning table of expected values (cumulative discounted rewards) as a result of taking an action from a state and following an optimal policy

Actions (Possible cells to play)

Unoccupied cell represented with an I in the States column
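The state strings in the table already determine which actions are available: a cell can be played only if it holds an I and the game is still in progress. Here is a minimal sketch of that reading, assuming the "board, status" text format shown in the States column. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical parser for state strings such as "I I O I I X O I X, in prog". */
final class BoardStateSketch {
    /** Returns the playable cell indexes (0 to 8), or an empty list for a finished game. */
    static List<Integer> legalActions(String state) {
        String[] parts = state.split(",");
        String[] cells = parts[0].trim().split("\\s+");
        if (cells.length != 9) {
            throw new IllegalArgumentException("expected 9 cells but found " + cells.length);
        }
        List<Integer> open = new ArrayList<>();
        boolean finished = parts.length > 1 && !parts[1].trim().equals("in prog");
        if (finished) {
            return open;                       // every action is N/A once someone has won
        }
        for (int i = 0; i < cells.length; i++) {
            if (cells[i].equals("I")) {        // I marks an unoccupied cell
                open.add(i);
            }
        }
        return open;
    }

    public static void main(String[] args) {
        System.out.println(legalActions("I I O I I X O I X, in prog"));
        System.out.println(legalActions("X I O I I X O I X, X won"));
    }
}
```

For the row `I I O I I X O I X, in prog` this yields cells 0, 1, 3, 4, and 7, which are exactly the columns that hold a value rather than N/A.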

Tic-Tac-Toe with Reinforcement Learning

Summary of neural network links (1/2)

Andrew Ng video:

https://www.coursera.org/learn/machine-learning/lecture/zcAuT/welcome-to-machine-learning

Iris flower dataset:

https://en.wikipedia.org/wiki/Iris_flower_data_set

Visual neural net server:

http://github.com/JavaFXpert/visual-neural-net-server

Visual neural net client:

http://github.com/JavaFXpert/ng2-spring-websocket-client

Deep Learning for Java: http://deeplearning4j.org

Spring initializr: http://start.spring.io

Kaggle datasets: http://kaggle.com

Summary of neural network links (2/2)

Tic-tac-toe client: https://github.com/JavaFXpert/tic-tac-toe-client

Gluon Mobile: http://gluonhq.com/products/mobile/

Tic-tac-toe REST service: https://github.com/JavaFXpert/tictactoe-player

Java app that generates tic-tac-toe training dataset:

https://github.com/JavaFXpert/tic-tac-toe-minimax

Understanding The Minimax Algorithm article:

http://neverstopbuilding.com/minimax

Optimizing neural networks article:

https://medium.com/autonomous-agents/is-optimizing-your-ann-a-dark-art-79dda77d103

A.I. Duet application: http://aiexperiments.withgoogle.com/ai-duet/view/

Summary of reinforcement learning links

BURLAP library: http://burlap.cs.brown.edu

BURLAP examples including BasicBehavior:

https://github.com/jmacglashan/burlap_examples

Markov Decision Process:

https://en.wikipedia.org/wiki/Markov_decision_process

Q-Learning table calculations: http://artint.info/html/ArtInt_265.html

Exploitation vs. exploration:

https://en.wikipedia.org/wiki/Multi-armed_bandit

Reinforcement Learning: An Introduction:

https://webdocs.cs.ualberta.ca/~sutton/book/bookdraft2016sep.pdf

Tic-tac-toe reinforcement learning app:

https://github.com/JavaFXpert/tic-tac-toe-rl

Machine Learning Exposed Workshop: Part Four

By javafxpert

Part four of Machine Learning Exposed workshop. Shedding light on machine learning, being gentle with the math.
