Householder's Methods

Numerical Methods

David Mayerich

Scalable Tissue Imaging and Modeling (STIM) Laboratory

Department of Electrical and Computer Engineering

Cullen College of Engineering

University of Houston

David Mayerich

STIM Laboratory, University of Houston

Newton's Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Method

-

Start with an initial guess \(x_0\)

-

Calculate the value of the function \(f(x_0)\) and its derivative \(f'(x_0)\)

-

Update the guess based on the ratio:

David Mayerich

STIM Laboratory, University of Houston

Newton-Raphson Algorithm

David Mayerich

STIM Laboratory, University of Houston

// implement Newton’s Method where:

// f is a function pointer that takes a parameter x and returns a value

// fp is a function pointer for the derivative of f

double newton(double (*f)(double), double (*fp)(double), double x){

double x0;

return x; // the root with double precision

}Newton-Raphson Algorithm

David Mayerich

STIM Laboratory, University of Houston

// implement Newton’s Method where:

// f is a function pointer that takes a parameter x and returns a value

// fp is a function pointer for the derivative of f

double newton(double (*f)(double), double (*fp)(double), double x){

double x0;

do{

x0 = x; // initialize the guess x0

double fx = f(x0); // calculate the value of f(x)

if(fx == 0) return x0; // if the root is exactly at x0, return it

double fpx = fp(x0); // calculate the derivative

x = x0 – fx / fpx; // update x

} while(x != x0); // if the updated value doesn’t change

return x; // the root with double precision

}Newton's Method Evaluation

-

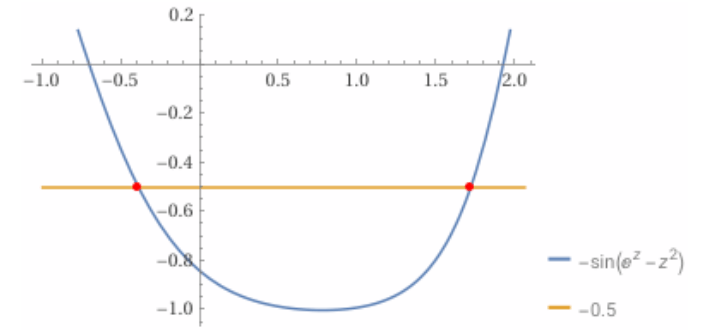

Calculate the solution to \(y=\sin\left(x^2 - e^x\right)\) for \(y=-0.5\) given an initial guess of \(x_0=-1.0\) out to \(4\) digits of precision

David Mayerich

STIM Laboratory, University of Houston

\(z=-0.3909\)

Householder's Method

Generalization of Newton's Method

Convergence

David Mayerich

STIM Laboratory, University of Houston

Householder's Method

-

Solve a nonlinear equation \(f(x)=0\) for a real-valued function \(f\in\mathbb{R}\):

David Mayerich

STIM Laboratory, University of Houston

for the \(k\) derivative.

-

If \(r\) is a root, then the error is:

-

The error of one update step is \(\epsilon = O\left(h^{k+1} \right)\), where \(h\) is the distance between the root and the guess

1st-Order Householder

-

Derive the 1st-order Householder method (\(k=1\)):

David Mayerich

STIM Laboratory, University of Houston

-

1st-order Householder is Newton-Raphson and has convergence:

Halley's Method

-

Calculate the 2nd-order Householder method:

David Mayerich

STIM Laboratory, University of Houston

-

Halley's Method convergence:

Convergence Comparison

-

Error for each iteration:

David Mayerich

STIM Laboratory, University of Houston

#1: \(|x_{n+1}-r| \leq C|x_n-r|^{d+1}\)

#2: \(|x_{n+2}-r| \leq C|x_{n+1}-r|^{d+1}\)

-

Substitute \(C|x_n-r|^{d+1}\) for \(|x_{n+1}-r|\):

#3: \(|x_{n+3}-r| \leq C|x_{n+2}-r|^{d+1}\)

-

In general, \(N\) iterations will have a final error of:

Comparing Evaluation Time

-

Newton's vs. Halley's Method:

David Mayerich

STIM Laboratory, University of Houston

2 polynomial evaluations:

\(f(x)\) and \(f'(x)\)

3 polynomial evaluations:

\(f(x)\), \(f'(x)\), and \(f''(x)\)

-

Householder's Methods require \(k+1\) function evaluations

(function and \(k\) derivatives)

-

If \(f(x)\) is a polynomial, we can use Horner's method to evaluate all of the derivatives

-

\(N\) iterations will require \(N(k+1)\) evaluations of Horner's method

(Note: Horner's method is constant time (\(O(1)\)) because the number of coefficients is constant)

Error vs. Time

-

The error of the \(k\)-order Householder's method after \(n\) iterations is:

David Mayerich

STIM Laboratory, University of Houston

-

The number of evaluations required for \(n\) iterations is \(n(k+1)\)

-

One iteration consists of \(k+1\) function evaluations

-

A single evaluation is therefore \(\frac{1}{k+1}\) of an iteration

-

What method provides the least error per evaluation?

Error vs. Evaluations

-

Newton's method (1st order Householder): \(O(h)^{\sqrt{2}} = O(h)^{1.414}\)

-

Halley's method (2nd order Householder): \(O(h)^{\sqrt[3]{3}} = O(h)^{1.442}\)

-

3rd order: \(O(h)^{1.414}\)

-

4th order: \(O(h)^{1.380}\)

-

5th order: \(O(h)^{1.348}\)

-

Halley's method provides the best error per function evaluation

-

After 2nd order, increasing the number of iterations \(n\) is more efficient

David Mayerich

STIM Laboratory, University of Houston

Error vs. Evaluations for General Functions

-

Given an analytical (non-polynomial) function, then \(k\) derivatives must be computed using automatic differentiation

-

Automatic differentiation requires \(\frac{(k+1)(k+2)}{2}\) evaluations of \(f(x)\)

-

What is our best error per evaluation?

David Mayerich

STIM Laboratory, University of Houston

| d | Exp/Eval |

|---|---|

| 1 | 1.260 |

| 2 | 1.201 |

| 3 | 1.149 |

| 4 | 1.113 |

| 5 | 1.089 |

| d | Exp/Eval |

|---|---|

| 6 | 1.072 |

| 7 | 1.059 |

| 8 | 1.050 |

| 9 | 1.042 |

| 10 | 1.037 |

Newton's Method Theorem

-

Newton's method converges quadratically to the exact root \(r\):

David Mayerich

STIM Laboratory, University of Houston

If \(f\), \(f'\), and \(f''\) are continuous in a neighborhood of a root \(r\) of \(f\) and if \(f'(r)\neq 0\), then there is a positive \(\delta\) with the following property:

If the initial point in Newton's method satisfies \(|r-x_0|\leq \delta\), the all subsequent points \(x_n\) satisfy the same inequality, converge to \(r\), and do so quadratically.

-

The number of significant figures nearly doubles every iteration

-

\(5-6\) iterations for

binary32(float) IEEE 754 -

\(6-7\) iterations for

binary64(double) IEEE 754

Limitations and Solutions

David Mayerich

STIM Laboratory, University of Houston

Divergence

David Mayerich

STIM Laboratory, University of Houston

Hybrid Methods

David Mayerich

STIM Laboratory, University of Houston

interval

\([a,b]\)

function

\(f(x)\)

Newton

\(x_n = c\)

Test

\(x_n \in [a,b]\)?

yes

\(x_n \in [a,b]\)

update to

\([c,b]\) or \([a,c]\)

no

\(x_n \notin [a,b]\)

bisect

\(c\)

D.2 Householder's Methods

By STIM Laboratory

D.2 Householder's Methods

- 179