"Future State Maximisation" as an Intrinsic

Motivation for Decision Making

Henry Charlesworth (H.Charlesworth@warwick.ac.uk)

DR@W Forum, Warwick, 2 May 2019

About me

- PhD student in the Mathematics for Real-World Systems CDT (based in the Centre for Complexity Science, Maths department)

- Supervised by Professor Matthew Turner (Physics, Centre for Complexity Science)

- Recently started a post doc in the WMG Data Science group (deep reinforcement learning research), working with Professor Giovanni Montana

Motivation

- Primary motivation: can we think of general, task-independent principles for decision making?

- Related to the idea of "intrinsic motivations" introduced in the psychology literature.

- Can include things such as:

- Curiosity driven behaviour - e.g. attraction to novel states

- Accumulation of knowledge

- Development of reusable skills

Future State Maximisation (FSM)

- Loosely speaking: "all else being equal, it is preferable to make decisions that keep your options open".

- That is, make decisions that maximise the number of possible things that you can potentially do in the future.

- Candidate for an intrinsic motivation that could potentially be useful in a wide range of situations.

- Rationale is that in an uncertain world, maximising the number of things you're able to do in the future gives you the most control over your environment - puts you in a position where you are most prepared to deal with many different possible scenarios.

FSM - Simple, high-level examples

- Owning a car vs. not owning a car

- Going to university

- Aiming to be healthy rather than sick

- Aiming to be rich rather than poor

Future State Maximisation (FSM)

- In principle, achieving "mastery" over your environment in terms of maximising the control you have over the future things that you can do should naturally lead to: developing re-usable skills, obtaining knowledge about how your environment works, etc.

- Could also be useful as a proxy for evolutionary fitness in many situations. For an organism, achieving more control over what it is potentially able to do is almost always beneficial.

How can FSM be useful?

- If you accept this is often a sensible principle to follow, we might expect behaviours/heuristics to have evolved that make it seem like an organism is applying FSM.

- Could be useful in explaining/understanding certain animal behaviours.

- Could also be used to generate behaviour for artificially intelligent agents.

Quantifying FSM

- Two frameworks that are closely related to this idea of future state maximisation:

- "Empowerment" (Information theoretic approach)

- "Causal Entropic Forces" (A more physics-based approach)

[1] "Empowerment: A Universal Agent-Centric Measure of Control" - Klyubin et al. 2005

[2] "Causal Entropic Forces" - Wissner-Gross and Freer, 2013

Empowerment

- Formulates a measure of the amount of control an agent has over its future in the language of information theory.

- Mutual information - measures how much information one variable tells you about the other:

- Channel capacity:
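For reference, the standard information-theoretic definitions of these two quantities are given below. The symbols \(X\) (channel input), \(Y\) (channel output) and the choice of base-2 logarithm are notation assumed here.

\[
I(X;Y) = \sum_{x,y} p(x,y)\,\log_2 \frac{p(x,y)}{p(x)\,p(y)} = H(Y) - H(Y \mid X)
\]

\[
C = \max_{p(x)} I(X;Y)
\]

The channel capacity is the largest mutual information achievable over all input distributions \(p(x)\), with the channel \(p(y \mid x)\) held fixed.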

Empowerment

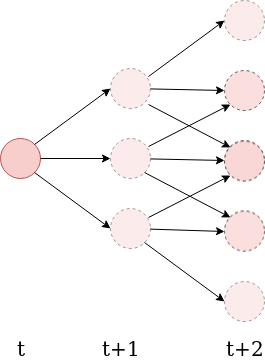

- Consider an agent making decisions at discrete time steps. Let \( \mathcal{A} \) be the set of actions it can take, and \( \mathcal{S} \) be the set of possible states its "sensors" can be in.

- Define the n-step empowerment to be the channel capacity between the agent's available action sequences, and the possible states of its sensors at a later time \(t+n\):

- Explicitly measures how much an agent's actions are capable of influencing the potential states its sensors can be in at a later time.
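In symbols, the n-step empowerment described above can be written as \(\mathfrak{E}_n = \max_{p(a_t^n)} I(A_t^n; S_{t+n})\), where \(A_t^n\) is the sequence of \(n\) actions starting at time \(t\) (symbol names assumed here, following [1]). When the dynamics are deterministic, the channel capacity reduces to \(\log_2\) of the number of distinct sensor states reachable in \(n\) steps. The sketch below illustrates this special case on a hypothetical toy grid world; the action set, grid size and function names are illustrative assumptions, not taken from the talk.

```python
import math
from itertools import product

# Hypothetical action set for a toy grid world: stay, north, south, east, west.
ACTIONS = [(0, 0), (0, 1), (0, -1), (1, 0), (-1, 0)]

def step(state, action, width, height):
    """Deterministic move; a move that would leave the grid keeps the agent in place."""
    x, y = state[0] + action[0], state[1] + action[1]
    if 0 <= x < width and 0 <= y < height:
        return (x, y)
    return state

def n_step_empowerment(state, n, width, height):
    """log2 of the number of distinct states reachable by n-step action sequences.

    Valid only for deterministic dynamics, where channel capacity equals
    the log of the number of distinguishable outcomes.
    """
    reachable = set()
    for sequence in product(ACTIONS, repeat=n):
        s = state
        for action in sequence:
            s = step(s, action, width, height)
        reachable.add(s)
    return math.log2(len(reachable))

# Compare the centre and a corner of a 5x5 grid with a 2-step horizon.
centre = n_step_empowerment((2, 2), 2, 5, 5)
corner = n_step_empowerment((0, 0), 2, 5, 5)
```

An agent in the centre can reach more distinct cells than one boxed into a corner, so its empowerment is higher. The brute-force enumeration grows as \(|\mathcal{A}|^n\), which is one reason the quantity is hard to compute beyond toy systems.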

Empowerment Examples

- Initially, only applied to very simple toy systems. Difficult quantity to calculate.

Taken from: [1] Klyubin, A.S., Polani, D. and Nehaniv, C.L., 2005. In European Conference on Artificial Life.

Empowerment Examples

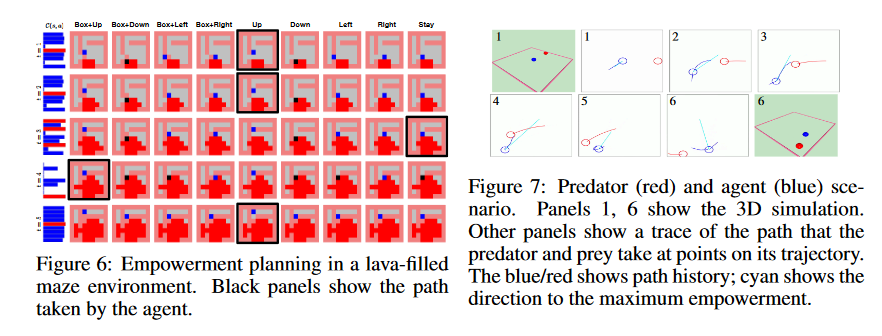

- Mohamed & Rezende (2015) used a variational bound on the mutual information that allowed them to use neural networks to estimate the empowerment in much higher-dimensional state spaces.

- Used it as an intrinsic reward for some simple reinforcement learning problems.

Another Approach: "Causal Entropic Forces"

- Rather than consider a microstate to be a point in the system's phase space, consider it to be a trajectory \( \mathbf{x}(t) \) extending for some time \(\tau\) into the future.

- Partition trajectories into "macrostates" based on their starting point, i.e. \( \mathbf{x}(t) \) and \( \mathbf{x'}(t) \) belong to the same macrostate \(\mathbf{X}\) if \( \mathbf{x}(0) = \mathbf{x'}(0)\).

"Causal entropy":

"Causal entropic force":

Again: only very simple applications

- As with empowerment, very hard to calculate - path integral over all possible future trajectories out to some horizon \( \tau \)
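For reference, the causal entropy and causal entropic force named above can be written as follows. This follows the form given in [2] as best recalled here; \(k_B\) is Boltzmann's constant, \(T_c\) is a "causal path temperature" setting the strength of the force, and \(\mathcal{D}\mathbf{x}(t)\) denotes the path-integral measure over trajectories of duration \(\tau\).

\[
S_c(\mathbf{X}, \tau) = -k_B \int_{\mathbf{x}(t)} \Pr\big(\mathbf{x}(t) \mid \mathbf{x}(0)\big)\, \ln \Pr\big(\mathbf{x}(t) \mid \mathbf{x}(0)\big)\, \mathcal{D}\mathbf{x}(t)
\]

\[
\mathbf{F}(\mathbf{X}_0, \tau) = T_c \, \nabla_{\mathbf{X}} S_c(\mathbf{X}, \tau) \Big|_{\mathbf{X}_0}
\]

The force pushes the system towards macrostates from which the entropy of accessible future trajectories is largest.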

How can FSM be useful in understanding collective motion?

Case study:

"Intrinsically Motivated Collective Motion"

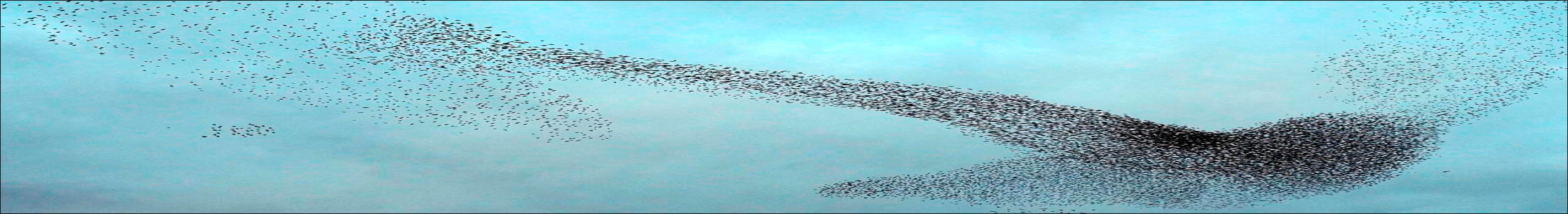

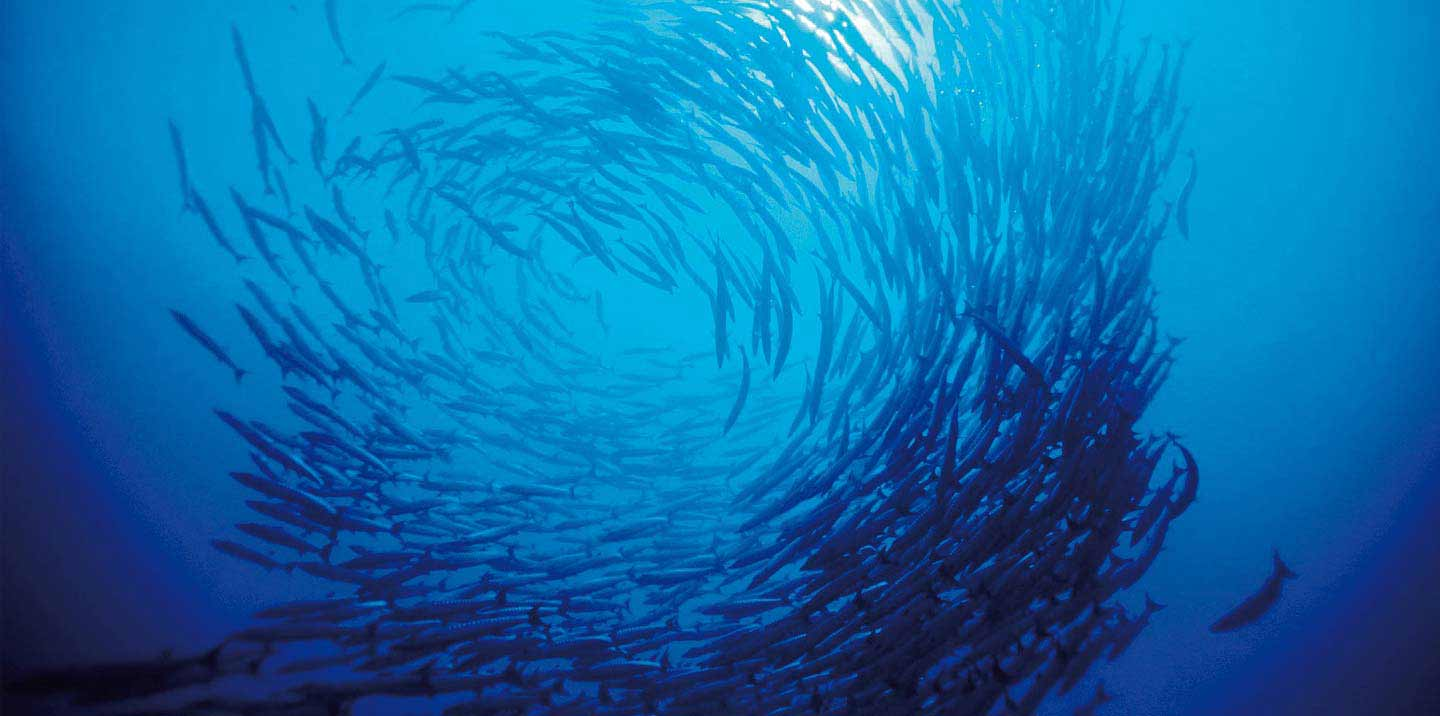

What is Collective Motion?

- Coordinated motion of many individuals all moving according to the same rules.

- Occurs all over the place in nature at many different scales - flocks of birds, schools of fish, bacterial colonies, herds of sheep etc.

Why Should we be Interested?

Applications

- Animal conservation

- Crowd Management

- Computer Generated Images

- Building decentralised systems made up of many individually simple, interacting components - e.g. swarm robotics.

- We are scientists - it's a fundamentally interesting phenomenon to understand!

Existing Collective Motion Models

- Often only include local interactions - do not account for long-range interactions, like vision.

- Tend to lack low-level explanatory power - the models are essentially empirical in that they have things like co-alignment and cohesion hard-wired into them.

Example: Vicsek Model

T. Vicsek et al., Phys. Rev. Lett. 75, 1226 (1995).
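To make the "hard-wired" point concrete, here is a minimal sketch of one Vicsek-style update: each agent moves at constant speed and adopts the mean heading of all agents within an interaction radius, plus uniform angular noise. The function name, parameter names and the choice to move along the newly computed heading are assumptions made for illustration; published variants differ in such details.

```python
import math
import random

def vicsek_step(positions, headings, radius, speed, noise, box, rng):
    """One synchronous Vicsek-style update in a periodic square box of side `box`."""
    new_headings = []
    for xi, yi in positions:
        sum_sin = sum_cos = 0.0
        for (xj, yj), theta_j in zip(positions, headings):
            # Minimum-image separation under periodic boundaries.
            dx = (xj - xi + box / 2) % box - box / 2
            dy = (yj - yi + box / 2) % box - box / 2
            if dx * dx + dy * dy <= radius * radius:
                # Neighbours (including the agent itself) vote with their heading.
                sum_sin += math.sin(theta_j)
                sum_cos += math.cos(theta_j)
        mean_heading = math.atan2(sum_sin, sum_cos)
        new_headings.append(mean_heading + rng.uniform(-noise / 2, noise / 2))
    # Move every agent one step along its new heading, wrapping around the box.
    new_positions = [
        ((x + speed * math.cos(t)) % box, (y + speed * math.sin(t)) % box)
        for (x, y), t in zip(positions, new_headings)
    ]
    return new_positions, new_headings

# Example: 30 agents with random initial positions and headings.
rng = random.Random(0)
positions = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(30)]
headings = [rng.uniform(-math.pi, math.pi) for _ in range(30)]
for _ in range(50):
    positions, headings = vicsek_step(positions, headings, 1.0, 0.1, 0.2, 10.0, rng)
```

Alignment is imposed directly by the averaging rule, which is the sense in which such models are empirical rather than derived from a lower-level principle.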


Our approach

- Based on a simple, low-level motivational principle - FSM.

- No built-in alignment/cohesive interactions - these things emerge spontaneously.

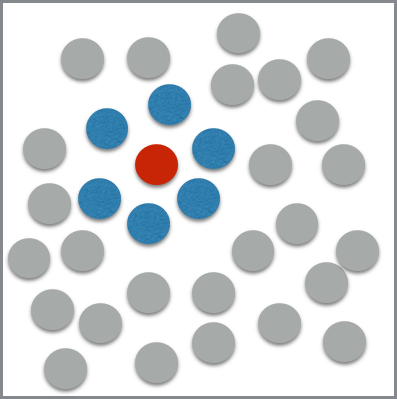

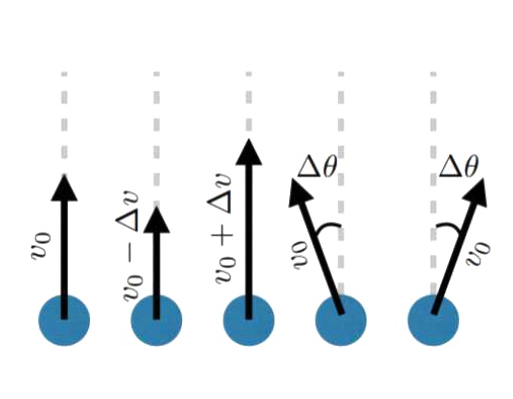

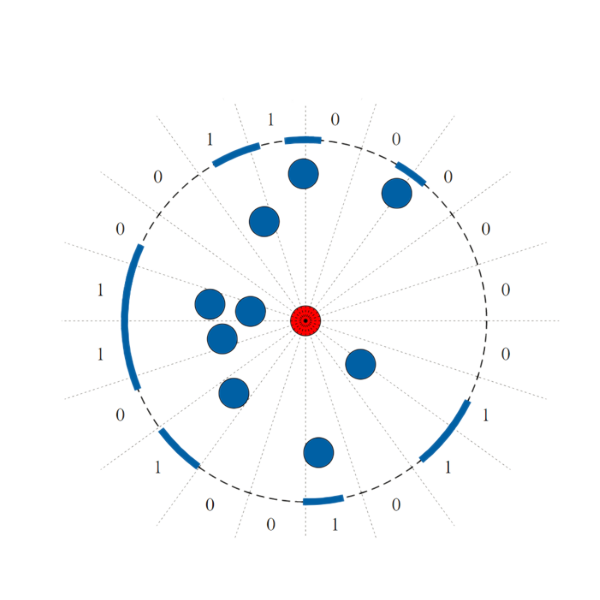

Our Model

- Consider a group of circular agents equipped with simple visual sensors, moving around on an infinite 2D plane.

Possible Actions:

Visual State

Making a decision

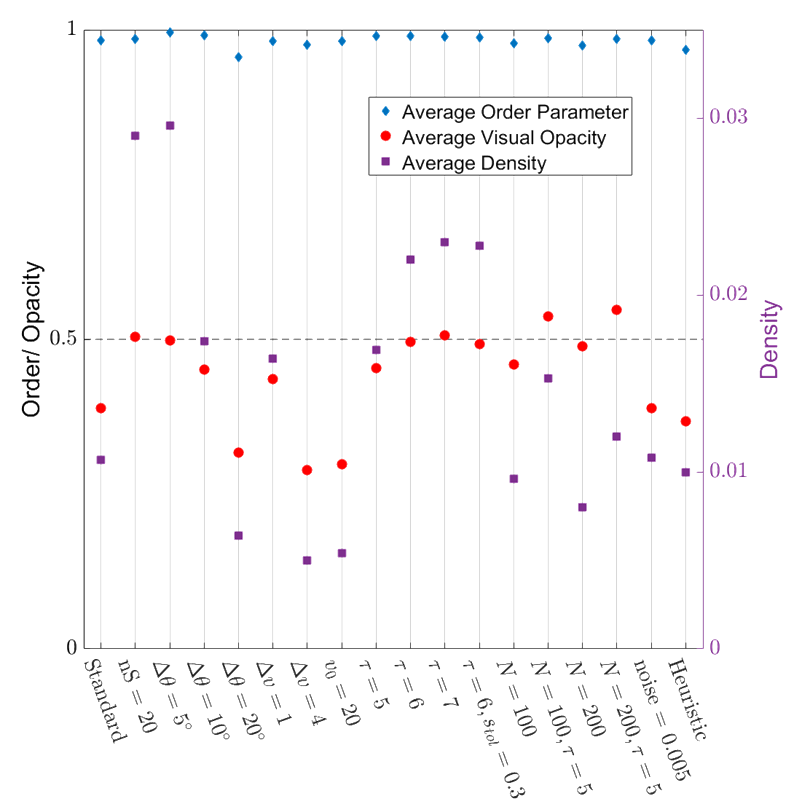

Simulation Result - Small flock

Leads to robust collective motion!

- Very highly ordered collective motion. (Average order parameter ~ 0.98)

- Density is well regulated, flock is marginally opaque.

- These properties are robust over variations in the model parameters!

Order Parameter:

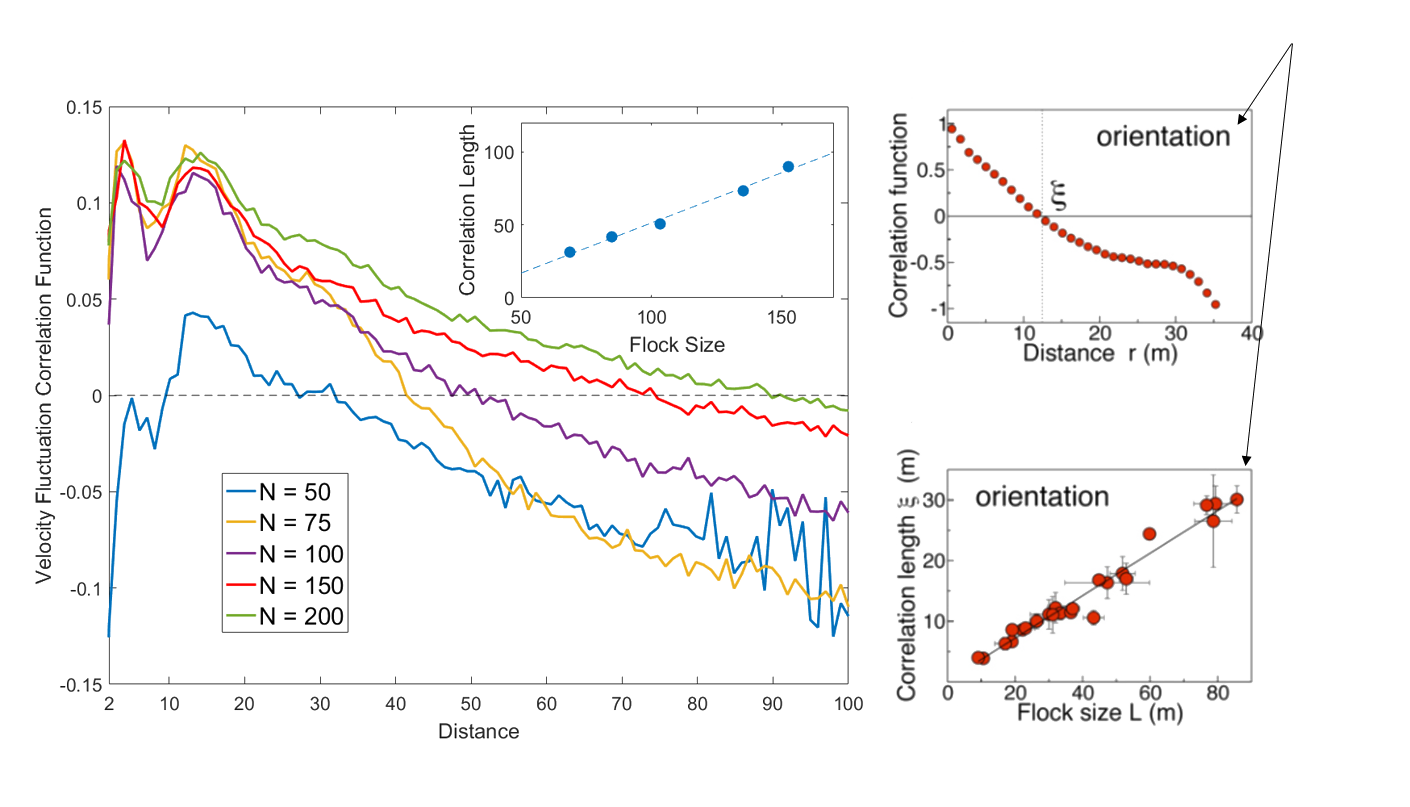
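A minimal sketch of how such an order parameter can be computed, assuming it is the usual polar order parameter \(\phi = \frac{1}{N} \left| \sum_{i} \hat{\mathbf{v}}_i \right|\), where \(\hat{\mathbf{v}}_i\) is the unit velocity of agent \(i\). The function name and the 2D tuple representation are illustrative choices.

```python
import math

def order_parameter(velocities):
    """Magnitude of the mean unit velocity: 1 for perfect alignment, near 0 for disorder."""
    sum_x = sum_y = 0.0
    for vx, vy in velocities:
        speed = math.hypot(vx, vy)
        sum_x += vx / speed
        sum_y += vy / speed
    return math.hypot(sum_x, sum_y) / len(velocities)

aligned = order_parameter([(1.0, 0.0), (2.0, 0.0), (0.5, 0.0)])
opposed = order_parameter([(1.0, 0.0), (-1.0, 0.0)])
```

Only directions matter here: speeds are normalised out, so a value close to 1 means nearly all agents are heading the same way.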

Also: Scale Free Correlations

Real starling data (Cavagna et al. 2010)

Data from model

- Scale free correlations mean an enhanced global response to environmental perturbations!

correlation function:

velocity fluctuation

Continuous version of the model

- Would be nice to not have to break the agents' visual fields into an arbitrary number of sensors.

- Calculating the mutual information (and hence empowerment) for continuous random variables is difficult - need to take a different approach.

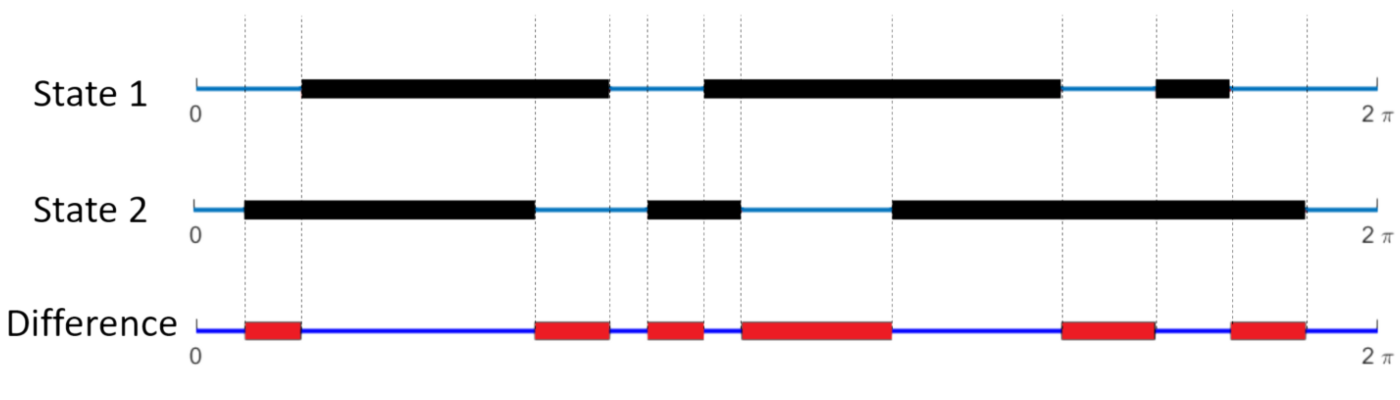

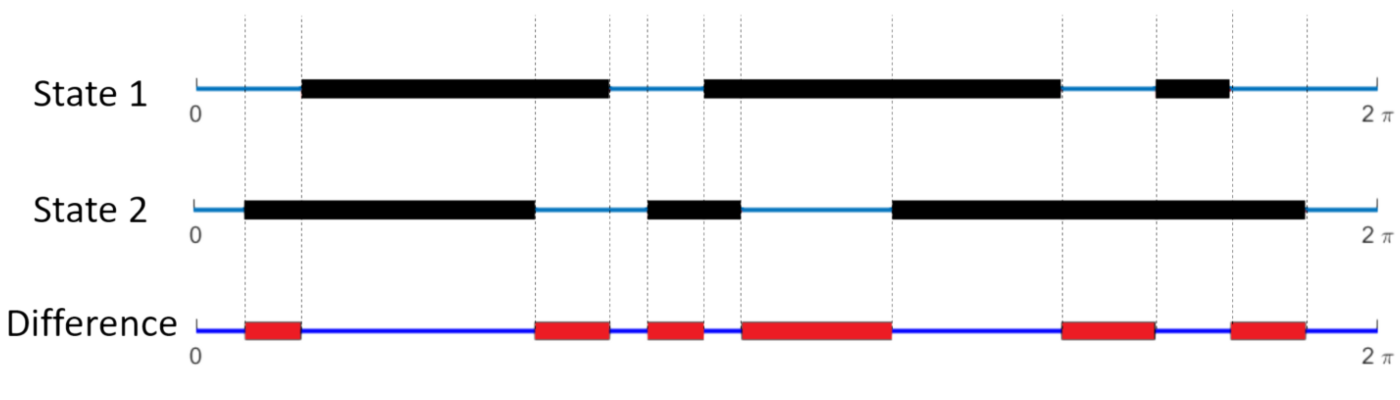

Continuous version of the model

- Let \(f(\theta)\) be 1 if angle \(\theta\) is occupied by the projection of another agent, or 0 otherwise.

- Define the "difference" between two visual states i and j to be:

- This is simply the fraction of the angular interval \([0, 2\pi)\) where they are different.
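One way to write a difference measure with exactly this property, using \(f_i\) and \(f_j\) for the occupancy functions of visual states \(i\) and \(j\) and \(d_{ij}\) for their difference (symbol names assumed here):

\[
d_{ij} = \frac{1}{2\pi} \int_0^{2\pi} \left| f_i(\theta) - f_j(\theta) \right| \, d\theta
\]

Because each \(f\) takes only the values 0 and 1, the integrand is 1 exactly where the two visual states disagree, so \(d_{ij}\) lies between 0 (identical states) and 1 (states that differ at every angle).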

Continuous version of the model

branch \(\alpha\)

For each initial move, \( \alpha \), define a weight as follows:

- Rather than counting the number of distinct visual states on each branch, this weights every possible future state by its average difference from all other states on the same branch.

- Underlying philosophy of FSM remains the same.
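The weighting described above can be sketched as follows. This is an illustration under stated assumptions, not the talk's implementation: visual states are approximated as tuples of 0/1 values on a uniform angular grid, the weight of a branch is taken to be the sum over its future states of each state's mean difference to the states on that branch, and the names `visual_difference`, `branch_weight` and `choose_move` are hypothetical.

```python
def visual_difference(f_i, f_j):
    """Fraction of angular bins in which two binary visual states differ."""
    if len(f_i) != len(f_j):
        raise ValueError("visual states must use the same angular grid")
    return sum(a != b for a, b in zip(f_i, f_j)) / len(f_i)

def branch_weight(states):
    """Sum over states on a branch of each state's mean difference to the others.

    Dividing by the number of states (rather than n - 1) is an assumed
    normalisation; a state's difference to itself is zero either way.
    """
    n = len(states)
    if n == 0:
        return 0.0
    total = sum(visual_difference(s_i, s_j) for s_i in states for s_j in states)
    return total / n

def choose_move(branches):
    """Pick the initial move whose branch of future states has the largest weight."""
    return max(branches, key=lambda alpha: branch_weight(branches[alpha]))

# Two hypothetical branches: one with identical futures, one with varied futures.
branches = {
    "left": [(0, 0, 0, 0), (0, 0, 0, 0)],
    "right": [(0, 0, 0, 0), (1, 1, 0, 0), (1, 1, 1, 1)],
}
best = choose_move(branches)
```

A branch whose futures all look the same scores zero however many states it contains, while a branch with a few very different futures scores highly, which is the continuous analogue of counting distinct visual states.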

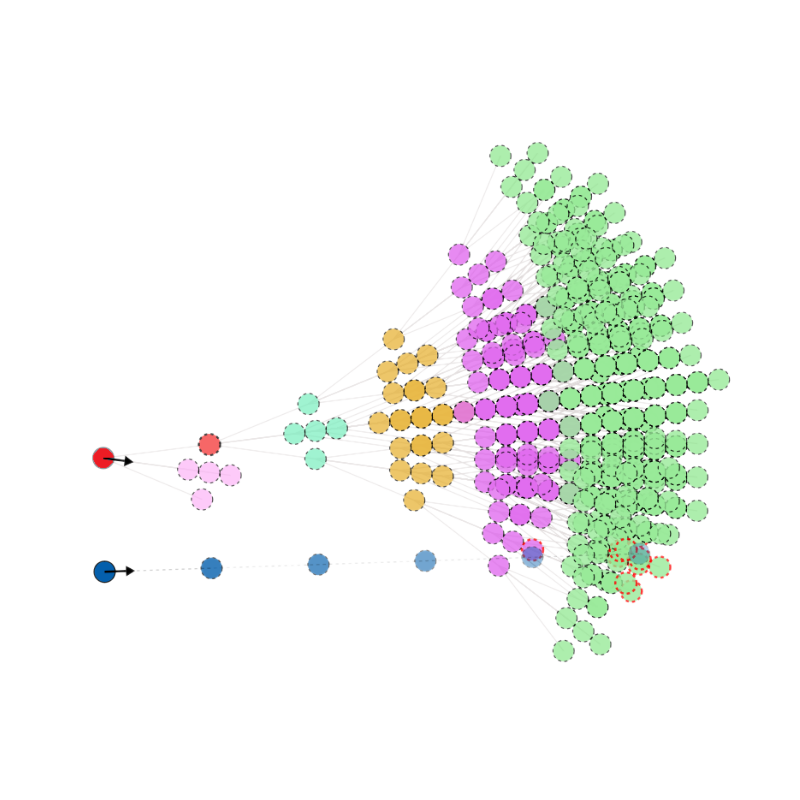

Result with a larger flock - remarkably rich emergent dynamics

Can we do this without a full search of future states?

- This algorithm is very computationally expensive. Can we generate a heuristic that mimics this behaviour?

- Train a neural network on trajectories generated by the FSM algorithm to learn which actions it takes, given only the currently available visual information (i.e. no modelling of future trajectories).

Visualisation of the neural network

previous visual sensor input

current visual sensor input

hidden layers of neurons

output: predicted probability of action

non-linear activation function: f(Aw+b)
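The architecture labelled above can be sketched as a small feed-forward network: the previous and current visual sensor inputs are concatenated, passed through hidden layers that each apply a non-linear function to an affine map, and turned into action probabilities by a softmax. The layer sizes, the number of actions, the ReLU activation and the random untrained weights are all assumptions chosen for illustration.

```python
import math
import random

def dense(inputs, weights, biases):
    """Affine map: one output per row of `weights`."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Non-linear activation applied element-wise (ReLU is an assumed choice)."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Convert raw scores to probabilities, shifted by the maximum for stability."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def init_layer(n_in, n_out, rng):
    """Random weights and zero biases; a trained network would load learned values."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def predict_action_probs(previous_input, current_input, layers):
    """Forward pass: hidden layers use ReLU, the final layer uses softmax."""
    activation = list(previous_input) + list(current_input)
    for weights, biases in layers[:-1]:
        activation = relu(dense(activation, weights, biases))
    weights, biases = layers[-1]
    return softmax(dense(activation, weights, biases))

rng = random.Random(0)
N_SENSORS, N_HIDDEN, N_ACTIONS = 8, 16, 5  # hypothetical sizes
layers = [
    init_layer(2 * N_SENSORS, N_HIDDEN, rng),
    init_layer(N_HIDDEN, N_HIDDEN, rng),
    init_layer(N_HIDDEN, N_ACTIONS, rng),
]
probs = predict_action_probs([0, 1, 1, 0, 0, 0, 1, 0],
                             [0, 0, 1, 1, 0, 0, 1, 0], layers)
```

Training would fit the weights so that `probs` matches the actions chosen by the full FSM search; at run time only this forward pass is needed, with no modelling of future trajectories.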

How does it do?

Some other things you can do

Making the swarm turn

Guiding the swarm to follow a trajectory

Finally: recent, related idea that I find interesting

"Goal-conditioned" Reinforcement Learning

Standard reinforcement learning paradigm: Markov decision processes

\(s_0, a_1, r_1, s_1, a_2, r_2, \dots\)

states

actions

rewards

Learn a policy \(\pi(a | s) \) to maximise expected return:

Goal-conditioned RL: learn a policy conditioned on a goal g:
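For reference, a common way to write these two objectives, using the indexing of the sequence above. The discount factor \(\gamma \in [0, 1)\), the symbol \(J\) and the goal distribution \(p(g)\) are notation assumed here.

\[
J(\pi) = \mathbb{E}_{\pi}\left[ \sum_{t \ge 1} \gamma^{\,t-1} r_t \right]
\]

\[
J(\pi) = \mathbb{E}_{g \sim p(g)}\, \mathbb{E}_{\pi(\cdot \mid s, g)}\left[ \sum_{t \ge 1} \gamma^{\,t-1} r(s_t, g) \right]
\]

In the goal-conditioned case both the policy \(\pi(a \mid s, g)\) and the reward depend on the goal \(g\), so a single policy is trained to handle many different tasks.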

Relation of goal-conditioned RL to FSM

- Let's consider the case where the set of goals is the same as the set of states the system can be in, and take the reward function to be e.g. the negative distance between the state s and the goal g, or an indicator function for whether the goal has been achieved.

- In this case you are trying to learn (ideally) a policy \( \pi(a| s_i, s_f)\) that allows you to get between any two states \(s_i\) and \(s_f\).
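The two reward choices mentioned above can be sketched as follows, assuming states and goals are points in a Euclidean space. The function names and the `tolerance` parameter are hypothetical.

```python
import math

def distance_reward(state, goal):
    """Negative Euclidean distance, so that being closer to the goal scores higher."""
    return -math.dist(state, goal)

def indicator_reward(state, goal, tolerance=0.0):
    """1 if the goal has been reached (to within `tolerance`), 0 otherwise."""
    return 1.0 if math.dist(state, goal) <= tolerance else 0.0

dense_r = distance_reward((0.0, 0.0), (3.0, 4.0))
sparse_r = indicator_reward((0.0, 0.0), (3.0, 4.0))
```

The distance-based reward gives a learning signal everywhere but relies on a meaningful metric over states; the indicator reward needs no metric but is sparse, which generally makes learning harder.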

Example of some recent work: "Skew-Fit"

- Aim is to explicitly maximise the coverage of the state space that can be reached - philosophy is very closely related to FSM.

https://sites.google.com/view/skew-fit

- Maximises an objective: \(I(S;G) = H(G) - H(G|S)\)

- Maximising \(H(G)\): set diverse goals

- Minimising \(H(G|S)\): make sure you can actually achieve the goal from each state

- Very similar to empowerment - advantage is that learning a goal-conditioned policy actually tells you how to do everything as well.
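To see how the two terms of \(I(S;G) = H(G) - H(G|S)\) trade off, here is a minimal sketch that evaluates the objective for a small discrete joint distribution over states and goals. The table layout `joint[s][g]` and the function names are assumptions for illustration; Skew-Fit itself works with learned generative models rather than explicit tables.

```python
import math

def entropy(probs):
    """Shannon entropy in bits, ignoring zero-probability entries."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def mutual_information(joint):
    """I(S;G) = H(G) - H(G|S) for a table joint[s][g] of joint probabilities."""
    p_s = [sum(row) for row in joint]
    p_g = [sum(column) for column in zip(*joint)]
    h_g_given_s = sum(
        ps * entropy([p / ps for p in row])
        for ps, row in zip(p_s, joint)
        if ps > 0
    )
    return entropy(p_g) - h_g_given_s

# Diverse goals that are reliably reached: high H(G), zero H(G|S).
reliable = mutual_information([[0.5, 0.0], [0.0, 0.5]])
# Diverse goals, but the reached state says nothing about the goal.
unreliable = mutual_information([[0.25, 0.25], [0.25, 0.25]])
# Goals always reached, but only one goal is ever set.
narrow = mutual_information([[0.5, 0.0], [0.5, 0.0]])
```

Only the first case scores well: the objective is high when goals are both varied and actually achieved, which is the sense in which it resembles empowerment.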

Conclusions

- FSM might be a useful principle for understanding/generating a wide range of interesting behaviour.

- Calculating useful things with these frameworks is hard - but not impossible.

- Very general applicability - can apply to any agent-environment interaction.
