Testing Semantic Importance via Betting

Jeremias Sulam

Interpretability in Modern AI: Foundations, Methods, and Emerging Directions

UCSD 2026

"The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin. [...] Seeking an improvement that makes a difference in the shorter term, researchers seek to leverage their human knowledge of the domain, but the only thing that matters in the long run is the leveraging of computation. [...]

We want AI agents that can discover like we can, not which contain what we have discovered." (Rich Sutton, "The Bitter Lesson", 2019)

What parts of the image are important for this prediction?

What are the subsets of the input so that

Interpretability in Image Classification

- Sensitivity or gradient-based perturbations: LIME [Ribeiro et al, '16], CAM [Zhou et al, '16], Grad-CAM [Selvaraju et al, '17]
- Shapley coefficients: SHAP [Lundberg & Lee, '17], ...
- Variational formulations: RDE [Macdonald et al, '19], ...
- Counterfactual & causal explanations: [Sani et al, 2020], [Singla et al, '19], ...


- Adebayo et al, Sanity checks for saliency maps, 2018
- Ghorbani et al, Interpretation of neural networks is fragile, 2019
- Shah et al, Do input gradients highlight discriminative features? 2021


Post-hoc Interpretability Methods

Interpretable by construction


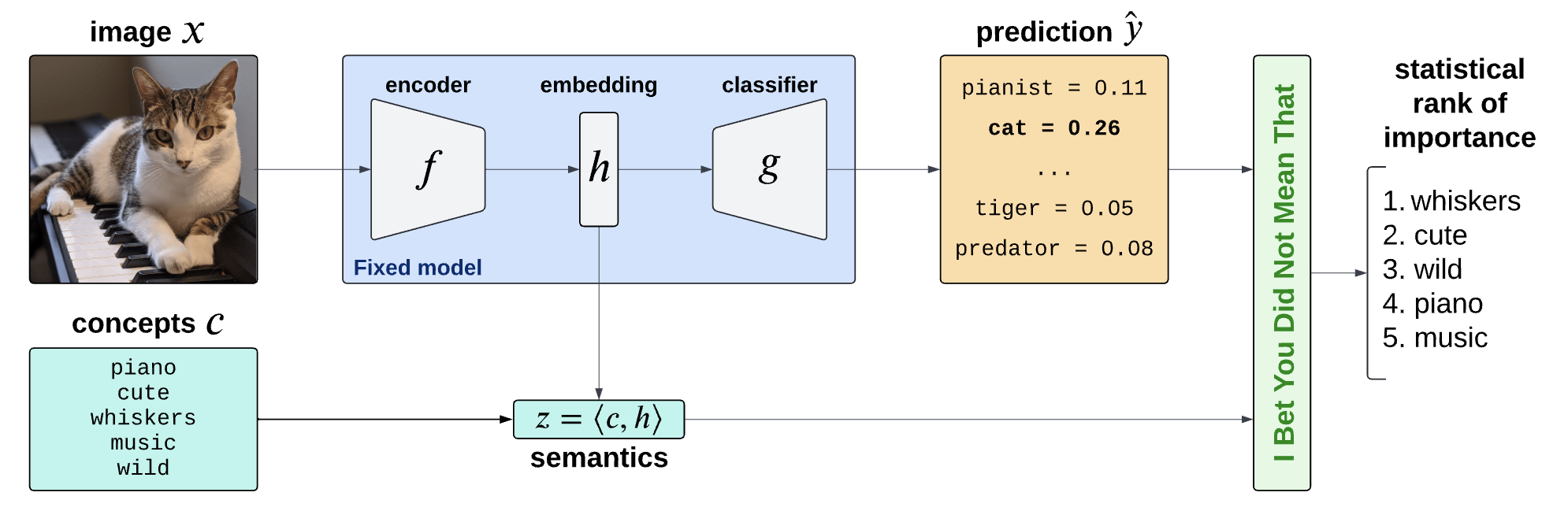

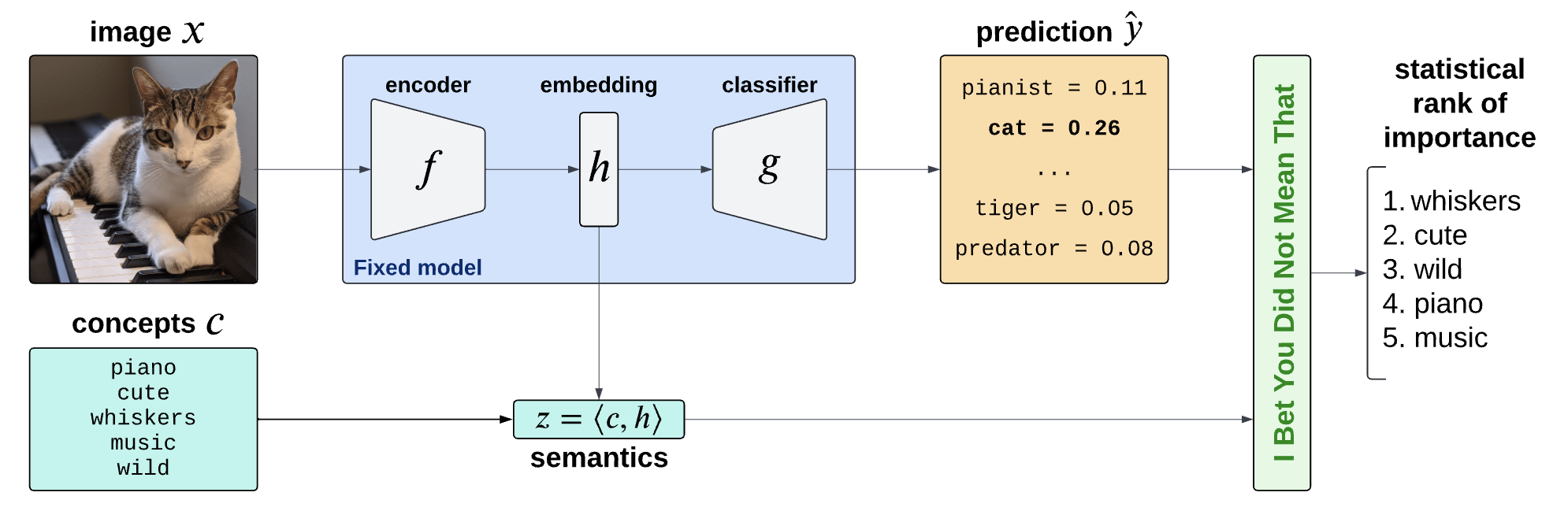

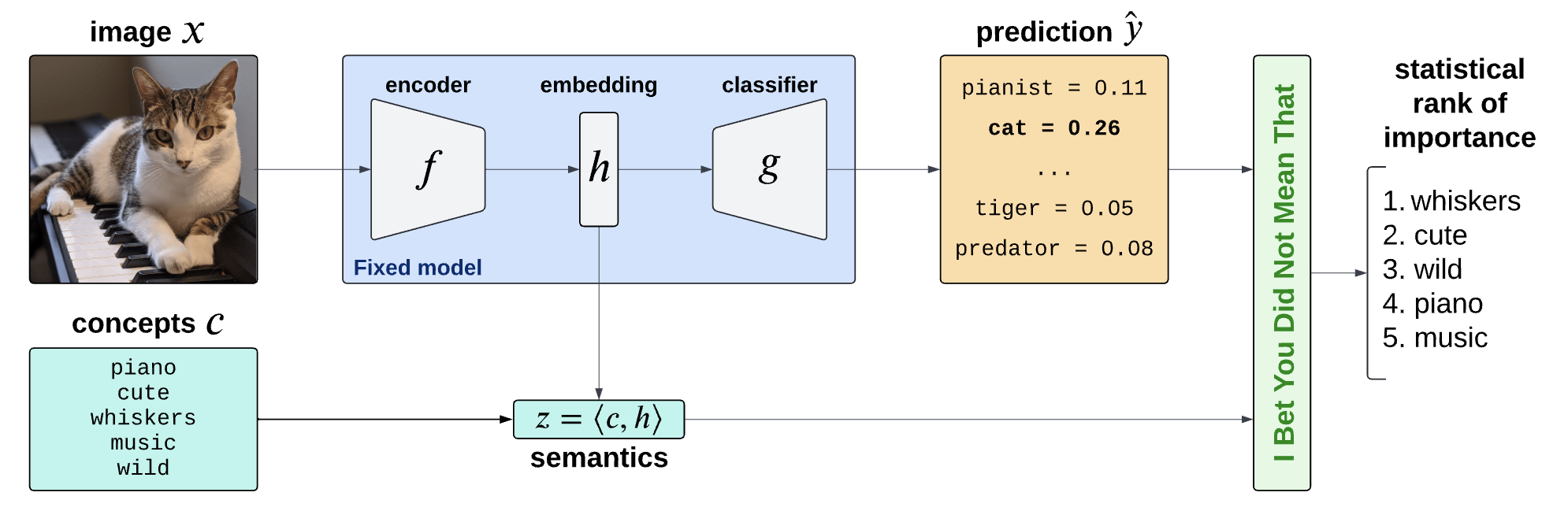

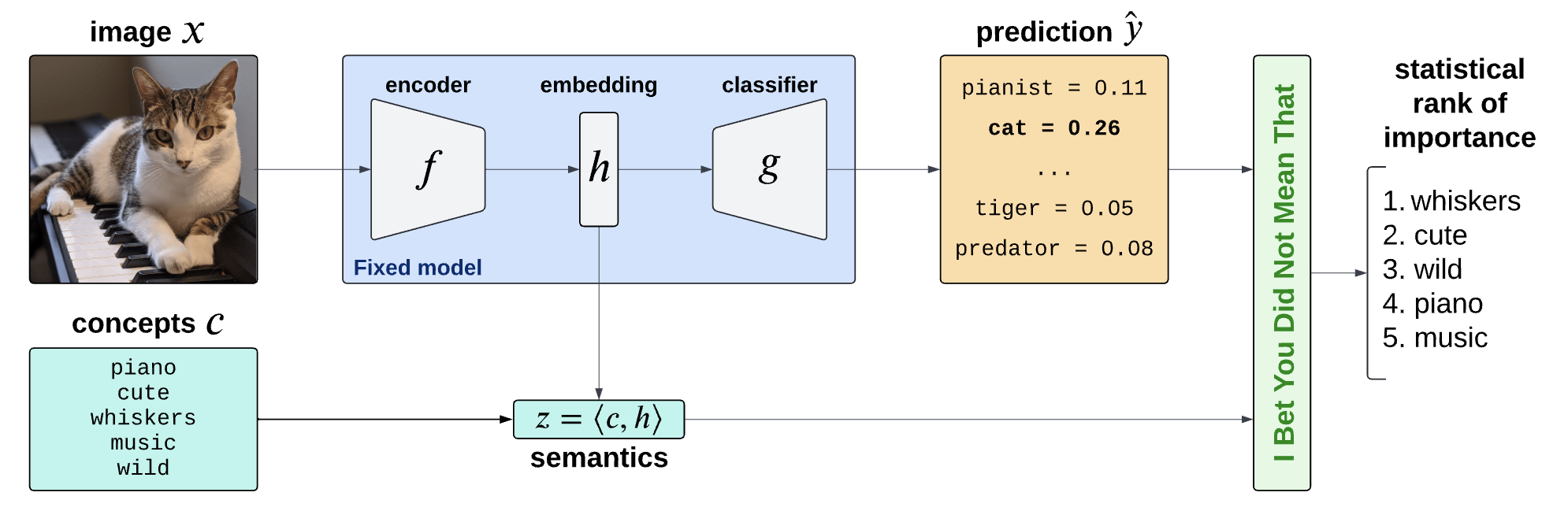

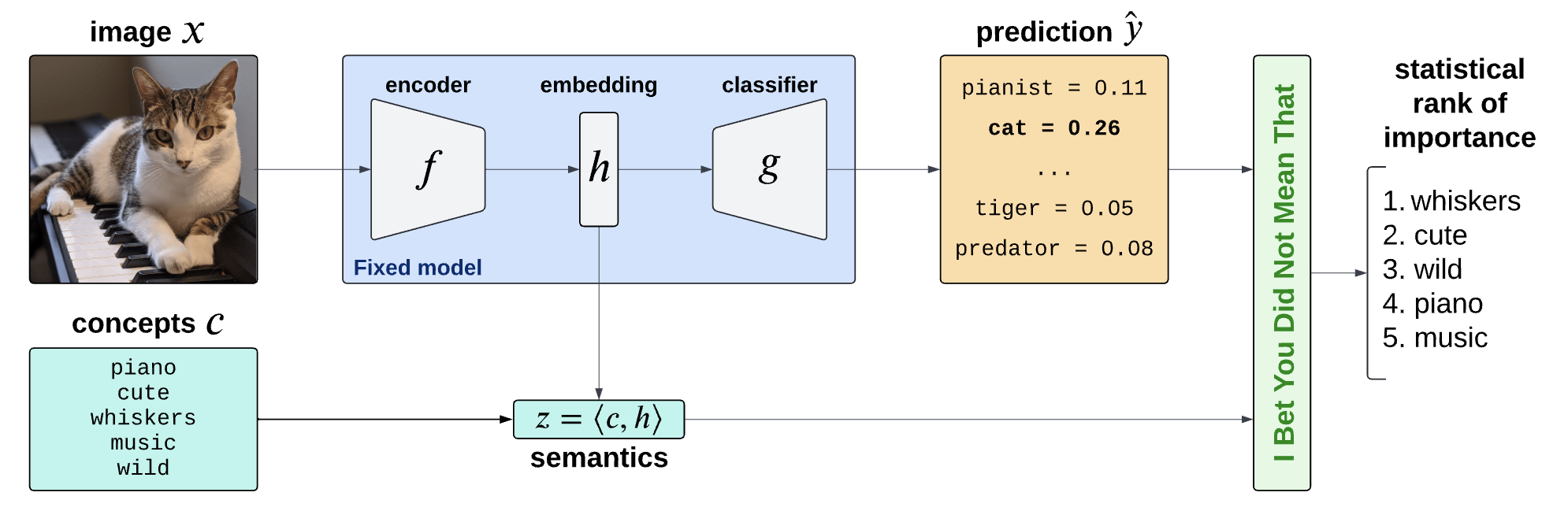

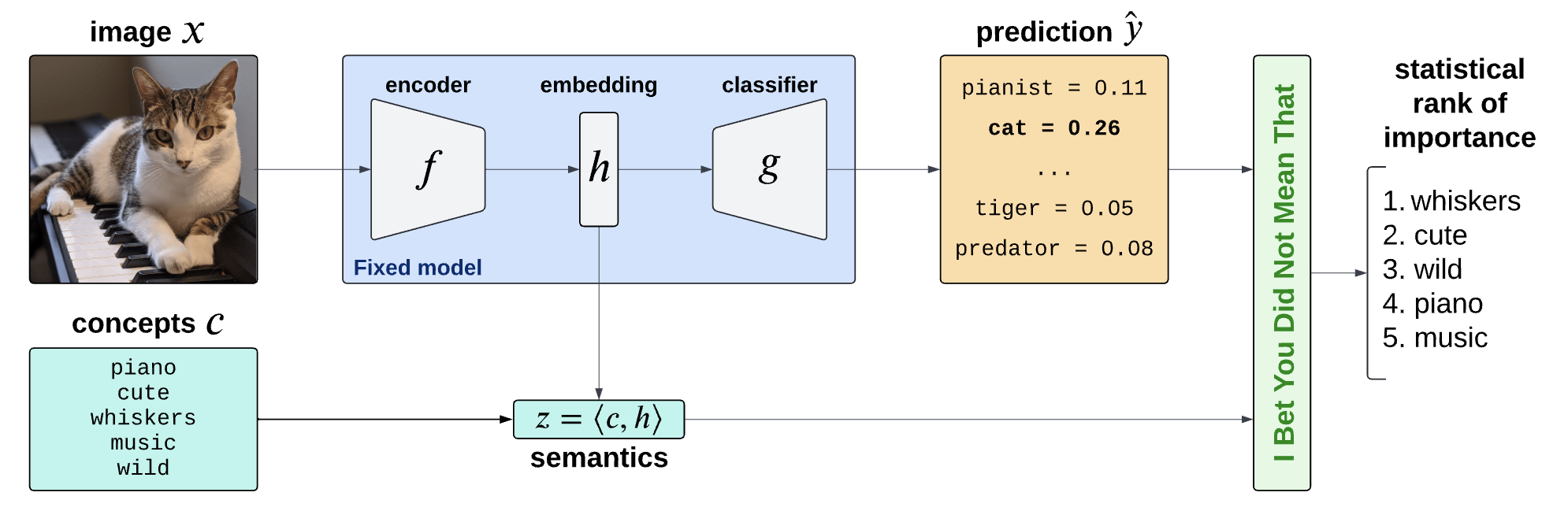

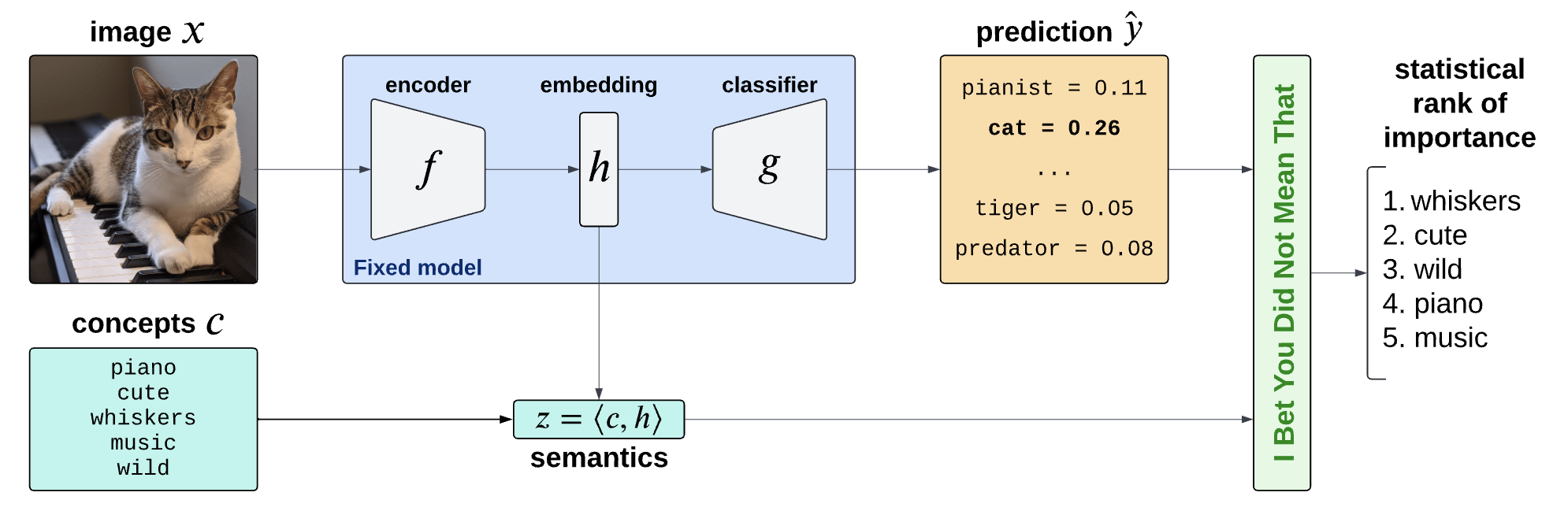

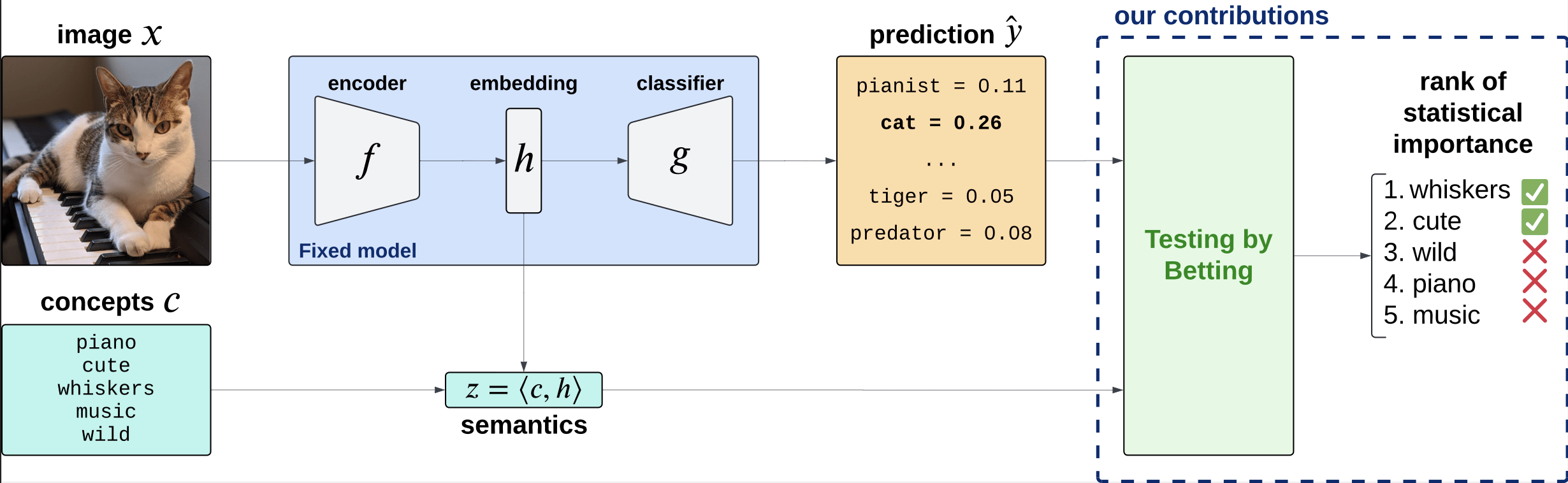

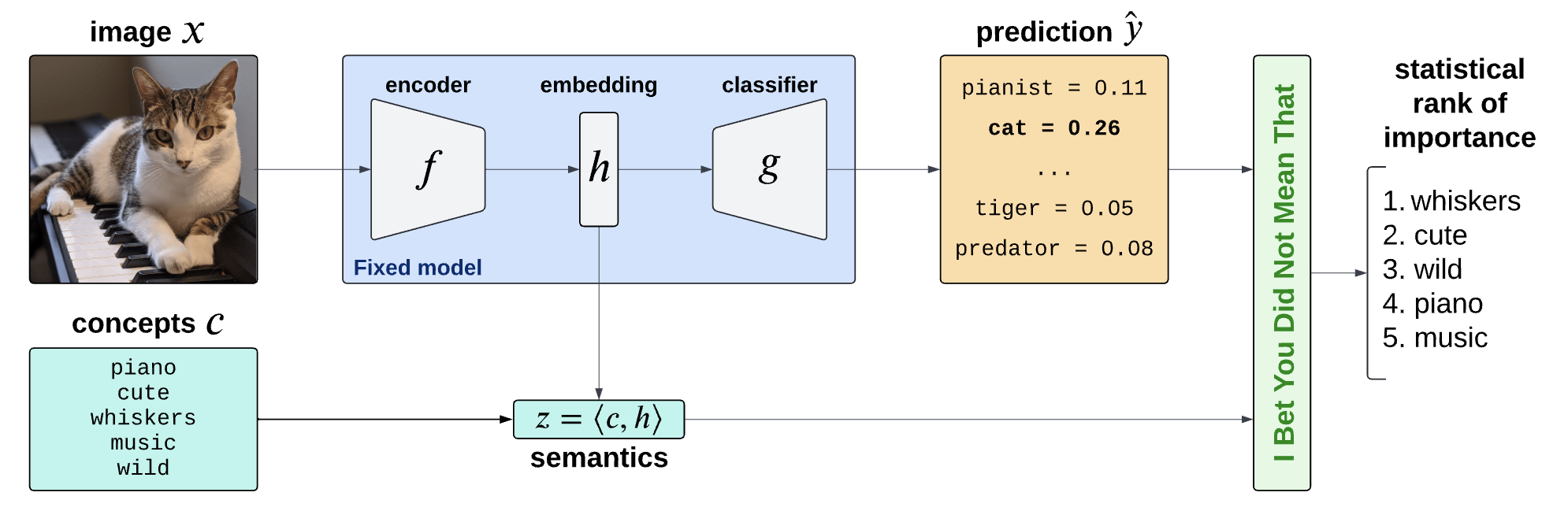

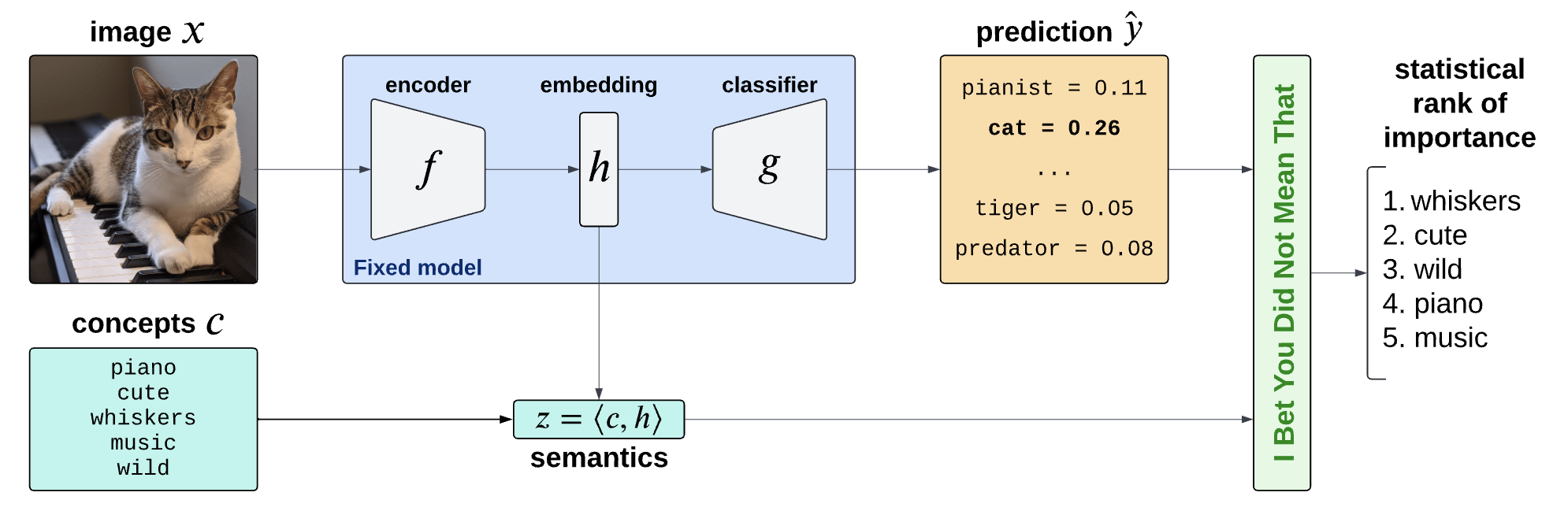

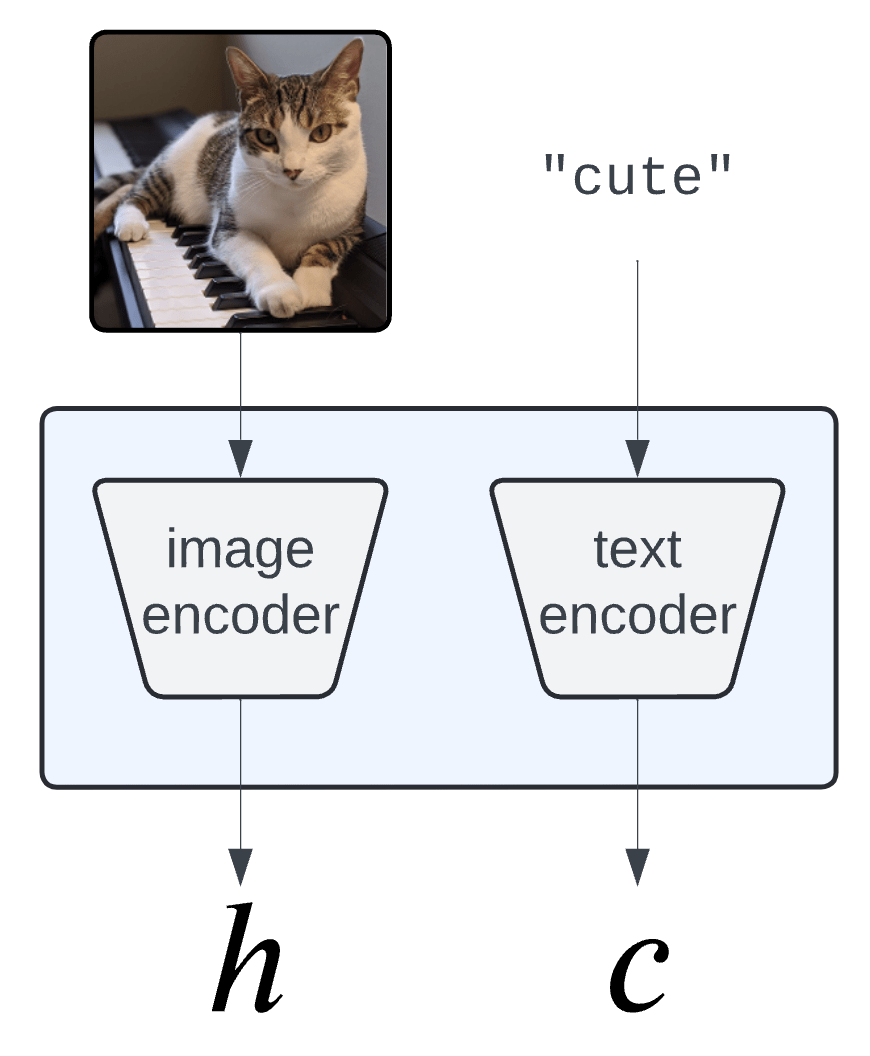

Is the piano important for \(\hat Y = \text{cat}\)?

How can we explain black-box predictors with semantic features?

Is the piano important for \(\hat Y = \text{cat}\), given that there is a cute mammal in the image?

Semantic Interpretability of classifiers

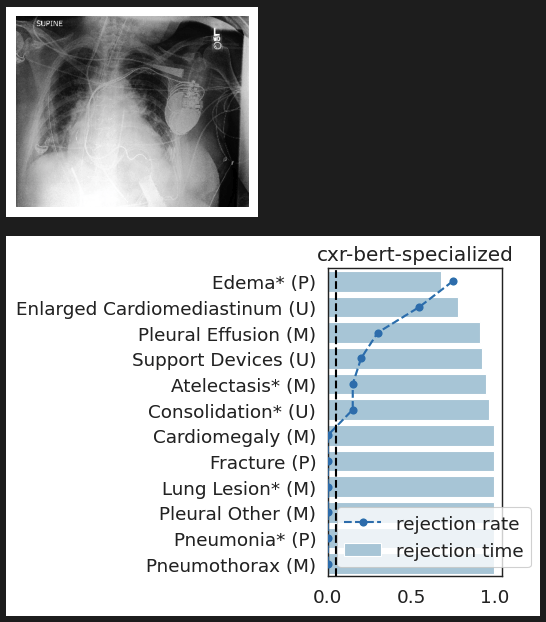

Is the presence of \(\color{Blue}\texttt{edema}\) important for \(\hat Y = \text{lung opacity}\)?


Is the presence of \(\color{magenta}\texttt{devices}\) important for \(\hat Y = \texttt{lung opacity}\), given that there is \(\color{blue}\texttt{edema}\) in the image?

(classes: lung opacity, cardiomegaly, fracture, no finding)


Semantic Interpretability of classifiers

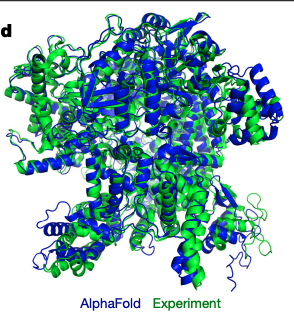

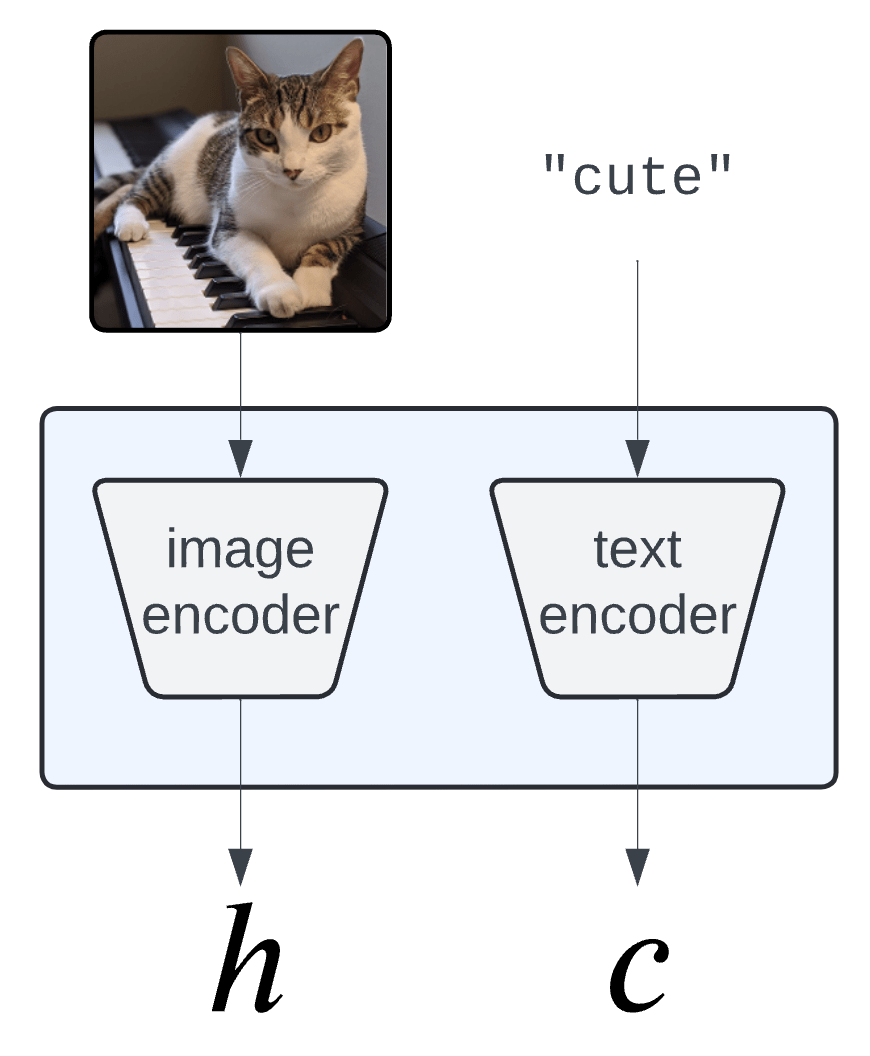

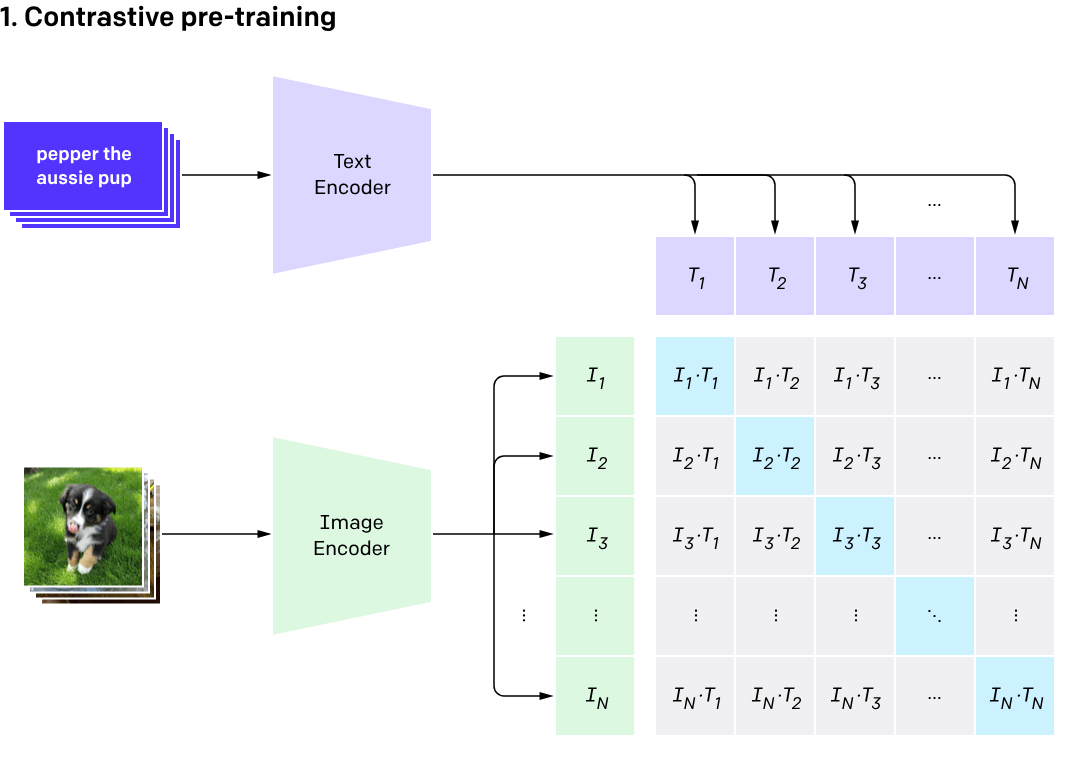

Embeddings: \(H = f(X) \in \mathbb R^d\)

Concept Bank: \(C = [c_1, c_2, \dots, c_m] \in \mathbb R^{d\times m}\)


Semantics: \(Z = C^\top H \in \mathbb R^m\)

Concept Activation Vectors (Kim et al, 2018), e.g. \(c_\text{cute}\)
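As a minimal sketch of the projection \(Z = C^\top H\), assuming NumPy and random stand-ins for both the embedding \(H = f(X)\) and the concept bank \(C\) (in practice these come from the encoder and from, e.g., concept activation vectors or text embeddings), the semantics are a single matrix-vector product. Normalizing each concept vector to unit length is our assumption, not something stated on the slide.

```python
import numpy as np

rng = np.random.default_rng(0)
d, m = 512, 4  # embedding dimension and number of concepts (illustrative values)

# Hypothetical stand-ins: one embedding H = f(X) and a concept bank C (d x m).
H = rng.standard_normal(d)
C = rng.standard_normal((d, m))
C /= np.linalg.norm(C, axis=0, keepdims=True)  # unit-norm concept vectors (assumption)

# Semantics: Z = C^T H, one score per concept.
Z = C.T @ H
print(Z.shape)  # (4,)
```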

Semantic Interpretability of classifiers

Vision-language models (CLIP, BLIP, etc.)

Concept Bank:

\(C = [c_1, c_2, \dots, c_m] \in \mathbb R^{d\times m}\)

Semantic Interpretability of classifiers

[Bhalla et al, "SpLiCE", 2024]

Concept Bottleneck Models (CBMs) [Koh et al '20, Yang et al '23, Yuan et al '22]

\(\tilde{Y} = \hat w^\top Z\), where \(\hat w_j\) is the importance of the \(j^{th}\) concept

- Need to engineer a (large) concept bank
- Performance hit w.r.t. the original predictor
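A minimal sketch of a linear concept bottleneck \(\tilde Y = \hat w^\top Z\), assuming synthetic concept scores, hypothetical concept names, and an ordinary least-squares fit (actual CBMs may use other heads and losses, e.g. sparse or logistic). It shows how each coefficient \(\hat w_j\) is read as the importance of concept \(j\).

```python
import numpy as np

rng = np.random.default_rng(0)
n, m = 1000, 3
concepts = ["cute", "piano", "whiskers"]  # hypothetical concept names

Z = rng.standard_normal((n, m))                 # concept scores Z for n images (synthetic)
w_true = np.array([2.0, 0.0, -1.0])             # synthetic ground-truth weights
y = Z @ w_true + 0.1 * rng.standard_normal(n)   # target the bottleneck must fit

# Fit the linear head  Y~ = w^T Z  by ordinary least squares.
w_hat, *_ = np.linalg.lstsq(Z, y, rcond=None)
for name, wj in zip(concepts, w_hat):
    print(f"{name}: {wj:+.2f}")  # w_hat[j] is read as the importance of concept j
```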

Desiderata: Semantic explanations with

- Fixed original predictor (post-hoc)
- Global and local importance notions
- Testing for any concepts (no need for large concept banks)
- Precise testing with guarantees (Type 1 error/FDR control)

Precise notions of importance

Global Feature Importance: \(H_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j \)

(diagram: concept "cuteness" and prediction "cat")

Global Conditional Importance: \(H_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j \mid Z_{-j}\)

(diagram: concept "cuteness" and prediction "cat", conditioned on the other concepts: mammal, piano, whiskers, ...)
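To see why the two nulls differ, here is a small simulation under an assumed linear-Gaussian toy model (all variables hypothetical): "cuteness" \(Z_j\) is driven by a "mammal" score \(Z_{-j}\), and the prediction depends on "mammal" only. The marginal correlation between \(\hat Y\) and \(Z_j\) is clearly nonzero, so the global null fails, while the partial correlation given \(Z_{-j}\) is near zero, consistent with the conditional null. Partial correlation is only a valid proxy for conditional independence in such linear-Gaussian settings, which is one reason general tests are needed.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20_000
mammal = rng.standard_normal(n)          # Z_{-j}: "mammal" score (toy model)
cute = mammal + rng.standard_normal(n)   # Z_j: "cuteness", driven by mammal
y_hat = mammal + rng.standard_normal(n)  # prediction depends on mammal only

def corr(a, b):
    return np.corrcoef(a, b)[0, 1]

def residual(a, b):
    """Residual of a after least-squares regression on b (with intercept)."""
    B = np.column_stack([np.ones_like(b), b])
    coef, *_ = np.linalg.lstsq(B, a, rcond=None)
    return a - B @ coef

marginal = corr(y_hat, cute)                                     # relates to Y ⊥ Z_j
partial = corr(residual(y_hat, mammal), residual(cute, mammal))  # proxy for Y ⊥ Z_j | Z_{-j}
print(f"marginal {marginal:.2f}, partial {partial:.2f}")
```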

Precise notions of importance

Global Conditional Importance

\(H_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j | Z_{-j}\)

Testing

Procedure

(at level \(\alpha\))

Reject

Fail to Reject

( \(Z_j:\) important )

Precise notions of importance

Global Conditional Importance

\(H_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j | Z_{-j}\)

Testing

Procedure

(at level \(\alpha\))

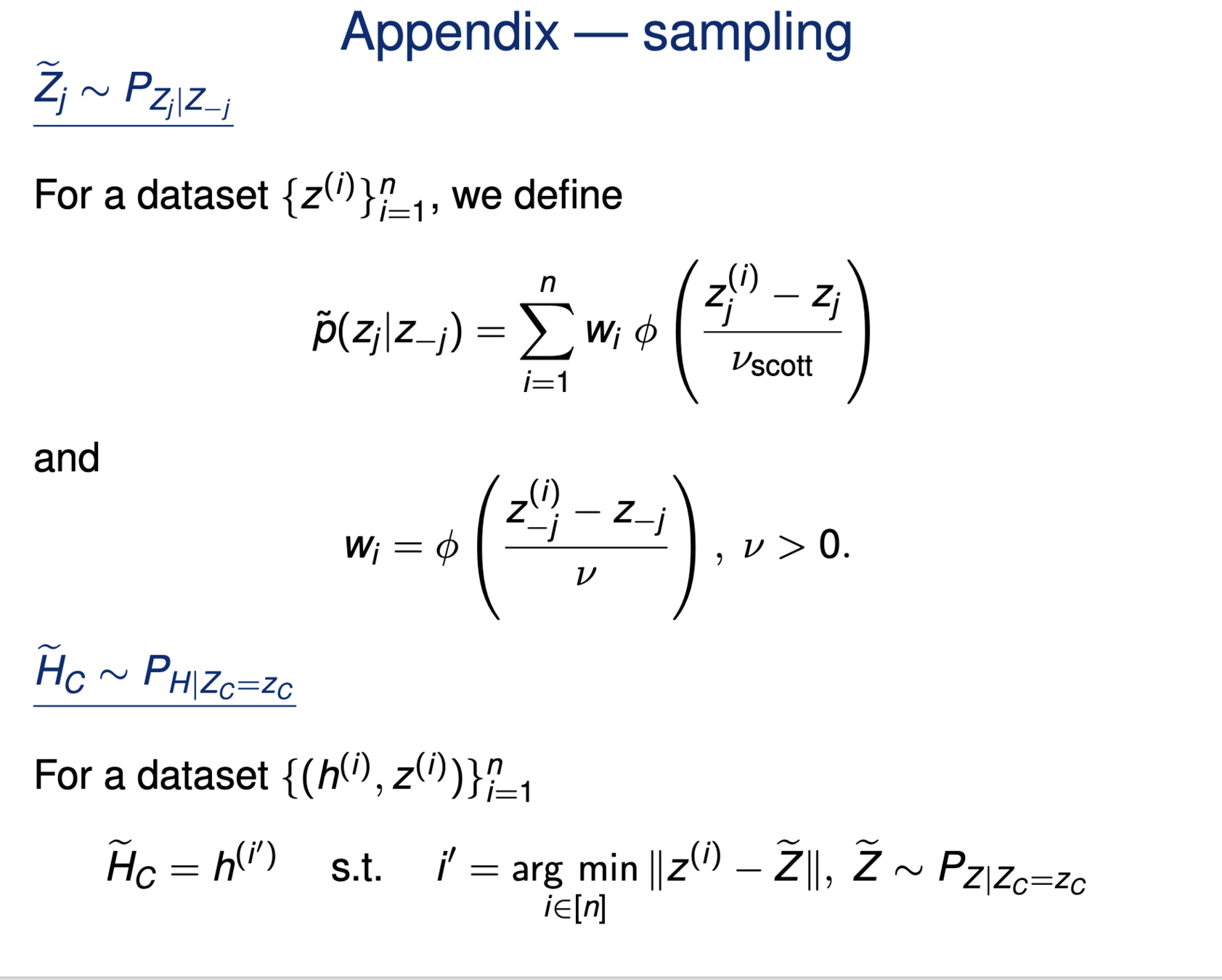

[Candes et al, 2018]

\(\rightarrow\) Conditional Randomization Test (CRT)

requiring \(Z_j \sim P_{Z_j|Z_{-j}}\)

Correct testing: \(\mathbb P [\text{rejecting} | H_{0,j}:\text{true}]\leq \alpha\)

Reject

Fail to Reject

( \(Z_j:\) important )

Precise notions of importance

Global Conditional Importance

\(H_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j | Z_{-j}\)

Reject

Fail to Reject

Testing

Procedure

(at level \(\alpha\))

[Candes et al, 2018]

\(\rightarrow\) Conditional Randomization Test (CRT)

requiring \(Z_j \sim P_{Z_j|Z_{-j}}\)

\(\quad \hat{Y}|(Z_j,Z_{-j}) \overset{d}{=} \hat{Y}|Z_{-j}\)

Correct testing: \(\mathbb P [\text{rejecting} | H_{0,j}:\text{true}]\leq \alpha\)

( \(Z_j:\) important )
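The CRT logic can be sketched as follows, assuming the conditional \(P_{Z_j|Z_{-j}}\) is known and samplable (here a toy model \(Z_j \mid Z_{-j} \sim \mathcal N(Z_{-j}, 1)\)) and using the absolute correlation between \(\hat Y\) and \(Z_j\) as an illustrative test statistic. The helper names are hypothetical. Resampling \(Z_j\) from its conditional yields draws of the statistic under \(H_{0,j}\), and the rank-based p-value is valid for any choice of statistic.

```python
import numpy as np

def crt_pvalue(y, z_j, z_rest, sample_cond, stat, n_resamples=200, rng=None):
    """Conditional randomization test p-value (sketch).

    sample_cond(z_rest, rng) must draw Z_j ~ P_{Z_j | Z_{-j}}; here it is assumed known.
    """
    rng = np.random.default_rng() if rng is None else rng
    t_obs = stat(y, z_j, z_rest)
    t_null = np.array([stat(y, sample_cond(z_rest, rng), z_rest) for _ in range(n_resamples)])
    # Finite-sample valid p-value: rank of the observed statistic among the resamples.
    return (1 + np.sum(t_null >= t_obs)) / (n_resamples + 1)

def sample_cond(z_rest, rng):
    """Toy conditional (assumption): Z_j | Z_{-j} ~ N(Z_{-j}, 1)."""
    return z_rest + rng.standard_normal(z_rest.shape)

def abs_corr(y, z_j, z_rest):
    return abs(np.corrcoef(y, z_j)[0, 1])

rng = np.random.default_rng(0)
n = 500
z_rest = rng.standard_normal(n)
z_j = sample_cond(z_rest, rng)
y_null = z_rest + 0.5 * rng.standard_normal(n)  # H_{0,j} true: Y depends on Z_{-j} only
y_alt = z_j + 0.5 * rng.standard_normal(n)      # H_{0,j} false: Y depends on Z_j

p_null = crt_pvalue(y_null, z_j, z_rest, sample_cond, abs_corr, rng=rng)
p_alt = crt_pvalue(y_alt, z_j, z_rest, sample_cond, abs_corr, rng=rng)
print(p_null, p_alt)
```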

Local Conditional Importance: "\(\hat{Y}(x) \perp\!\!\!\perp Z_j(x) \mid Z_{-j}(x)\)", where \(Z_j(x)\) is semantic \(j\) in \(x\) and \(Z_{-j}(x)\) are all other semantics \(\{-j\}\) in \(x\)

Precise notions of importance

Local Conditional Importance

Semantically-conditioned features (which we can sample): \(\tilde H_S \sim P_{H|Z_S = z_S} \)

\[H^{j,S}_0:~ \text{classifier}({\tilde H_{S \cup \{j\}}}) \overset{d}{=} \text{classifier}(\tilde H_S)\]

where \(\tilde H_{S \cup \{j\}}\) are features with semantic \(j\) (and those in \(S\)) as in \(x\), and \(\tilde H_S\) are features with semantics \(S \subseteq \{-j\}\) as in \(x\)

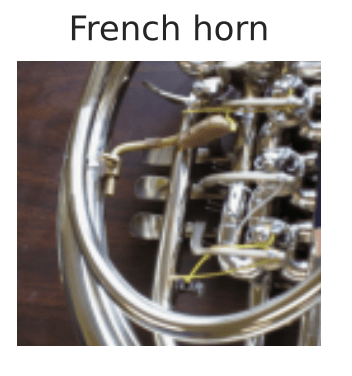

Precise notions of importance

"The classifier (its distribution) does not change whether we condition on concepts \(S\) or on concepts \(S\cup\{j\}\)"

eXplanation Randomization Test (XRT) [Teneggi et al, 2023]

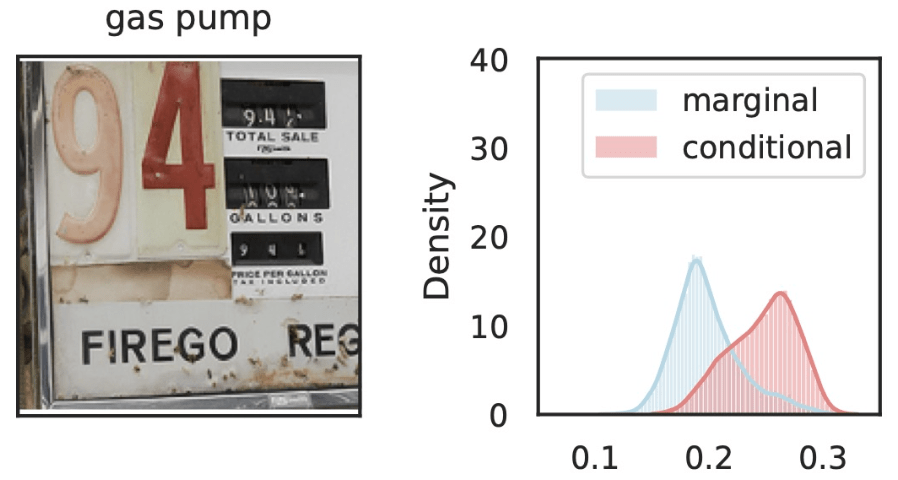

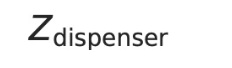

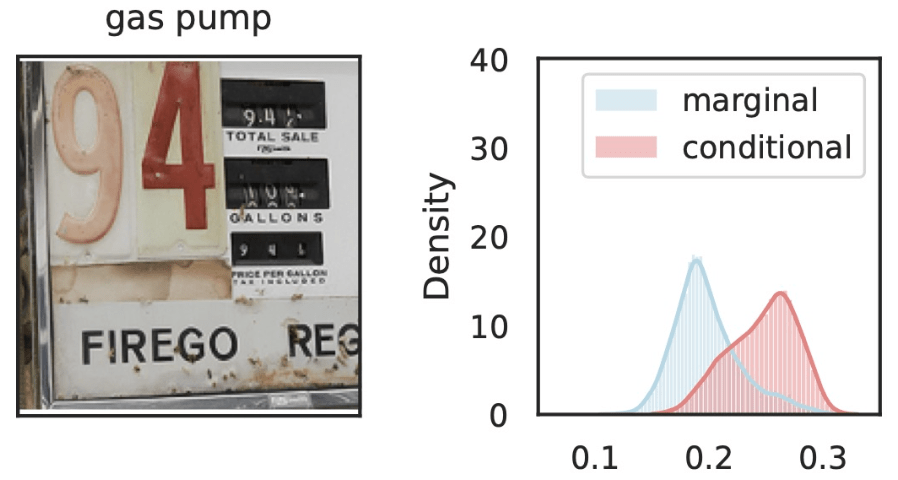

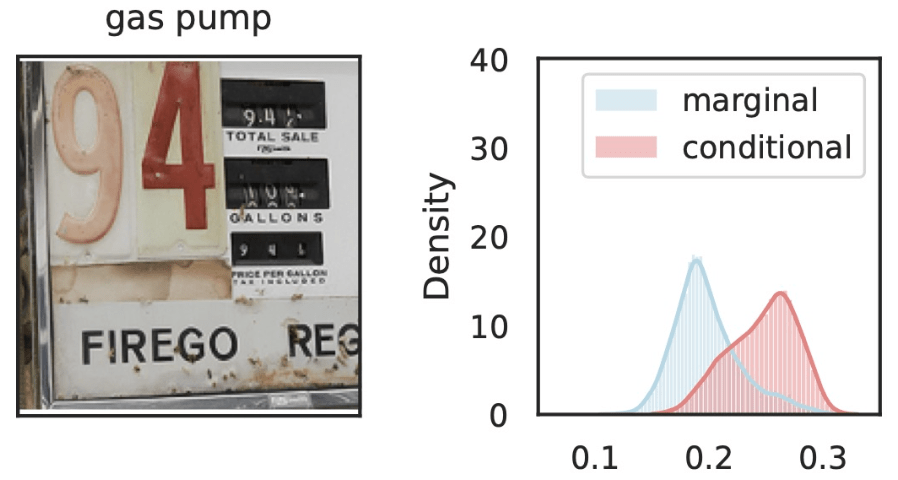

(figure: distribution of \(\hat{Y}_\text{gas pump}\) when conditioning on \(Z_S\) versus \(Z_S\cup Z_j\))

Local Conditional Importance

\(\tilde{Z}_S = [z_\text{text}, z_\text{old}, Z_\text{dispenser}, Z_\text{trumpet}, Z_\text{fire}, \dots ] \), with \(S = \{\text{text}, \text{old}\}\)

\(\tilde{Z}_{S\cup j} = [z_\text{text}, z_\text{old}, z_\text{dispenser}, Z_\text{trumpet}, Z_\text{fire}, \dots ] \), with \(j = \text{dispenser}\)

(lowercase \(z\): value observed in \(x\); uppercase \(Z\): resampled)

(figure: distribution of \(\hat{Y}_\text{gas pump}\) under \(Z_S\) versus \(Z_S\cup Z_j\), for \(j = \text{dispenser}\) and \(j = \text{trumpet}\))

\(\tilde{Z}_S = [z_\text{text}, z_\text{old}, Z_\text{dispenser}, Z_\text{trumpet}, Z_\text{fire}, \dots ] \)

\(\tilde{Z}_{S\cup j} = [z_\text{text}, z_\text{old}, Z_\text{dispenser}, z_\text{trumpet}, Z_\text{fire}, \dots ] \), with \(S = \{\text{text}, \text{old}\}\) and \(j = \text{trumpet}\)
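A minimal sketch of the two conditional distributions compared by the local null \(H^{j,S}_0\), under a strong simplifying assumption: the embeddings are \(H \sim \mathcal N(0, I_d)\), so \(P_{H \mid C_S^\top H = z_S}\) is Gaussian and can be sampled exactly by Gaussian conditioning. The concept bank, the embedding of \(x\), and the toy linear classifier are all hypothetical, and the comparison below (spread of the classifier outputs) is an illustration, not the XRT statistic of Teneggi et al. Because this toy classifier only reads concept 2, fixing \(z_2\) collapses its output distribution.

```python
import numpy as np

def sample_h_given_z(C_S, z_S, n, rng):
    """Draw H ~ P_{H | C_S^T H = z_S}, assuming H ~ N(0, I_d) (Gaussian conditioning)."""
    d = C_S.shape[0]
    G_inv = np.linalg.inv(C_S.T @ C_S)
    mean = C_S @ G_inv @ z_S              # component fixed by the observed semantics
    P = C_S @ G_inv @ C_S.T               # projector onto span(C_S)
    E = rng.standard_normal((n, d))
    return mean + E @ (np.eye(d) - P)     # free component, orthogonal to span(C_S)

rng = np.random.default_rng(0)
d, m = 16, 4
C = rng.standard_normal((d, m))           # hypothetical concept bank
x_emb = rng.standard_normal(d)            # embedding of the image x being explained
z = C.T @ x_emb                           # its observed semantics z

w = C[:, 2]                               # toy classifier that only "looks at" concept 2

def classifier(H):
    return 1 / (1 + np.exp(-H @ w))

S, j = [0, 1], 2
H_S = sample_h_given_z(C[:, S], z[S], 5000, rng)               # ~ H~_S
H_Sj = sample_h_given_z(C[:, S + [j]], z[S + [j]], 5000, rng)  # ~ H~_{S ∪ {j}}
print(f"std | S: {classifier(H_S).std():.3f}, std | S+j: {classifier(H_Sj).std():.3f}")
```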

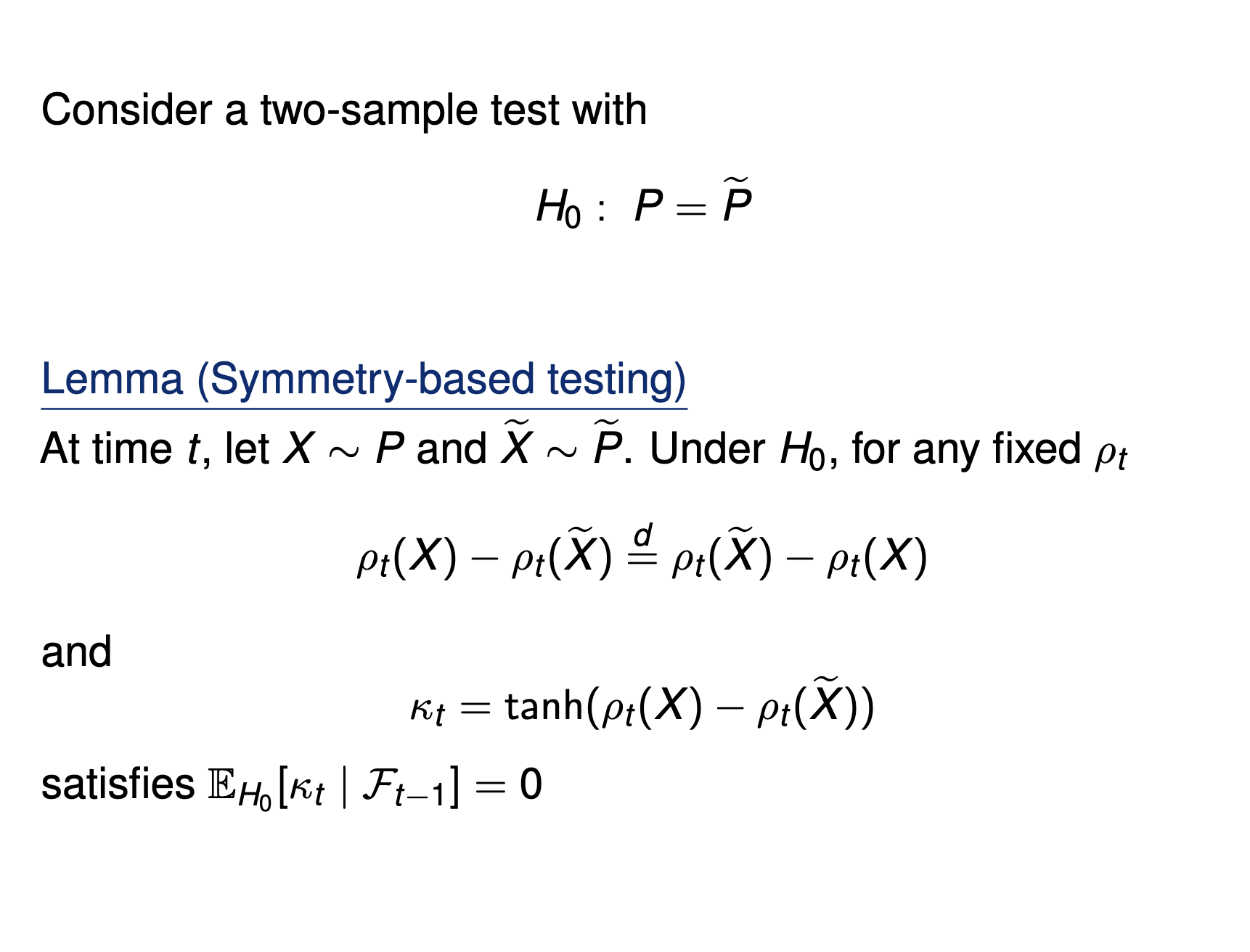

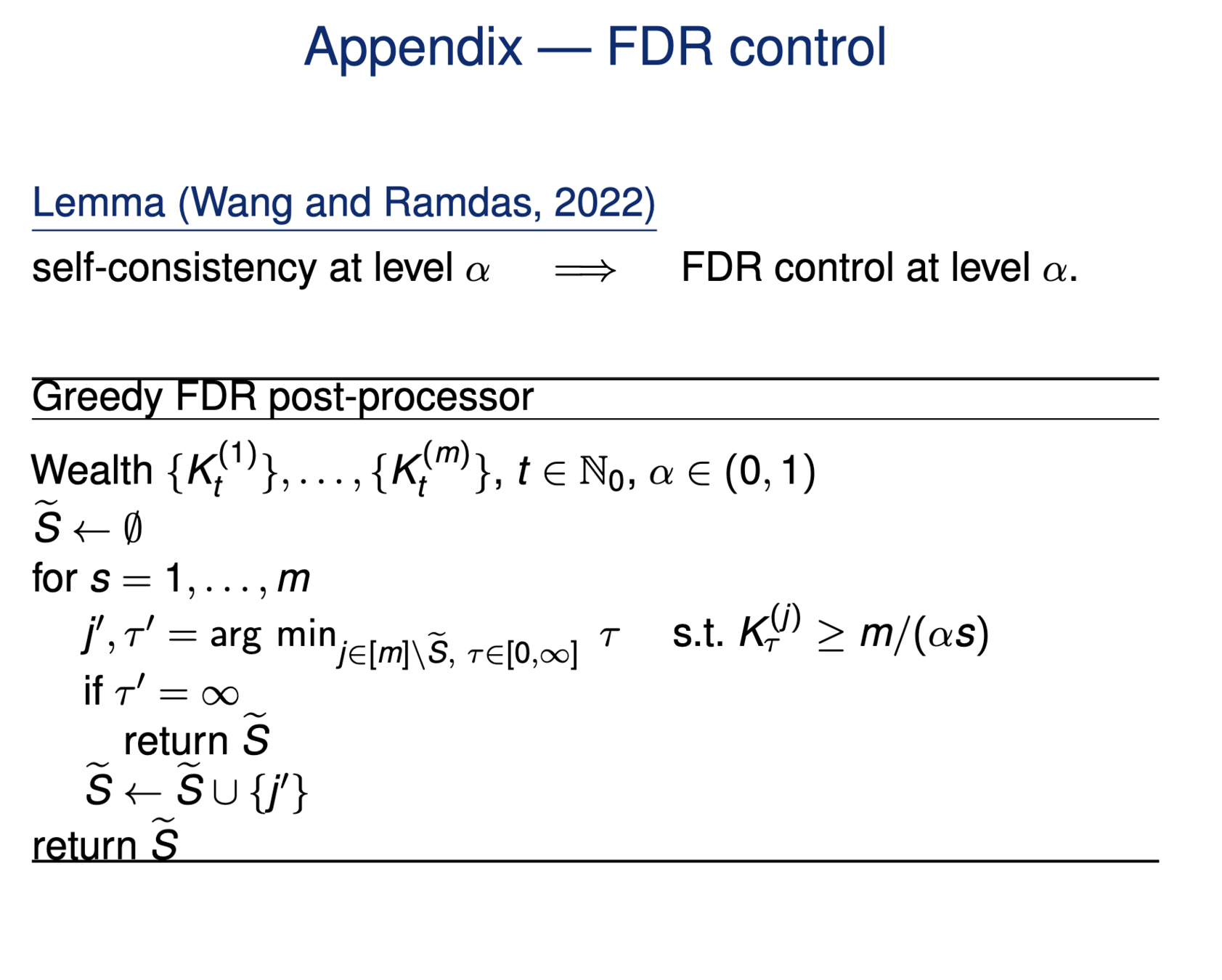

Testing by betting

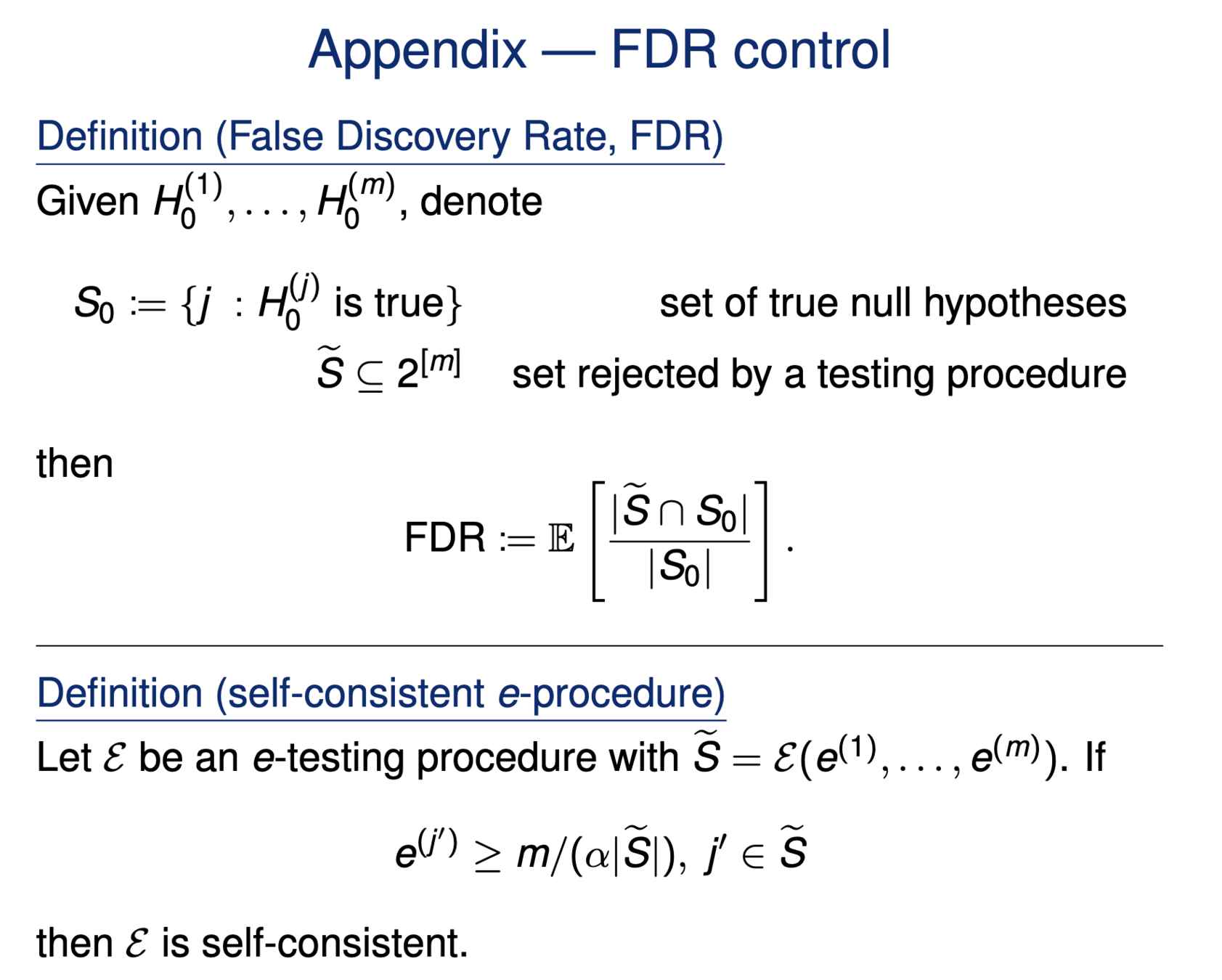

\(H^G_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j \iff P_{\hat{Y},Z_j} = P_{\hat{Y}} \times P_{Z_j}\)

Testing importance via two-sample tests

\(H^{GC}_{0,j} : \hat{Y} \perp\!\!\!\perp Z_j | Z_{-j} \iff P_{\hat{Y}Z_jZ_{-j}} = P_{\hat{Y}\tilde{Z}_j{Z_{-j}}}\)

\(\tilde{Z_j} \sim P_{Z_j|Z_{-j}}\)

[Candes et al, 2018; Shaer et al, 2023]

[Teneggi et al, 2023]

\[H^{j,S}_0:~ \text{classifier}({\tilde H_{S \cup \{j\}}}) \overset{d}{=} \text{classifier}(\tilde H_S), \qquad \tilde H_S \sim P_{H|Z_S = z_S} \]
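One way to turn the global and global-conditional nulls into the two-sample form above is sketched below; the function names are hypothetical and the conditional sampler is assumed to be available. For \(H^G_{0,j}\), consecutive points are paired so one stream keeps \((\hat y, z_j)\) together and the other swaps in \(z_j\) from the next point; for \(H^{GC}_{0,j}\), \(z_j\) is replaced by a draw \(\tilde z_j \sim P_{Z_j|Z_{-j}}\). Under each null the two rows produced at a given step are exchangeable, which is what a betting payoff needs to be fair.

```python
import numpy as np

def global_streams(y_hat, z_j):
    """Joint vs product samples for H^G_{0,j}, pairing consecutive points.

    P-stream: (y_hat[2i], z_j[2i])    ~ P_{Y, Z_j}
    Q-stream: (y_hat[2i], z_j[2i+1])  ~ P_Y x P_{Z_j}
    """
    k = len(y_hat) // 2
    p = np.column_stack([y_hat[0:2 * k:2], z_j[0:2 * k:2]])
    q = np.column_stack([y_hat[0:2 * k:2], z_j[1:2 * k:2]])
    return p, q

def gc_streams(y_hat, z_j, z_rest, sample_cond, rng):
    """Joint vs resampled samples for H^GC_{0,j}; sample_cond draws Z_j ~ P_{Z_j|Z_{-j}} (assumed)."""
    z_tilde = sample_cond(z_rest, rng)
    p = np.column_stack([y_hat, z_j, z_rest])
    q = np.column_stack([y_hat, z_tilde, z_rest])
    return p, q

# Usage on toy data (assumption: Z_j | Z_{-j} ~ N(Z_{-j}, 1)).
rng = np.random.default_rng(0)
n = 10
z_rest = rng.standard_normal(n)
z_j = z_rest + rng.standard_normal(n)
y_hat = z_rest + rng.standard_normal(n)
p, q = global_streams(y_hat, z_j)
p_gc, q_gc = gc_streams(y_hat, z_j, z_rest, lambda zr, r: zr + r.standard_normal(zr.shape), rng)
```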

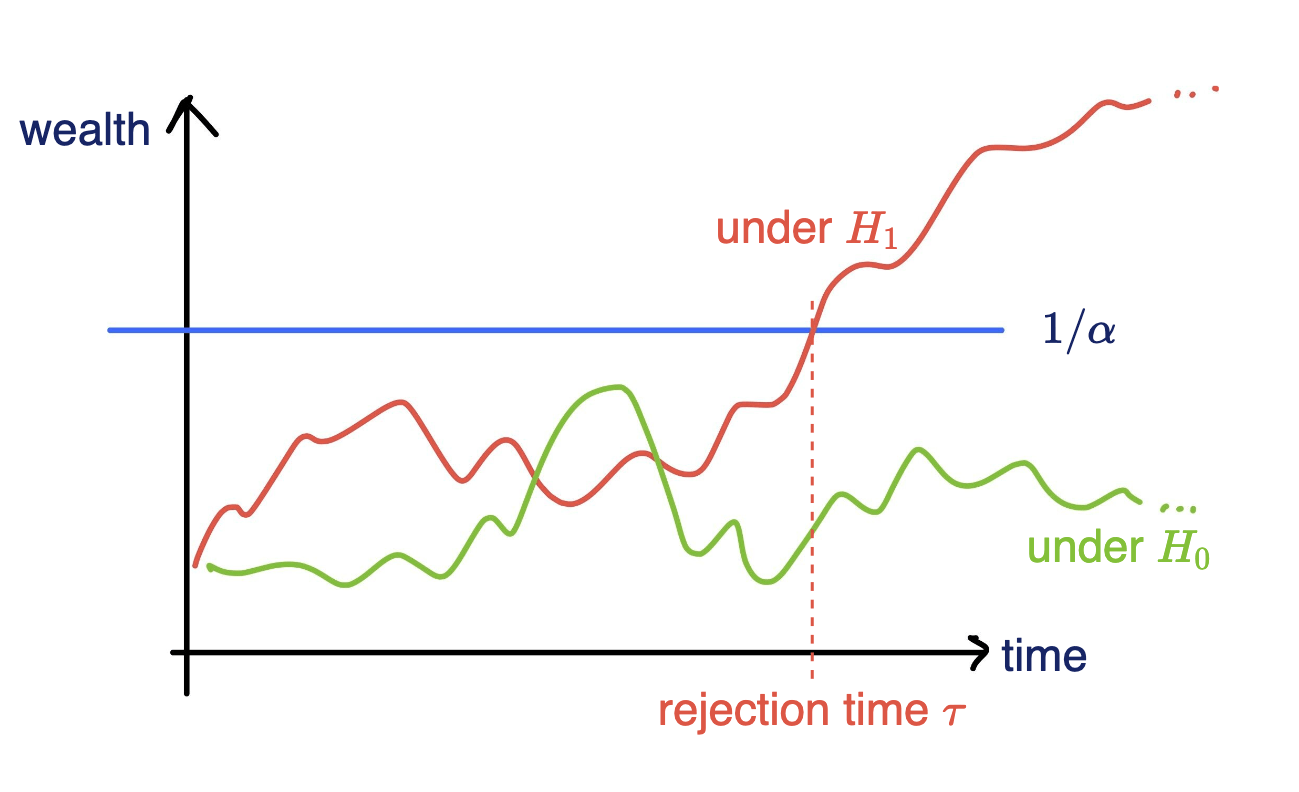

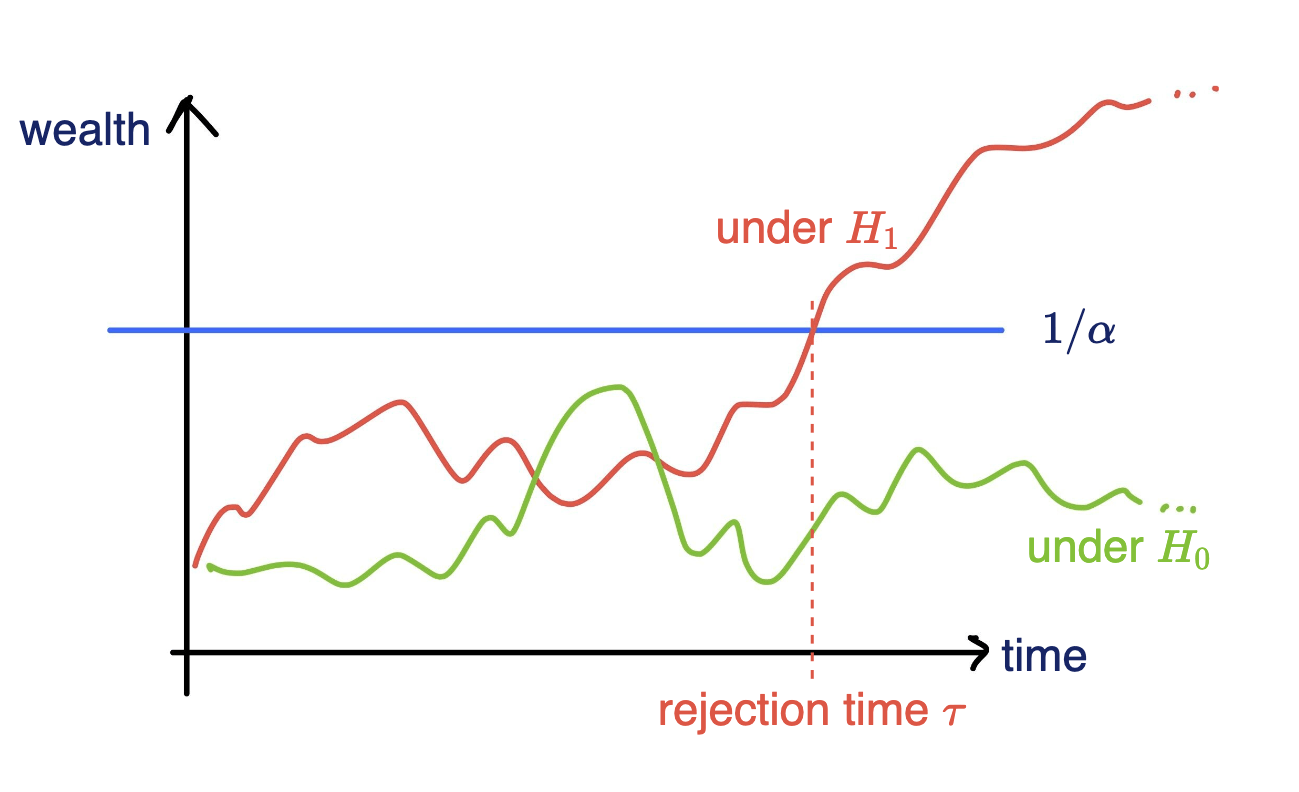

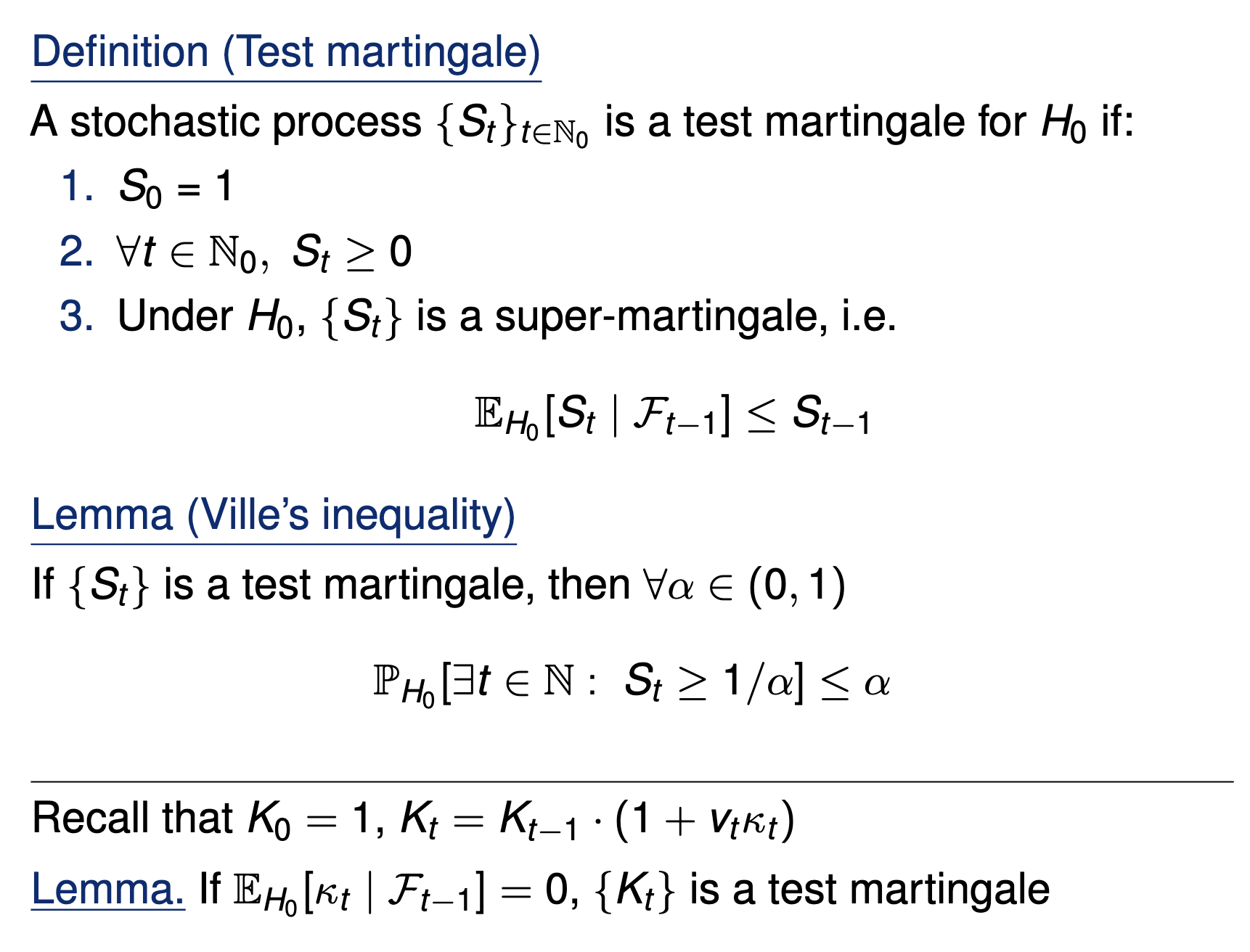

Testing by betting

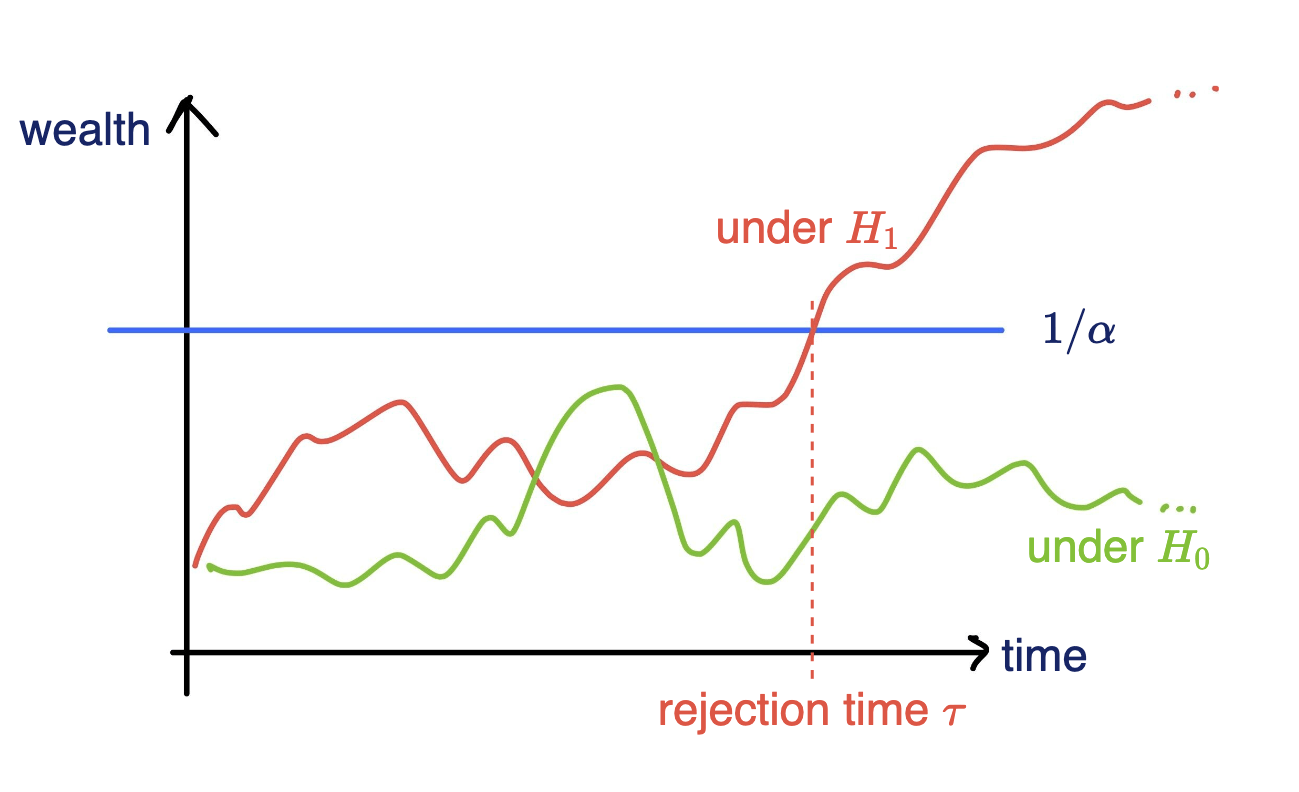

Goal: Test a null hypothesis \(H_0\) at significance level \(\alpha\)

- Standard testing by p-values: collect data, then test, and reject if \(p \leq \alpha\)
- Online testing by e-values: any-time valid inference; monitor online and reject when \(e\geq 1/\alpha\)

[Shaer et al. 2023, Shekhar and Ramdas 2023, Podkopaev et al 2023]

Testing by betting via SKIT (Podkopaev et al., 2023)

Online testing by e-values

Any-time valid inference, track and reject when \(e\geq 1/\alpha\)

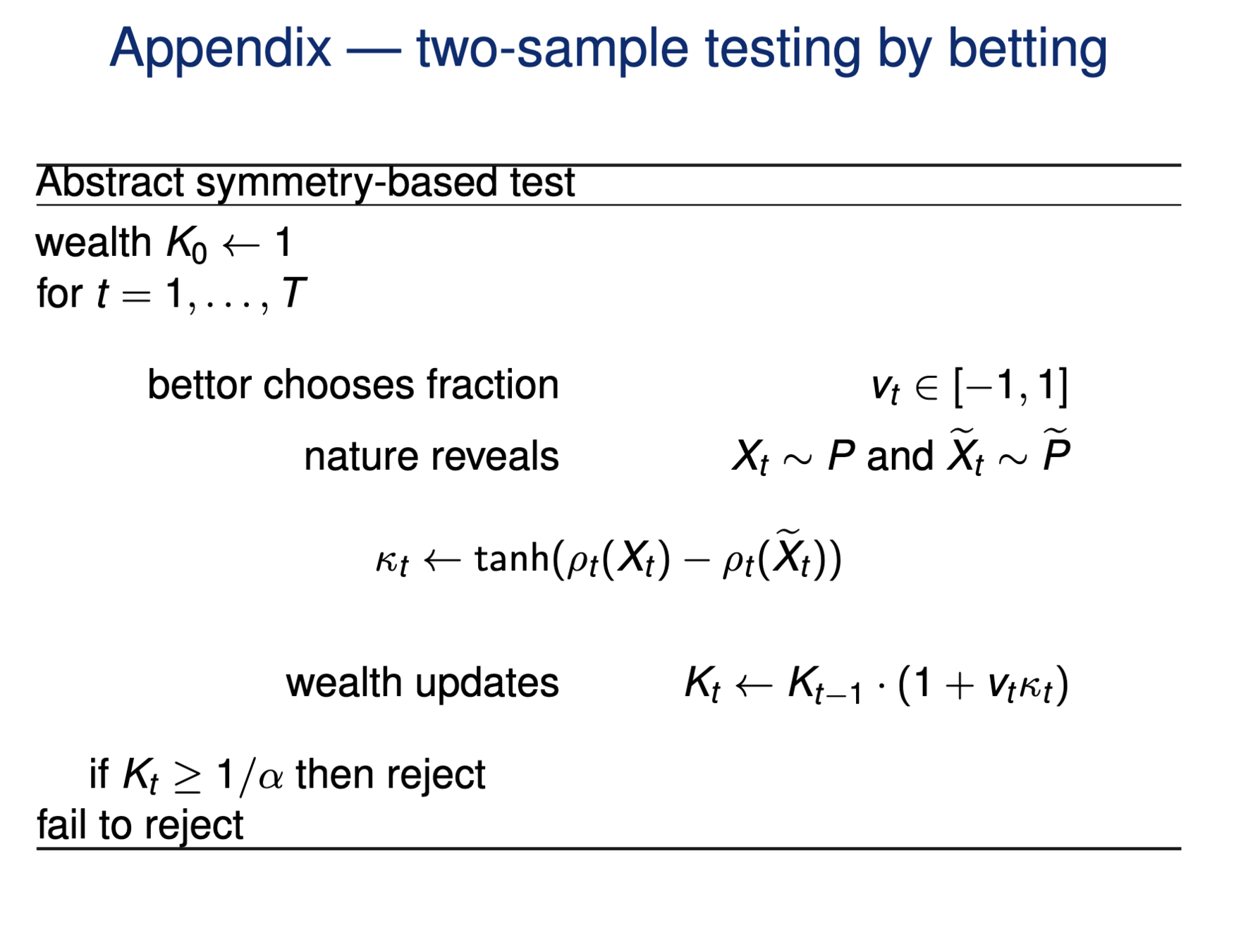

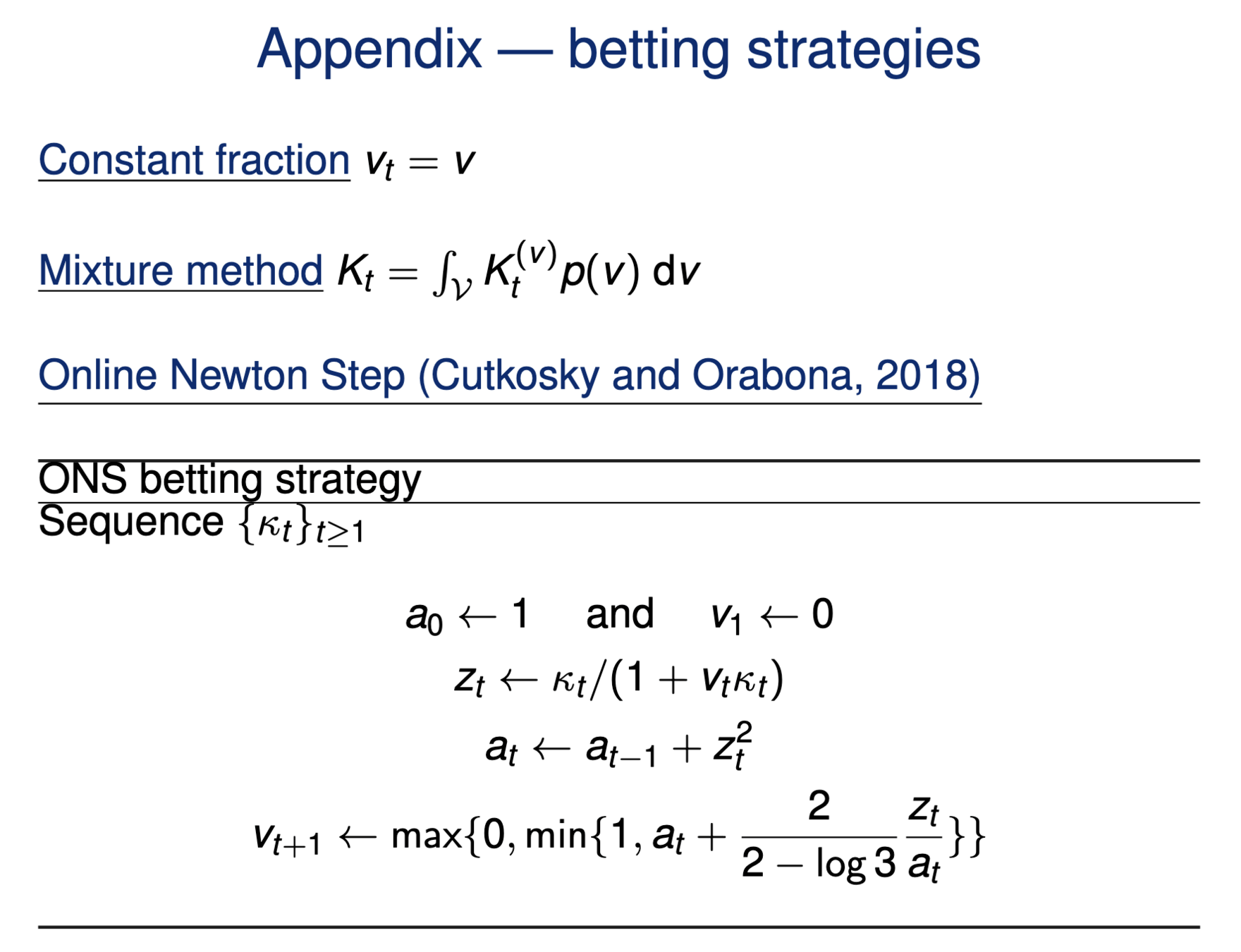

Consider a wealth process

\(K_0 = 1;\)

\(\text{for}~ t = 1, \dots \\ \quad K_t = K_{t-1}(1+\kappa_t v_t)\)

Fair game (test martingale): \(~~\mathbb E_{H_0}[\kappa_t | \text{Everything seen}_{t-1}] = 0\)

\(v_t \in (0,1):\) betting fraction

\(\kappa_t \in [-1,1]\) payoff

[Grünwald 2019, Shafer 2021, Shaer et al. 2023, Shekhar and Ramdas 2023. Podkopaev et al., 2023]

\(\mathbb P_{H_0}[\exists t \in \mathbb N: K_t \leq 1/\alpha]\leq \alpha\)
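A minimal simulation of the wealth process, assuming a fixed betting fraction \(v = 0.2\) (the betting literature typically adapts \(v_t\) online) and synthetic payoffs. Under \(H_0\) the payoffs are \(\pm 1\) with equal probability, a fair game, so the fraction of runs whose wealth ever reaches \(1/\alpha\) stays below \(\alpha\) as Ville's inequality predicts. Under an alternative where the bettor wins 70% of rounds, the wealth grows and the test rejects.

```python
import numpy as np

def rejection_time(kappa, v=0.2, alpha=0.05):
    """First t with K_t >= 1/alpha, where K_t = K_{t-1}(1 + v * kappa_t), K_0 = 1; None if never."""
    wealth = np.cumprod(1 + v * np.asarray(kappa))
    hits = np.flatnonzero(wealth >= 1 / alpha)
    return int(hits[0]) + 1 if hits.size else None

rng = np.random.default_rng(0)
T = 1000
# Fair game under H0: payoffs are +/-1 with equal probability (zero conditional mean).
null_runs = rng.choice([-1.0, 1.0], size=(2000, T))
null_rate = np.mean([rejection_time(k) is not None for k in null_runs])
# Synthetic alternative: the bettor wins 70% of the rounds, so the wealth drifts upward.
alt_kappa = np.where(rng.random(T) < 0.7, 1.0, -1.0)
print(null_rate, rejection_time(alt_kappa))
```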


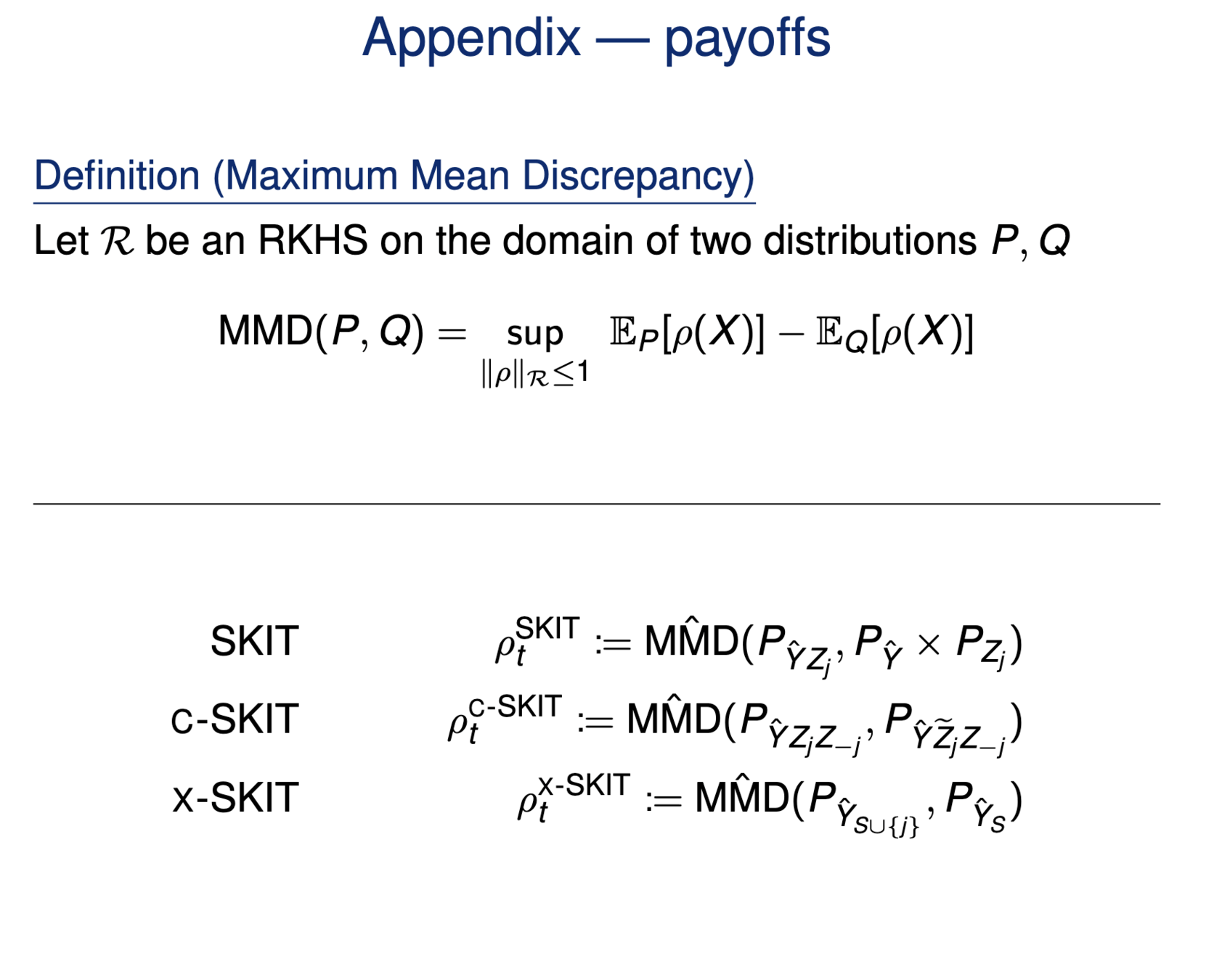

\(H_0: ~ P = Q\), with \(\color{teal}{X_t \sim P;~ Y_t\sim Q}\)

\(\kappa_t = \tanh({\color{teal}\rho(X_t)} - {\color{teal}\rho(Y_t)})\)

Iteratively: \( K_t = K_{t-1}(1+\kappa_t v_t)\)

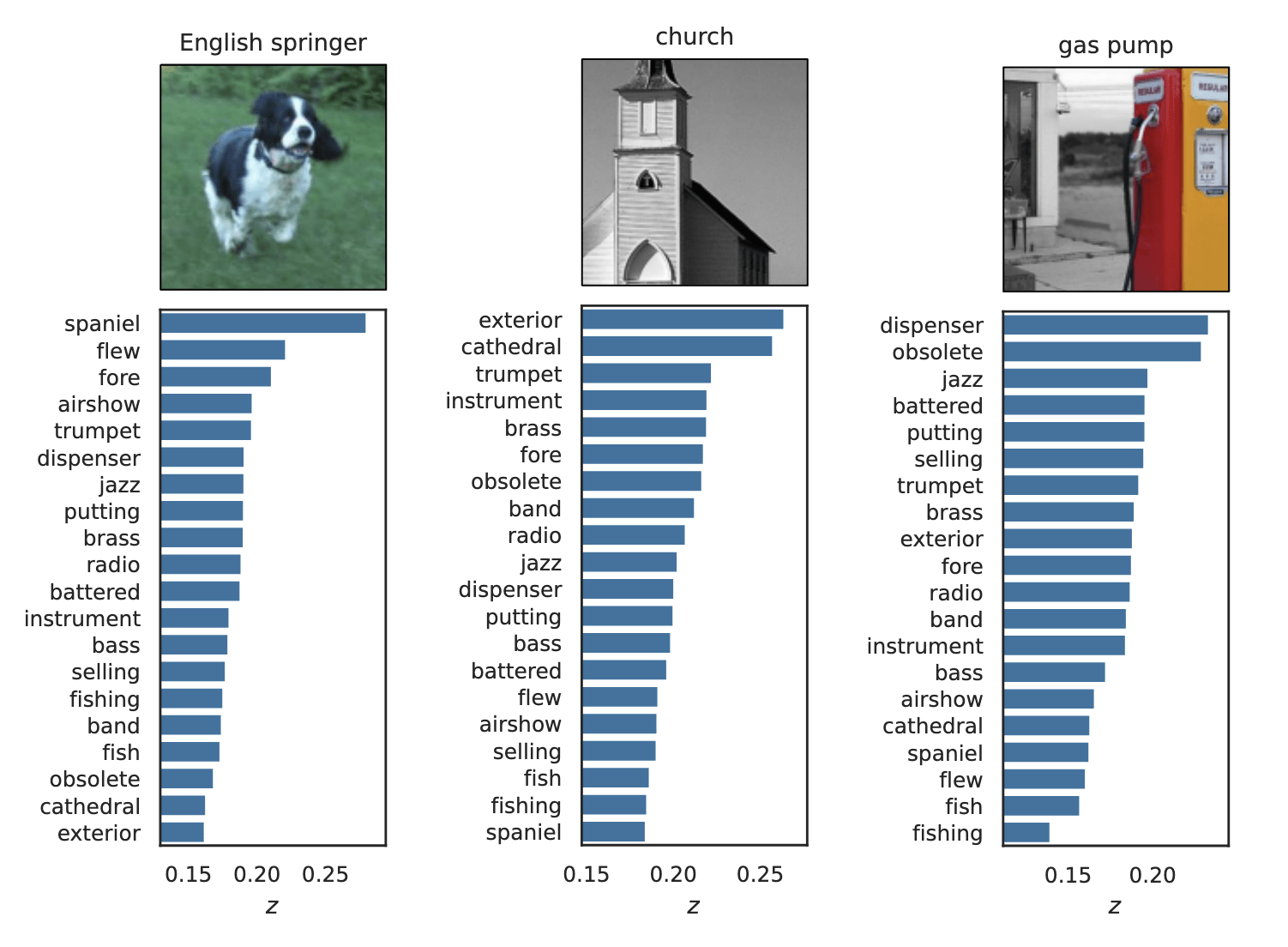

Example:

Data efficient

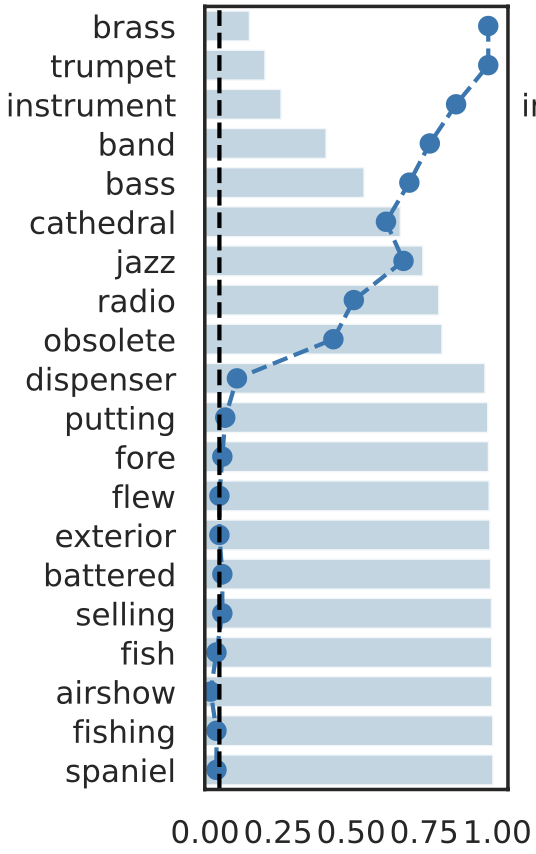

Rank induced by rejection time
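The ranking induced by rejection time can be sketched as a simple sort, assuming hypothetical per-concept rejection times (in number of samples) and placing concepts whose null was never rejected last. Concepts rejected earlier carry stronger evidence of importance, so they come first.

```python
def rank_by_rejection_time(times):
    """Order concepts by rejection time; concepts never rejected (None) go last."""
    rejected = sorted((t, c) for c, t in times.items() if t is not None)
    never = sorted(c for c, t in times.items() if t is None)
    return [c for _, c in rejected] + never

# Hypothetical rejection times for a few concepts.
times = {"whiskers": 40, "piano": None, "cute": 120, "fur": 40}
print(rank_by_rejection_time(times))  # ['fur', 'whiskers', 'cute', 'piano']
```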

Payoff function: \(\kappa_t = \tanh({\color{teal}\rho(X_t)} - {\color{teal}\rho(Y_t)})\), with betting fraction \(v_t \in (0,1)\) and \( K_t = K_{t-1}(1+\kappa_t v_t)\)

\({\color{teal}\rho} = \underset{\rho\in \mathcal R:\|\rho\|_\mathcal R\leq 1}{\arg\sup} ~\mathbb E_P [\rho(X)] - \mathbb E_Q[\rho(Y)]\), the witness function of \(\text{MMD}(P,Q)\) (Maximum Mean Discrepancy) over the unit ball of the RKHS \(\mathcal R\)

In practice, \(\rho\) is estimated only from samples observed before time \(t\), so the payoff stays fair under \(H_0\).
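A sketch of a SKIT-style sequential two-sample test under stated assumptions: a Gaussian RBF kernel with bandwidth 1, a plug-in MMD witness estimated from past samples only and left unnormalized (the \(\tanh\) keeps the payoff in \([-1,1]\)), and a fixed betting fraction \(v = 0.5\). The methods cited above typically adapt \(v_t\) online and may differ in other details, so treat this as an illustration rather than their implementation. Because \(\rho_t\) depends only on the past, \(X_t\) and \(Y_t\) are exchangeable under \(H_0: P = Q\) and the payoff has zero conditional mean.

```python
import numpy as np

def rbf(z, B, bw=1.0):
    """Gaussian kernel k(z, b) for every row b of B."""
    return np.exp(-np.sum((B - z) ** 2, axis=1) / (2 * bw ** 2))

def witness(z, X_past, Y_past, bw=1.0):
    """Plug-in (unnormalized) MMD witness: mean k(z, X_i) - mean k(z, Y_i) over past samples."""
    if len(X_past) == 0:
        return 0.0
    return rbf(z, X_past, bw).mean() - rbf(z, Y_past, bw).mean()

def skit_two_sample(X, Y, alpha=0.05, v=0.5, bw=1.0):
    """Sequential two-sample test by betting; returns (rejection time or None, final wealth)."""
    wealth = 1.0
    for t in range(len(X)):
        # rho_t uses only samples before t, so under H0 the payoff has zero conditional mean.
        kappa = np.tanh(witness(X[t], X[:t], Y[:t], bw) - witness(Y[t], X[:t], Y[:t], bw))
        wealth *= 1 + v * kappa
        if wealth >= 1 / alpha:
            return t + 1, wealth
    return None, wealth

rng = np.random.default_rng(0)
T, d = 300, 2
X = rng.standard_normal((T, d))
Y = rng.standard_normal((T, d)) + np.array([2.0, 0.0])  # synthetic Q: a shifted copy of P
print(skit_two_sample(X, Y))
```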

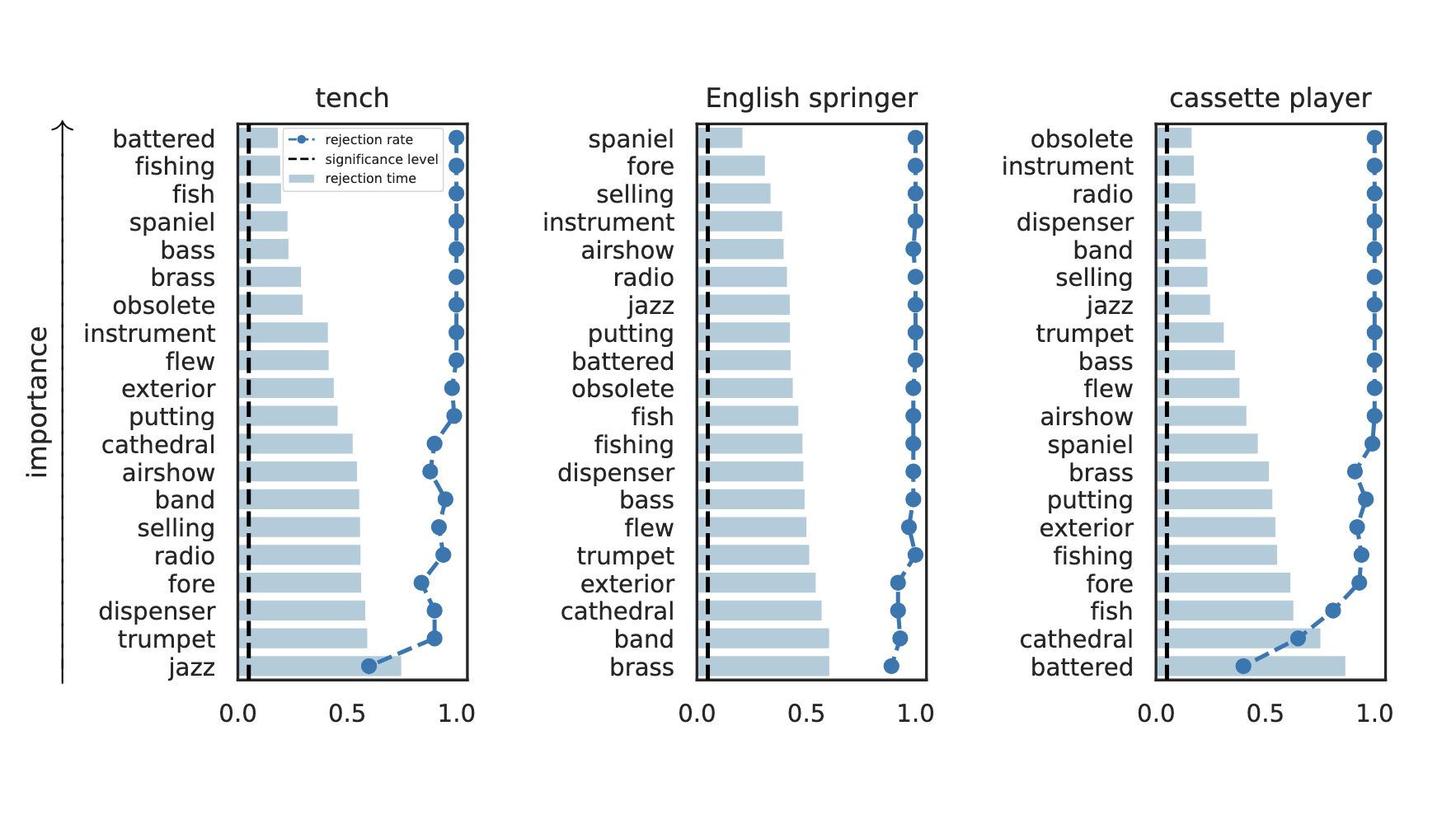

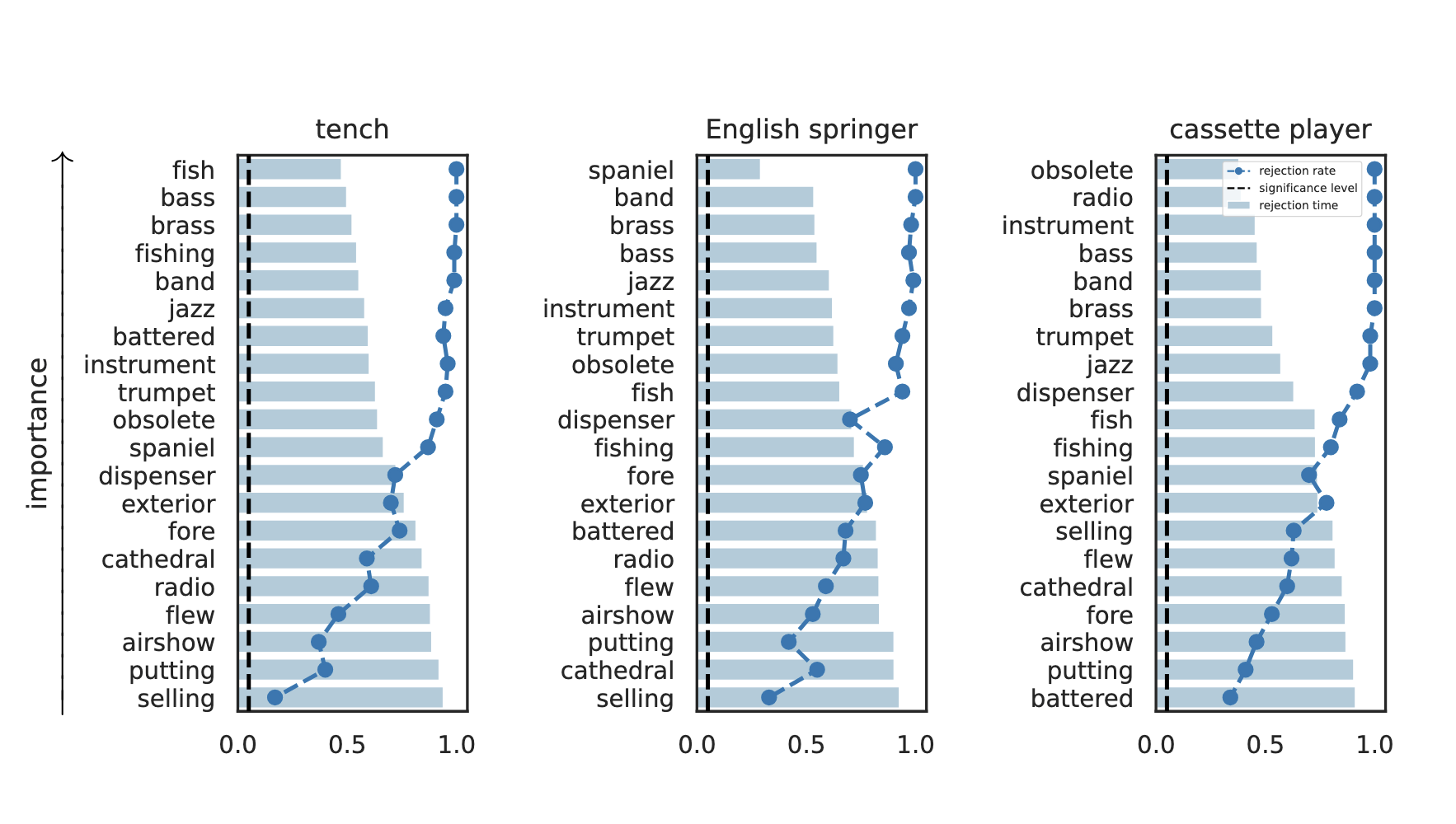


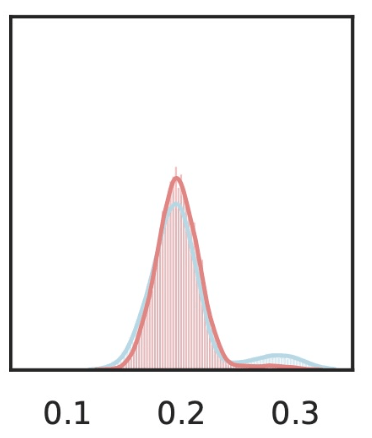

(figure: rejection time and rejection rate per concept, for important semantic concepts (reject \(H_0\)) and unimportant semantic concepts (fail to reject \(H_0\)))

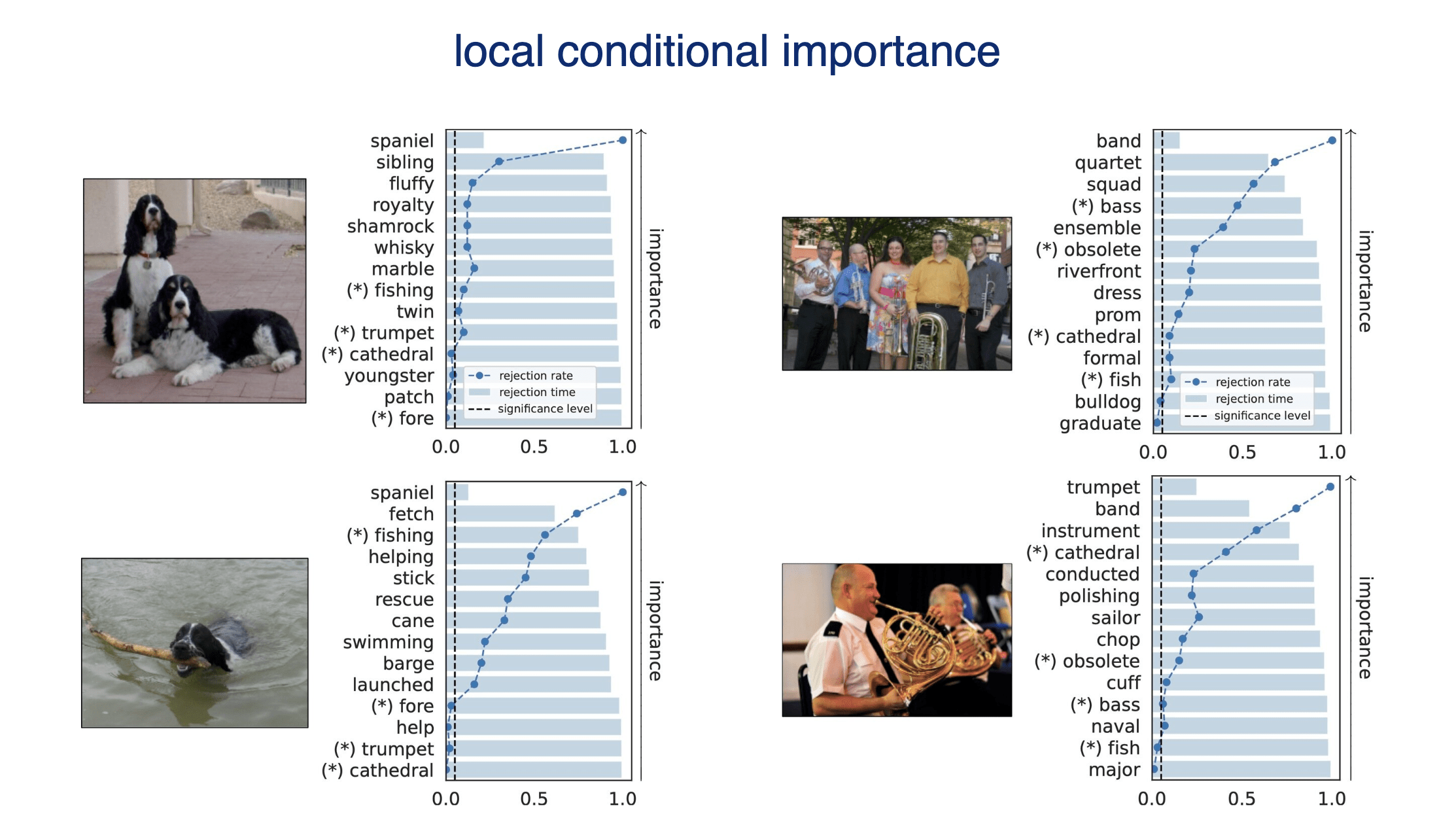

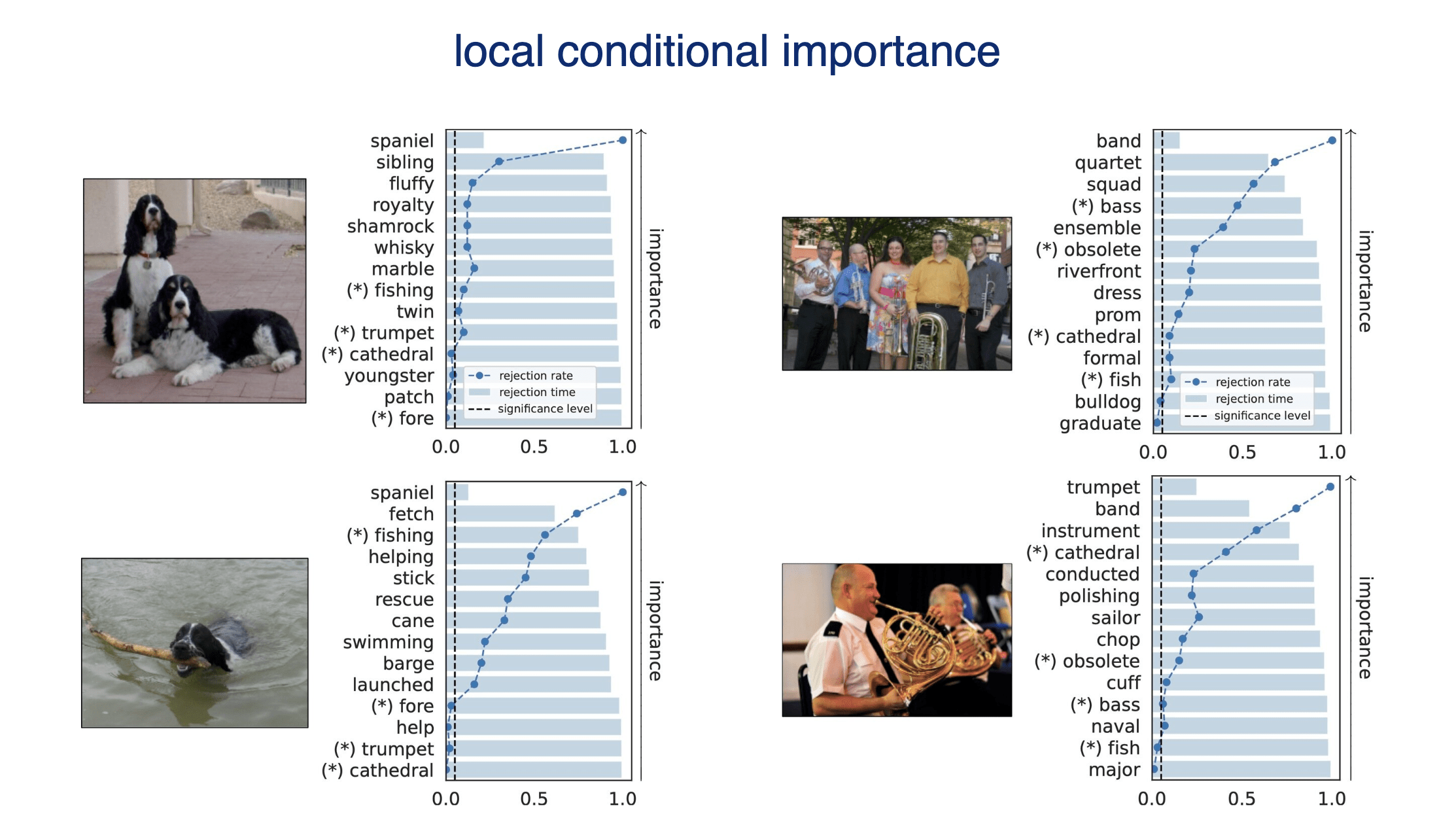

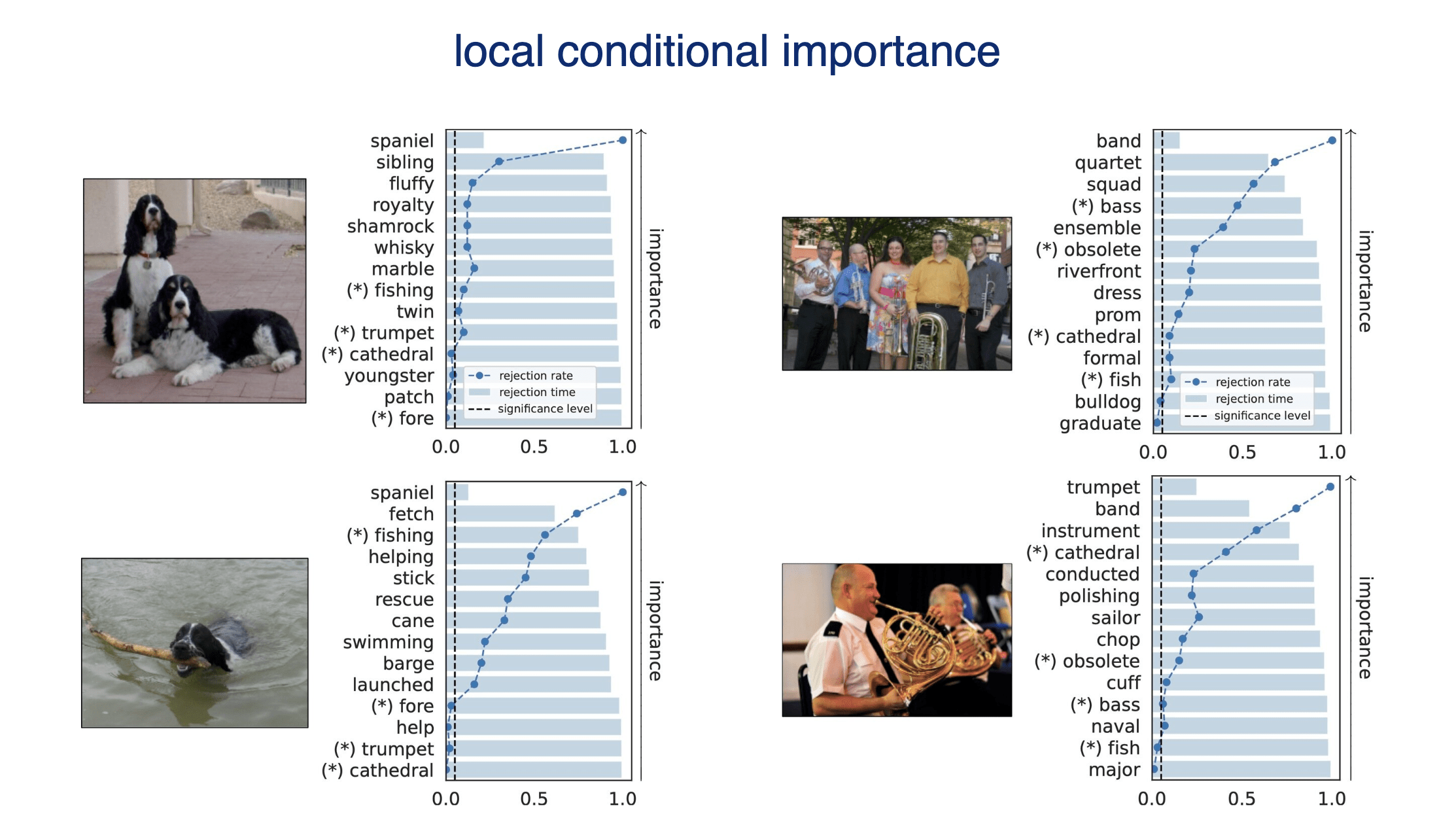

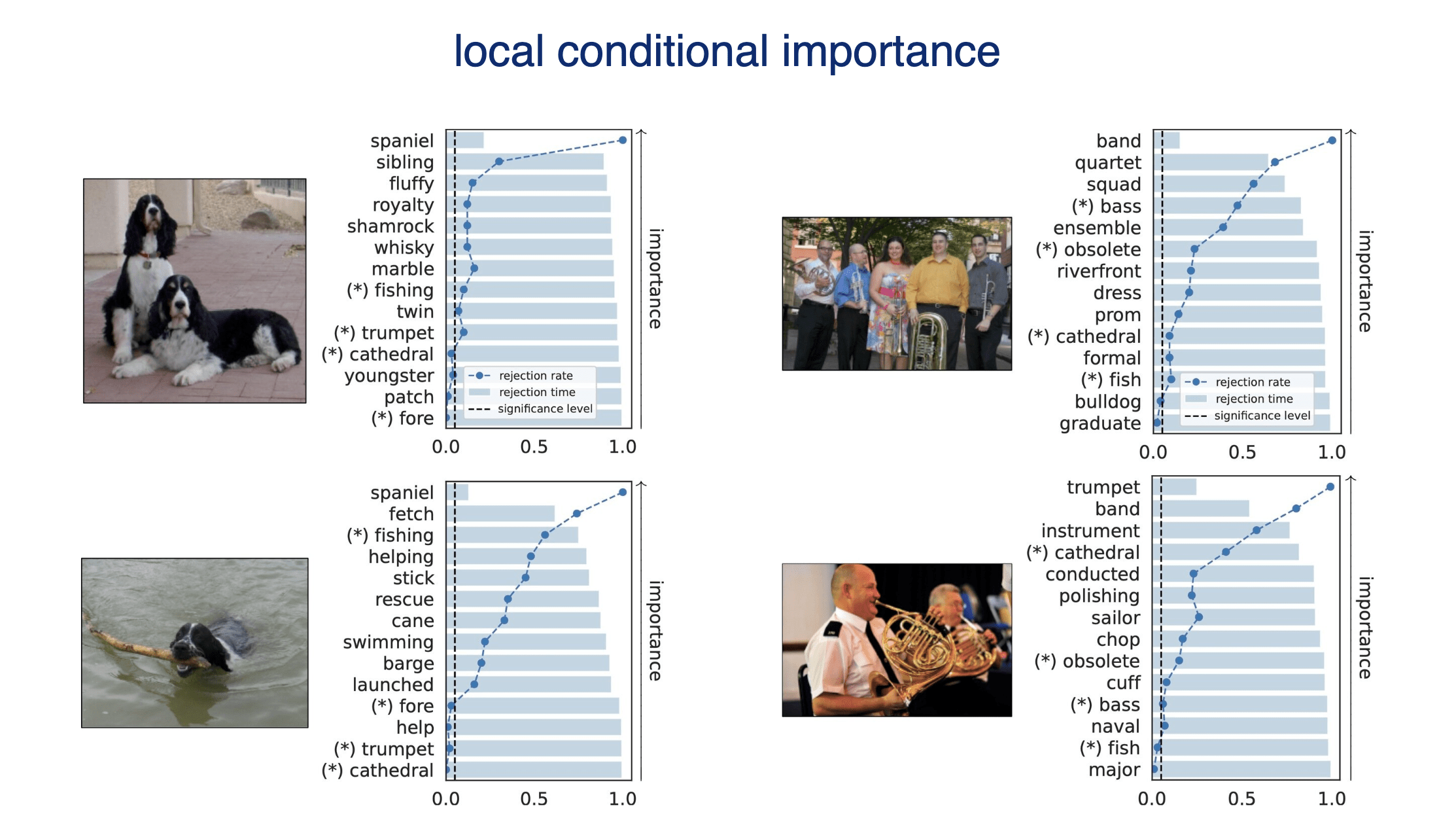

Results: Imagenette

Type 1 error control and false discovery rate control at \(\alpha = 0.05\)

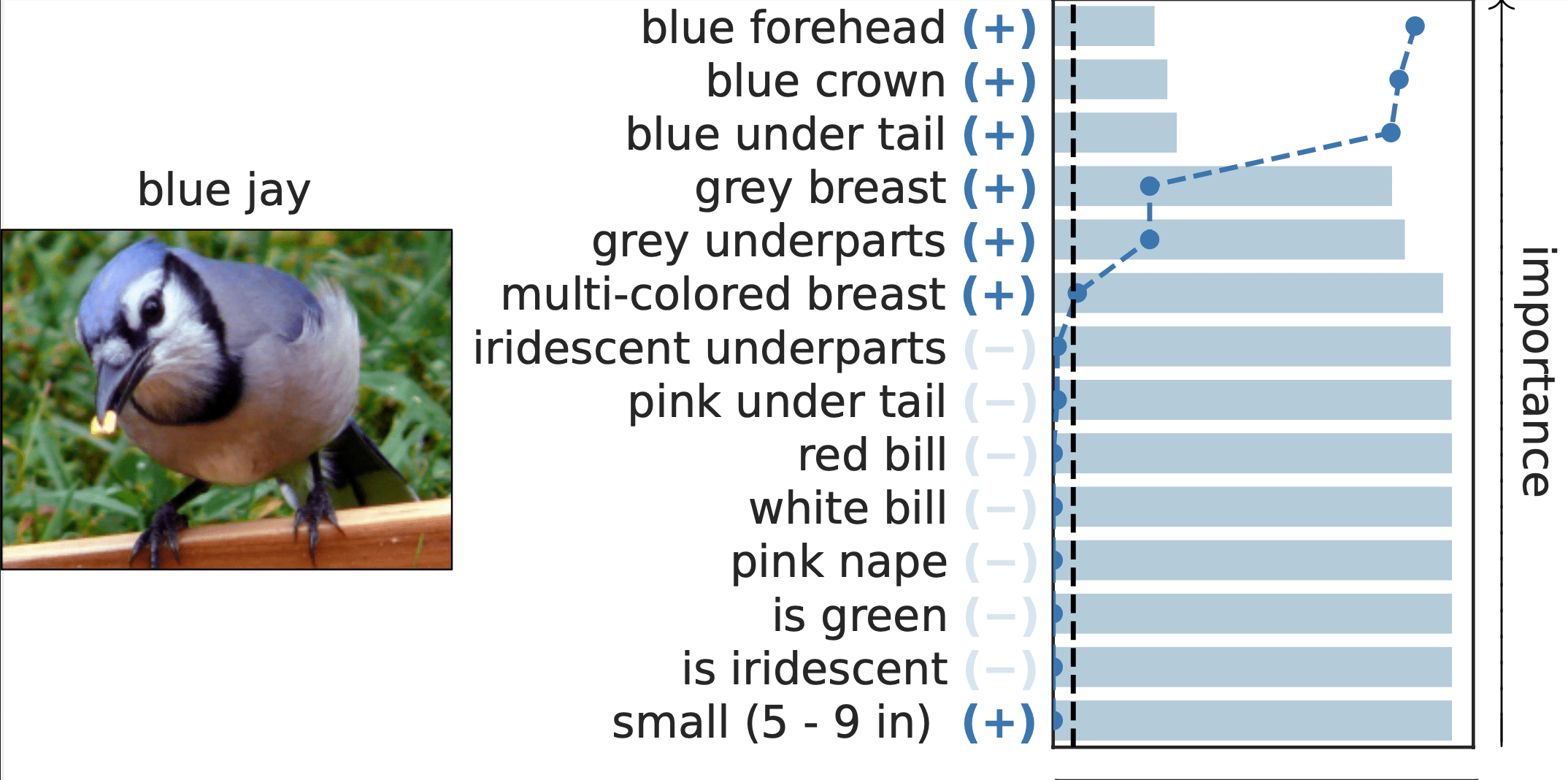

Results: CUB dataset

(figure: rejection time and rejection rate, from 0.0 to 1.0, for important semantic concepts (reject \(H_0\)) and unimportant semantic concepts (fail to reject \(H_0\)))

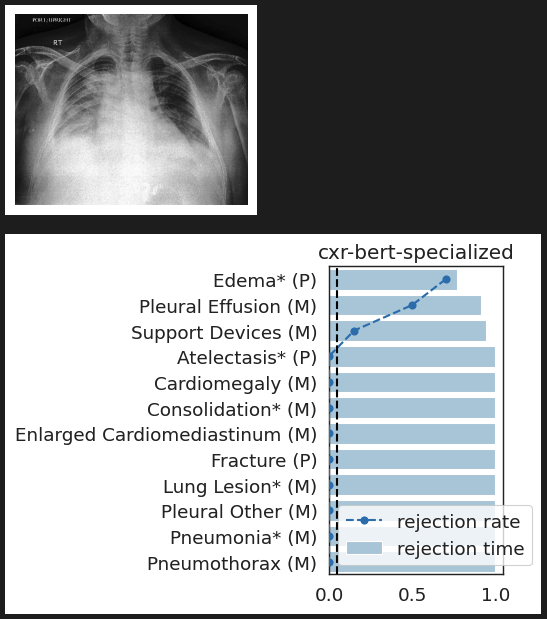

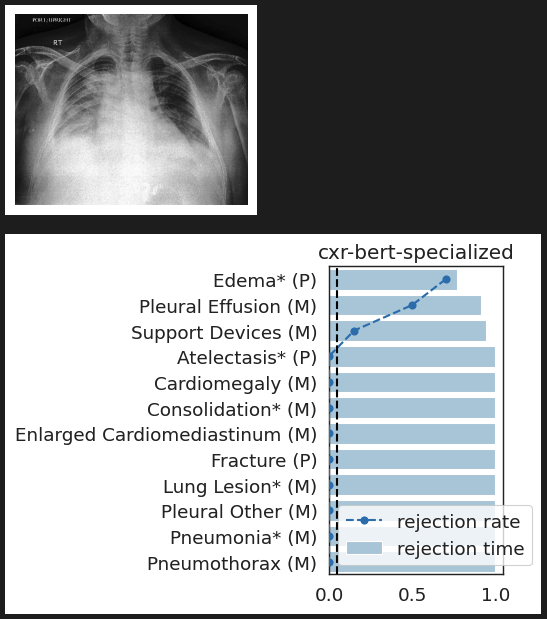

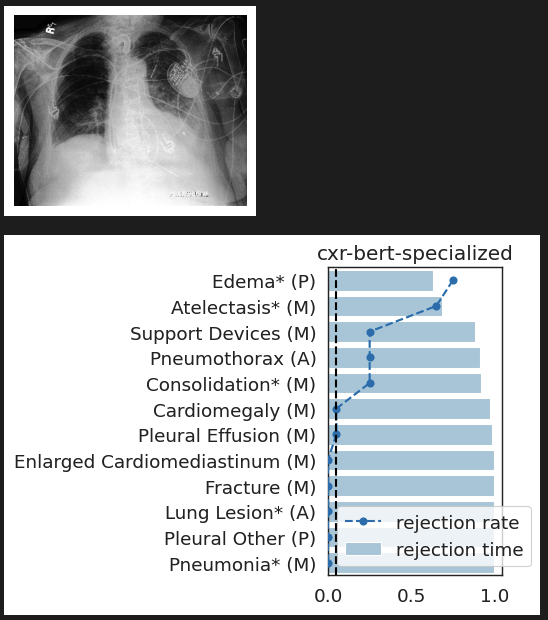

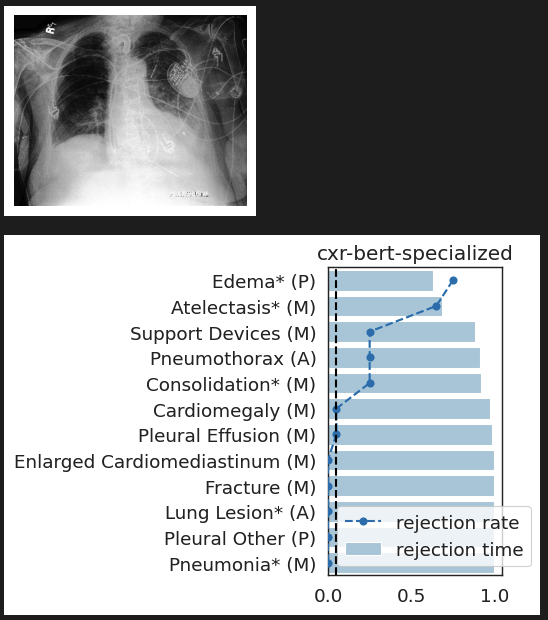

CheXpert: validating BiomedVLP

What concepts does BiomedVLP find important to predict lung opacity?

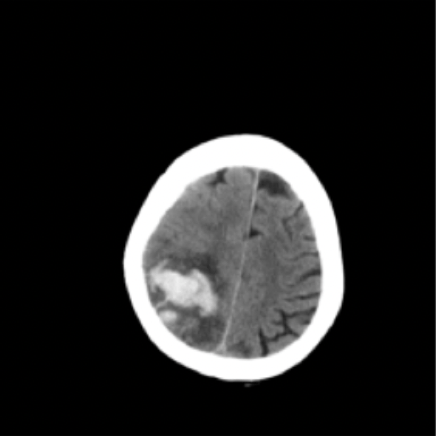

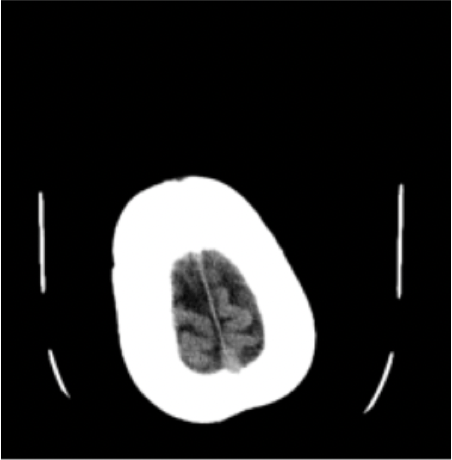

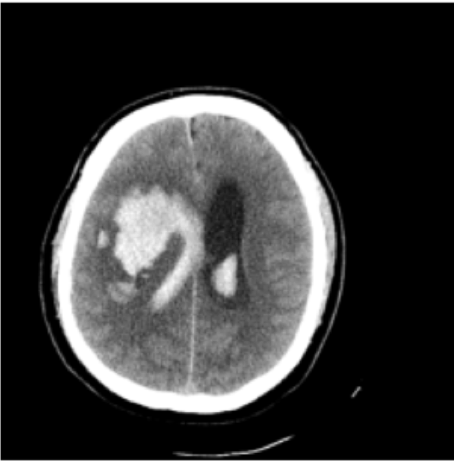

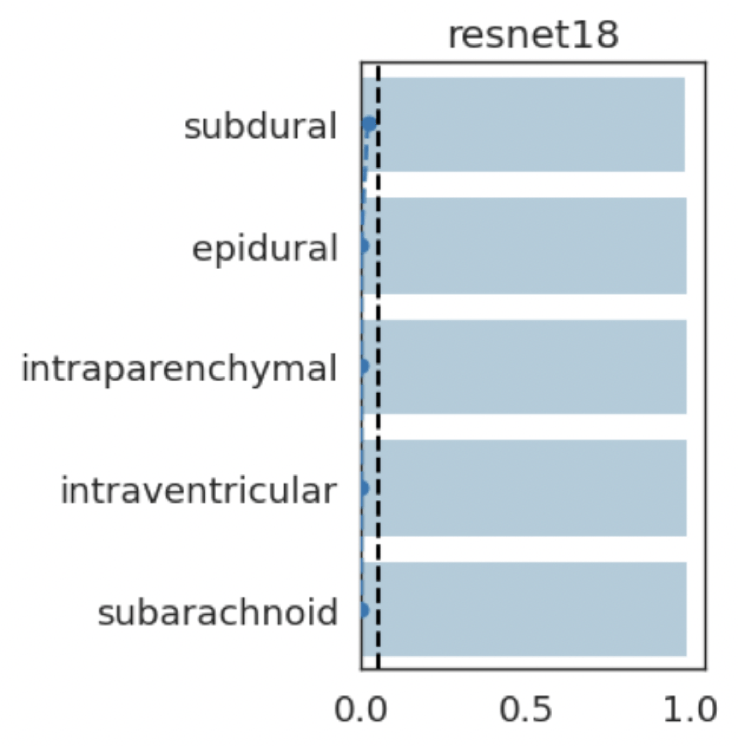

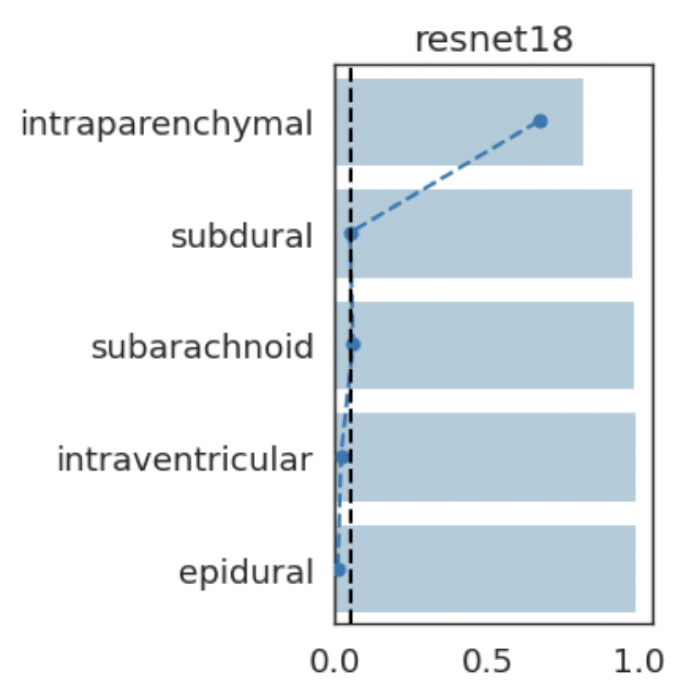

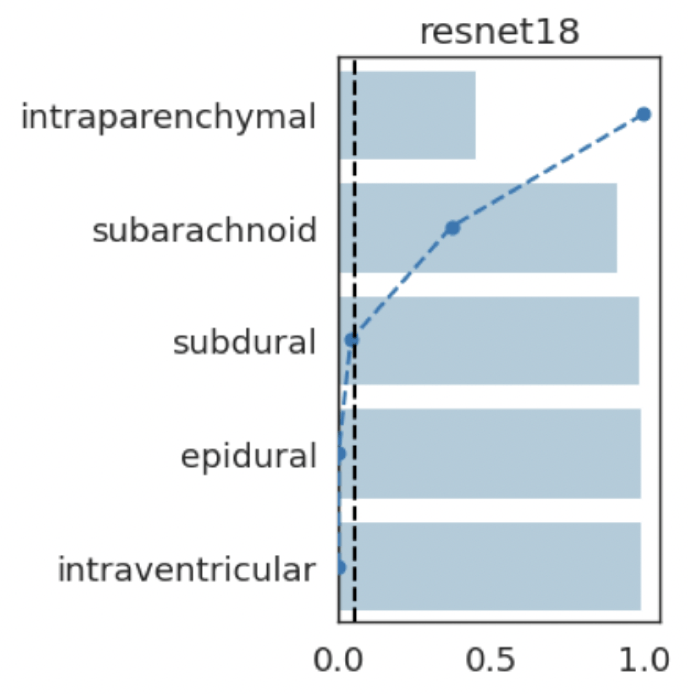

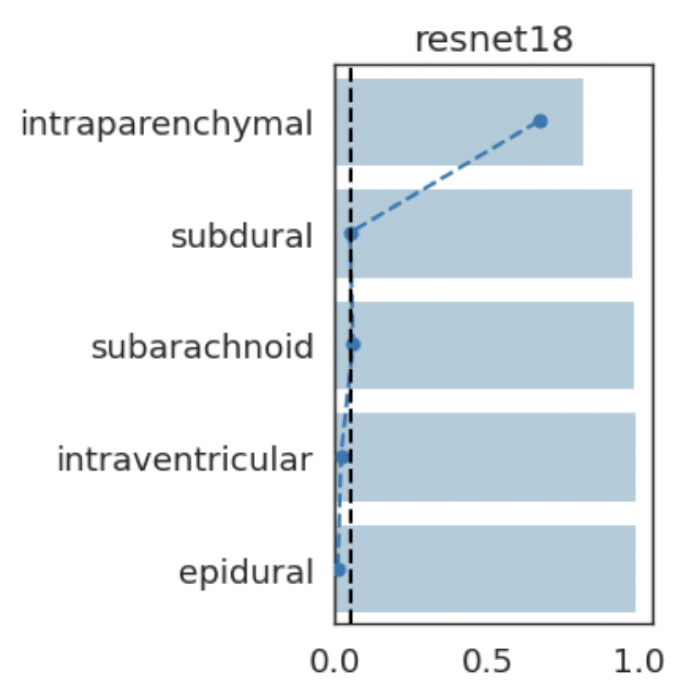

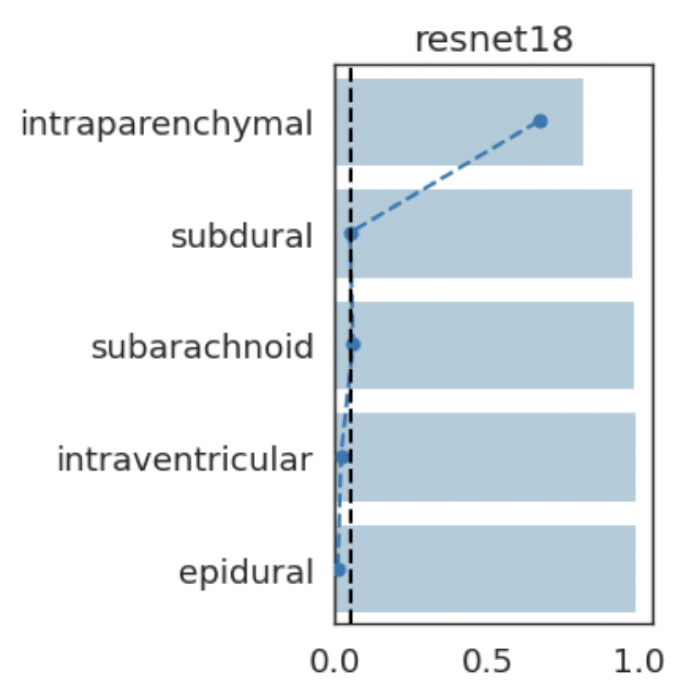

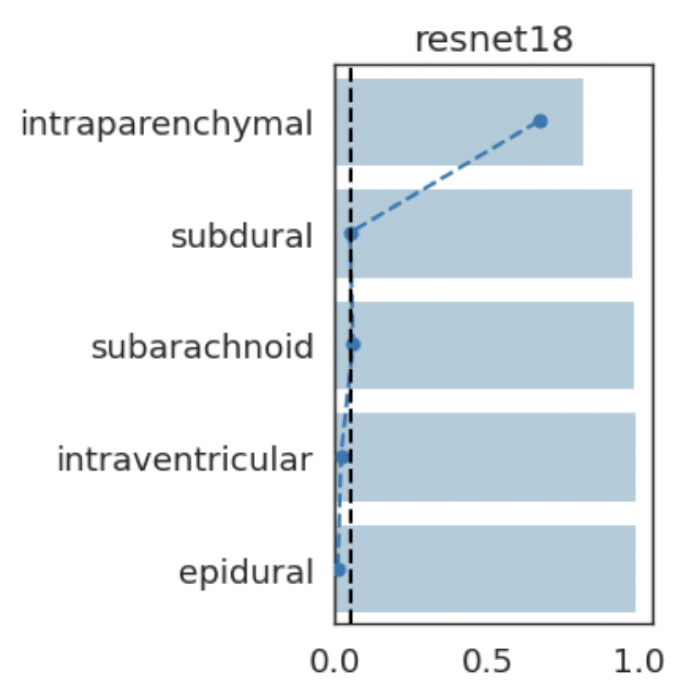

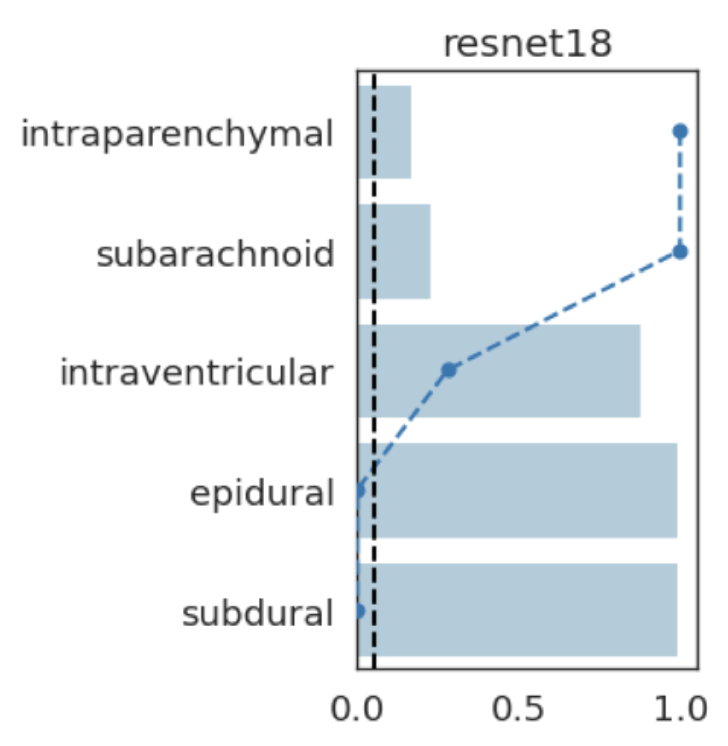

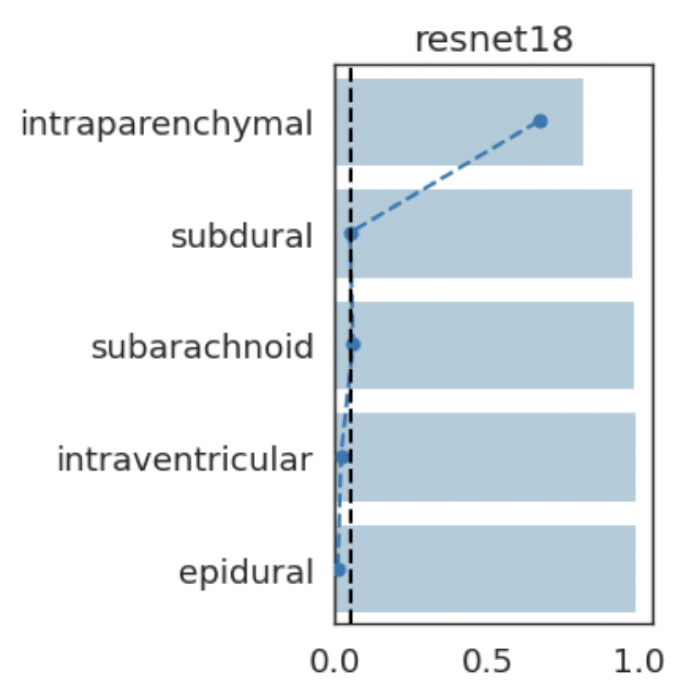

Results: RSNA Brain CT Hemorrhage Challenge

(figure: four example scans labeled Hemorrhage, No Hemorrhage, Hemorrhage, Hemorrhage; each panel lists the five hemorrhage subtypes (intraparenchymal, subdural, subarachnoid, intraventricular, epidural), each marked present (+) or absent (-))

Results: Imagenette (Global Importance)

Results: Imagenette (Global Conditional Importance)

Results: Imagenette

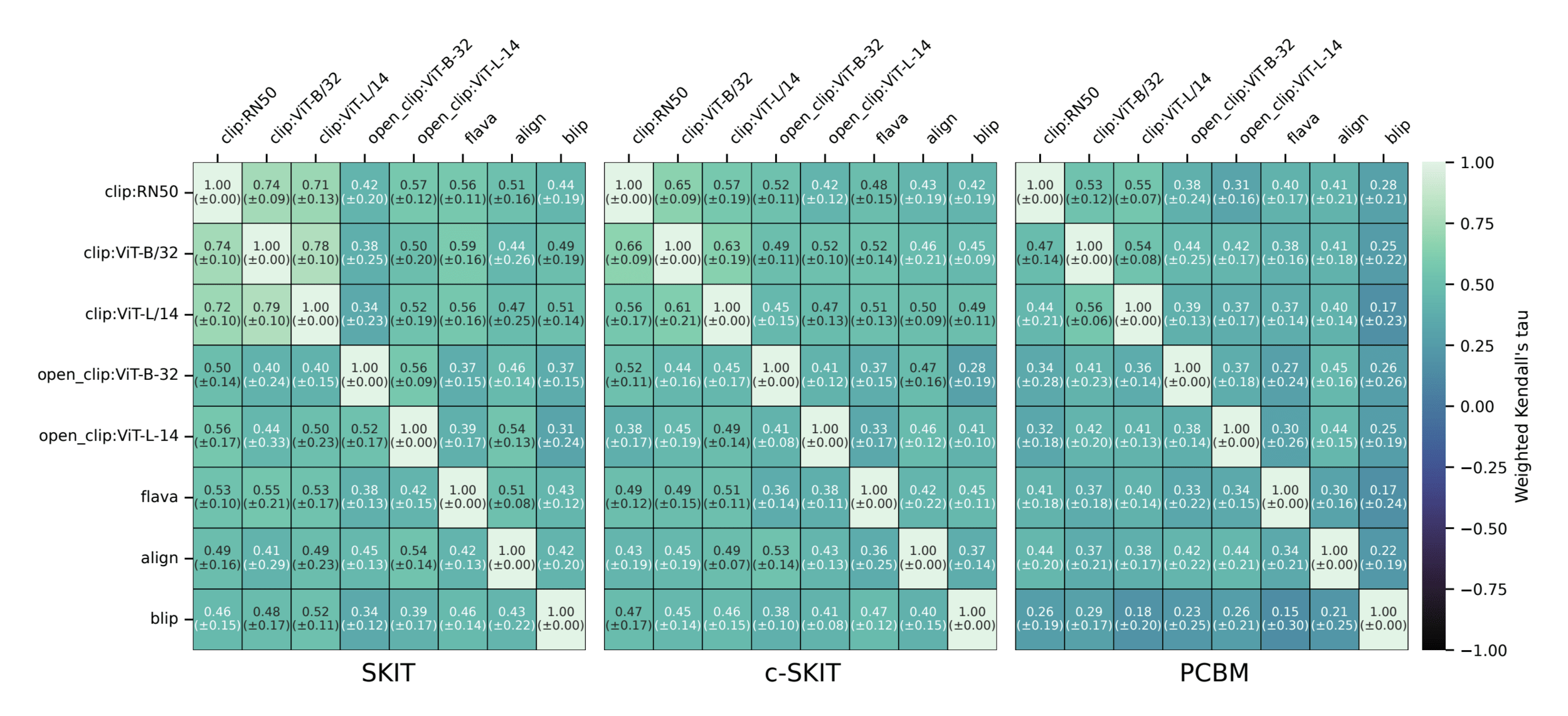

Semantic comparison of vision-language models

Summary

- Notions of importance that are rigorously testable

- Local conditional tests for semantic importance

- Any-time-valid inference for efficient testing and ranking

Jacopo Teneggi (JHU)

Teneggi & S., Testing Semantic Importance via Betting, NeurIPS (2024).

Appendix

Semantic Interpretability of classifiers

Concept Bank: \(C = [c_1, c_2, \dots, c_m] \in \mathbb R^{d\times m}\)

Vision-language models (training) [Radford et al, 2021]

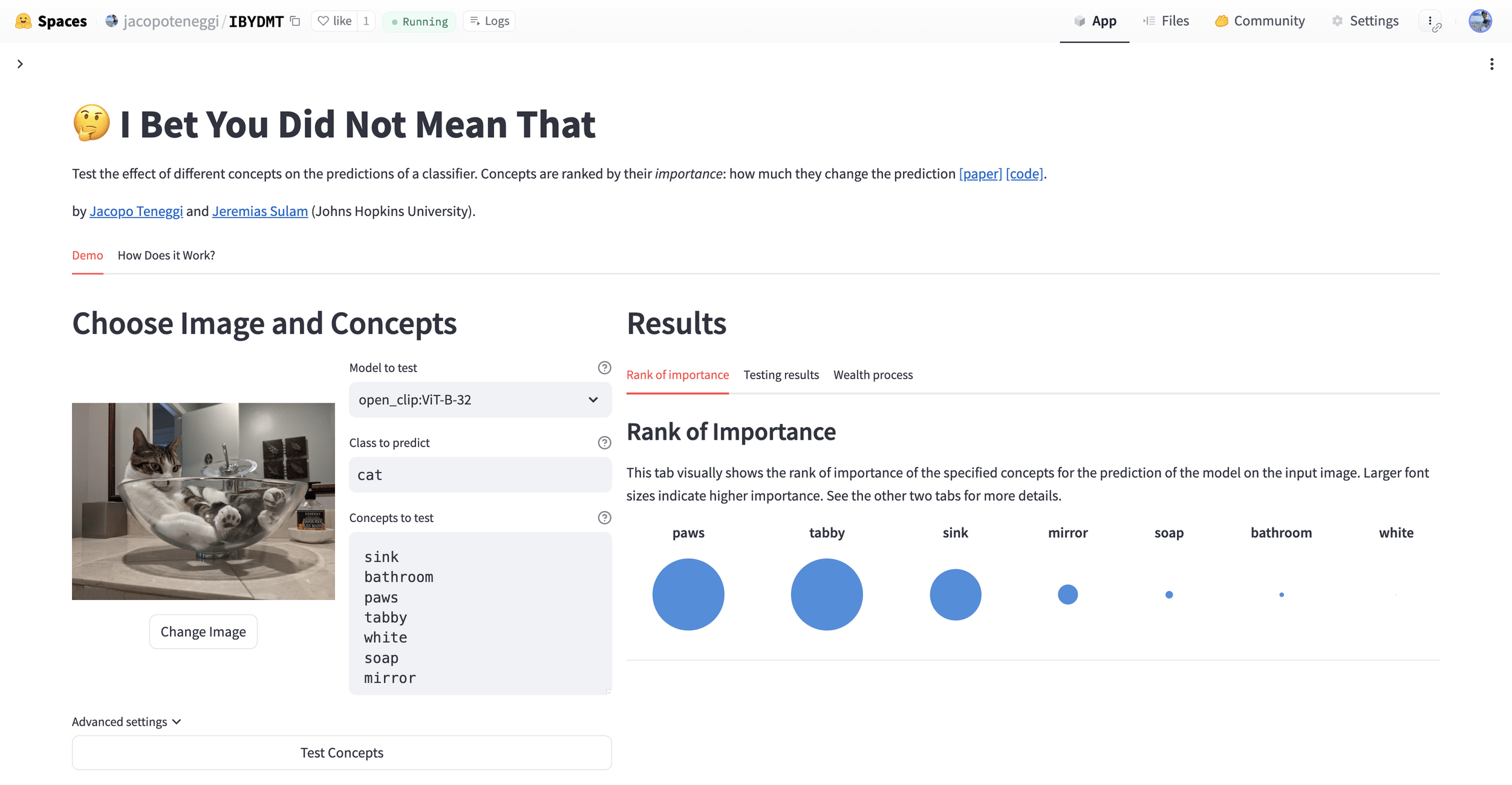
