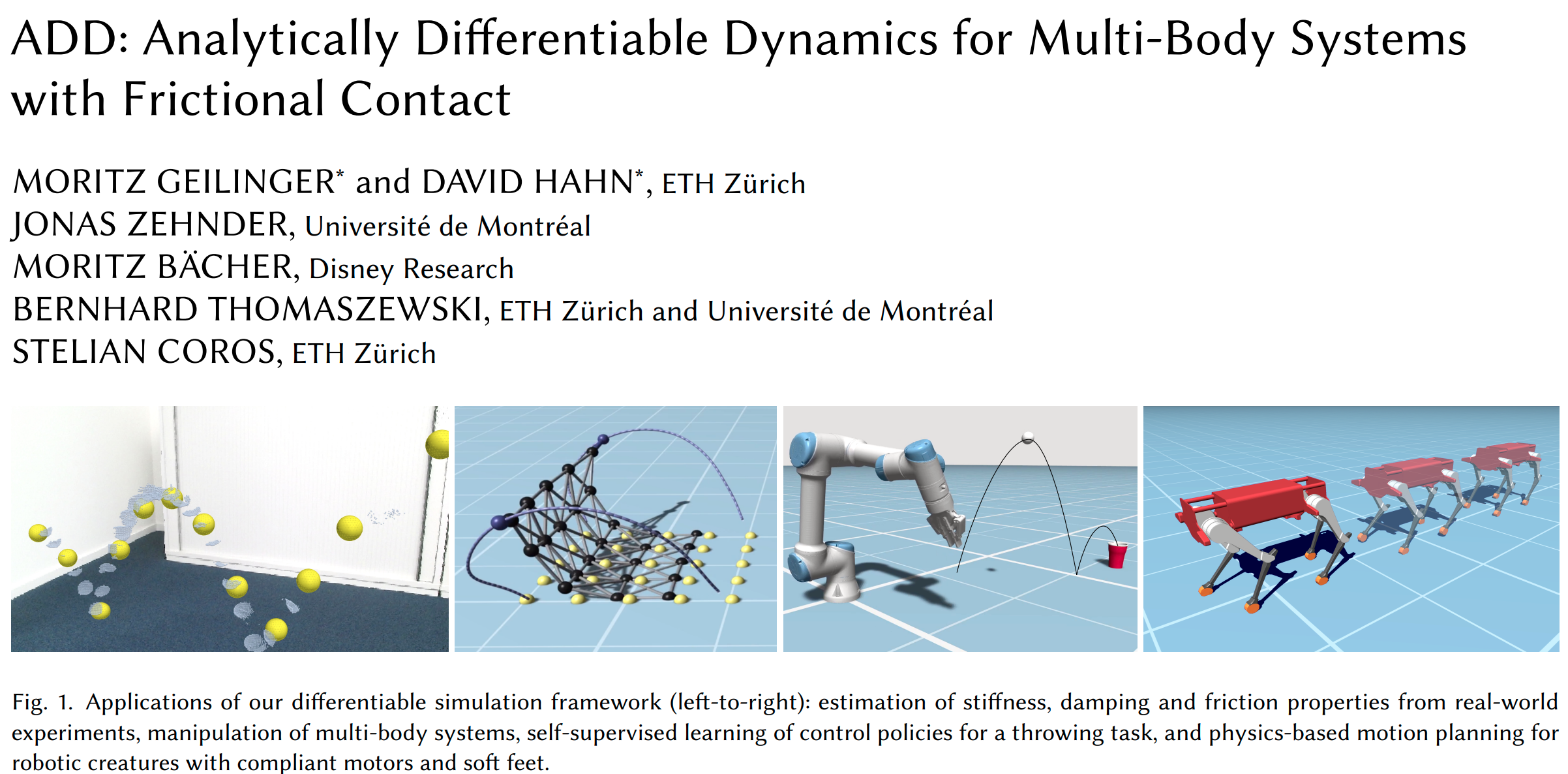

Contact-rich Planning for Robotic Manipulation with Quasi-static Contact Models

Tao Pang

Word cloud of my PhD thesis :D

Why contact-rich manipulation?

Collision-free Motion Planning

Interact with the world

Smaller

Why is contact-rich planning hard?

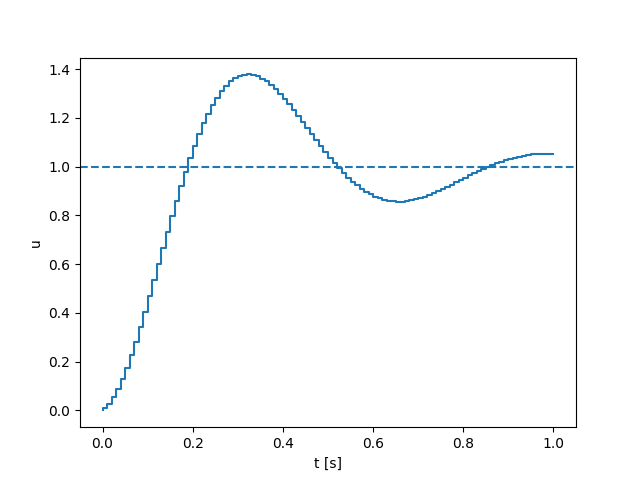

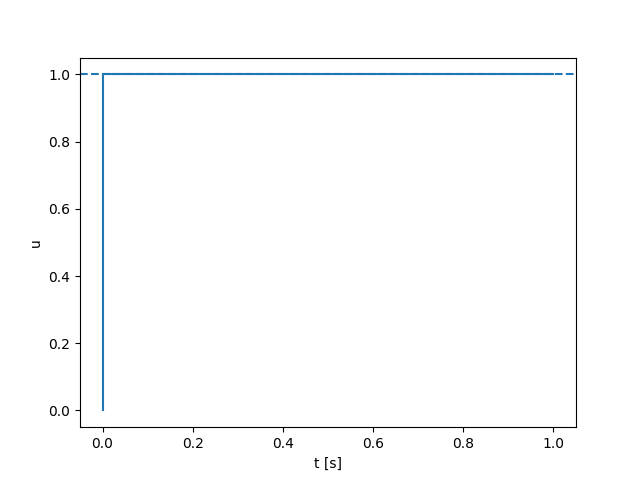

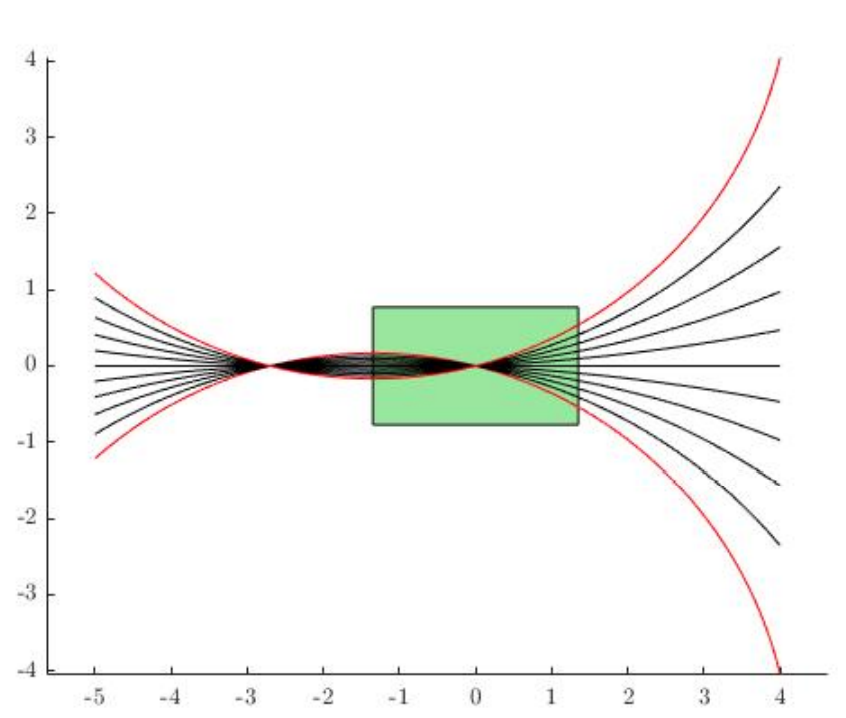

Contact dynamics is non-smooth!

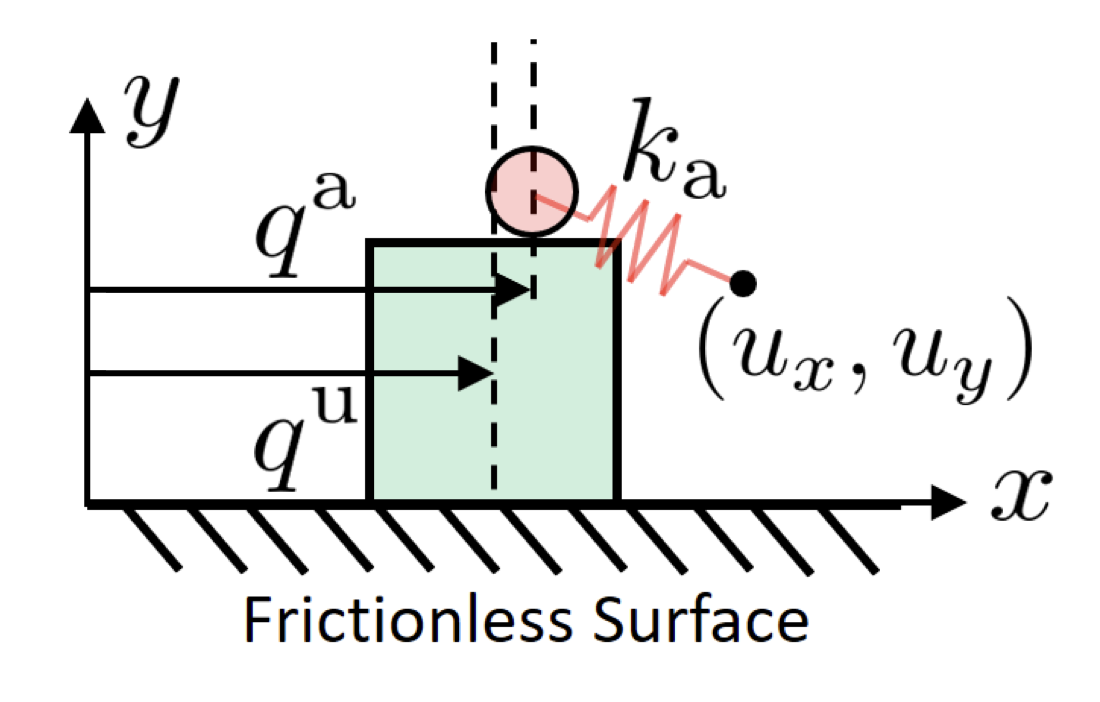

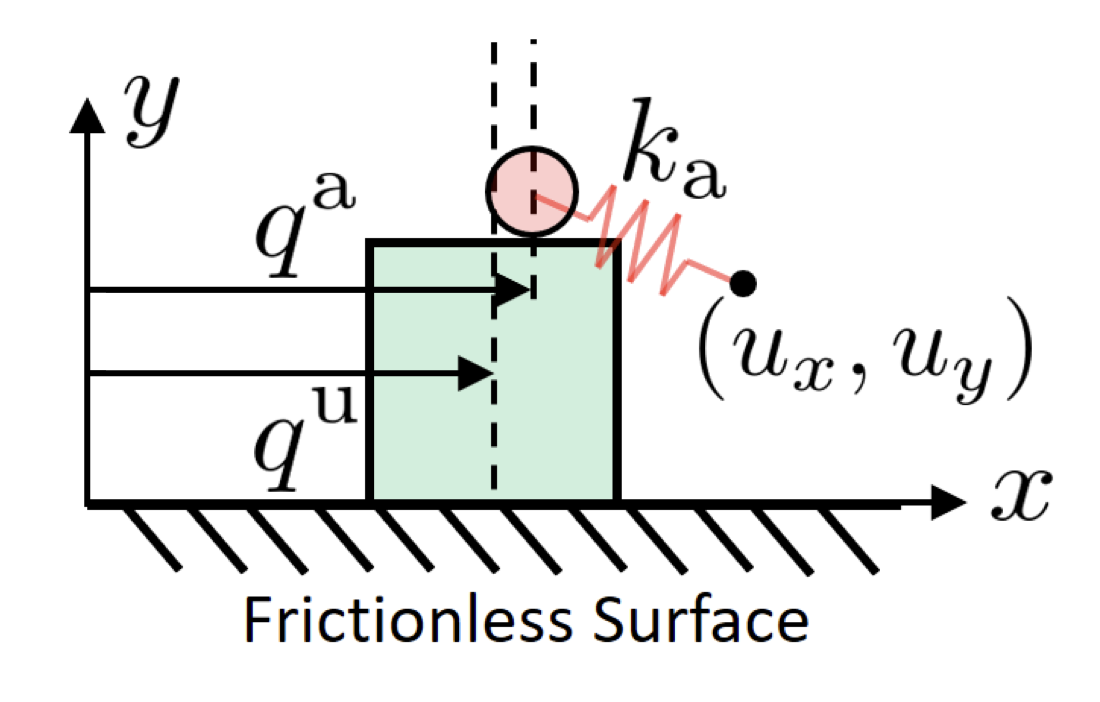

\(\mathrm{u}\): un-actuated

control input: commanded ball position

\(\mathrm{a}\): actuated

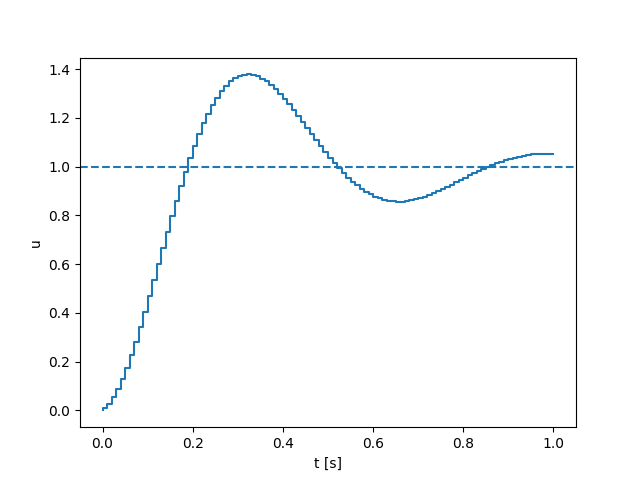

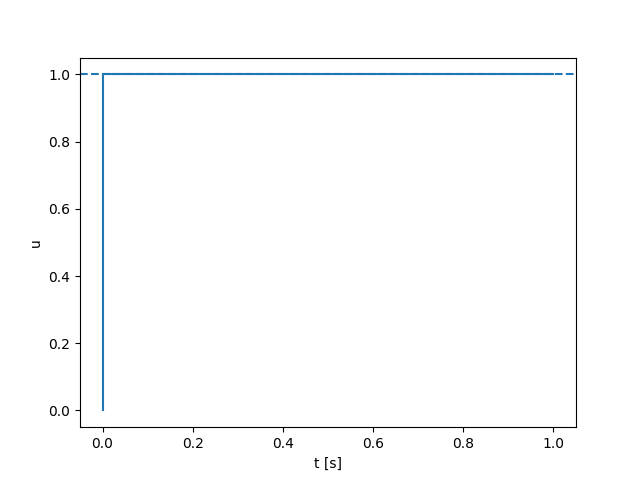

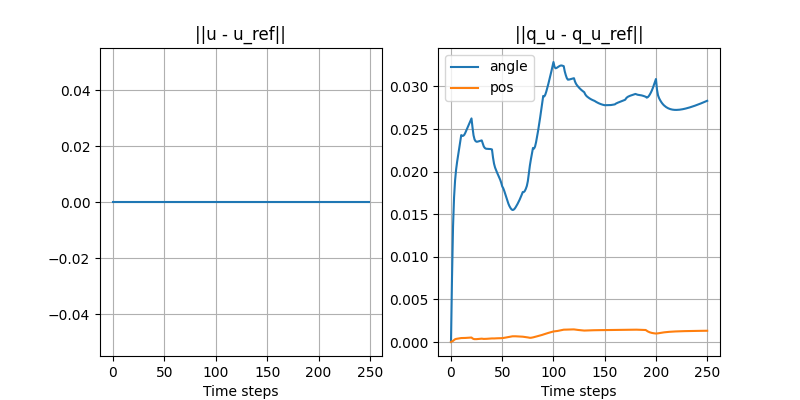

What does the plot of this dynamics look like? (demo)

Contact dynamics is non-smooth

Moving the box to the right

No contact

Contact

Solve with gradient descent

No contact

Contact
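A minimal sketch of why plain gradient descent fails on this kind of problem. It assumes a 1D idealization that is not taken from the slides: the box sits at `q_u`, the commanded ball position is `u`, both bodies have zero width, and the box ends up at `max(q_u, u)`. The function names are illustrative.

```python
def box_after_push(q_u, u):
    """Assumed 1D toy dynamics: the ball only moves the box once u passes q_u."""
    return max(q_u, u)


def d_box_du(q_u, u):
    """Derivative of the toy dynamics w.r.t. the command u (zero without contact)."""
    return 1.0 if u > q_u else 0.0


def descend(q_u, u, goal, lr=0.2, iters=100):
    """Gradient descent on (box_after_push - goal)^2 over the command u."""
    for _ in range(iters):
        residual = box_after_push(q_u, u) - goal
        u -= lr * 2.0 * residual * d_box_du(q_u, u)
    return u


# Starting out of contact (u < q_u), the gradient is exactly zero and u never moves.
u_stuck = descend(q_u=0.0, u=-1.0, goal=1.0)
# Starting in contact (u > q_u), the same loop converges to the goal.
u_ok = descend(q_u=0.0, u=0.1, goal=1.0)
```

In this toy, the flat "no contact" region gives the optimizer no information about which way to move the ball.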

Smoothing comes to the rescue!

No contact

Contact

Solve with gradient descent

No contact

Contact

Moving the box to the left?

No contact

Contact

Solve with gradient descent

No contact

Contact

Stuck in local minima!
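The two outcomes above (smoothing helps when pushing right, but descent still gets stuck when the box must go left) can be reproduced with a small sketch. It assumes a 1D toy where the exact box position after a push is `max(q_u, u)`, and it uses a softplus with sharpness `beta` as a stand-in for the smoothing; both choices are assumptions for illustration, not the deck's model.

```python
import math


def softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def sigmoid(z):
    """Numerically stable logistic function, the derivative of softplus."""
    if z >= 0.0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def smoothed_box(q_u, u, beta=5.0):
    """Smoothed stand-in for max(q_u, u): the ball pushes slightly at a distance."""
    return q_u + softplus(beta * (u - q_u)) / beta


def descend_smoothed(q_u, u, goal, beta=5.0, lr=0.2, iters=500):
    """Gradient descent on (smoothed_box - goal)^2 over the command u."""
    for _ in range(iters):
        residual = smoothed_box(q_u, u, beta) - goal
        u -= lr * 2.0 * residual * sigmoid(beta * (u - q_u))
    return u


# Goal to the right of the box: the smoothed gradient is nonzero, so descent makes contact.
u_right = descend_smoothed(q_u=0.0, u=-1.0, goal=1.0)
# Goal to the left: descent only retreats, and the box never gets left of q_u = 0.
u_left = descend_smoothed(q_u=0.0, u=-0.5, goal=-1.0)
```

In the second case the ball would have to travel around the box to push from the other side, which no local descent direction suggests.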

Global Search with Contact Modes

System

Number of modes

- Left not touching

- Left touching

- Right touching

- Right not touching

x

Friction cone

- Not touching

- Sticking

- Sliding left

- Sliding right

y

Number of faces

x

x

y

z

- Not touching

- Sticking

- 4 directions of sliding

Number of contact pairs.
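A rough sketch of how the mode count grows, assuming every contact pair can be in any of its modes independently. This is an upper bound, since kinematics rules out many combinations, and the 20-pair case is a hypothetical number chosen only to show the scale.

```python
def count_contact_modes(modes_per_pair, n_pairs):
    """Upper bound on joint contact modes: each pair picks one mode independently."""
    return modes_per_pair ** n_pairs


# 1D, frictionless: each of the two contacts is touching or not touching.
frictionless_1d = count_contact_modes(2, 2)
# 2D with friction: not touching, sticking, sliding left, sliding right.
planar = count_contact_modes(4, 2)
# 3D with a 4-sided friction cone: not touching, sticking, 4 sliding directions.
spatial_hand = count_contact_modes(6, 20)
```

Even with a per-pair count as small as 6, the total is exponential in the number of contact pairs.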

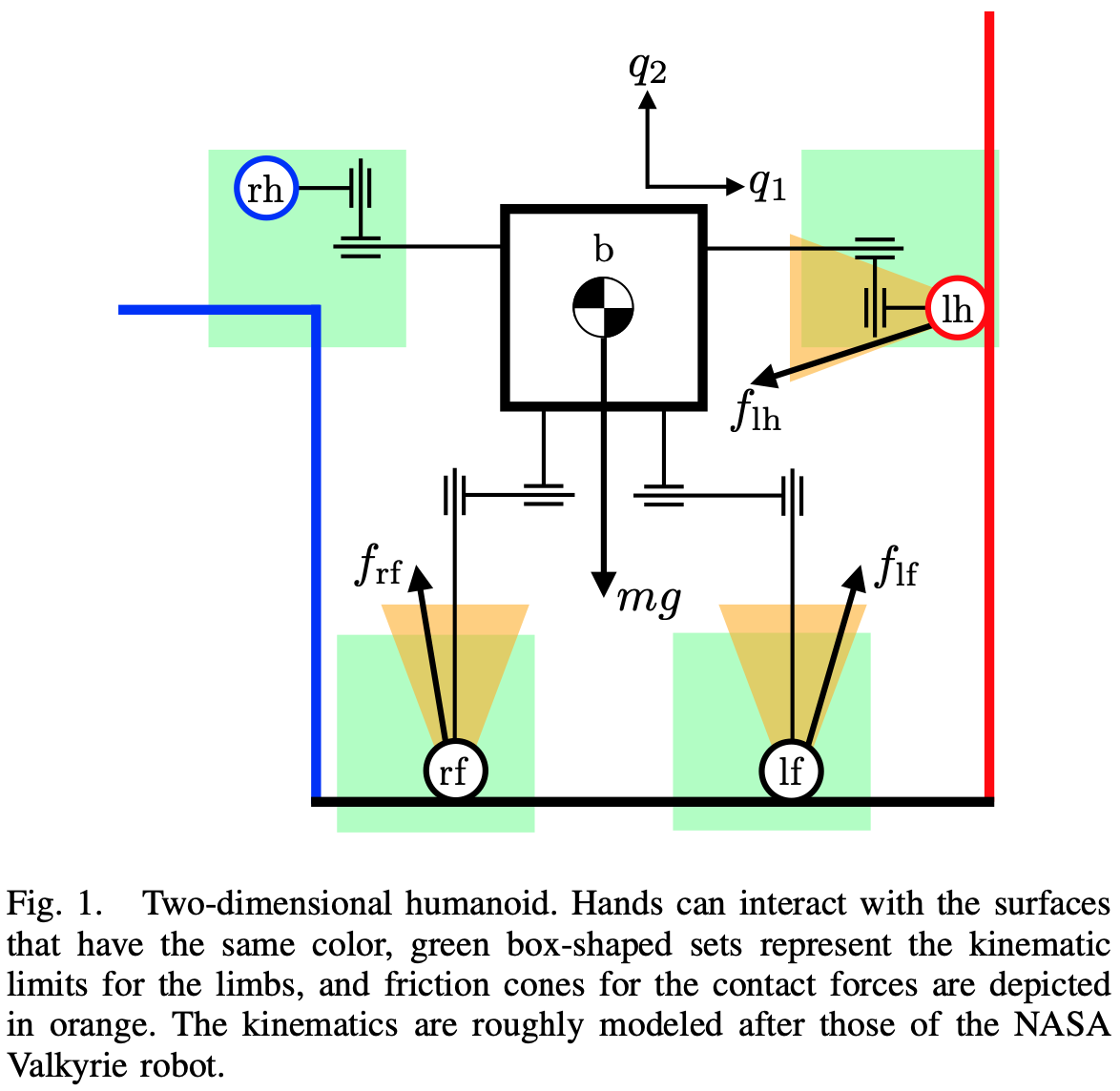

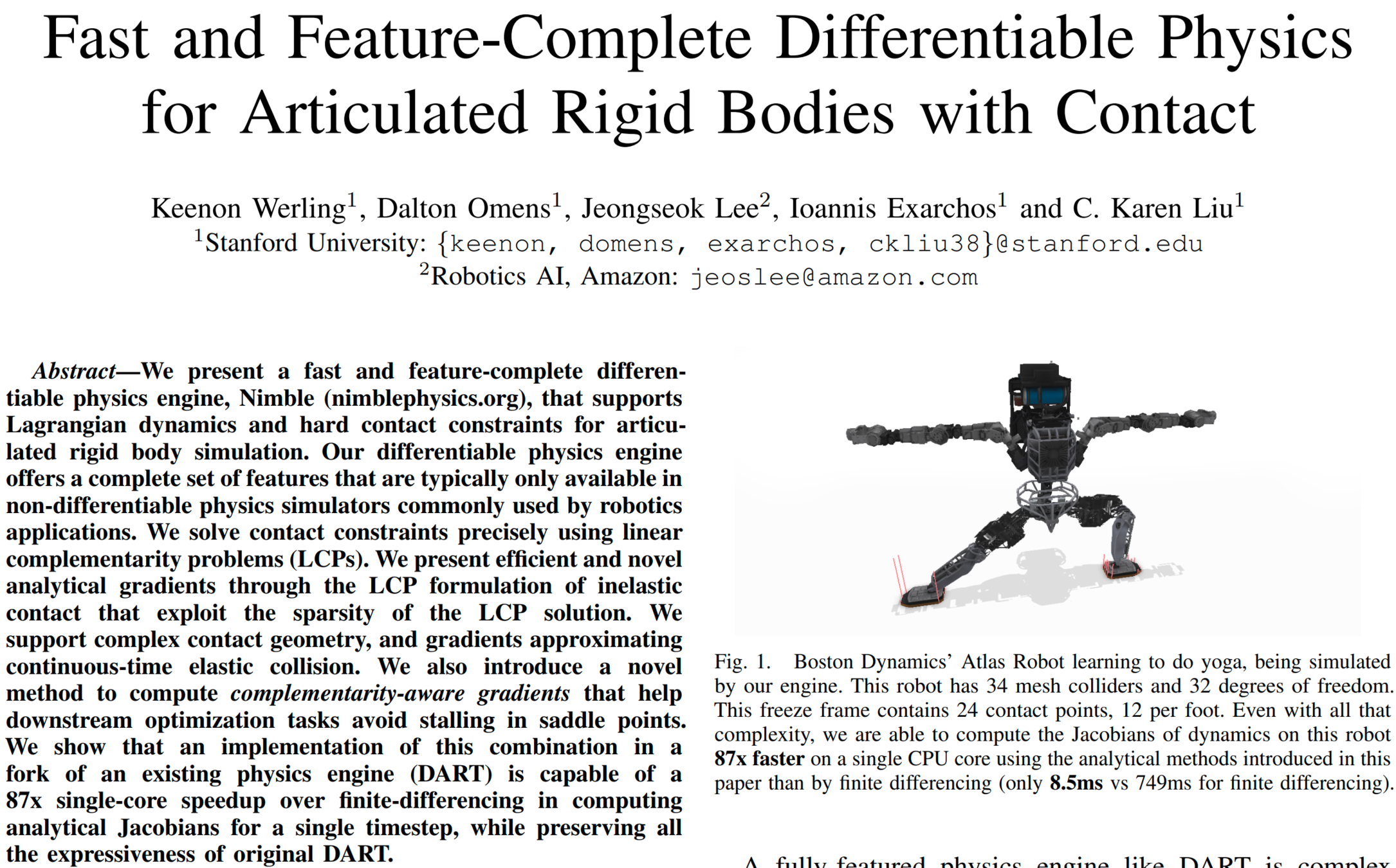

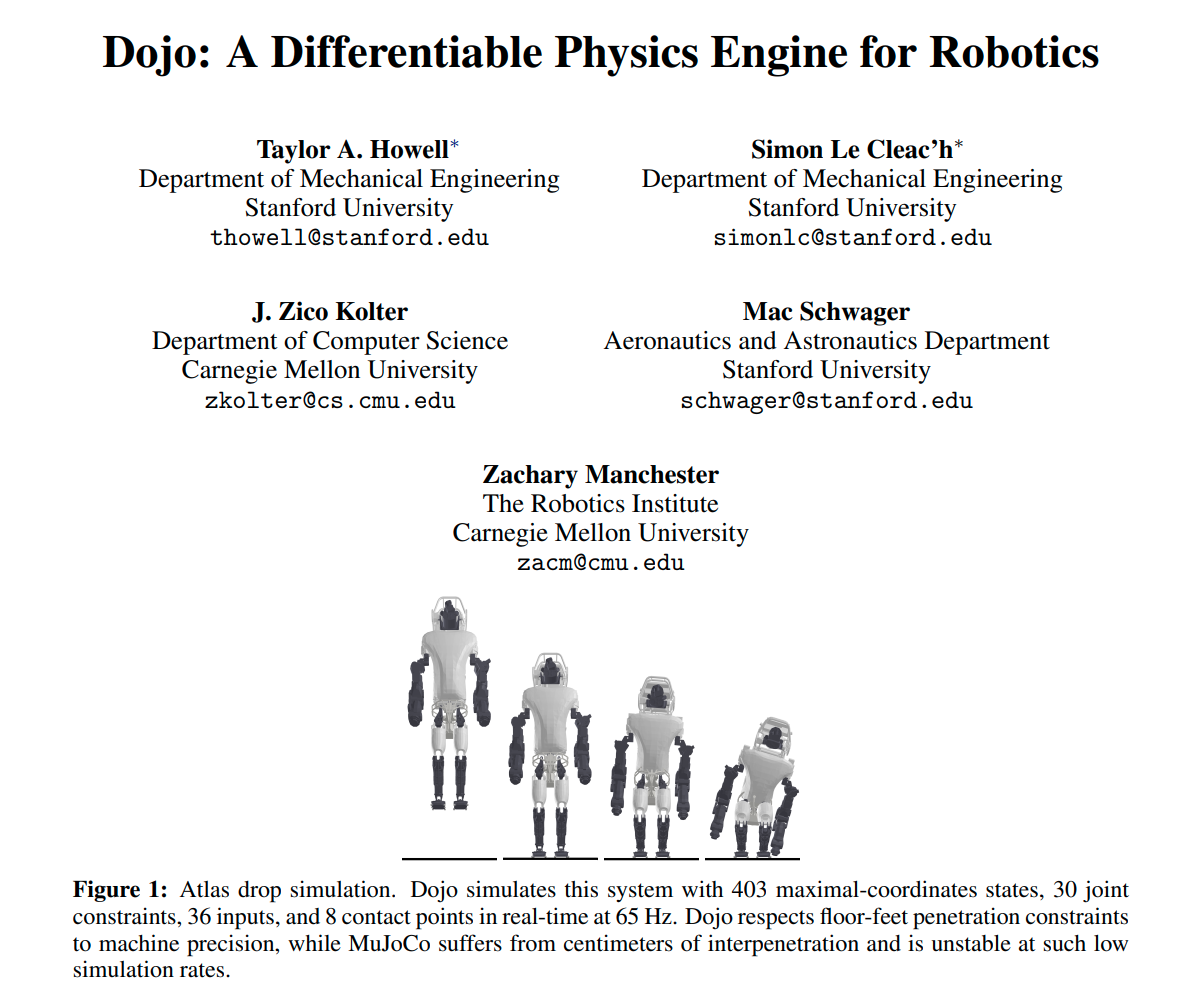

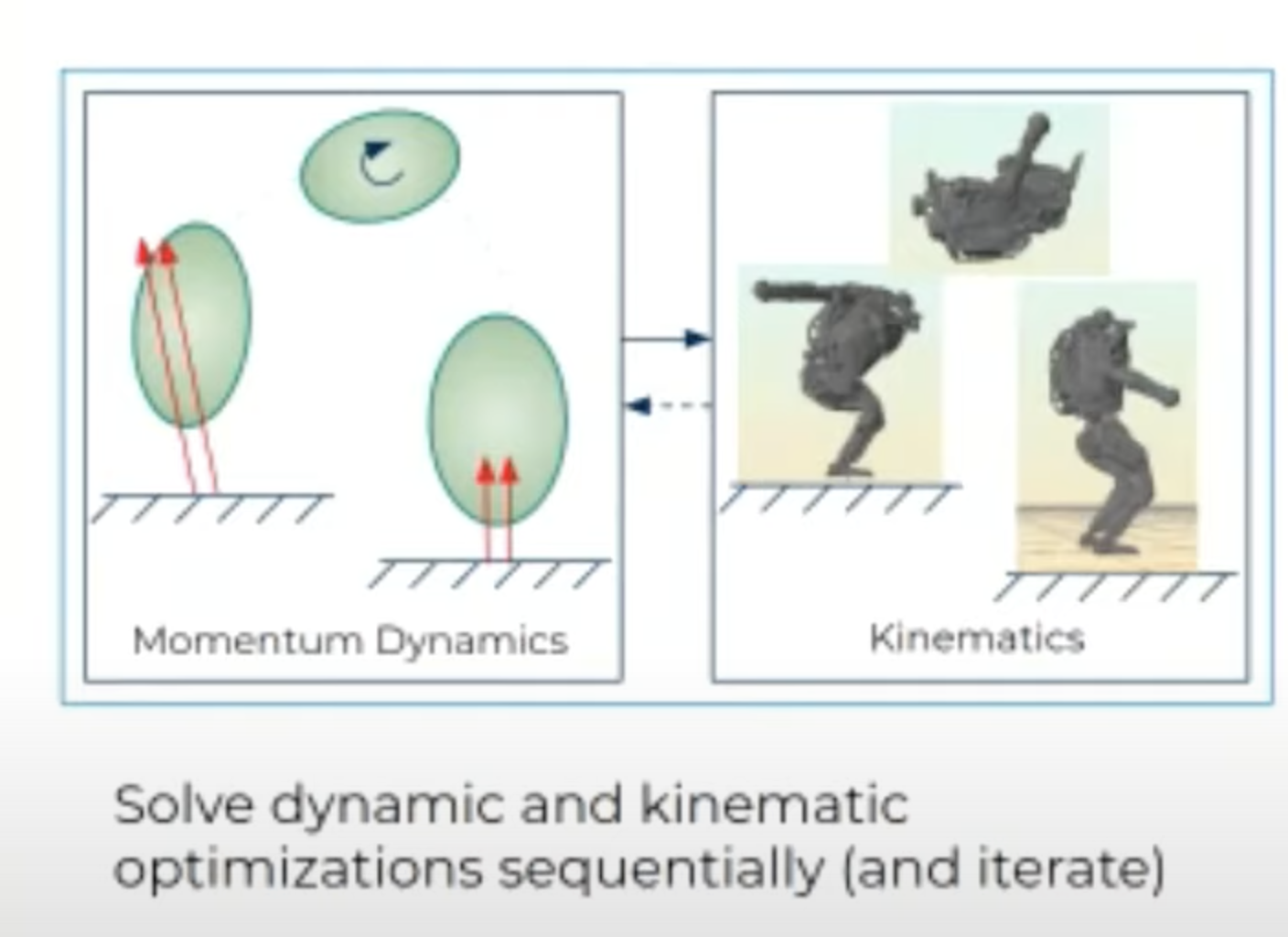

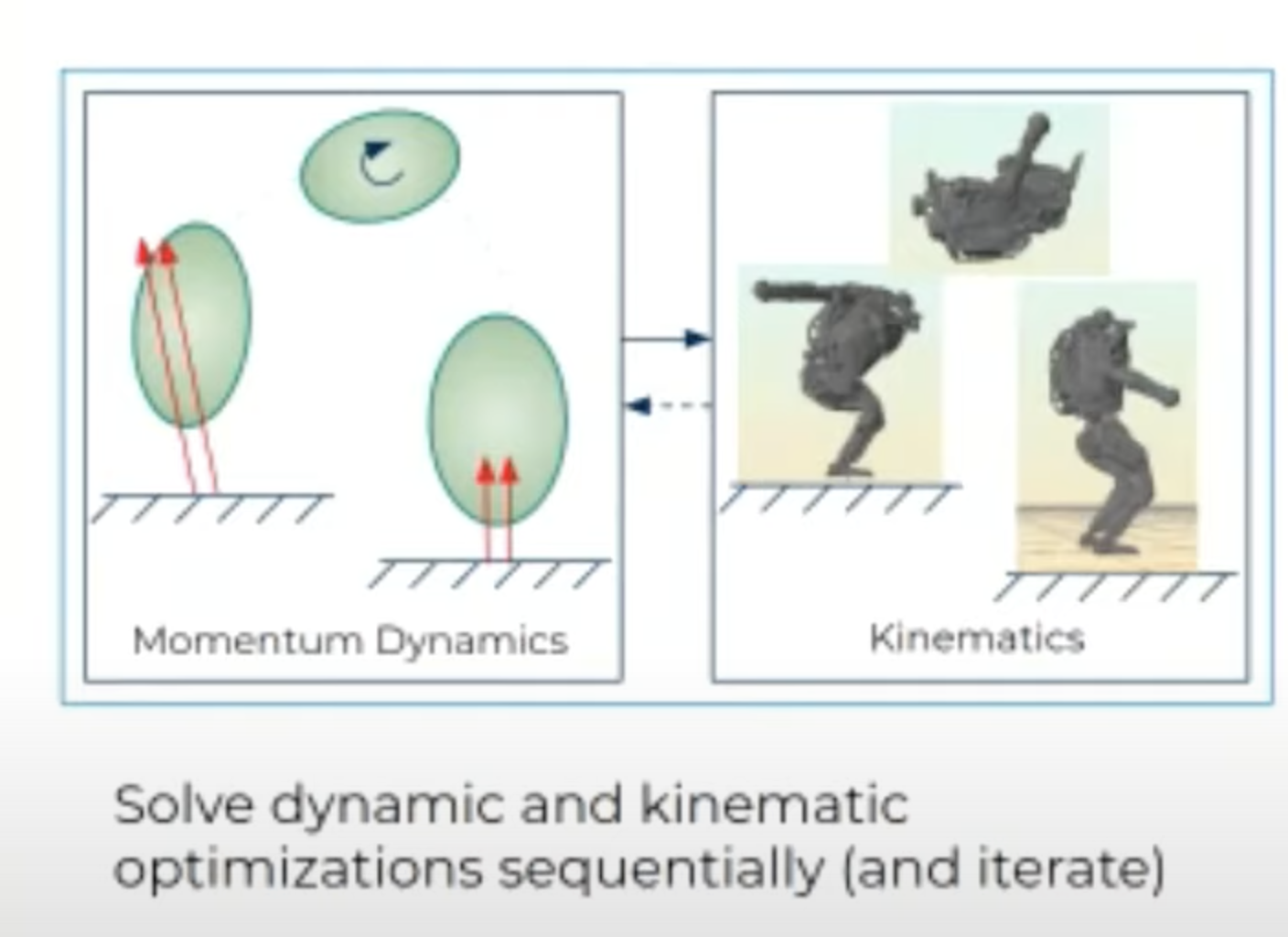

Model-based Planning through Contact: a Quick Review

- Trajectory Optimization through Contact

- Mordatch, Igor, Emanuel Todorov, and Zoran Popović. "Discovery of complex behaviors through contact-invariant optimization." ACM Transactions on Graphics (TOG), 2012.

- Hogan, François Robert, and Alberto Rodriguez. "Feedback control of the pusher-slider system: A story of hybrid and underactuated contact dynamics." arXiv preprint arXiv:1611.08268, 2016.

- Posa, Michael, Cecilia Cantu, and Russ Tedrake. "A direct method for trajectory optimization of rigid bodies through contact." The International Journal of Robotics Research, 2014.

- Aydinoglu, Alp, and Michael Posa. "Real-time multi-contact model predictive control via admm." 2022 International Conference on Robotics and Automation (ICRA), 2022.

- Cheng, Xianyi, et al. "Contact mode guided motion planning for quasidynamic dexterous manipulation in 3d." 2022 International Conference on Robotics and Automation (ICRA), 2022.

- Marcucci, Tobia, et al. "Approximate hybrid model predictive control for multi-contact push recovery in complex environments." 2017 IEEE-RAS 17th International Conference on Humanoid Robotics (Humanoids), 2017.

- Tao Pang*, H.J. Terry Suh*, Lujie Yang, Russ Tedrake, "Global Planning for Contact-Rich Manipulation via Local Smoothing of Quasi-dynamic Contact Models", under review, 2022.

- Locally enumerate mode switches (fast)

- Globally enumerate Mode Switches (slow)

Good Scalability (Smoothed dynamics)

Limited Scalability (Mode Transitions)

Local Planning

Global Planning

- Our method: Global planning with RRT + Smoothed dynamics [7]

[1] Mordatch et al.

[3] Posa et al.

[6] Marcucci et al.

[4] Aydinoglu and Posa.

[2] Hogan and Rodriguez.

- Sampling mode switches.

[5] Cheng et al.

What can we learn from RL (reinforcement learning)?

- RL generates impressive policies for specific tasks, but...

- RL requires heavy offline computation,

- The Nvidia policy is trained with "only 8 NVIDIA A40 GPUs" for 60 hours.

- The learned policy does not generalize (e.g. to opening a door).

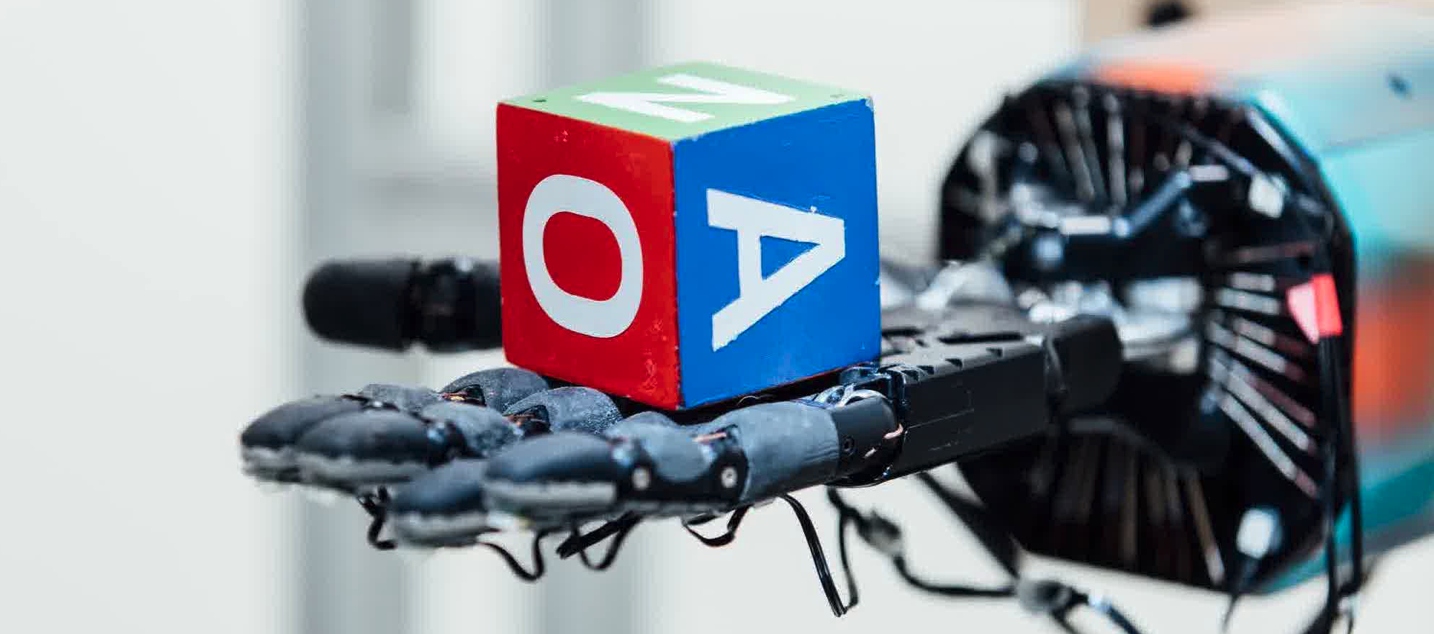

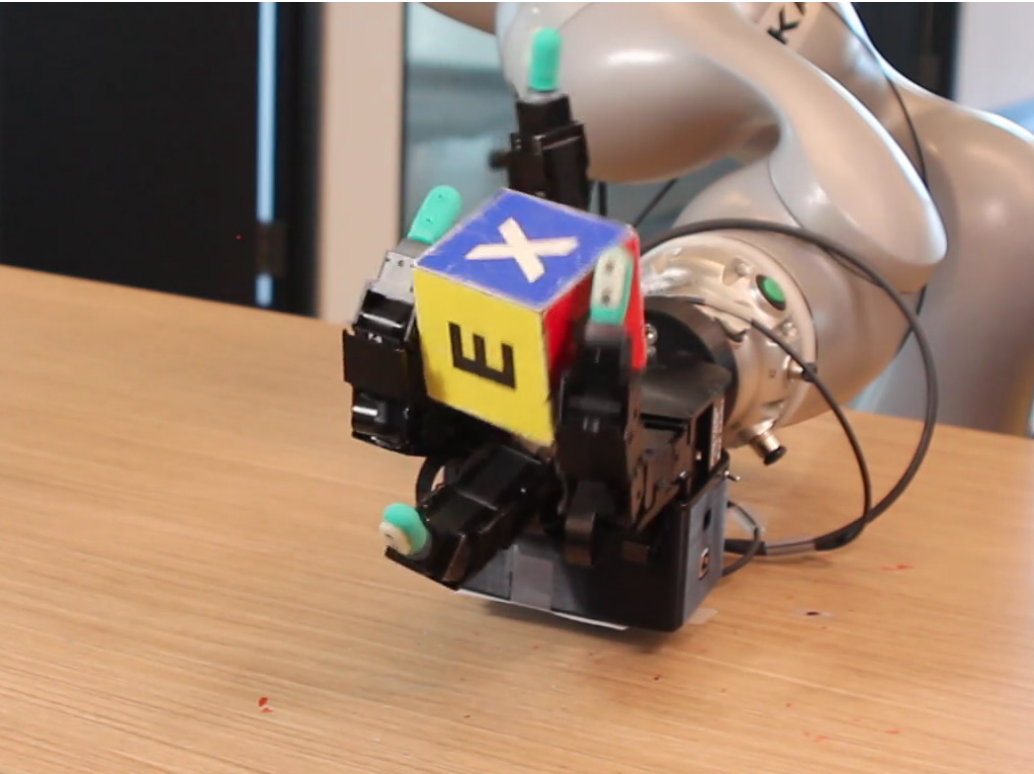

- (OpenAI) Andrychowicz et al. "Learning dexterous in-hand manipulation." The International Journal of Robotics Research, 2020.

- (Nvidia) Handa et al. "DeXtreme: Transfer of Agile In-hand Manipulation from Simulation to Reality", arXiv preprint, 2022.

- H.J. Terry Suh*, Tao Pang*, Russ Tedrake, "Bundled Gradients through Contact via Randomized Smoothing", RA-L, 2022.

- Tao Pang*, H.J. Terry Suh*, Lujie Yang, Russ Tedrake, "Global Planning for Contact-Rich Manipulation via Local Smoothing of Quasi-dynamic Contact Models", under review, 2022.

[1] OpenAI, 2018

[2] Nvidia, 2022

How does RL power through non-smooth contact dynamics? [3][4]

- RL maximizes a stochastic reward using gradients estimated from sampling.

- Sampling has a smoothing effect on the gradients.

In model-based planning, we can smooth contact dynamics, but without using samples (faster!).
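A small sketch of the smoothing effect of sampling. It uses `max(0, x)` as a stand-in for a non-smooth contact response and Gaussian noise of standard deviation `sigma`; both are assumptions for illustration. The sampled estimate averages the pointwise derivative at perturbed inputs, and for this particular function the smoothed derivative also has a closed form, so no samples are needed.

```python
import math
import random


def relu_grad(x):
    """Derivative of max(0, x): zero on the 'no contact' side."""
    return 1.0 if x > 0.0 else 0.0


def sampled_smoothed_gradient(x, sigma, n_samples=20000, seed=0):
    """First-order randomized smoothing: average the gradient over noisy inputs."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        total += relu_grad(x + rng.gauss(0.0, sigma))
    return total / n_samples


def analytic_smoothed_gradient(x, sigma):
    """Closed form of d/dx E[max(0, x + w)], w ~ N(0, sigma^2): the normal CDF."""
    return 0.5 * (1.0 + math.erf(x / (sigma * math.sqrt(2.0))))


# On the flat side (x < 0) the exact gradient is 0, but both smoothed versions are not.
sampled = sampled_smoothed_gradient(-0.1, sigma=0.2)
analytic = analytic_smoothed_gradient(-0.1, sigma=0.2)
```

The closed-form version costs one function evaluation, while the sampled version needs thousands to reach comparable accuracy.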

Terry Suh

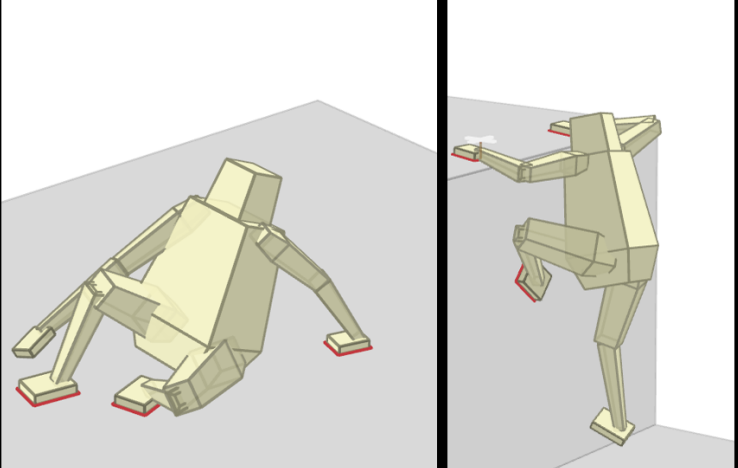

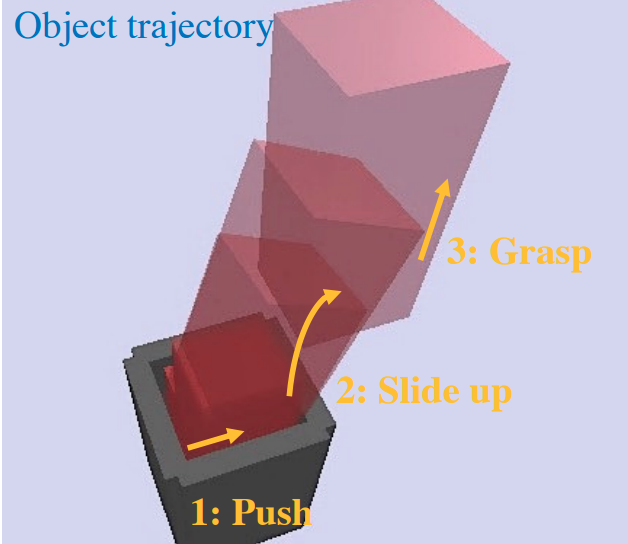

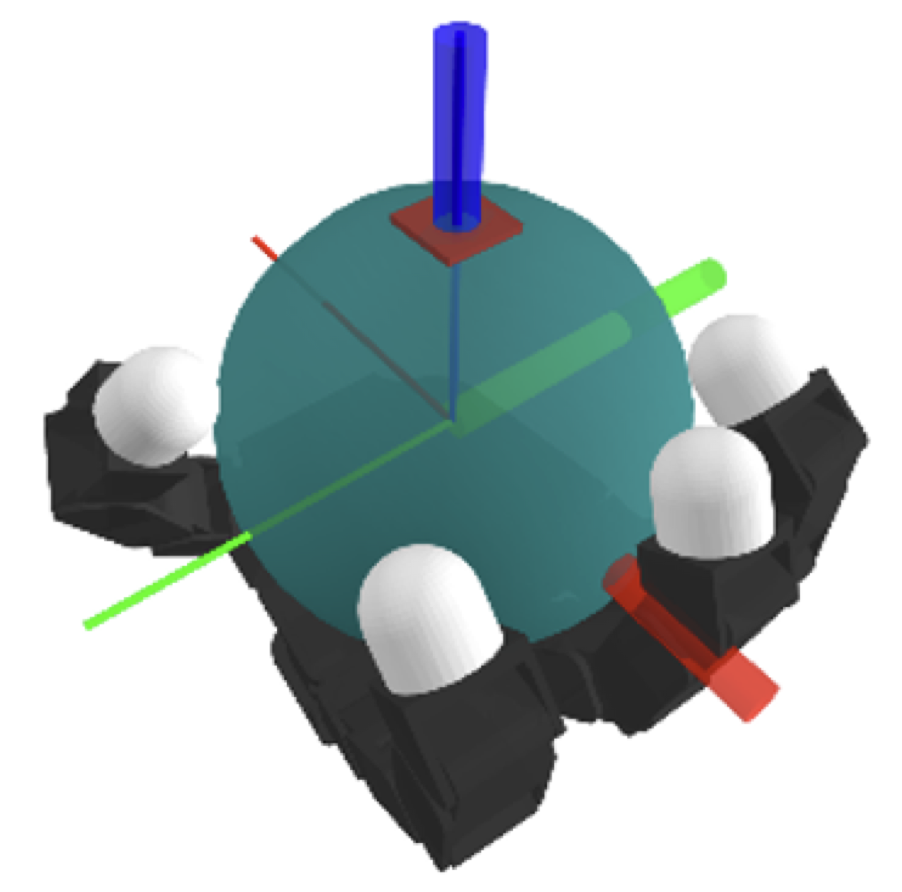

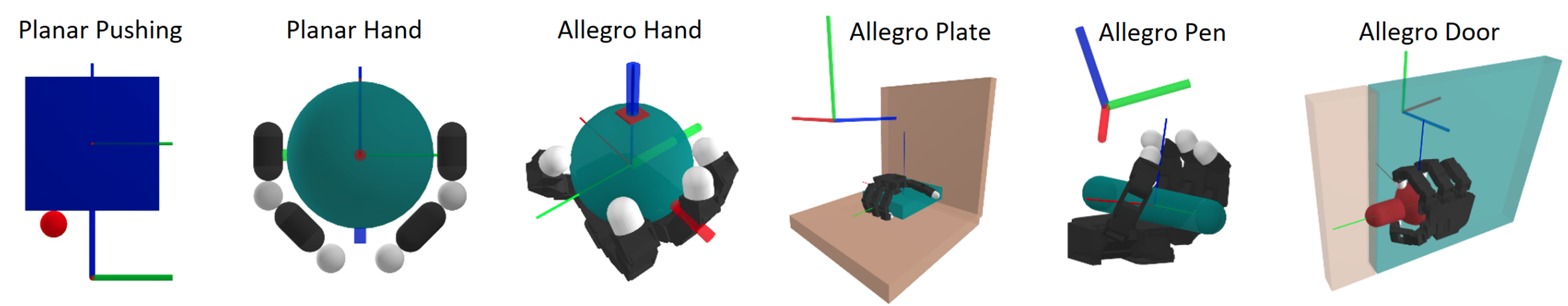

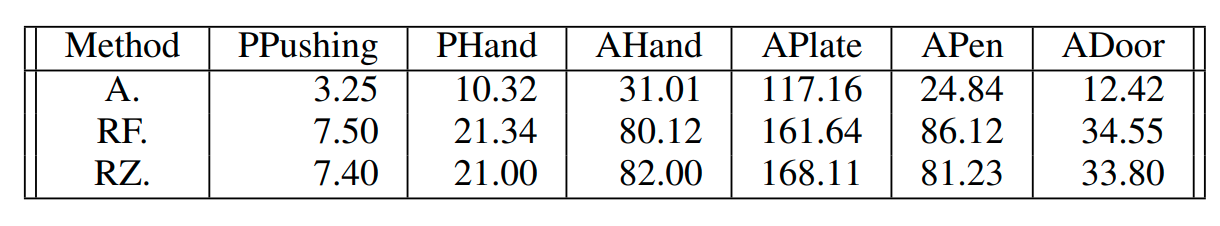

What our planner can do

Contributions

- A novel contact dynamics model which is

- quasi-static

- convex

- differentiable

- amenable to smoothing

- A sampling-based global search algorithm guided by the proposed contact model.

A contact-rich planner that can generate plans for complex systems involving dexterous hands / hardware with about 1 minute of online computation on a laptop.

In contrast, RL-based methods need tens of hours of offline computation on a beefy workstation.

Our planner is enabled by

A Quasi-static, Convex, Differentiable Contact Dynamics Model

What is quasi-static dynamics?

- Quasi-static: velocity is small so that inertial and Coriolis forces are negligible.

- \(q \coloneqq [q^\mathrm{u}, q^\mathrm{a}]\): system configuration.

- \(q^\mathrm{a}\): actuated, impedance-controlled robots.

- \(q^\mathrm{u}\): un-actuated objects.

- \( u \): position command to the stiffness-controlled robots [1].

- \(\delta q \coloneqq q_+ - q\)

- \(h\): step size in seconds.

\( q_{+} = f(q, u)\)

- \(\lambda_i\): contact forces/impulses

- \(\phi_i \): signed distances

(KKT)

- A convex quadratic program (QP), after linearizing \(\phi_i\).

- "Minimizing potential energy, subject to non-penetration constraints."

- Tao Pang, Russ Tedrake, "A Convex Quasistatic Time-stepping Scheme for Rigid Multibody Systems with Contact and Friction", ICRA, 2021.

Force balance of the robot.

Force balance of the object.

Non-penetration.

"Contact forces cannot pull."

"Contact force needs contact."
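For reference, one way to write the five conditions named above. This is a reconstruction under assumptions, not the slide's own equation: it assumes frictionless contact, a symmetric positive-definite robot stiffness \(\mathbf{K}_\mathrm{a}\), external torques \(\tau^\mathrm{a}, \tau^\mathrm{u}\), and contact Jacobians \(\mathbf{J}_i = [\mathbf{J}_{\mathrm{u}_i}, \mathbf{J}_{\mathrm{a}_i}]\) obtained by linearizing \(\phi_i\). None of these extra symbols are defined in the text here.

\[
\begin{aligned}
h \mathbf{K}_\mathrm{a} (q^\mathrm{a} + \delta q^\mathrm{a} - u) &= h \tau^\mathrm{a} + \sum_i \mathbf{J}_{\mathrm{a}_i}^\top \lambda_i && \text{(force balance of the robot)} \\
0 &= h \tau^\mathrm{u} + \sum_i \mathbf{J}_{\mathrm{u}_i}^\top \lambda_i && \text{(force balance of the object)} \\
\phi_i + \mathbf{J}_i \, \delta q &\geq 0 && \text{(non-penetration)} \\
\lambda_i &\geq 0 && \text{(contact forces cannot pull)} \\
\lambda_i \, (\phi_i + \mathbf{J}_i \, \delta q) &= 0 && \text{(contact force needs contact)}
\end{aligned}
\]

Under the same assumptions, these are the KKT conditions of a potential-energy QP with the \(\lambda_i\) as multipliers:

\[
\min_{\delta q} \; \tfrac{h}{2} (q^\mathrm{a} + \delta q^\mathrm{a} - u)^\top \mathbf{K}_\mathrm{a} (q^\mathrm{a} + \delta q^\mathrm{a} - u) - h \, {\tau^\mathrm{a}}^\top \delta q^\mathrm{a} - h \, {\tau^\mathrm{u}}^\top \delta q^\mathrm{u}
\quad \text{s.t.} \quad \phi_i + \mathbf{J}_i \, \delta q \geq 0 .
\]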

Quasi-static dynamics is good for manipulation planning!

- Spatial benefit: Smaller state space (\([q, v]\) vs. only \(q\)).

- Temporal benefit: Ignoring transients => looking into the future with fewer steps.

- Many manipulation tasks are quasi-static.

Second-order Dynamics

Quasi-static Dynamics

Quasi-static dynamics needs regularization

Quasi-static Dynamics

- The quadratic cost is positive semi-definite.

- The object can be anywhere between the robot and the wall.

Regularized least squares

Regularized Quasi-static Dynamics

- The quadratic cost is now positive definite.

- "Among all possible object motions, give me the one that moves the least."

- Picking \(\epsilon = m^\mathrm{u} / h\) gives Matt Mason's definition of quasi-dynamic dynamics.
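A minimal sketch of a regularized quasi-static step, assuming a 1D toy that is not the slide's exact system: a pusher (actuated) to the left of a box (un-actuated), zero body widths, no external forces, robot stiffness `k`, and cost `0.5*h*k*(q_a_next - u)^2 + 0.5*eps*(q_u_next - q_u)^2` subject to `q_u_next >= q_a_next`. With a single constraint, the QP has a closed-form solution.

```python
def quasi_static_step(q_u, u, h=0.1, k=100.0, eps=1.0):
    """One regularized step of the assumed 1D toy; returns (q_u_next, q_a_next)."""
    if u <= q_u:
        # Constraint inactive: the pusher reaches its command and the box,
        # thanks to the eps term, is the motion "that moves the least".
        return q_u, u
    # Constraint active: both bodies end at the same point, a weighted average
    # of the command (weight h*k) and the box's current position (weight eps).
    x = (h * k * u + eps * q_u) / (h * k + eps)
    return x, x


no_contact = quasi_static_step(q_u=0.0, u=-0.2)
pushed = quasi_static_step(q_u=0.0, u=0.5)

# Quasi-dynamic choice of the regularizer for a hypothetical box mass m_u.
m_u, h = 0.5, 0.1
pushed_qd = quasi_static_step(q_u=0.0, u=0.5, h=h, eps=m_u / h)
```

As `eps` shrinks toward zero the pushed box tracks the command exactly; a larger `eps` (a heavier box in the quasi-dynamic reading) makes it lag behind.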

What about general systems with friction?

- For a frictionless multi-body system with \(n_\mathrm{c}\) contacts, the dynamics can be solved as a QP.

- Anitescu [1] has a nice convex approximation of Coulomb friction constraints, allowing us to write down the dynamics as a Second-Order Cone Program (SOCP):

- \(\mathcal{K}^\star_i\) is the dual of the \(i\)-th friction cone.

- Still convex!

Tangential displacements

Dynamics of the Toy Problem

- Anitescu, Mihai. "Optimization-based simulation of nonsmooth rigid multibody dynamics." Mathematical Programming 105.1 (2006): 113-143.

Differentiability

Convex, Quasi-Static, Differentiable Dynamics (an SOCP)

- \(\mathbf{A}, \mathbf{B}\) can then be computed by applying the Implicit Function Theorem to the active constraints at optimality.

- Standard practice for many differentiable simulators.

- Allows first-order randomized smoothing.
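A sketch of the implicit-differentiation step for \(\mathbf{B} = \partial q_+ / \partial u\), assuming a 1D frictionless toy (box `q_u`, pusher `q_a`, one contact with signed distance `q_u - q_a`) rather than the full SOCP. The matrices `Q`, `b`, `J` and the function name are illustrative assumptions. With the contact active, the KKT conditions form a linear system, and differentiating that system with respect to `u` gives the gradient.

```python
import numpy as np


def step_and_gradient(q_u, q_a, u, h=0.1, k=100.0, eps=1.0):
    """Solve the toy QP over dq = [dq_u, dq_a]; return q_next and B = dq_next/du."""
    Q = np.diag([eps, h * k])                 # quadratic cost
    b = np.array([0.0, -h * k * (u - q_a)])   # linear cost, depends on u
    db_du = np.array([0.0, -h * k])
    J = np.array([[1.0, -1.0]])               # phi_next = phi + J @ dq
    phi = q_u - q_a

    dq_free = np.linalg.solve(Q, -b)          # minimizer ignoring the contact
    if phi + (J @ dq_free)[0] >= 0.0:
        # Inactive contact: differentiate Q dq = -b.
        dq = dq_free
        B = np.linalg.solve(Q, -db_du)
    else:
        # Active contact: KKT system [[Q, -J^T], [J, 0]] [dq; lam] = [-b; -phi].
        K = np.block([[Q, -J.T], [J, np.zeros((1, 1))]])
        dq = np.linalg.solve(K, np.concatenate([-b, [-phi]]))[:2]
        # Differentiate both sides w.r.t. u (phi does not depend on u).
        B = np.linalg.solve(K, np.concatenate([-db_du, [0.0]]))[:2]
    return np.array([q_u, q_a]) + dq, B


q_next, B_contact = step_and_gradient(q_u=0.0, q_a=-0.5, u=0.5)
_, B_free = step_and_gradient(q_u=0.0, q_a=-0.5, u=-0.2)
```

Checking the unconstrained minimizer for feasibility is enough to pick the active set here only because the toy has a single constraint; a general solver would report the active set itself.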

Analytic Smoothing

Optimality Condition

KKT

Original Dynamics

Smoothed Dynamics

Analytic Smoothing with Friction

Put the constraints in a generalized logarithm for \(\mathcal{K}_i^\star\)

- An unconstrained convex program.

- Can be solved with Newton's method.

- Also differentiable.
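A sketch of the barrier idea on a 1D frictionless toy, where the ordinary logarithm plays the role of the generalized log. The toy (box `q_u`, pusher `q_a`, signed distance `q_u - q_a`), the weight `kappa`, and the backtracking line search are assumptions for illustration.

```python
import numpy as np


def smoothed_step(q_u, q_a, u, h=0.1, k=100.0, eps=1.0, kappa=100.0, iters=100):
    """Newton's method on the log-barrier version of the assumed 1D toy step.

    Minimizes 0.5*eps*(x_u - q_u)^2 + 0.5*h*k*(x_a - u)^2 - log(x_u - x_a)/kappa
    over the next configuration x = [x_u, x_a]; needs q_u > q_a to start feasible.
    """
    Q = np.diag([eps, h * k])
    x_ref = np.array([q_u, u])
    c = np.array([1.0, -1.0])                 # signed distance phi(x) = c @ x

    def cost(x):
        phi = c @ x
        if phi <= 0.0:
            return np.inf                     # outside the barrier's domain
        d = x - x_ref
        return 0.5 * d @ Q @ d - np.log(phi) / kappa

    x = np.array([q_u, q_a], dtype=float)
    for _ in range(iters):
        phi = c @ x
        grad = Q @ (x - x_ref) - c / (kappa * phi)
        if np.linalg.norm(grad) < 1e-10:
            break
        hess = Q + np.outer(c, c) / (kappa * phi**2)
        step = np.linalg.solve(hess, -grad)
        t = 1.0                               # backtracking keeps phi > 0
        while t > 1e-12 and cost(x + t * step) > cost(x) + 1e-4 * t * (grad @ step):
            t *= 0.5
        x = x + t * step
    return x


# Command stops short of the box, yet the smoothed box still moves a little.
x_soft = smoothed_step(q_u=0.0, q_a=-0.5, u=-0.2)
# A large kappa approaches the exact (non-smooth) answer of 5/11 for this toy.
x_sharp = smoothed_step(q_u=0.0, q_a=-0.5, u=0.5, kappa=1e4)
```

The first call shows the "force at a distance" that smoothing introduces; the second shows that the barrier weight controls how close the smoothed dynamics stay to the original.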

Convex, Quasi-Dynamic, Differentiable Dynamics (an SOCP)

Notation:

Original dynamics

Smoothed dynamics

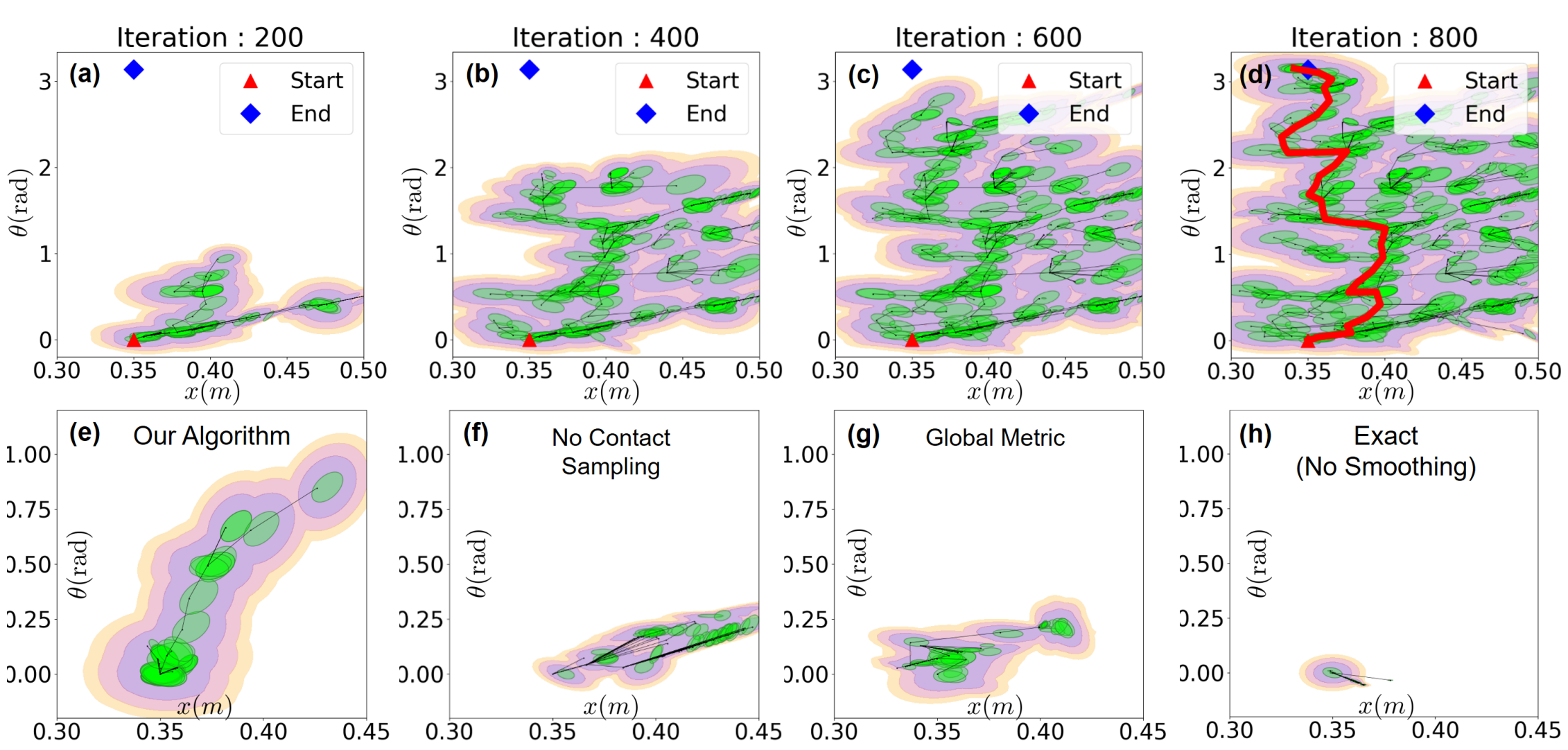

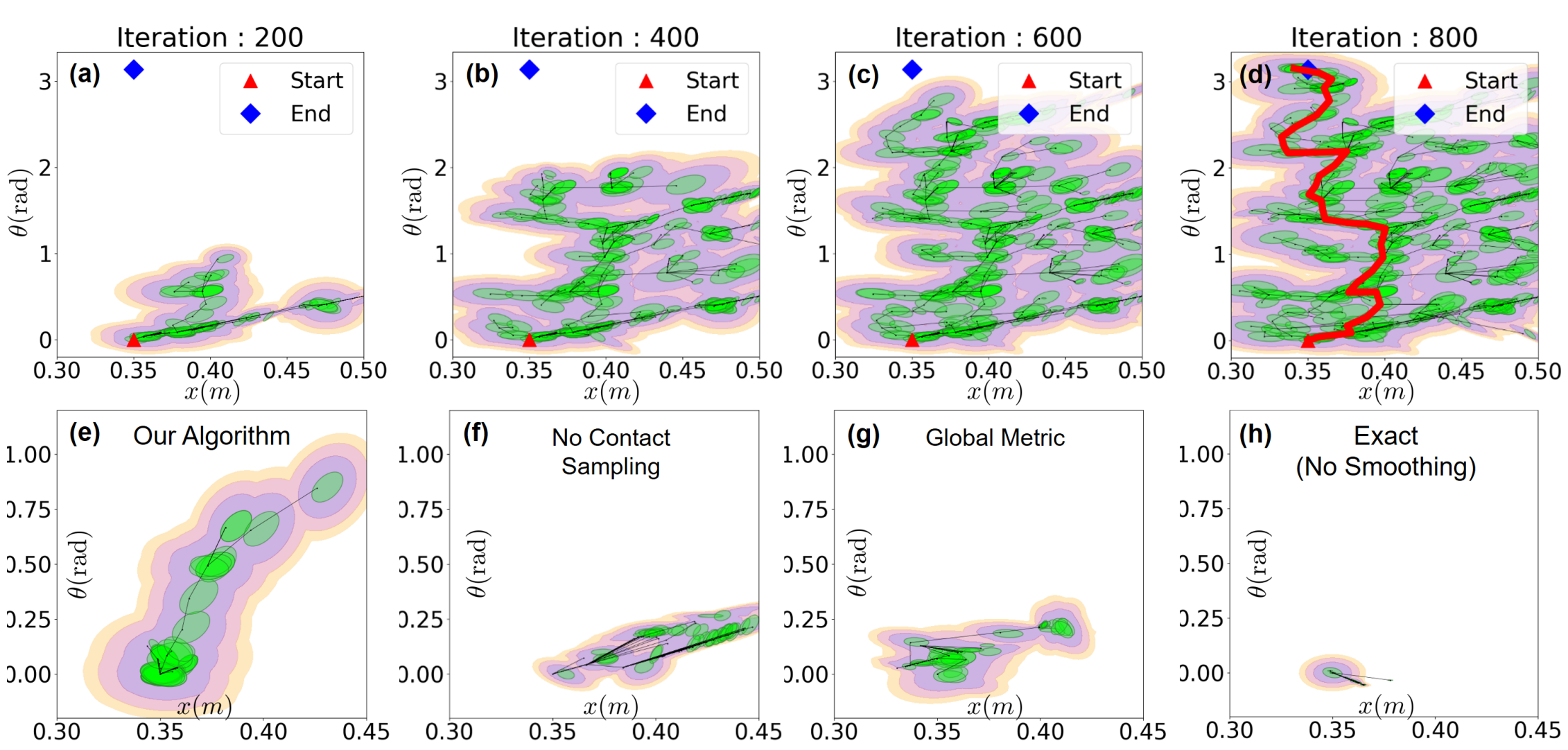

Global Sampling-based Contact-rich Planning with Quasi-static Contact Models

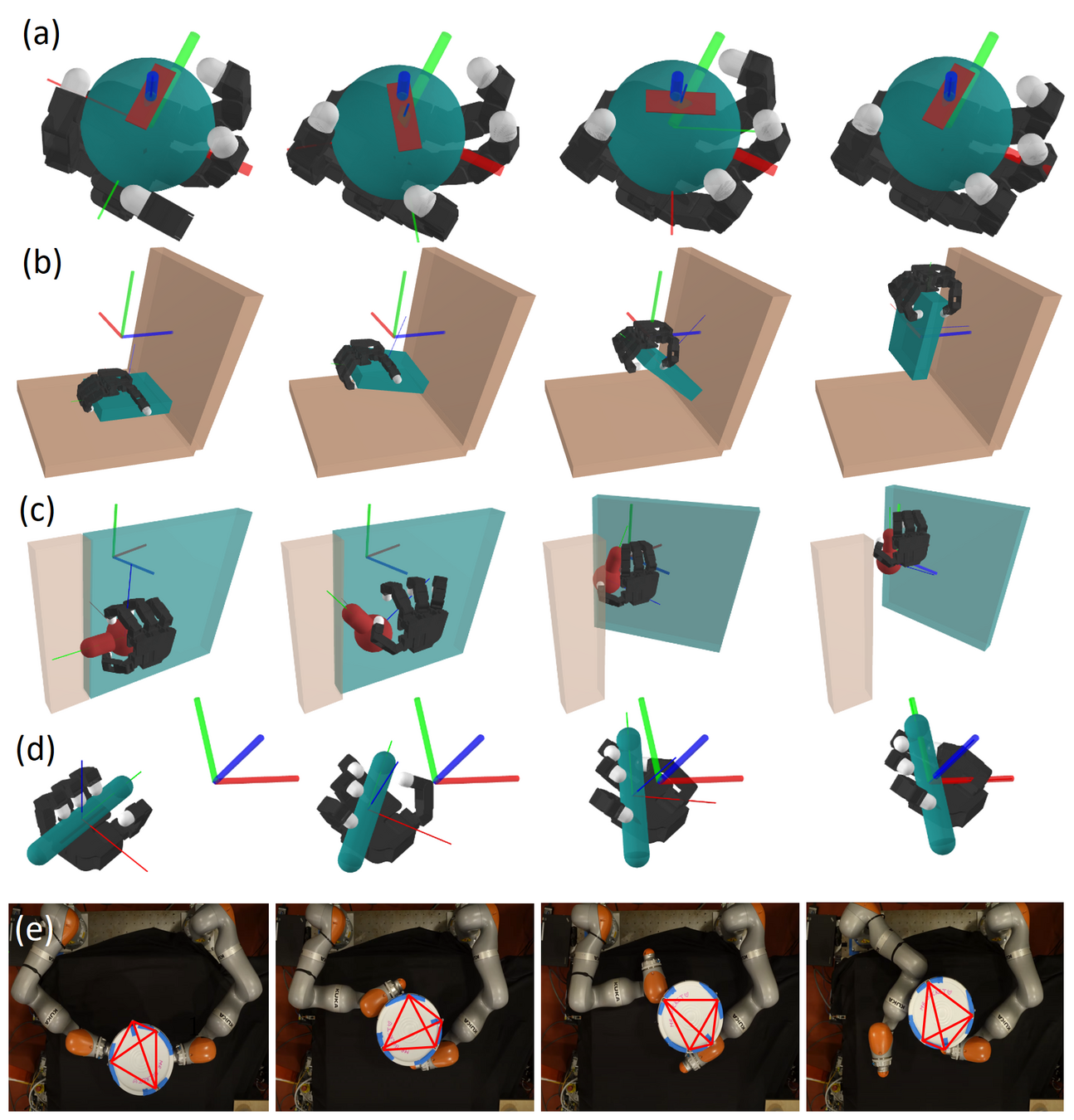

Smoothed gradients enable trajectory optimization for dexterous hands, but...

Task: Turning the ball by 30 degrees.

What about 180 degrees?

Finding this trajectory requires powering through local minima!

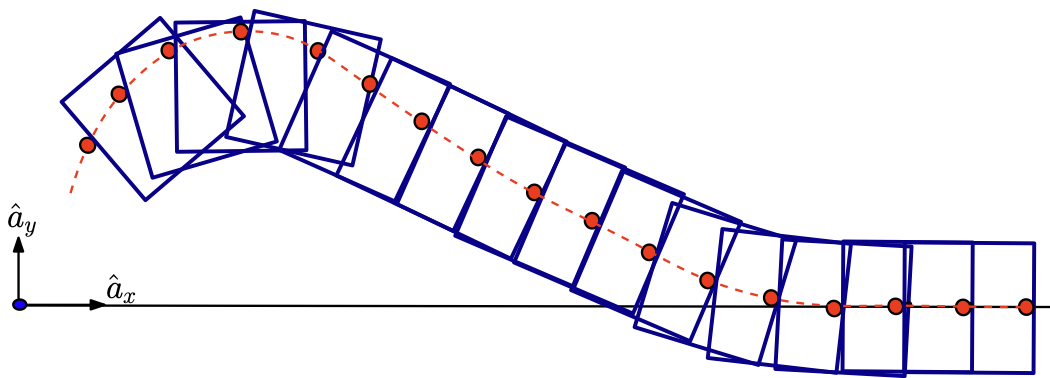

Sampling-based Motion Planning is Great at Global Exploration

- Rapidly-Exploring Random Tree (RRT): iteratively builds a tree that fills the state space. [1]

- Steven M. LaValle, "Planning Algorithms", Cambridge University Press, 2006.

One iteration of RRT (simplified)

(1) Sample subgoal

(2) Find nearest node

(3) Grow towards
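The three steps can be sketched for a point in the plane with no obstacles or dynamics. The Euclidean metric, the unit-square sampling region, and the fixed `step` length are assumptions chosen for brevity.

```python
import math
import random


def rrt_iteration(tree, subgoal, step=0.1):
    """Grow `tree` (a dict mapping node -> parent) one step toward `subgoal`."""
    # (2) Nearest node under the Euclidean distance.
    nearest = min(tree, key=lambda node: math.dist(node, subgoal))
    gap = math.dist(nearest, subgoal)
    if gap == 0.0:
        return nearest                        # subgoal is already in the tree
    # (3) Move at most `step` from the nearest node toward the subgoal.
    scale = min(1.0, step / gap)
    new_node = tuple(a + scale * (b - a) for a, b in zip(nearest, subgoal))
    tree[new_node] = nearest
    return new_node


rng = random.Random(0)
tree = {(0.0, 0.0): None}
first = rrt_iteration(tree, (1.0, 0.0))
for _ in range(200):
    # (1) Sample a subgoal uniformly from the unit square.
    rrt_iteration(tree, (rng.random(), rng.random()))
```

Because subgoals are drawn from the whole space, nodes on the frontier of the tree are chosen most often, which is what pulls the tree outward.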

"Nearest" is tricky to define under dynamics constraint.

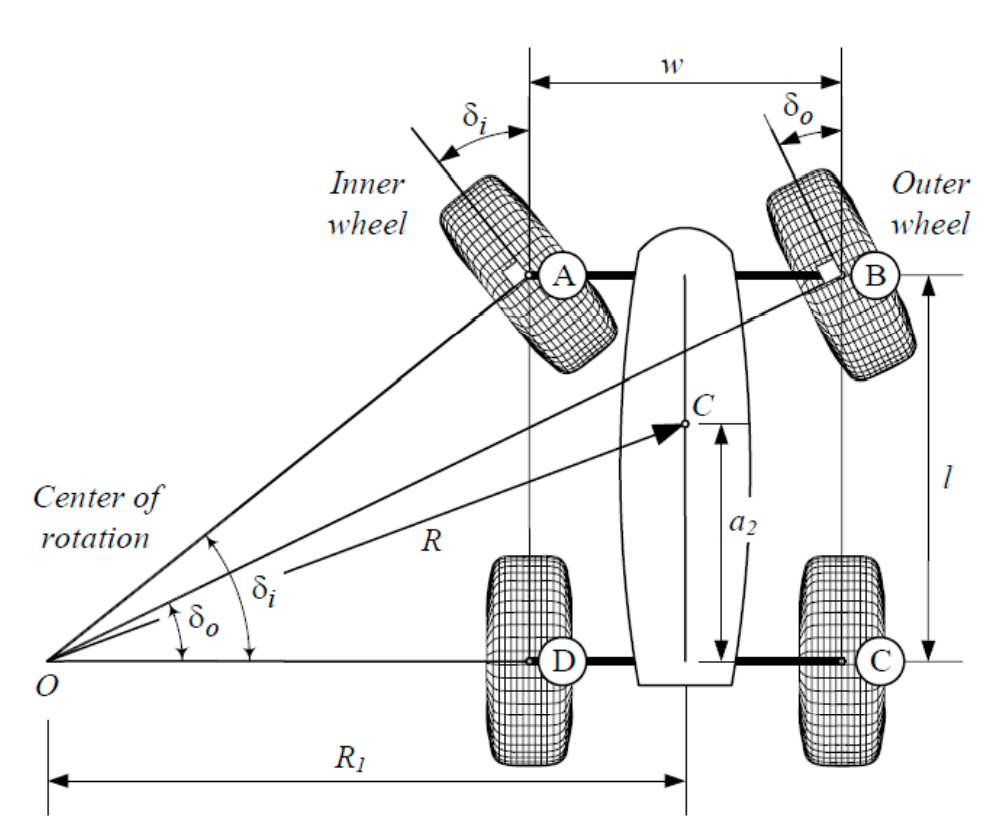

- Dynamic reachability is essential for efficient exploration. [1]

- H.J.T. Suh, J. Deacon, Q. Wang, "A Fast PRM Planner for Car-like Vehicles", self-hosted, 2018.

How do we measure reachability under the contact-dynamics constraint?

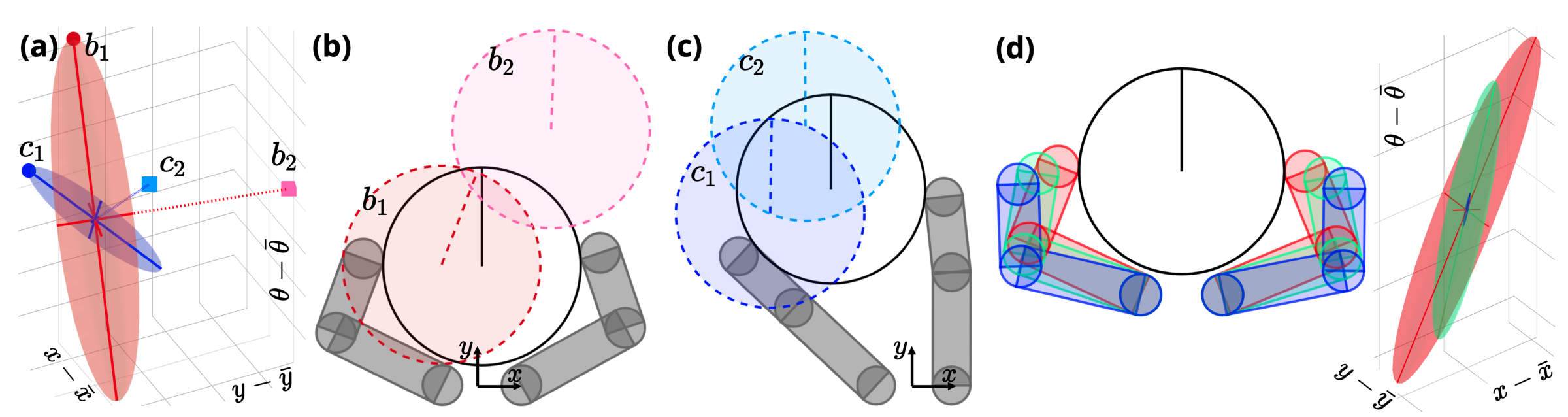

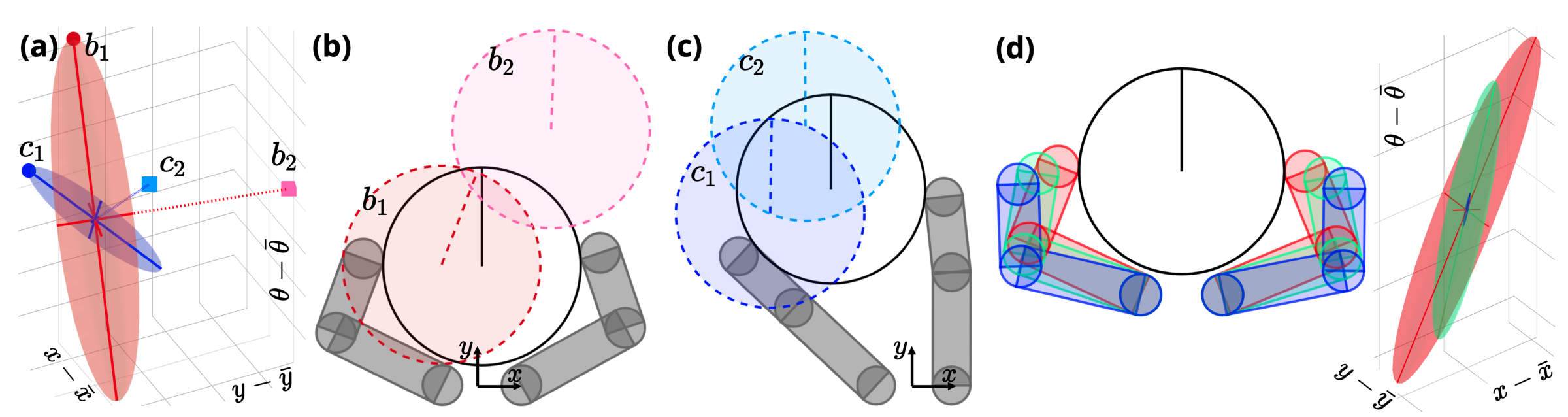

A distance "metric" based on smoothed linearization

Smoothed Contact Dynamics

A node in the RRT tree: \(\bar{q} = [\bar{q}^\mathrm{a}, \bar{q}^\mathrm{u}]\)

Take the rows corresponding to the object:

Smoothed

Smoothed Input Linearization:

\(\varepsilon \) sub-level set

configuration space of the object.

un-actuated (objects)

\(d_\rho^\mathrm{u}(\cdot; \bar{q})\) locally reflects dynamic reachability

\(\varepsilon \) sub-level set
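A sketch of one plausible form for such a distance. It assumes a Mahalanobis-style quadratic whose shape comes from the object rows of the smoothed input linearization: `B_u` stands for those rows, `mu_u` for the object configuration predicted at the node, and `gamma` for a small regularizer. These names and the exact formula are assumptions, since the slide's equation did not survive extraction.

```python
import numpy as np


def smoothed_distance(q_u, mu_u, B_u, gamma=1e-4):
    """Quadratic distance from a node to an object configuration q_u.

    Sigma = B_u B_u^T + gamma*I is large along directions the robot can push
    the object, so those directions count as "close".
    """
    Sigma = B_u @ B_u.T + gamma * np.eye(B_u.shape[0])
    delta = np.asarray(q_u, dtype=float) - mu_u
    return 0.5 * delta @ np.linalg.solve(Sigma, delta)


# Hypothetical node: one actuator that can only move the object along x.
mu_u = np.zeros(2)
B_u = np.array([[1.0], [0.0]])
d_along = smoothed_distance([1.0, 0.0], mu_u, B_u)    # reachable direction
d_across = smoothed_distance([0.0, 1.0], mu_u, B_u)   # unreachable direction
```

The sub-level sets of this function are ellipsoids, elongated along the directions in which the node can actually move the object.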

(Distance Metric Demo)

RRT through contact (so far)

One iteration of RRT through Contact

(1) Sample subgoal \(\blacktriangle\)

(2) Find nearest node

\(q^\mathrm{a}\) is only changed locally; this goes against the spirit of RRT! (demo)

Dynamically-consistent Extension

is only valid locally.

(3) Grow towards \(\blacktriangle\)

Introducing contact sampling

One iteration of RRT through contact, with contact sampling

(1) Sample a different grasp (\(q^\mathrm{a}\)) for one of the nodes, giving a new distance metric

Contact sampling allows global exploration of \(q^\mathrm{a}\) on the contact manifold!

(2) Find nearest node

(3) Grow towards

Contact-sampler is system-specific

- Treat each finger as a robot arm and solve IK.

- With the hand open, pick a random direction to close the fingers until contact.

RRT through contact

Three modifications to the vanilla RRT are made:

- Nearest node on tree is found using \( d^\mathrm{u}_{\rho}(q; \bar{q}) \).

- Contact sampling: global exploration of robot configuration near the contact manifold.

- Dynamically-consistent extension: edges respect non-smooth quasi-static dynamics.
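A skeleton showing how the three modifications might fit into one loop. Everything system-specific is passed in as a callable, and the callables shown here (`sample_subgoal`, `distance`, `extend`, `sample_contact`), the mixing probability `p_contact`, and the 1D pusher-and-box toy are all hypothetical stand-ins, not the planner's actual interface.

```python
import random


def rrt_through_contact(q0, sample_subgoal, distance, extend, sample_contact,
                        n_iters=100, p_contact=0.5, seed=0):
    """Return a tree as a dict: node id -> (configuration, parent id)."""
    rng = random.Random(seed)
    tree = {0: (q0, None)}
    for _ in range(n_iters):
        if rng.random() < p_contact:
            # Contact sampling: re-grasp from an existing node.
            parent = rng.choice(list(tree))
            q_new = sample_contact(tree[parent][0], rng)
        else:
            # Nearest node under the supplied (smoothed) distance, then a
            # dynamically-consistent extension toward the subgoal.
            goal = sample_subgoal(rng)
            parent = min(tree, key=lambda i: distance(goal, tree[i][0]))
            q_new = extend(tree[parent][0], goal)
        tree[len(tree)] = (q_new, parent)
    return tree


# Toy stand-ins: q = (box position q_u, pusher position q_a), pusher on the left.
def toy_extend(q, goal, max_step=0.2):
    q_u, q_a = q
    u = q_a + max(-max_step, min(max_step, goal - q_a))  # small action only
    return max(q_u, u), u                                # non-smooth toy dynamics


tree = rrt_through_contact(
    q0=(0.0, -0.5),
    sample_subgoal=lambda rng: rng.uniform(-1.0, 1.0),
    distance=lambda goal, q: abs(goal - q[0]),
    extend=toy_extend,
    sample_contact=lambda q, rng: (q[0], q[0]),          # pusher placed on the box
)
```

Keeping the sampler, metric, and extension behind callables mirrors the point that the contact sampler is system-specific while the outer loop is not.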

Distance Metric

RRT tree for a simple system with contacts

Ablation study: what trees look like after growing a fixed number of nodes.

Sim2Real/Hardware Transfer

What's next?

Low-resolution models

High-resolution models

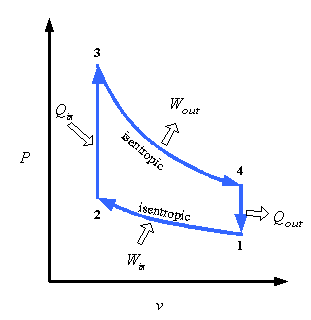

Locomotion

[Boston Dynamics]

Heat Cycle of an Internal Combustion Engine

Contact-rich Manipulation

Quasi-static contact dynamics?

- Full second-order dynamics?

- Second-order centroidal dynamics?

End of Presentation

RRT through contact (so far)

One iteration of RRT through Contact

(3) Grow towards

But only take small actions.

Normalized ellipsoid volume

(1) Sample subgoal

(2) Find nearest node

\(q^\mathrm{a}\) is only changed locally!

Introducing contact sampling

One iteration of RRT through contact, with contact sampling

(1) Sample a different grasp (\(q^\mathrm{a}\)) for one of the nodes, giving a new distance metric

Contact sampling allows global exploration of \(q^\mathrm{a}\) on the contact manifold.

Normalized ellipsoid volume

(2) Find nearest node

(3) Grow towards

But only take small actions.

Sim2Real/Hardware Transfer

Rotating the Ball by 45 degrees.

The modified RRT works well for contact-rich tasks!

Planning wall-clock time (seconds)

Why is contact-rich planning hard?

Contact dynamics is non-smooth!

(a)

(b)

No contact

Contact

No contact

Contact

Global Search with Contact Modes

No contact

Contact

Solve with gradient descent

No contact

Contact

(a)

(b)

No contact

Contact

No contact

Contact

(c)

(d)

Why is contact-rich planning hard?

Contact dynamics is non-smooth!

Two solutions

Descend with gradients of smoothed dynamics

Reason explicitly about contact mode transitions

Contact

No Contact

- Non-linear optimization can scale to complex systems.

- But descent methods get stuck in local minima.

- Good at escaping local minima,

- But mode enumeration scales poorly (exponentially) with the number of contacts.

No contact

Contact

Push Left

Do not Push Left

Push Right

Do not Push Right

x
