Lecture 9: Transformers

Intro to Machine Learning

[video edited from 3b1b]

Recap: Word embedding

Good word-embedding space is equipped with semantically meaningful vector arithmetic

this enables "soft" dictionary look-up:

dict_en2fr = {
    "apple" : "pomme",
    "banana": "banane",
    "lemon" : "citron"}

| Key | Value |
| --- | --- |
| apple | pomme |
| banana | banane |
| lemon | citron |

dict_en2fr = {
    "apple" : "pomme",
    "banana": "banane",
    "lemon" : "citron"}

query = "orange"

output = dict_en2fr[query]

Python would complain with a KeyError, since "orange" is not a key. 🤯

[Figure: query "orange" looked up against the key : value pairs apple : pomme, banana : banane, lemon : citron; output: ???]

But we can probably see the rationale behind something like this:

[Figure: query "orange" against the key : value pairs apple : pomme, banana : banane, lemon : citron; output = 0.1 pomme + 0.1 banane + 0.8 citron]

We put (query, key, value) in "good" embeddings in our human brain, such that mixing the values via these mixing percentages \([0.1, 0.1, 0.8]\) made sense.


very roughly, the attention mechanism in transformers automates this process.

a. compare query and key for merging percentages:

[Figure: dot-product similarity between the query (orange) and each key (apple, banana, lemon), followed by softmax, gives the percentages \([0.1, 0.1, 0.8]\), which weight the values pomme, banane, citron]

b. then output mixed values
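Steps a and b can be sketched in a few lines of NumPy. This is only an illustration under made-up assumptions: the 2-D key and query embeddings below are hand-picked (not learned) so that the percentages land near \([0.1, 0.1, 0.8]\), and the values are one-hot vectors so the output is easy to read.

```python
import numpy as np

def softmax(scores):
    """Turn raw similarity scores into percentages that sum to 1."""
    shifted = scores - np.max(scores)  # shifting does not change the result
    exp = np.exp(shifted)
    return exp / exp.sum()

# Hypothetical embeddings, chosen by hand for illustration only.
key_names = ["apple", "banana", "lemon"]
K = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.5, 1.5]])
value_names = ["pomme", "banane", "citron"]
V = np.eye(3)                      # one-hot stand-ins for value embeddings
q = np.array([1.0, 1.0])           # stand-in embedding for "orange"

# a. compare query and key for merging percentages
scores = K @ q                     # dot-product similarity: [1, 1, 3]
weights = softmax(scores)          # roughly [0.11, 0.11, 0.79]

# b. then output mixed values
output = weights @ V

for name, w in zip(value_names, weights):
    print(f"{w:.2f} {name}")
```

Unlike `dict_en2fr[query]`, this lookup never fails on an unseen query: every query gets some mixture of the stored values.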


Let's see how this intuition becomes a trainable mechanism.


Outline

- Transformers high-level intuition and architecture

- Attention mechanism

- Multi-head attention

- (Applications)


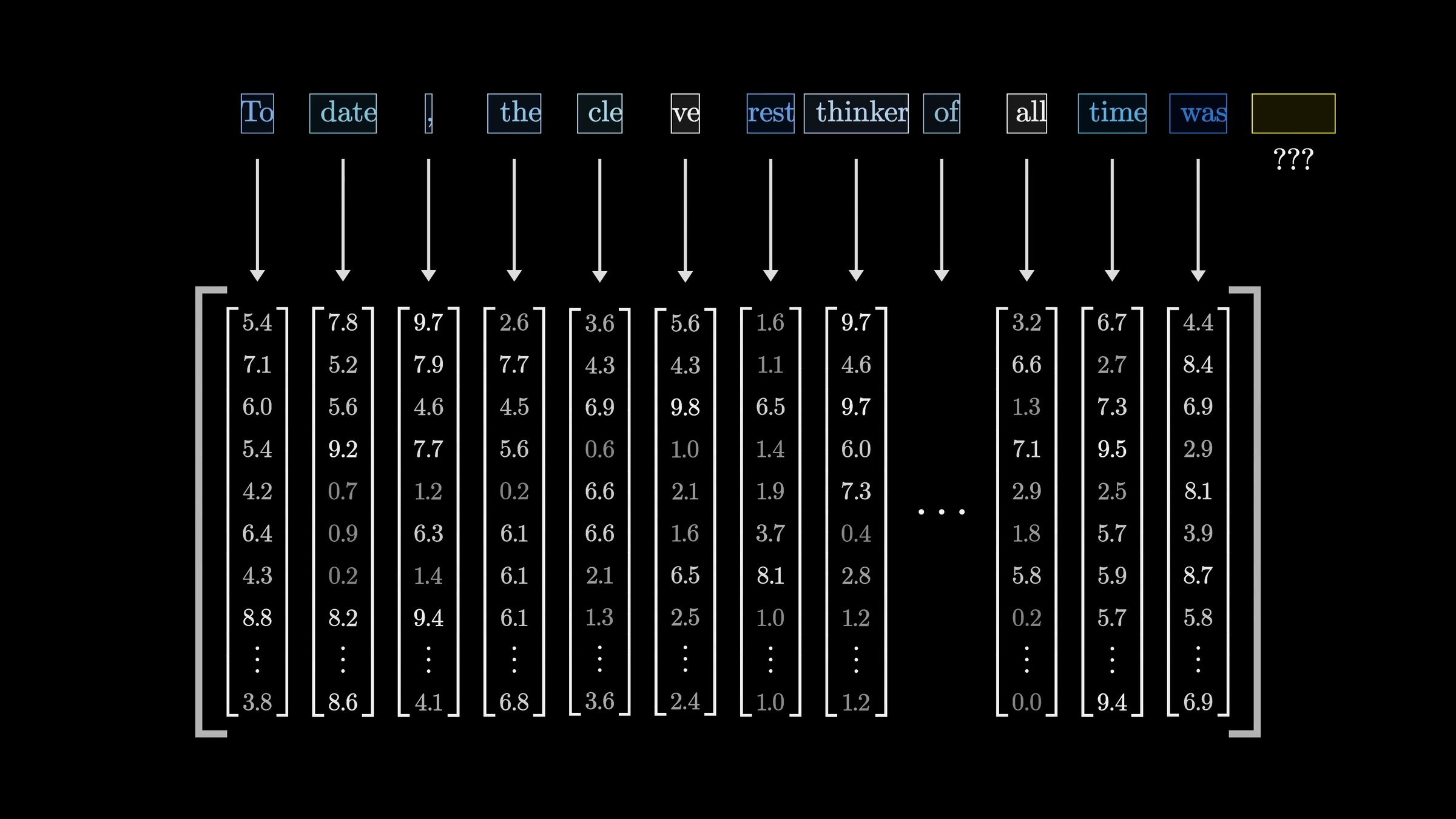

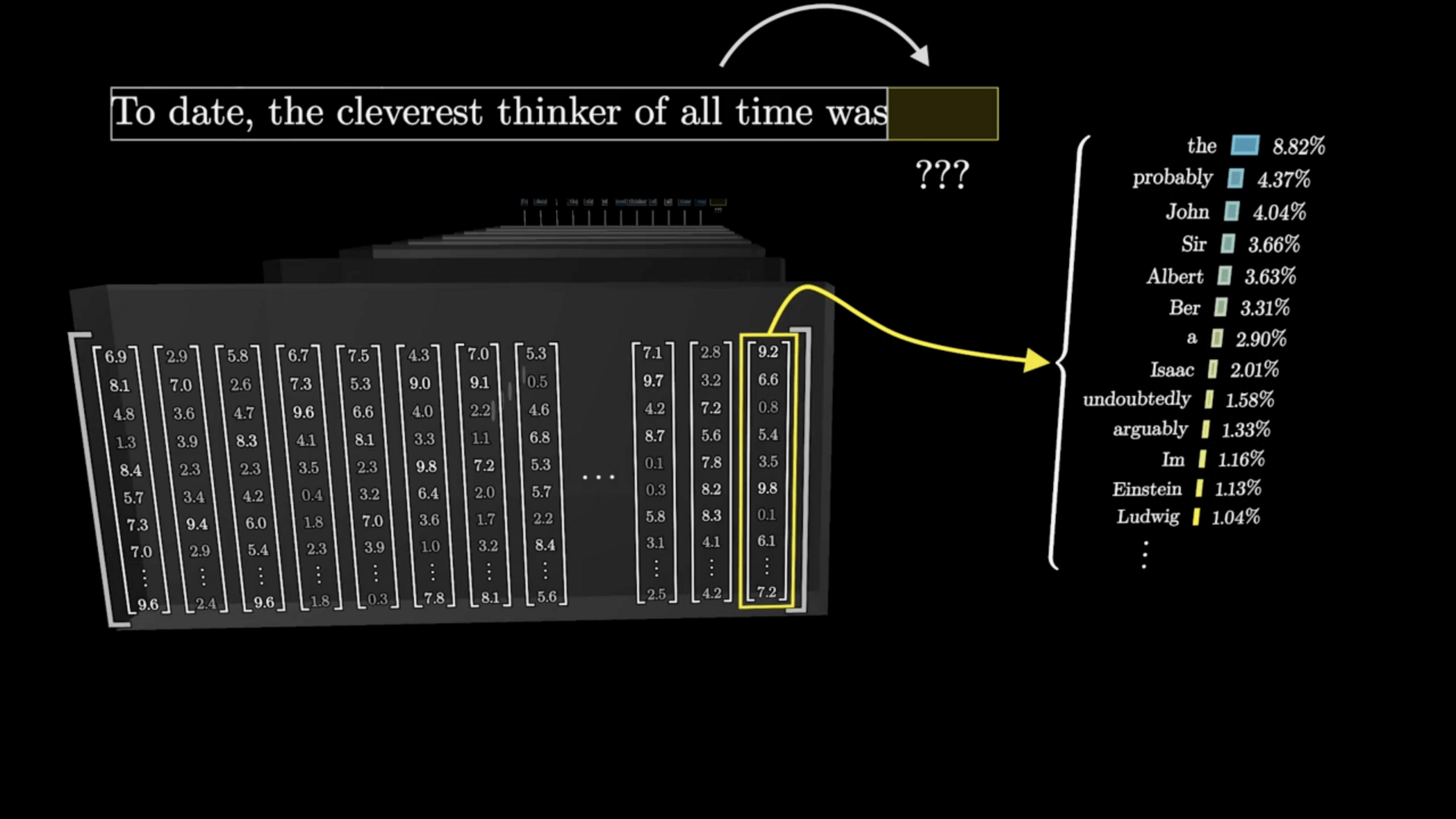

Large Language Models (LLMs) are trained in this self-supervised way

- Scrape the internet for plain text.

- Cook up “labels” (prediction targets) from this text.

- Convert the “unsupervised” problem into a “supervised” setup.

"To date, the cleverest thinker of all time was Isaac."

| feature | label |
| --- | --- |
| To date, the | cleverest |
| To date, the cleverest | thinker |
| \(\vdots\) | \(\vdots\) |
| To date, the cleverest thinker of all time | was |
| To date, the cleverest thinker of all time was | Isaac |

auto-regressive prediction
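As a rough sketch of how such (feature, label) pairs can be cooked up from raw text: the helper name `next_token_pairs` is made up for this example, and splitting on whitespace is a simplifying assumption (real LLMs use learned sub-word tokenizers).

```python
def next_token_pairs(text):
    """Return (prefix, next token) pairs for auto-regressive training."""
    tokens = text.split()  # assumption: whitespace tokenization
    return [(" ".join(tokens[:i]), tokens[i]) for i in range(1, len(tokens))]

sentence = "To date, the cleverest thinker of all time was Isaac."
pairs = next_token_pairs(sentence)

for feature, label in pairs:
    print(f"{feature!r} -> {label!r}")
```

Every prefix of the sentence becomes a training example for free, with no human labeling involved.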

[Figure: input embedding of the sequence, \(n\) tokens, each a \(d\)-dimensional vector]

From word embedding to a softmax distribution over the vocabulary

Minimizing cross-entropy loss drives the weights to assign higher probability to the correct next token
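A small numeric sketch of that claim, under made-up assumptions: the five-word vocabulary and the logits are invented, and "training" is imitated by bumping the correct token's logit by hand rather than by running gradient descent.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - np.max(z))
    return e / e.sum()

def cross_entropy(logits, target_index):
    """Negative log-probability assigned to the correct next token."""
    return -np.log(softmax(logits)[target_index])

# Hypothetical vocabulary and scores for the prefix "To date, the".
vocab = ["date", "the", "cleverest", "thinker", "banana"]
logits = np.array([0.5, 0.1, 2.0, 0.3, -1.0])
target = vocab.index("cleverest")

loss_before = cross_entropy(logits, target)

# Raising the score of the correct token raises its probability,
# which is exactly what lowers the cross-entropy loss.
boosted = logits.copy()
boosted[target] += 1.0
loss_after = cross_entropy(boosted, target)

print(f"loss before: {loss_before:.3f}, loss after: {loss_after:.3f}")
```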


Transformer

"To date, the cleverest [thinker] of all time was Isaac."

[Figure: tokens "To", "date", "the", "cleverest" → input embedding → \(L\) transformer blocks → output embedding → distribution over the vocabulary]

- at "To": push for Prob("date") to be high

- at "date": push for Prob("the") to be high

- at "the": push for Prob("cleverest") to be high

- at "cleverest": push for Prob("thinker") to be high

A sequence of \(n\) tokens, each token in \(\mathbb{R}^{d}\)

each of the \(n\) tokens transformed, block by block, within a shared \(d\)-dimensional word-embedding space.

[Figure: inside a transformer block: attention layer, then MLP (neuron weights)]
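The block-by-block picture can be sketched as follows. This is a deliberately stripped-down assumption of what a block contains: one attention head plus an output projection, followed by a per-token ReLU MLP, with random weights. Masking, layer normalization, residual connections, and positional encoding are all left out, and the parameter names (`W_1`, `W_2`, `d_hidden`) are invented for the example.

```python
import numpy as np

def row_softmax(S):
    S = S - S.max(axis=1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=1, keepdims=True)

def attention_layer(X, p):
    Q, K, V = X @ p["W_q"], X @ p["W_k"], X @ p["W_v"]
    A = row_softmax(Q @ K.T / np.sqrt(Q.shape[1]))
    return (A @ V) @ p["W_o"]                      # project back to d dims

def mlp(X, p):
    return np.maximum(X @ p["W_1"], 0.0) @ p["W_2"]  # ReLU MLP, per token

def transformer_block(X, p):
    return mlp(attention_layer(X, p), p)

def init_block(rng, d, d_k, d_hidden):
    shapes = {"W_q": (d, d_k), "W_k": (d, d_k), "W_v": (d, d_k),
              "W_o": (d_k, d), "W_1": (d, d_hidden), "W_2": (d_hidden, d)}
    return {name: rng.normal(scale=0.1, size=shape)
            for name, shape in shapes.items()}

rng = np.random.default_rng(0)
n, d, d_k, d_hidden, L = 4, 6, 3, 12, 3
X = rng.normal(size=(n, d))                        # input embedding, n x d
blocks = [init_block(rng, d, d_k, d_hidden) for _ in range(L)]

for p in blocks:
    X = transformer_block(X, p)                    # still n x d after each block

print(X.shape)
```

The point of the sketch is the shape bookkeeping: every block maps an \(n \times d\) sequence to another \(n \times d\) sequence, so blocks can be stacked freely.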

attention layer

attention output projection

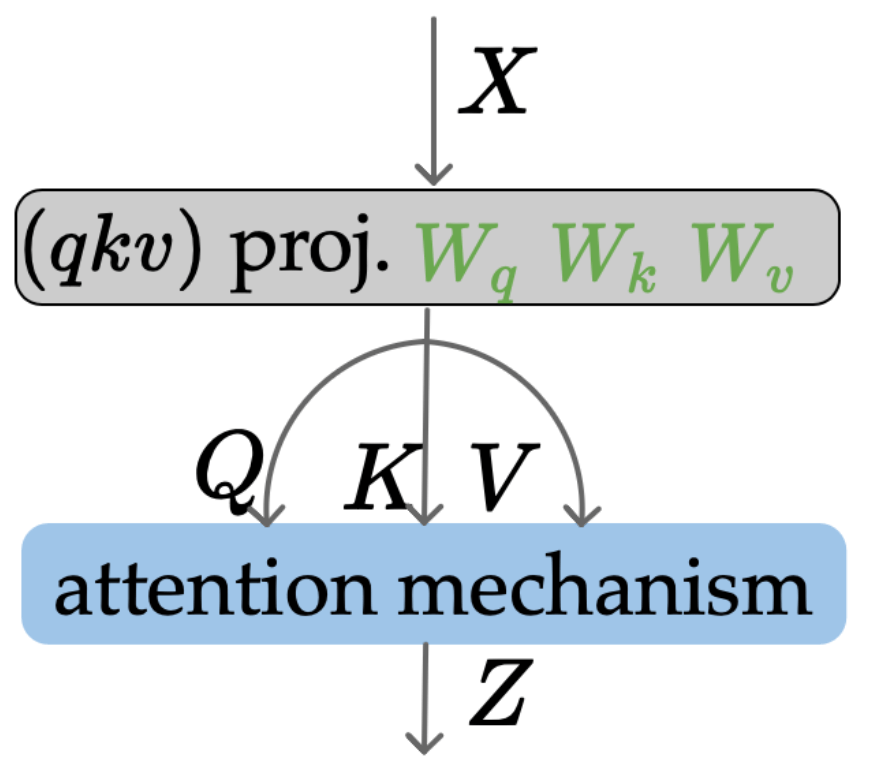

\((qkv)\) projection

attention mechanism

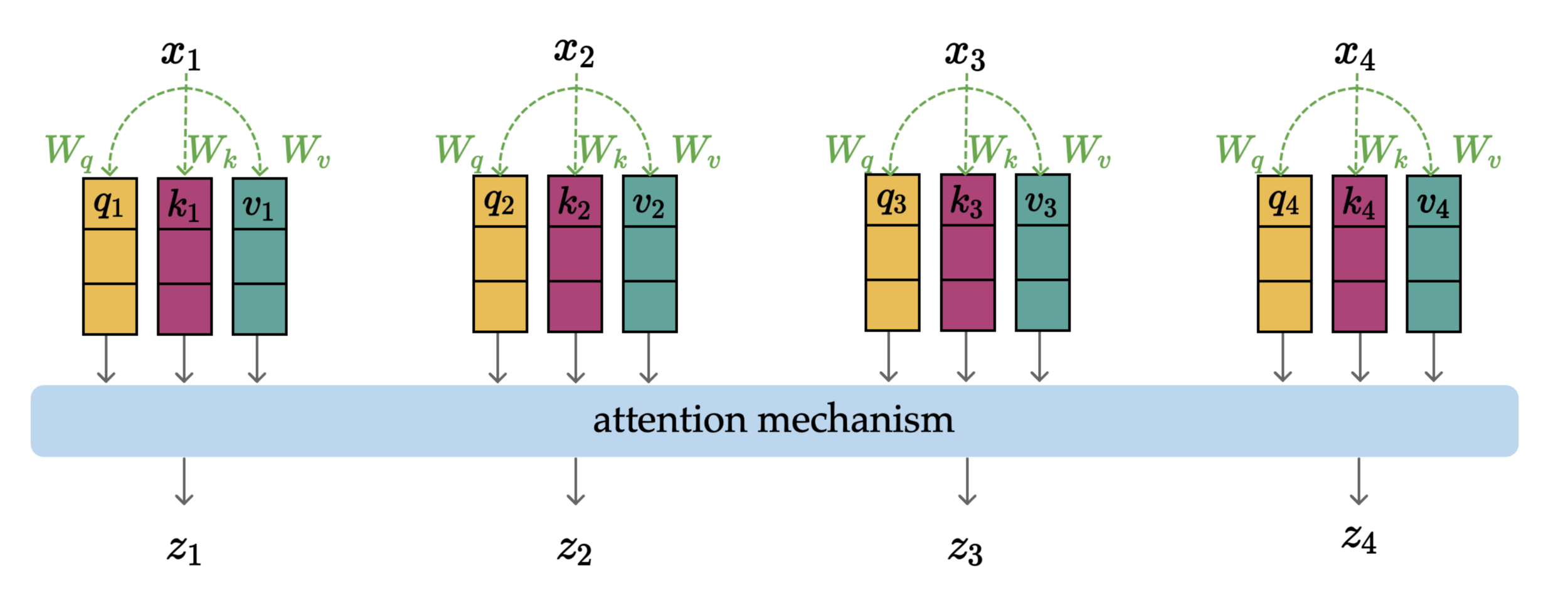

Most important bits in an attention layer:

- (query, key, value) projection

- attention mechanism

Why learn these projections:

- \(W_q\) learns how to ask

- \(W_k\) learns how to listen

- \(W_v\) learns how to speak

With learned projections, we frame \(x\) into:

- a query to ask the question

- a key to be compared

- a value to contribute

1. (query, key, value) projection

- \(W_q, W_k, W_v\), all in \(\mathbb{R}^{d \times d_k}\)

- project word embedding \(x\) from \(d\)-dimensional space to \(d_k\)-dimensional (\(q, k, v\)) spaces (typically \(d_k < d\))

- \(q_i = W_q^Tx_i,\; k_i = W_k^Tx_i,\; v_i = W_v^Tx_i,\; \forall i\) — weight sharing across positions

- parallel and structurally identical processing
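The projection step can be sketched in NumPy as below. The sizes and the random weights are placeholders chosen for illustration; in a real model \(W_q, W_k, W_v\) are learned.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d, d_k = 4, 6, 3                 # illustrative sizes, with d_k < d

X = rng.normal(size=(n, d))         # row i is the word embedding x_i
W_q = rng.normal(size=(d, d_k))     # random stand-ins for learned weights
W_k = rng.normal(size=(d, d_k))
W_v = rng.normal(size=(d, d_k))

# Token by token: q_i = W_q^T x_i, with the same W_q at every position.
q_list = [W_q.T @ x_i for x_i in X]

# All tokens at once: stacking x_i as rows gives Q = X W_q.
Q, K, V = X @ W_q, X @ W_k, X @ W_v

print(Q.shape, K.shape, V.shape)    # each is (n, d_k)
```

Because the same three matrices are applied at every position, the per-token loop and the single matrix product give identical results, which is what makes the processing parallel.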


2. Attention mechanism

- Attention mechanism turns the projected \((q,k,v)\) into \(z\)

- Each \(z\) is context-aware: a mixture of everyone's values, weighted by relevance
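The slide equations are not preserved in this text, so the following is a reconstruction of the standard scaled dot-product form rather than a copy of the lecture's own notation. The symbol \(a_{ij}\) (the weight token \(i\) puts on token \(j\)) is an assumed name, and the \(1/\sqrt{d_k}\) scaling is the usual choice, presumably the step the slides annotate as "for numerical stability".

```latex
\[
a_{ij} = \frac{\exp\!\left(q_i^T k_j / \sqrt{d_k}\right)}
              {\sum_{m=1}^{n} \exp\!\left(q_i^T k_m / \sqrt{d_k}\right)},
\qquad
z_i = \sum_{j=1}^{n} a_{ij}\, v_j .
\]
```

For each \(i\), the weights \(a_{i1}, \dots, a_{in}\) are non-negative and sum to 1, so \(z_i\) is a weighted average of the values.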

Outline

- Transformers high-level intuition and architecture

- Attention mechanism

- Multi-head attention

- (Applications)

attention mechanism

[Figure: for each of the tokens "To", "date", "the", "cleverest", the query is compared with every key, the scores pass through a softmax (one step is annotated "for numerical stability"), and the softmax weights mix the values into \(z\)]

parallel and structurally identical processing

can calculate \(z_4\) without \(z_3\)

Attention head

maps sequence of \(x\) to sequence of \(z\):

1. (query, key, value) projection

2. attention mechanism

parallel and structurally identical processing
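To make "can calculate \(z_4\) without \(z_3\)" concrete, here is a sketch that computes the output for a single position. The function name `attend`, the random \(Q, K, V\), and the \(1/\sqrt{d_k}\) scaling (the standard choice) are assumptions of this example.

```python
import numpy as np

def softmax(scores):
    e = np.exp(scores - np.max(scores))
    return e / e.sum()

def attend(i, Q, K, V):
    """Output z for position i only; no other z is needed."""
    d_k = Q.shape[1]
    scores = K @ Q[i] / np.sqrt(d_k)   # compare q_i with every key
    weights = softmax(scores)          # relevance of each token to token i
    return weights @ V                 # mixture of everyone's values

rng = np.random.default_rng(0)
n, d_k = 4, 3
Q, K, V = (rng.normal(size=(n, d_k)) for _ in range(3))

z_4 = attend(3, Q, K, V)               # 4th token (index 3), computed on its own
print(z_4)
```

Each call reads all keys and values but no other output, so the \(n\) outputs can be computed in any order or all at once.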

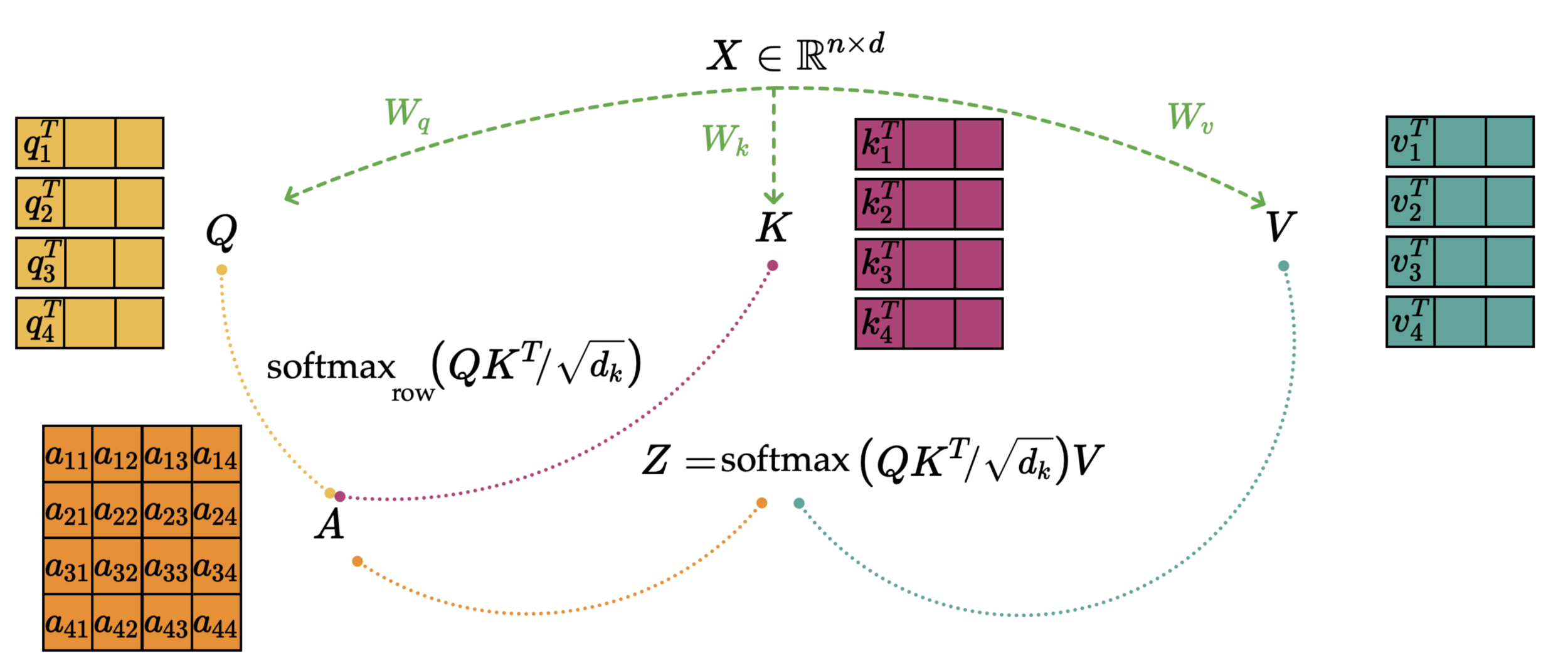

Attention head - compact matrix form

Stack each token as a row in the input: a sequence of \(n\) tokens, each token in \(\mathbb{R}^{d}\), becomes \(X \in \mathbb{R}^{n \times d}\)

1. (query, key, value) projection

2a. dot-product similarity: compare \(q_i\) and \(k_j\); assemble the \(n \times n\) similarities so rows correspond to query

2b. attention matrix \(A\): row-wise softmax, so each row sums up to 1

2c. attention-weighted values \(Z\) (the three steps are often written even more compactly as a single expression)

attention scores depend on the (query, key) only
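A minimal NumPy sketch of the matrix form, under stated assumptions: the weights are random placeholders, the names `row_softmax` and `attention_head` are made up here, and the scores are scaled by \(1/\sqrt{d_k}\) as in the standard formulation, \(Z = \text{softmax}_{\text{row}}(QK^T/\sqrt{d_k})\,V\).

```python
import numpy as np

def row_softmax(S):
    S = S - S.max(axis=1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=1, keepdims=True)

def attention_head(X, W_q, W_k, W_v):
    Q, K, V = X @ W_q, X @ W_k, X @ W_v          # 1. projection
    d_k = Q.shape[1]
    S = Q @ K.T / np.sqrt(d_k)                   # 2a. n x n similarities
    A = row_softmax(S)                           # 2b. each row sums to 1
    Z = A @ V                                    # 2c. attention-weighted values
    return A, Z

rng = np.random.default_rng(0)
n, d, d_k = 5, 6, 3
X = rng.normal(size=(n, d))
W_q, W_k, W_v = (rng.normal(size=(d, d_k)) for _ in range(3))

A, Z = attention_head(X, W_q, W_k, W_v)

# Changing only the value projection leaves the attention matrix untouched.
A2, Z2 = attention_head(X, W_q, W_k, 2.0 * W_v)
print(np.allclose(A, A2), A.sum(axis=1))
```

The second call illustrates the last point above: \(A\) is determined by queries and keys alone, while the values only affect what gets mixed.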

Outline

- Transformers high-level intuition and architecture

- Attention mechanism

- Multi-head attention

- (Applications)


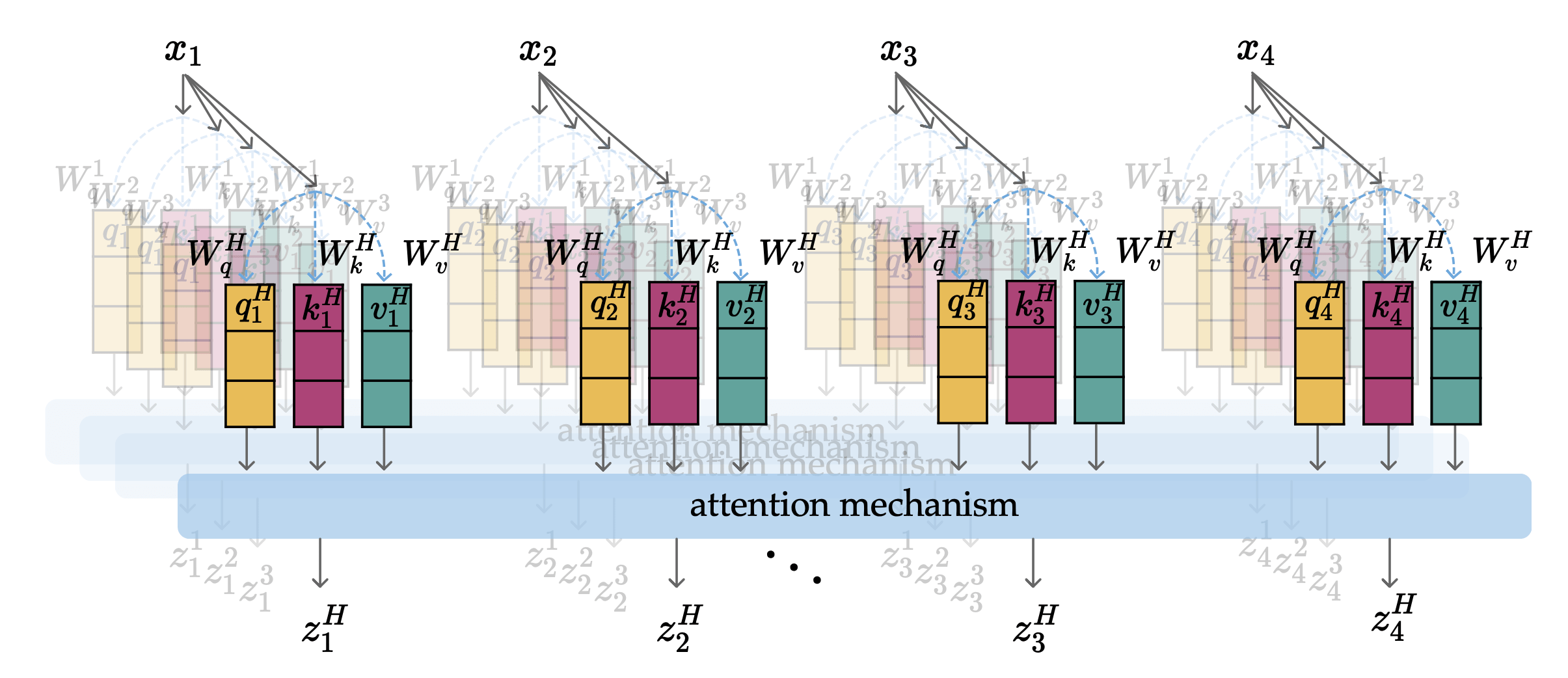

Multi-head Attention

In particular, each head:

- learns its own set of \(W_q, W_k, W_v\)

- creates its own projected sequence of \((q,k,v)\)

- computes its own sequence of \(z\)

Parallel, and structurally identical processing across all heads and tokens.

[Figure: head outputs indexed along heads and along sequence; for each token in the sequence, the per-head outputs are concatenated as \(z_1\), \(z_2\), \(z_3\), \(z_4\)]

each concatenated \(z_i \in \mathbb{R}^{Hd_k}\)

[Figure: multi-head attention: the concatenated \(z_i\) pass through the attention output projection, giving outputs all in \(\mathbb{R}^{d}\)]

Shape Example:

\(W\)s are the learned weights

| Symbol | Meaning | Shape | Example |
| --- | --- | --- | --- |
| \(n\) | num tokens | | 5 |
| \(d\) | word-embedding dim | | 6 |
| \(d_k\) | \((qkv)\)-embedding dim | | 3 |
| \(X\) | input | \(n \times d\) | \(5 \times 6\) |
| \(W_q^h\) | query proj | \(d \times d_k\) | \(6 \times 3\) |
| \(W_k^h\) | key proj | \(d \times d_k\) | \(6 \times 3\) |
| \(W_v^h\) | value proj | \(d \times d_k\) | \(6 \times 3\) |
| \(Q^h\) | query | \(n \times d_k\) | \(5 \times 3\) |
| \(K^h\) | key | \(n \times d_k\) | \(5 \times 3\) |
| \(V^h\) | value | \(n \times d_k\) | \(5 \times 3\) |
| \(A^h\) | attn matrix | \(n \times n\) | \(5 \times 5\) |
| \(Z^h\) | attn head out | \(n \times d_k\) | \(5 \times 3\) |
| \(H\) | num heads | | 2 |
| \(\text{concat}(Z^1 \dots Z^H)\) | multi-head out | \(n \times Hd_k\) | \(5 \times 6\) |
| \(W^o\) | output proj | \(Hd_k \times d\) | \(6 \times 6\) |
| \(Z_{\text{out}}\) | attn layer out | \(n \times d\) | \(5 \times 6\) |

Rows \(W_q^h\) through \(Z^h\) are for a single attention head.
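The shapes in this example can be checked with a short NumPy sketch. The weights are random placeholders for learned parameters, and the \(1/\sqrt{d_k}\) scaling inside the softmax is the standard choice, assumed here.

```python
import numpy as np

def row_softmax(S):
    S = S - S.max(axis=1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=1, keepdims=True)

n, d, d_k, H = 5, 6, 3, 2
rng = np.random.default_rng(0)
X = rng.normal(size=(n, d))                        # 5 x 6

head_outputs = []
for h in range(H):
    # Each head has its own W_q, W_k, W_v, each 6 x 3.
    W_q, W_k, W_v = (rng.normal(size=(d, d_k)) for _ in range(3))
    Q, K, V = X @ W_q, X @ W_k, X @ W_v            # each 5 x 3
    A = row_softmax(Q @ K.T / np.sqrt(d_k))        # 5 x 5
    head_outputs.append(A @ V)                     # Z^h, 5 x 3

Z_concat = np.concatenate(head_outputs, axis=1)    # 5 x 6  (n x H*d_k)
W_o = rng.normal(size=(H * d_k, d))                # 6 x 6
Z_out = Z_concat @ W_o                             # 5 x 6  (n x d)

print(Z_concat.shape, W_o.shape, Z_out.shape)
```

The output projection brings the concatenated heads back to \(n \times d\), the same shape as the input, so the layer can feed the next block.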

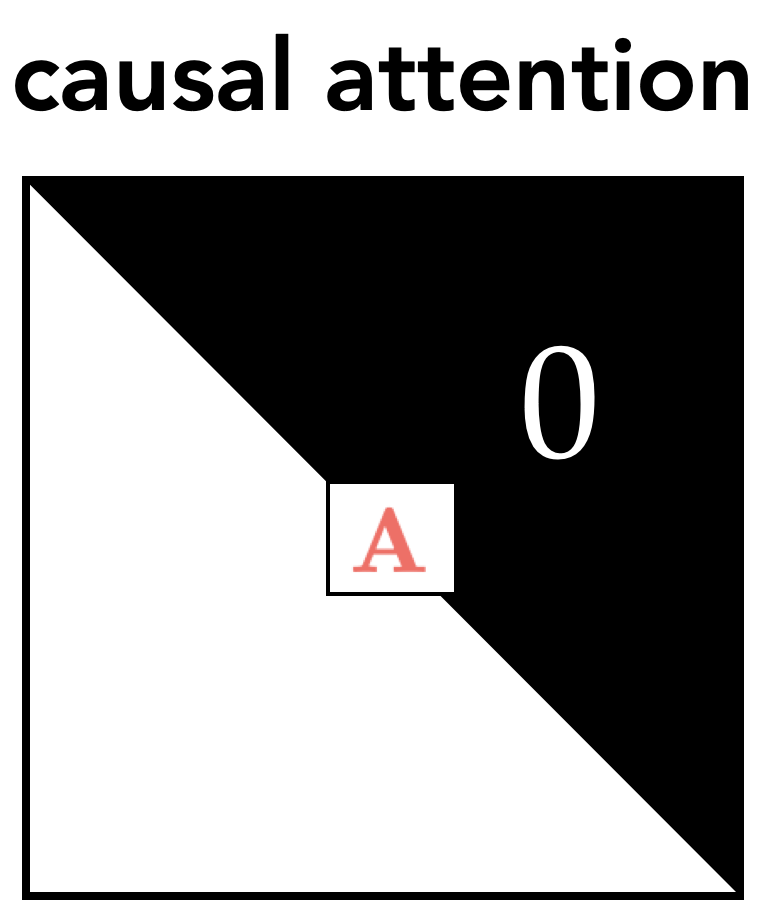

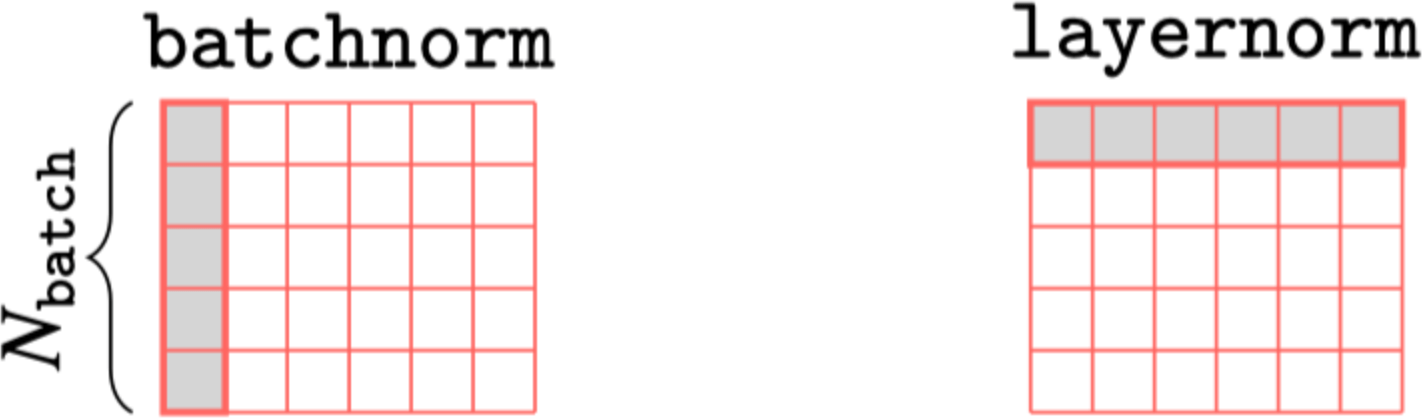

Some practical techniques commonly needed when training auto-regressive transformers:

masking

Layer normalization

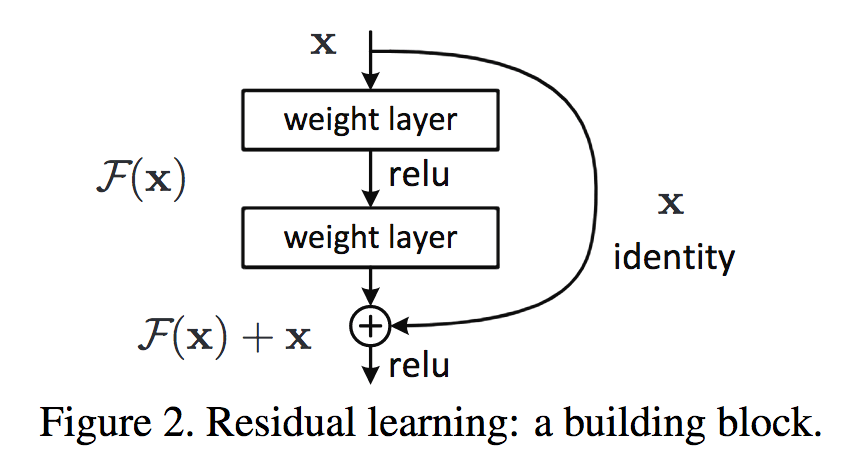

Residual connection

Positional encoding
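Of these techniques, masking is the easiest to show in isolation. The sketch below assumes the usual causal mask for auto-regressive training, where a token may attend to itself and earlier tokens but not to later ones; the function name and the random scores are made up for the example, and the other three techniques are not covered.

```python
import numpy as np

def causal_attention_weights(S):
    """Row-wise softmax over scores S, with future positions masked out."""
    n = S.shape[0]
    future = np.triu(np.ones((n, n), dtype=bool), k=1)  # True above the diagonal
    S = np.where(future, -np.inf, S)                    # exp(-inf) = 0 weight
    S = S - S.max(axis=1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
S = rng.normal(size=(4, 4))          # stand-in for query-key similarities
A = causal_attention_weights(S)

print(np.round(A, 2))                # lower-triangular attention matrix
```

With this mask, the first token can only attend to itself, and the prediction at each position cannot peek at the token it is supposed to predict.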

Outline

- Transformers high-level intuition and architecture

- Attention mechanism

- Multi-head attention

- (Applications)

image credit: Nicholas Pfaff

Generative Boba by Boyuan Chen in Bldg 45

😉

😉

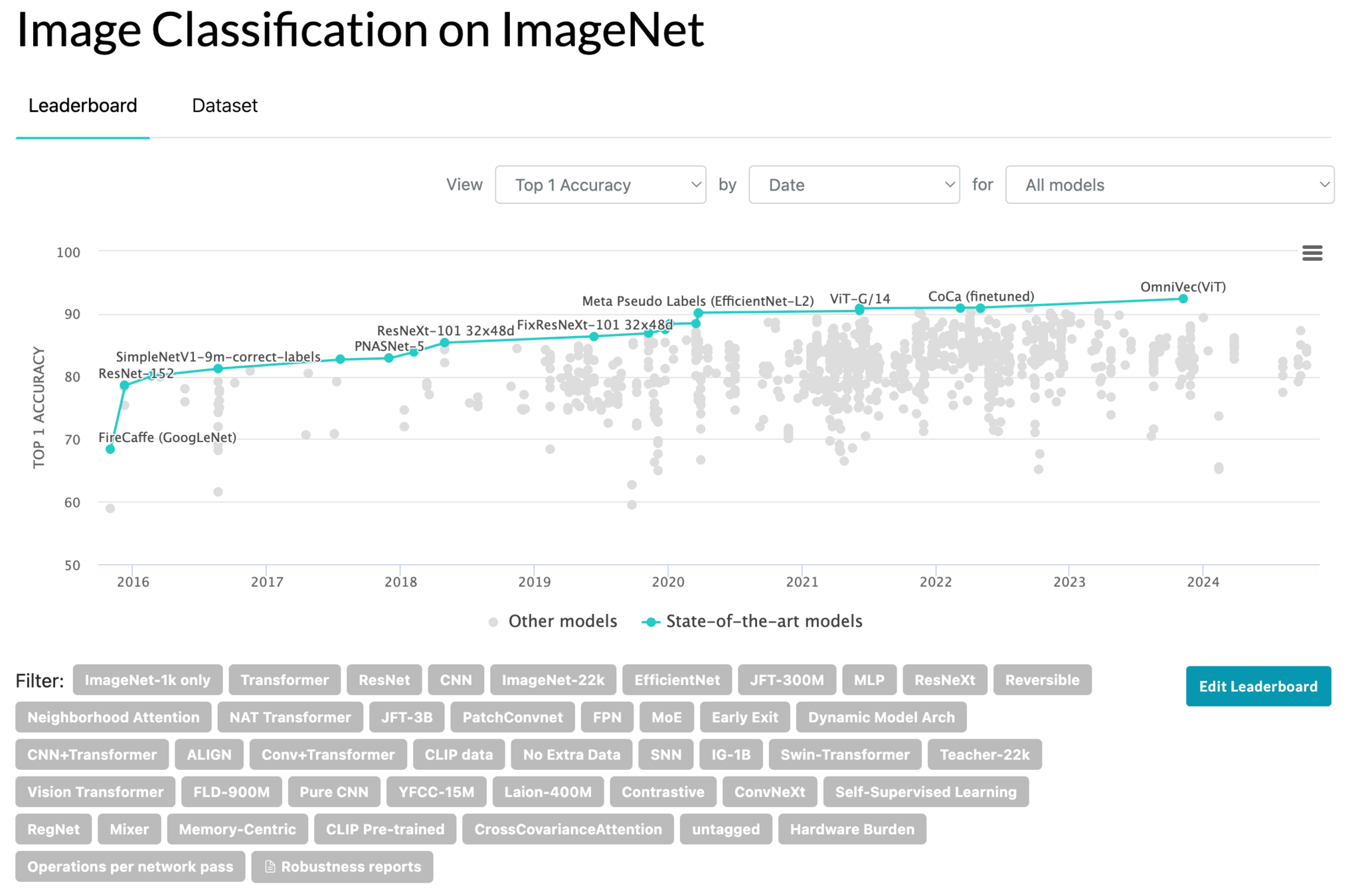

Transformers in Action: Performance across domains

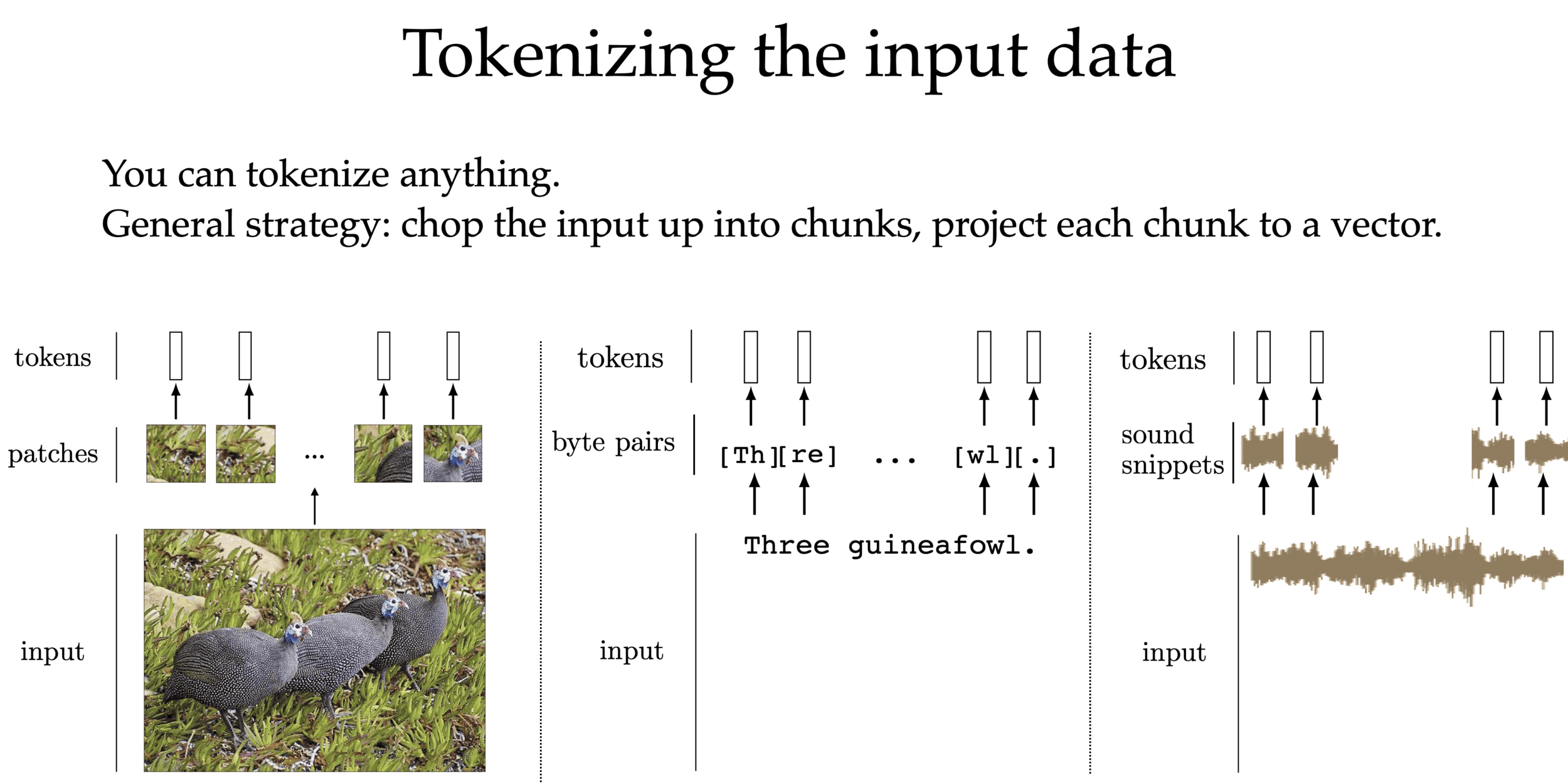

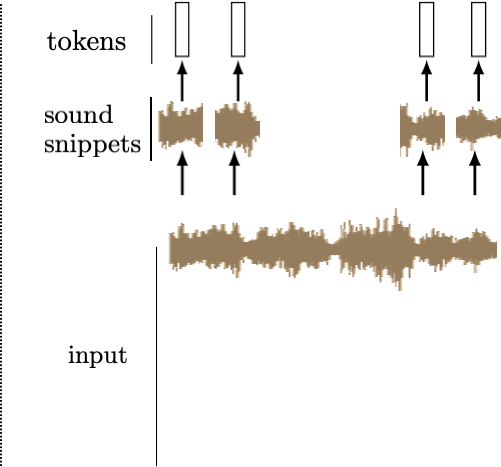

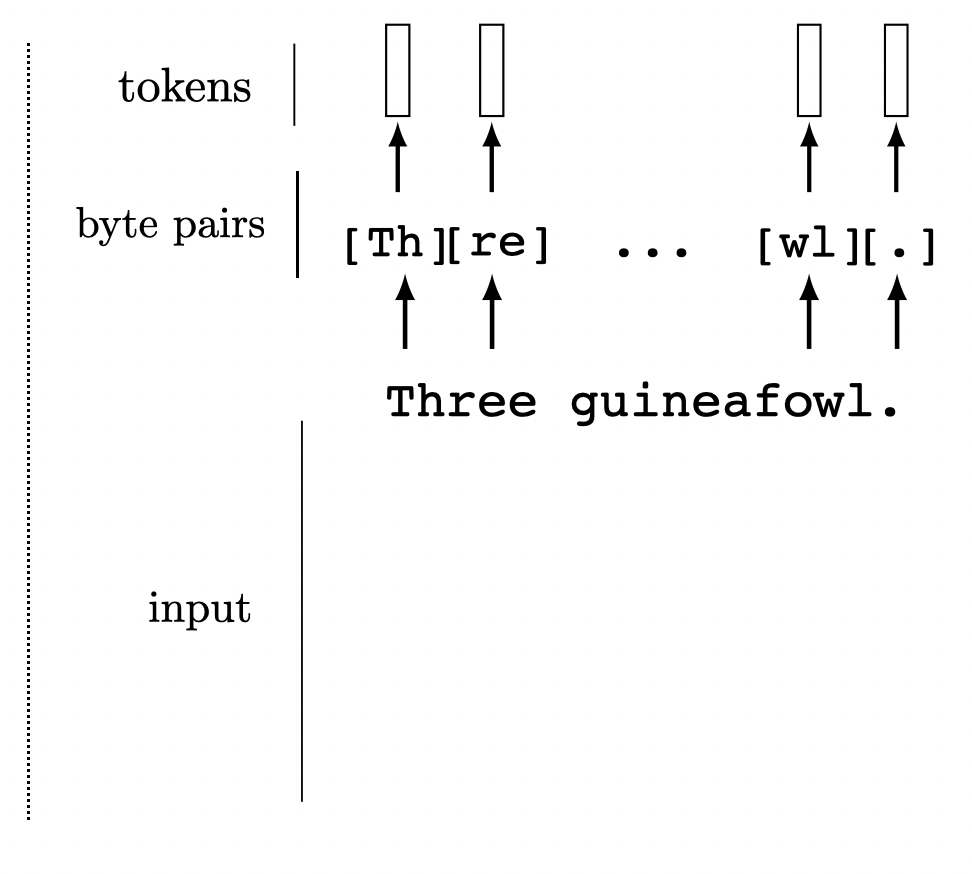

We can tokenize anything.

General strategy: chop the input up into chunks, project each chunk to an embedding
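For images, the "chop and project" strategy might look like the sketch below. The helper name `patchify`, the 8 x 8 image, the 4 x 4 patch size, and the random projection `W_embed` are all assumptions for illustration; a real model learns the projection.

```python
import numpy as np

def patchify(image, patch):
    """Chop an (height, width, channels) image into flattened square patches."""
    height, width, channels = image.shape
    assert height % patch == 0 and width % patch == 0
    grid = image.reshape(height // patch, patch, width // patch, patch, channels)
    grid = grid.transpose(0, 2, 1, 3, 4)           # (rows, cols, patch, patch, C)
    return grid.reshape(-1, patch * patch * channels)

rng = np.random.default_rng(0)
image = rng.random((8, 8, 3))                      # toy RGB image
chunks = patchify(image, patch=4)                  # 4 chunks, 48 numbers each

d = 6
W_embed = rng.normal(size=(chunks.shape[1], d))    # stand-in for a learned projection
tokens = chunks @ W_embed                          # 4 tokens, each in R^d

print(chunks.shape, tokens.shape)
```

After this step the image is just another sequence of \(n\) tokens in \(\mathbb{R}^{d}\), so the same transformer blocks apply.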

[images credit: visionbook.mit.edu]

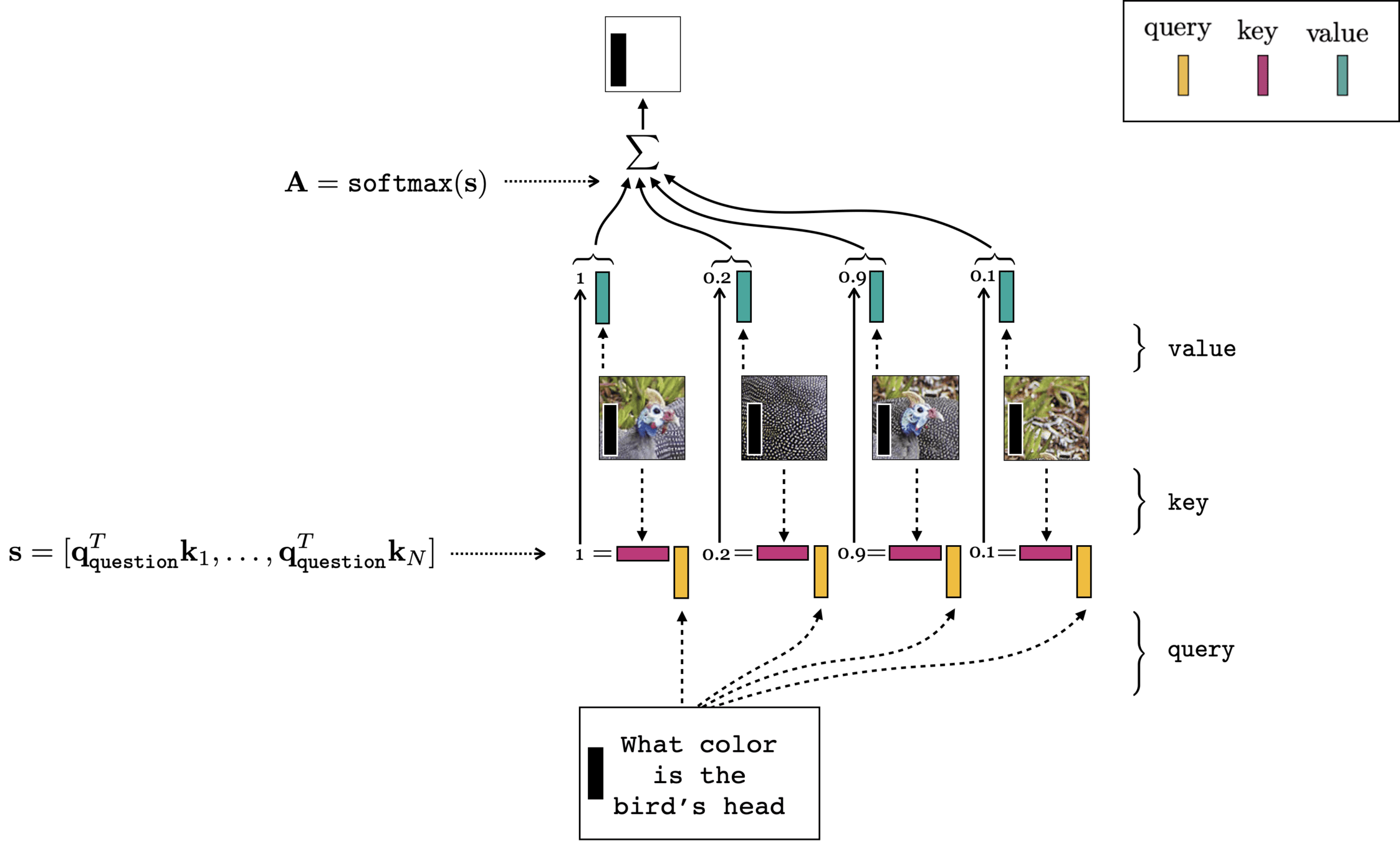

Multi-modality (image q&a)

- (query, key, value) come from different input modality

- cross-attention
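A sketch of cross-attention under illustrative assumptions: here the queries come from text tokens and the keys and values from image tokens (which modality plays which role is a choice of this example), all sizes and weights are made up, and the scores use the standard \(1/\sqrt{d_k}\) scaling.

```python
import numpy as np

def row_softmax(S):
    S = S - S.max(axis=1, keepdims=True)
    E = np.exp(S)
    return E / E.sum(axis=1, keepdims=True)

def cross_attention(X_text, X_image, W_q, W_k, W_v):
    Q = X_text @ W_q                   # queries from one modality
    K = X_image @ W_k                  # keys from the other
    V = X_image @ W_v                  # values from the other
    A = row_softmax(Q @ K.T / np.sqrt(Q.shape[1]))
    return A, A @ V

rng = np.random.default_rng(0)
n_text, n_img, d_text, d_img, d_k = 3, 4, 6, 8, 5
X_text = rng.normal(size=(n_text, d_text))
X_image = rng.normal(size=(n_img, d_img))
W_q = rng.normal(size=(d_text, d_k))
W_k = rng.normal(size=(d_img, d_k))
W_v = rng.normal(size=(d_img, d_k))

A, Z = cross_attention(X_text, X_image, W_q, W_k, W_v)
print(A.shape, Z.shape)                # (3, 4) and (3, 5)
```

The attention matrix is no longer square: each text token gets one row of weights over the image tokens.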

[images credit: visionbook.mit.edu]

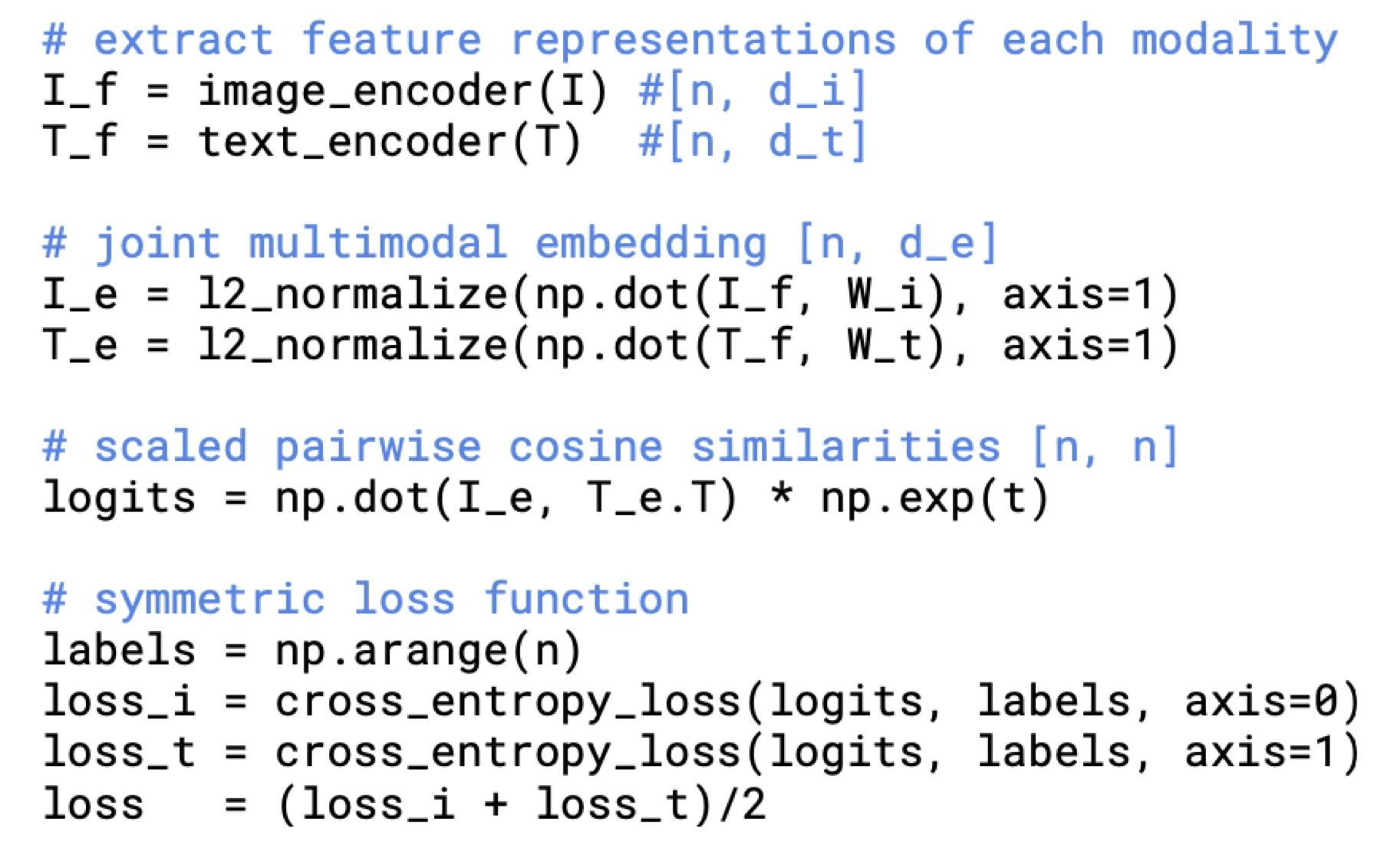

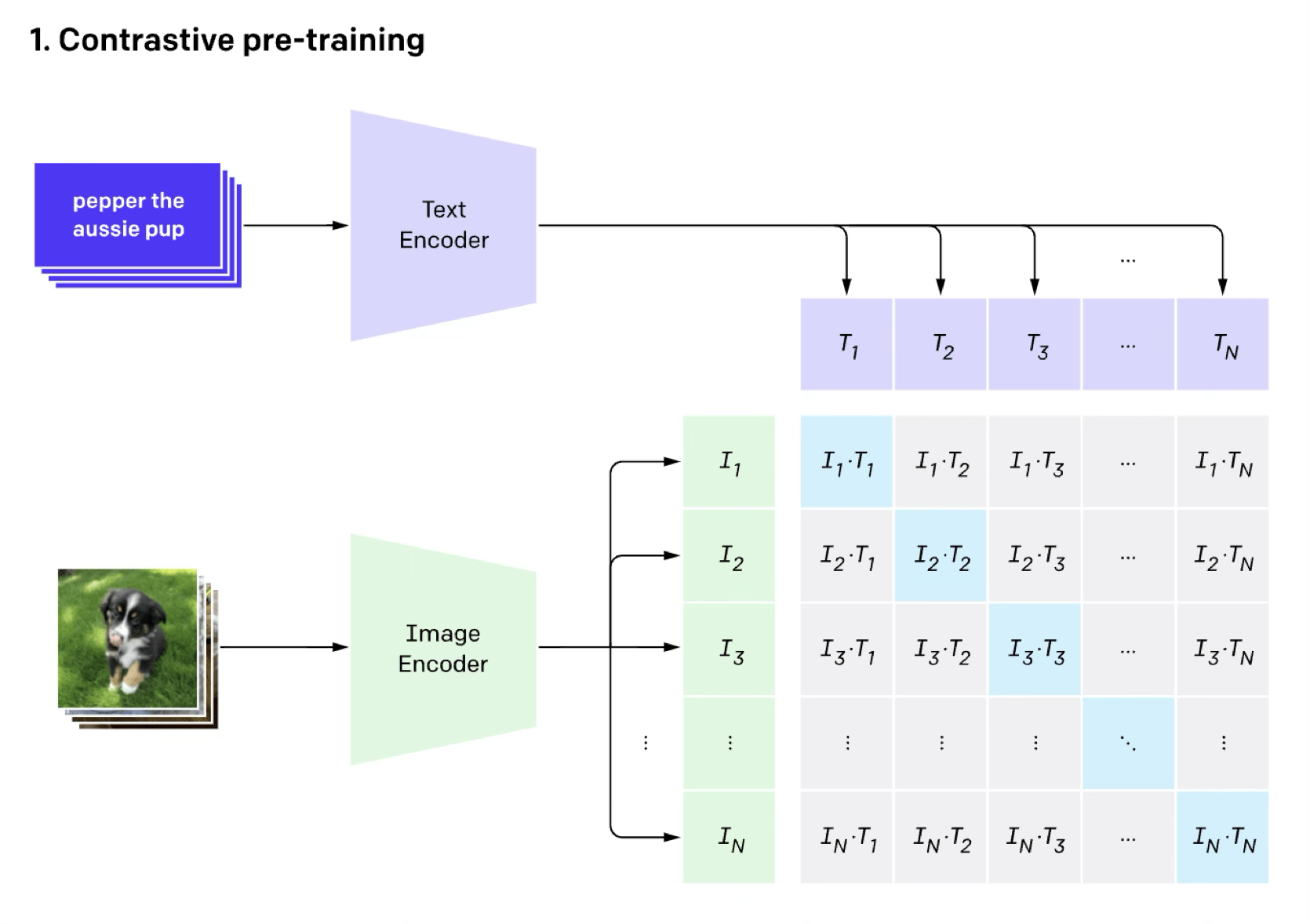

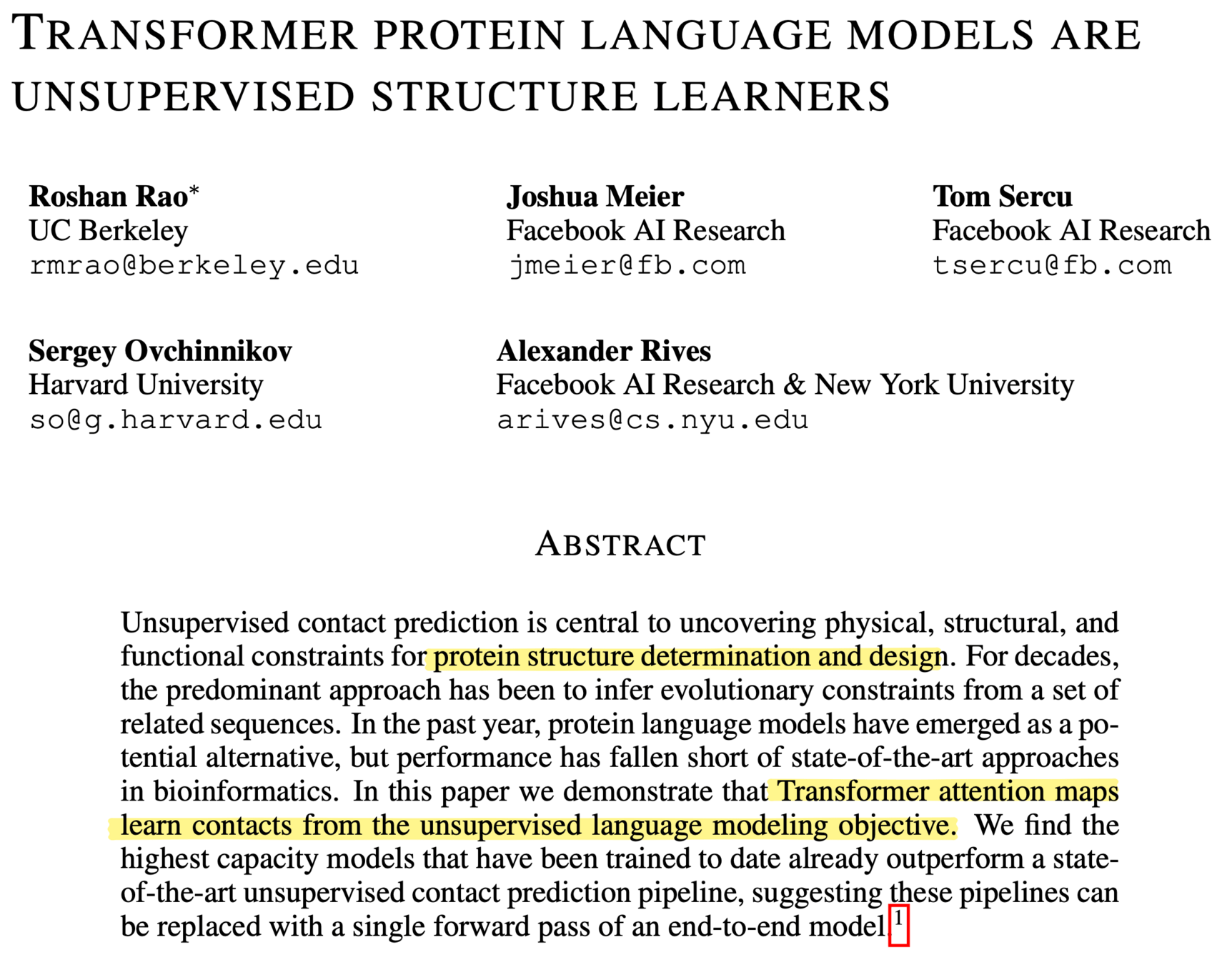

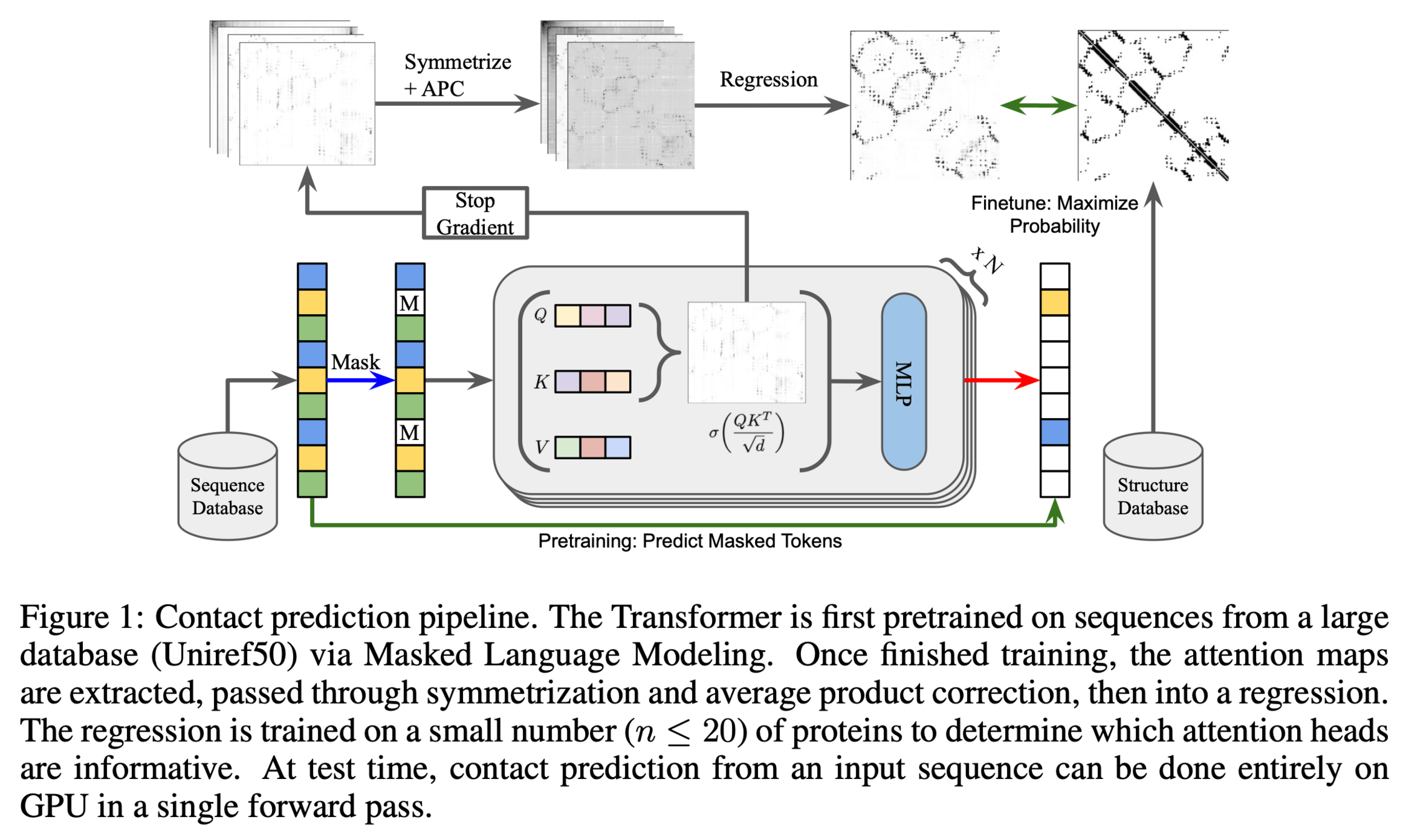

image classification (done in the contrastive way)

[Radford et al., Learning Transferable Visual Models From Natural Language Supervision, ICML, 2021]

[“DINO”, Caron et al., 2021]

Success mode:


[Show, Attend and Tell: Neural Image Caption Generation with Visual Attention. Xu et al. ICML (2015)]

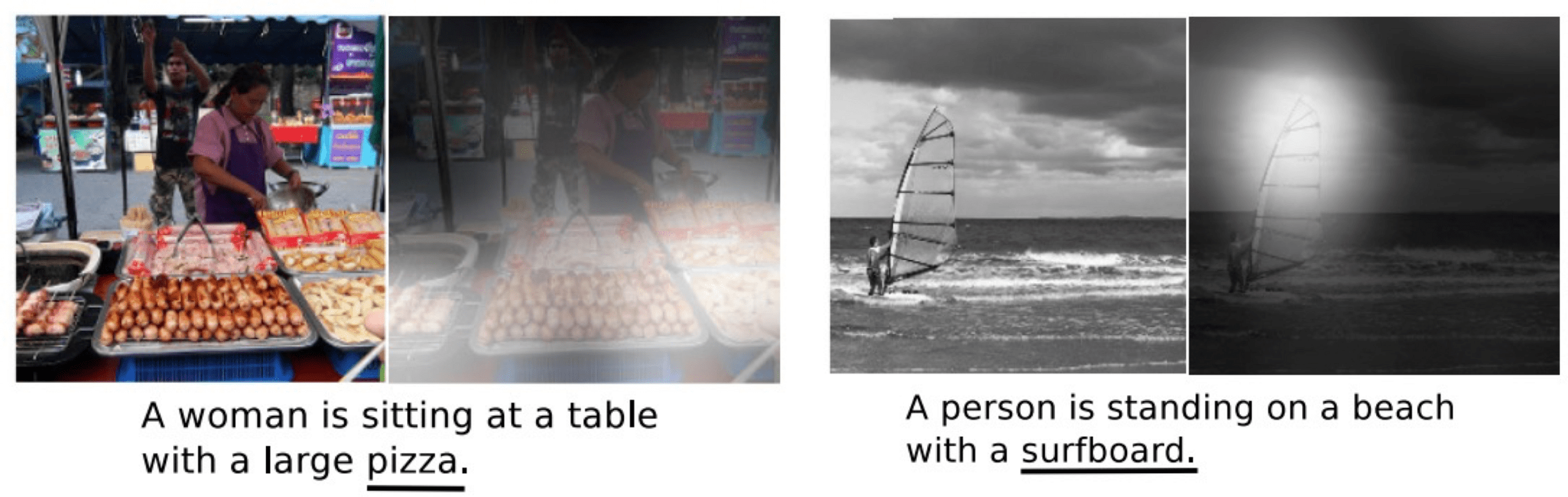

Failure mode:

[Show, Attend and Tell: Neural Image Caption Generation with Visual Attention. Xu et al. ICML (2015)]

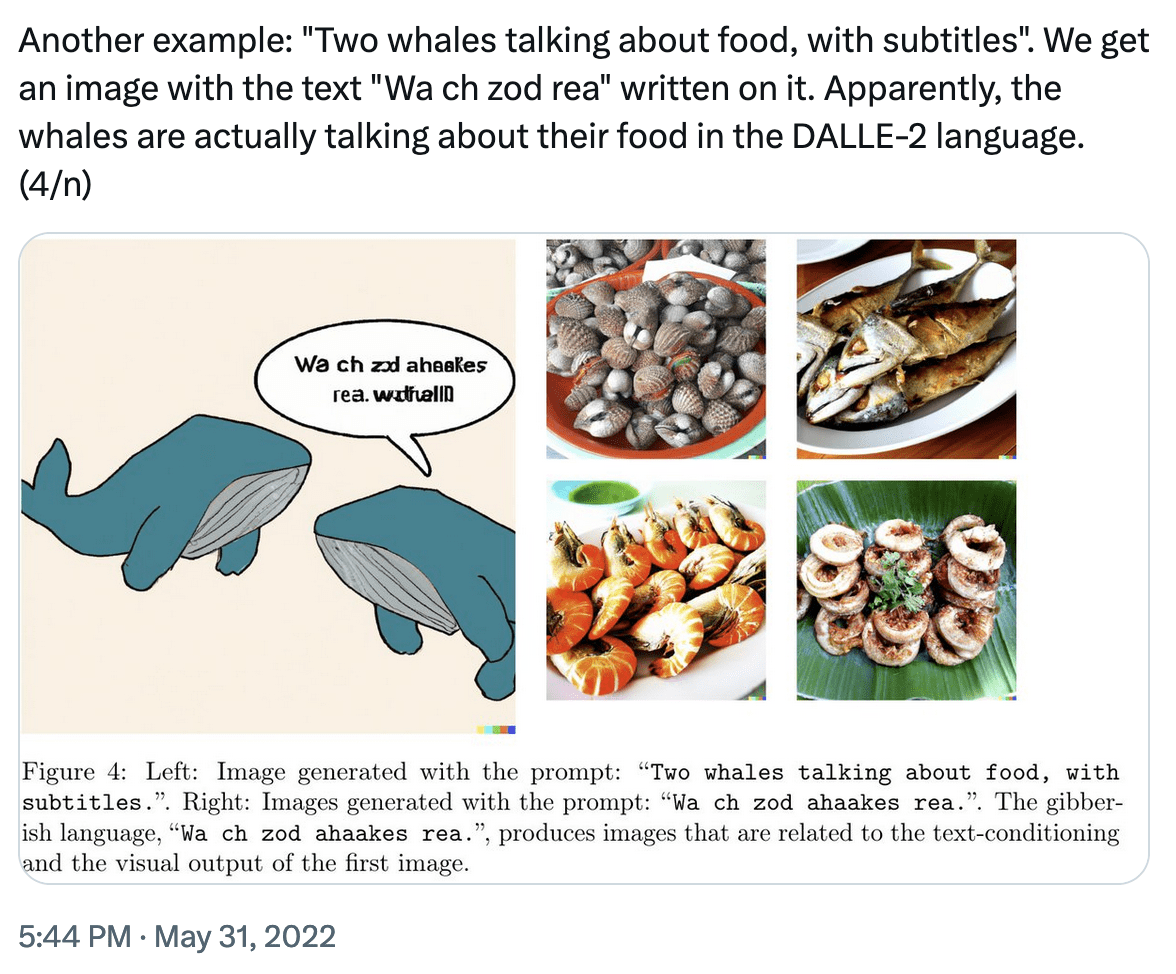

Success or Failure? mode:

Summary

Transformers combine ideas from earlier architectures (convolution, ReLU, residual connections) with two innovations: embedding and attention layers.

Transformers start with generic hard-coded embeddings and, block-by-block, create increasingly context-aware embeddings.

Attention enables massive parallelism: each head, each \(q,k,v\) sequence, each attention score, and each output are all computed in parallel.

(Because the architecture is input-agnostic — "attention is all you need" — transformers have become one model that rules across language, vision, and multi-modal tasks.)

6.390 IntroML (Spring26) - Lecture 9 Transformers

By Shen Shen
