Understanding Domain Shift

When the World Changes

Face ID: A Success Story

Apple's Face ID. Trained on over a billion images.

Works in the dark.

Works with glasses.

Works with hats.

Ship it!

Then 2020 Happened

COVID-19 → everyone wears masks

Your phone keeps asking for your passcode.

Again. And again. And again.

Millions of frustrated users worldwide.

Face ID Meets Masks

Training

Deployment

Face ID didn't get worse. The input to the model changed.

Apple had to ship iOS 15.4 with "Face ID with a Mask" to fix this.

This is Domain Shift

Also called: distribution shift, dataset shift

Wait... Is This Just Overfitting?

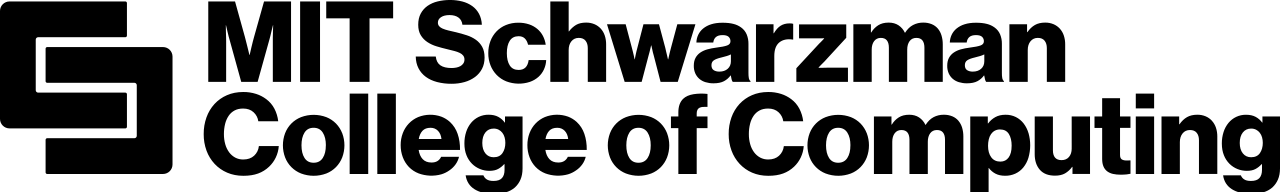

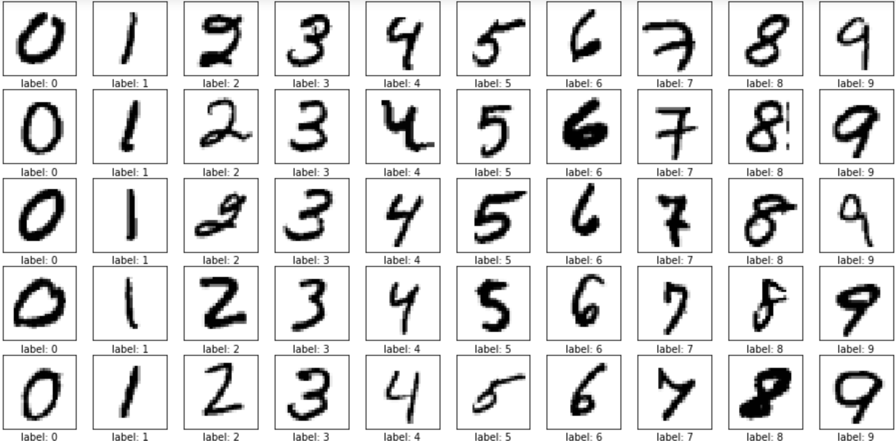

Let's look at a trained neural network on MNIST digits

Training samples

Accuracy: 97%

Test samples

Accuracy: 81%

Looks like overfitting... or is it?

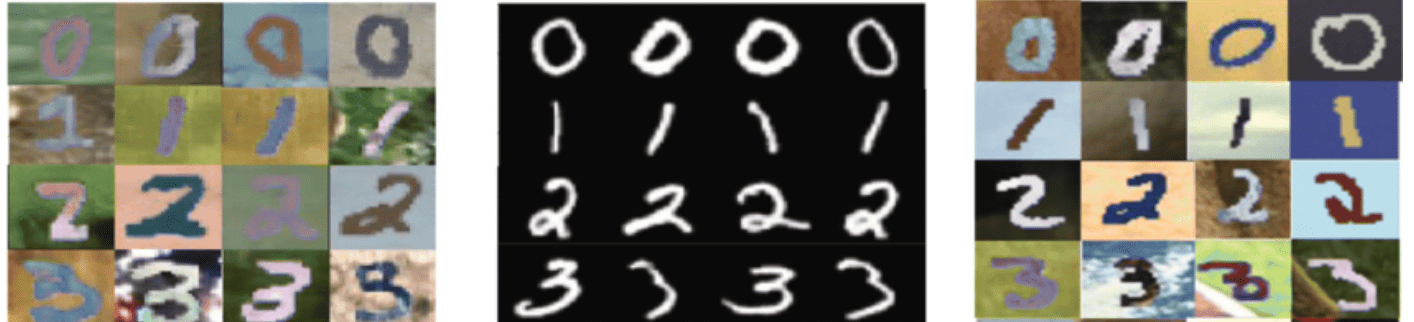

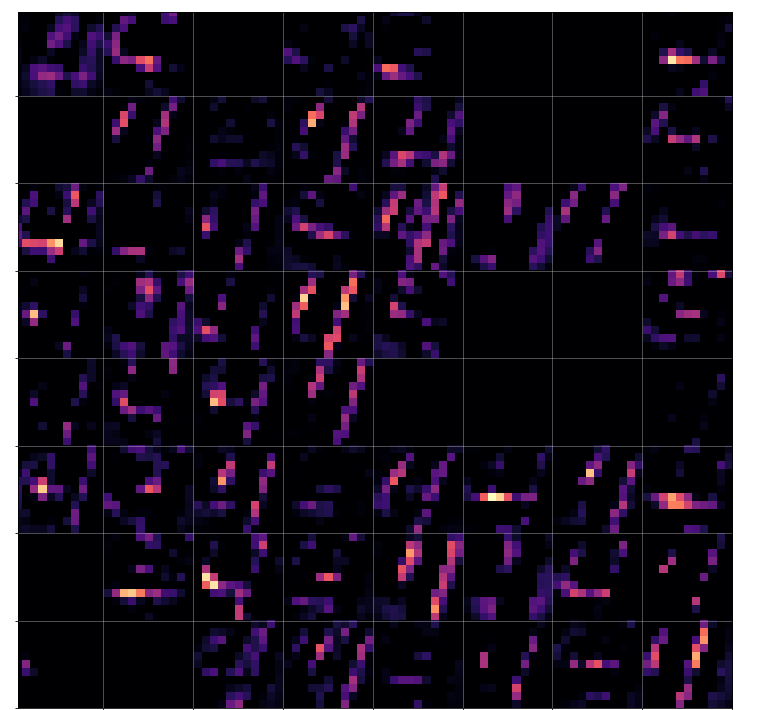

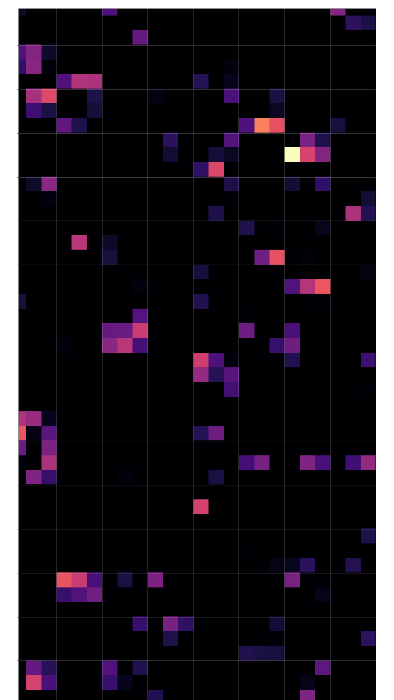

What the MNIST Network Learned

Activation maps at each layer — what each filter responds to for a digit "4":

Layer 1 (32 filters): strokes, edges — digit still recognizable

Layer 2 (64 filters): stroke fragments, spatial patterns

Layer 3 (128 filters): abstract, sparse codes

The network builds hierarchical features — not memorizing individual digits.

Training ≠ Deployment

Training: upright, centered

Test: rotated and shifted

The distribution changed, not the model complexity

This is domain shift, not overfitting
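
A minimal sketch of the same effect on toy data, not the MNIST network from the slides. It assumes two made-up 2-D Gaussian classes and a hypothetical nearest-centroid "model"; the 60-degree rotation is an arbitrary choice. The point is that held-out data from the training distribution scores about as well as the training set, while rotated inputs score lower even though the model never changed.

```python
import math
import random

def sample(n, rng):
    """Two classes of 2-D points: class 0 around (-2, 0), class 1 around (2, 0)."""
    data = []
    for _ in range(n):
        y = rng.randrange(2)
        cx = 2.0 if y == 1 else -2.0
        data.append(((rng.gauss(cx, 1.0), rng.gauss(0.0, 1.0)), y))
    return data

def rotate(data, degrees):
    """Rotate every input around the origin; labels are untouched."""
    t = math.radians(degrees)
    c, s = math.cos(t), math.sin(t)
    return [((c * x0 - s * x1, s * x0 + c * x1), y) for (x0, x1), y in data]

def fit_centroids(data):
    """Nearest-centroid 'model': one mean point per class."""
    sums = {0: [0.0, 0.0, 0], 1: [0.0, 0.0, 0]}
    for (x0, x1), y in data:
        sums[y][0] += x0
        sums[y][1] += x1
        sums[y][2] += 1
    return {y: (sx / n, sy / n) for y, (sx, sy, n) in sums.items()}

def accuracy(centroids, data):
    """Fraction of points whose closest class centroid matches the label."""
    correct = 0
    for (x0, x1), y in data:
        pred = min(
            centroids,
            key=lambda k: (x0 - centroids[k][0]) ** 2 + (x1 - centroids[k][1]) ** 2,
        )
        correct += pred == y
    return correct / len(data)

rng = random.Random(0)
train = sample(2000, rng)
test_same = sample(2000, rng)                    # same distribution as training
test_rotated = rotate(sample(2000, rng), 60)     # shifted inputs, same labels

model = fit_centroids(train)
acc_train = accuracy(model, train)
acc_same = accuracy(model, test_same)
acc_rotated = accuracy(model, test_rotated)
print(f"train {acc_train:.2f} | held-out {acc_same:.2f} | rotated {acc_rotated:.2f}")
```

If this were overfitting, the held-out score would already fall well below the training score; here only the rotated set suffers.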

Domain Shift

| | Overfitting | Domain Shift |
|---|---|---|
| Symptom | Training ✓, testing ✗ | Training ✓, testing ✗ |
| Analogy | Studies only past exams, tested on same course | Masters C01, tested on C011 exam |
| Root cause | Model memorizes noise | \(P_{\text{source}}(x,y) \neq P_{\text{target}}(x,y)\) |
| Fixes | Regularization, dropout, simpler model | Different: that's today & next lecture |

Source domain = training | Target domain = deployment

What Does \(P_{\text{source}} \neq P_{\text{target}}\) Look Like?

Face ID: \(x\) = face scan, \(y\) = unlock / reject

Randomly sample one unlock attempt...

| \(x\) | \(y\) | \(P_{\text{source}}\) (pre-2020) | \(P_{\text{target}}\) (mid-2020) |

|---|---|---|---|

| full face | unlock | 47% | 8% |

| masked face | unlock | 0.1% | 42% |

An \((x, y)\) pair that was 0.1% of training is now 42% of deployment (contrived numbers for illustration).

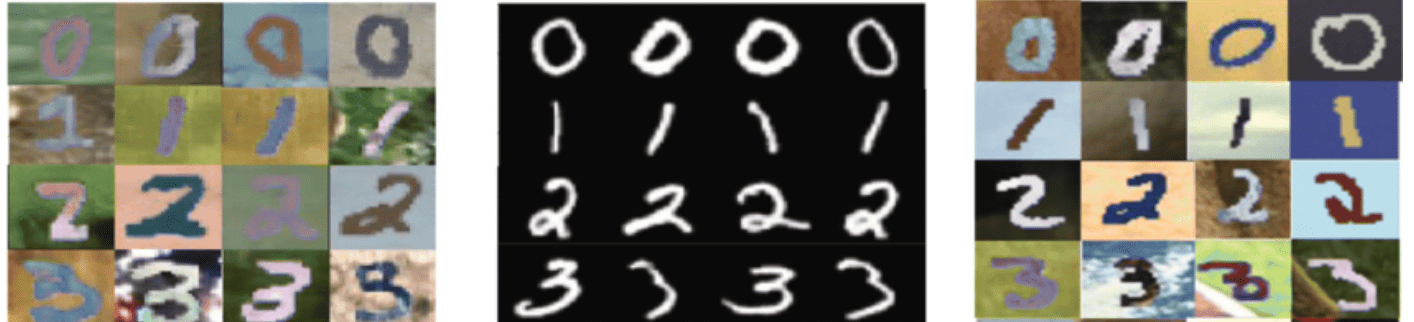

Domain Shift is Everywhere

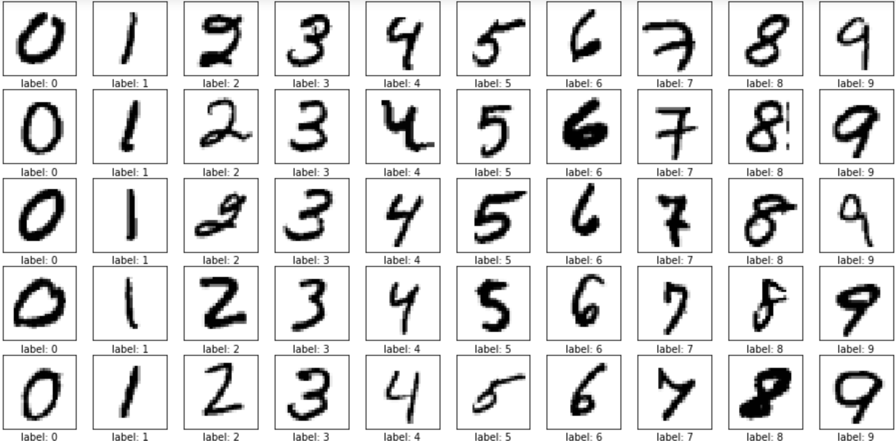

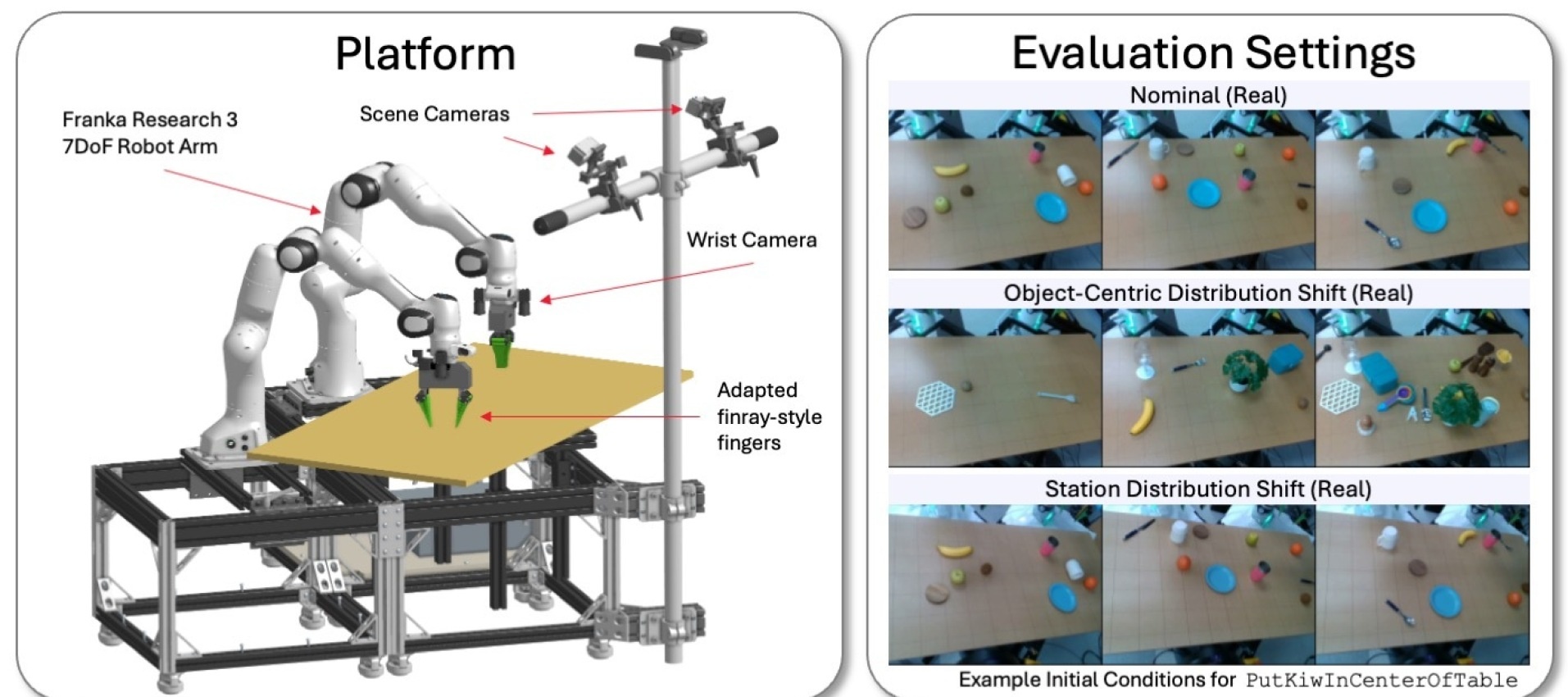

Robot platform and distribution shift settings: same task, different object and station configurations

Tedrake et al. (2025)

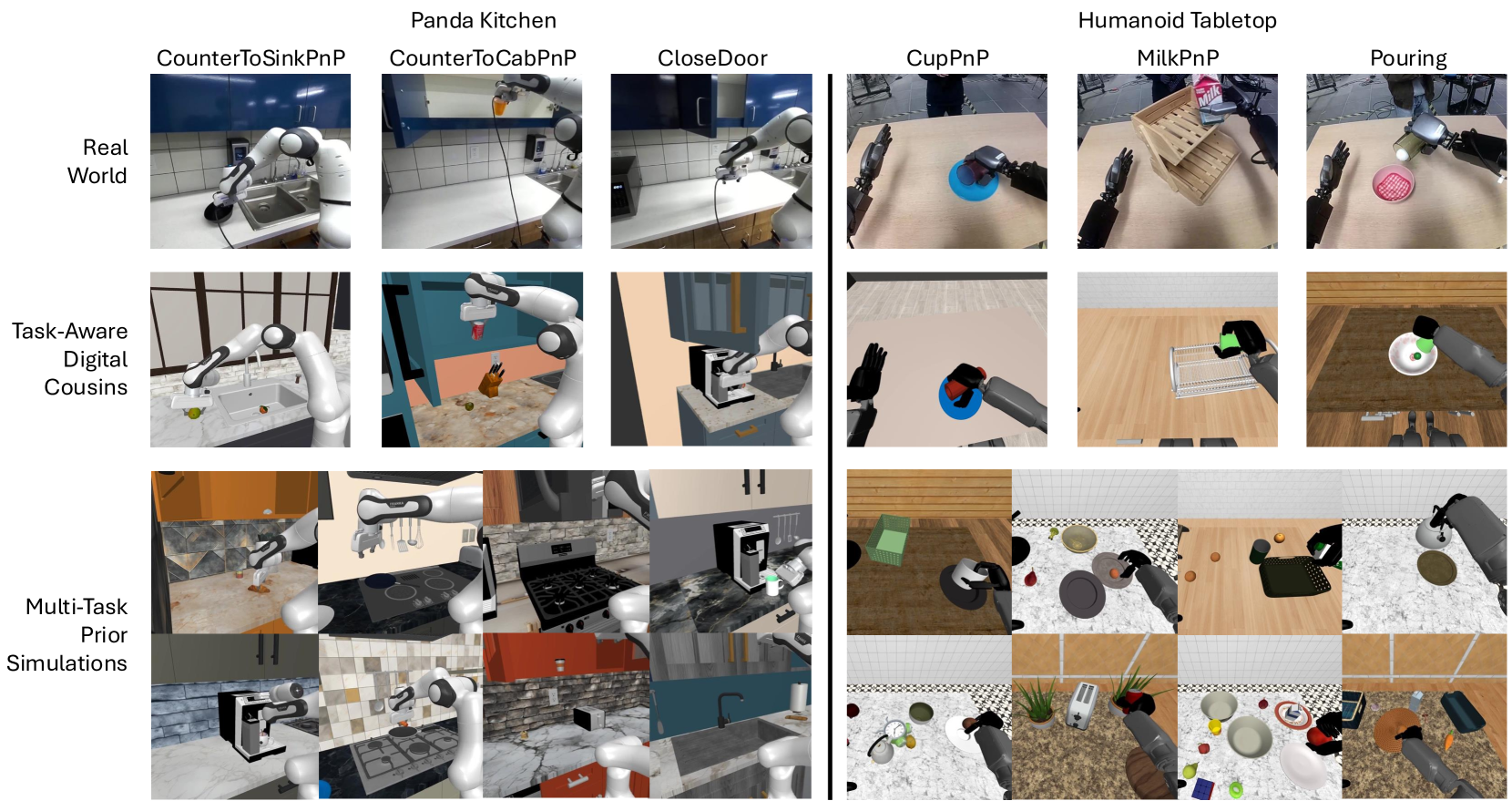

The Sim-to-Real Gap

Top: real world | Middle & bottom: simulation counterparts

Maddukuri et al. (2025)

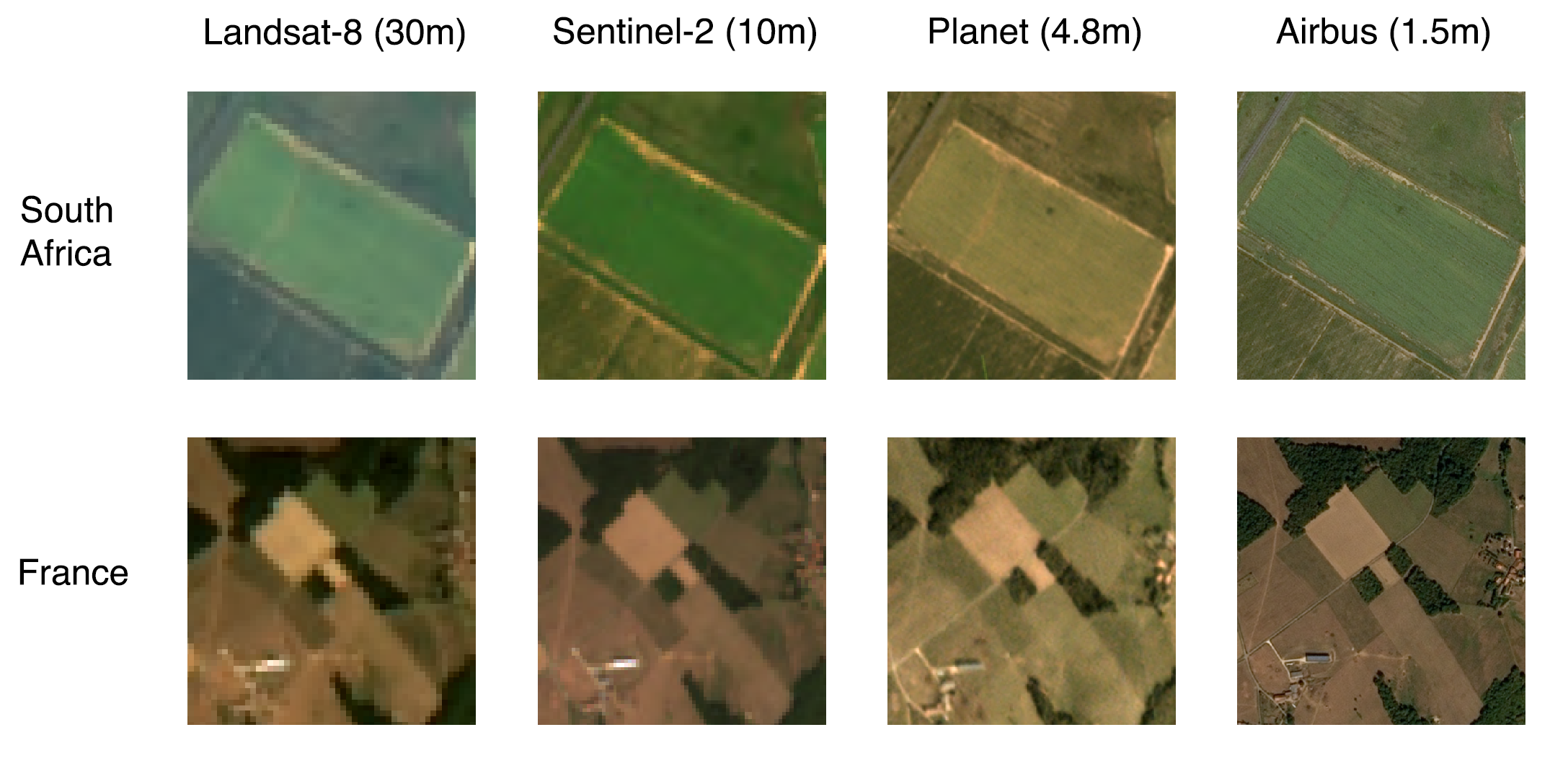

Satellite Imagery: Crop Classification

Large, regular fields → high accuracy

Tiny, irregular fields → much worse

Wang, Waldner & Lobell (2022)

Pattern 1: Covariate Shift

The inputs change, but the rules don't

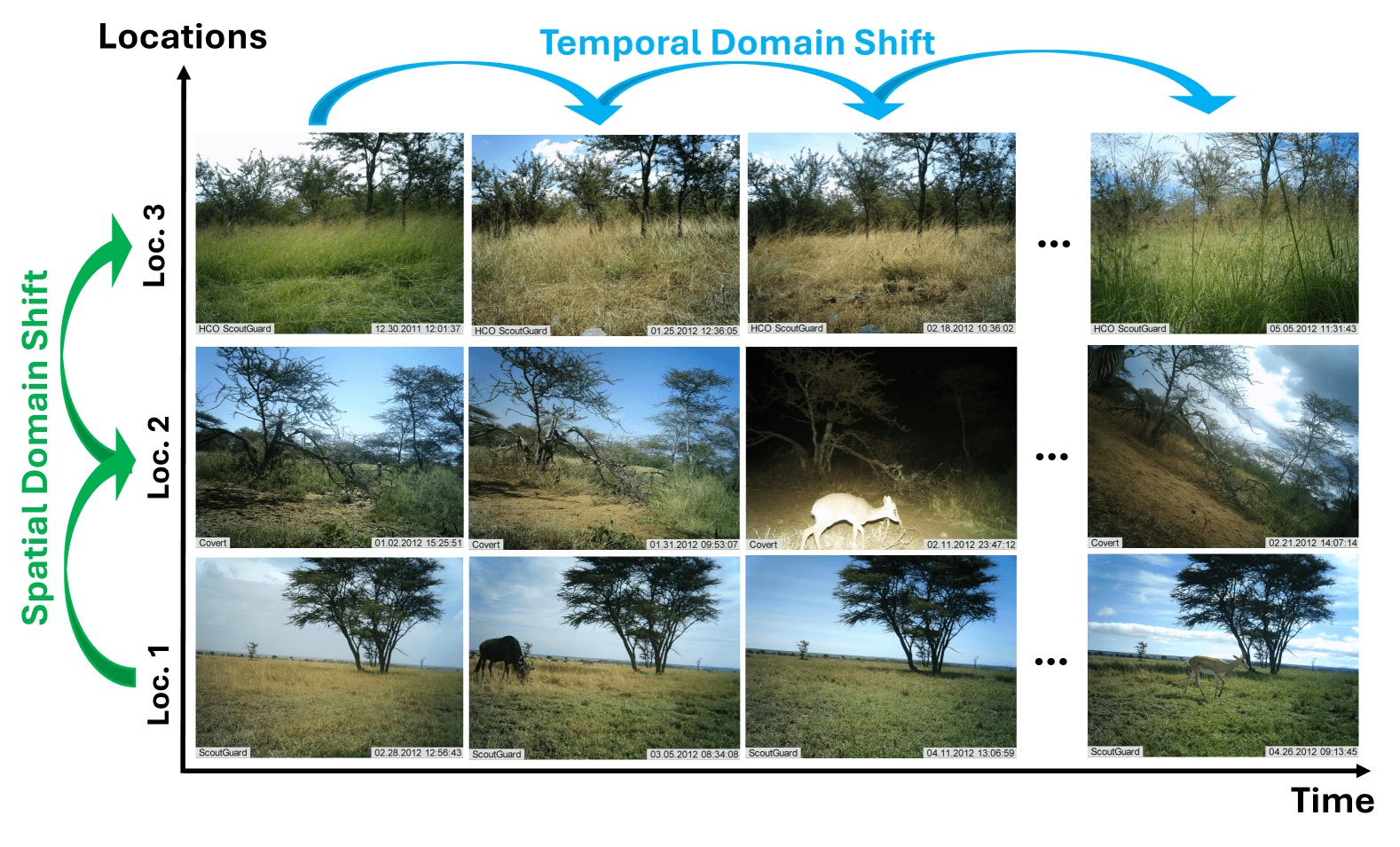

Covariate Shift: Wildlife Monitoring

Training: one location, one season

Deployment: new locations, new times

Same Deer, Different Pixels

A deer in a different place, at a different time, is still a deer.

Same ears. Same legs. Same antlers.

Didn't change

The relationship between "deer features" and "deer"

Changed

The background — location, lighting, vegetation

The rules are the same — only the inputs look different.

Covariate Shift: Definition

\(P(x)\) changes — inputs look different

\(P(y|x)\) stays the same — rules unchanged

The deer detector's knowledge is still valid — a deer is still a deer.

It just needs adjusting for different-looking inputs.

The most common type of domain shift.

Why the Joint Changes: \(P(x)\) Shifts

\(P(x, y) = P(y|x) \cdot P(x)\)

| | \(P(\text{deer} \mid \text{infrared image})\) | \(P(\text{infrared image})\) | \(P(\text{infrared, deer})\) |
|---|---|---|---|
| Training | 20% | 5% | 1% |
| Deployment | 20% | 90% | 18% |

Same conditional × different input frequency = different joint → \(P_{\text{source}} \neq P_{\text{target}}\)
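
The arithmetic in the table can be checked in a few lines. This is only a restatement of \(P(x, y) = P(y|x) \cdot P(x)\) with the slide's illustrative numbers; the function and variable names are made up for this sketch.

```python
def joint(conditional, marginal):
    """P(x, y) = P(y | x) * P(x)."""
    return conditional * marginal

p_deer_given_ir = 0.20                    # P(deer | infrared image), same in both domains
p_ir_train, p_ir_deploy = 0.05, 0.90      # P(infrared image) in training vs deployment

joint_train = joint(p_deer_given_ir, p_ir_train)
joint_deploy = joint(p_deer_given_ir, p_ir_deploy)
print(f"training joint {joint_train:.0%}, deployment joint {joint_deploy:.0%}")
```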

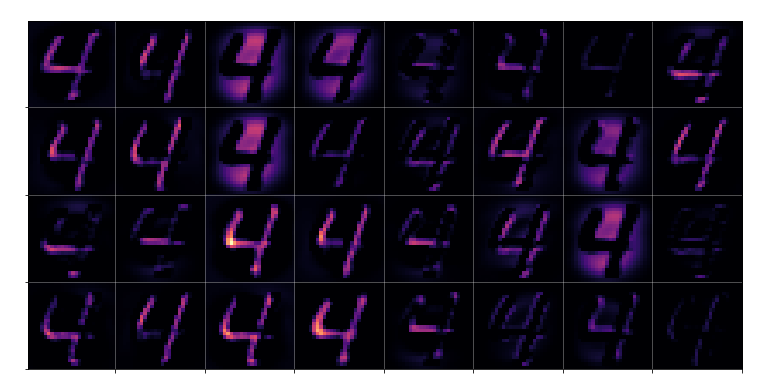

Covariate Shift: You've Seen It

| Example | \(x\) | \(P(x)\) shifted | \(P(y \mid x)\) same |
|---|---|---|---|
| Face ID + masks | face scan | masked faces everywhere | your identity didn't change |
| MNIST rotation | digit image | rotated pixels | a 3 is still a 3 |
| Sim-to-real robotics | camera image | sim vs real textures | same grasping task |
| Wildlife monitoring | trail camera | day vs infrared night | a deer is still a deer |

Same rules, different-looking inputs → covariate shift.

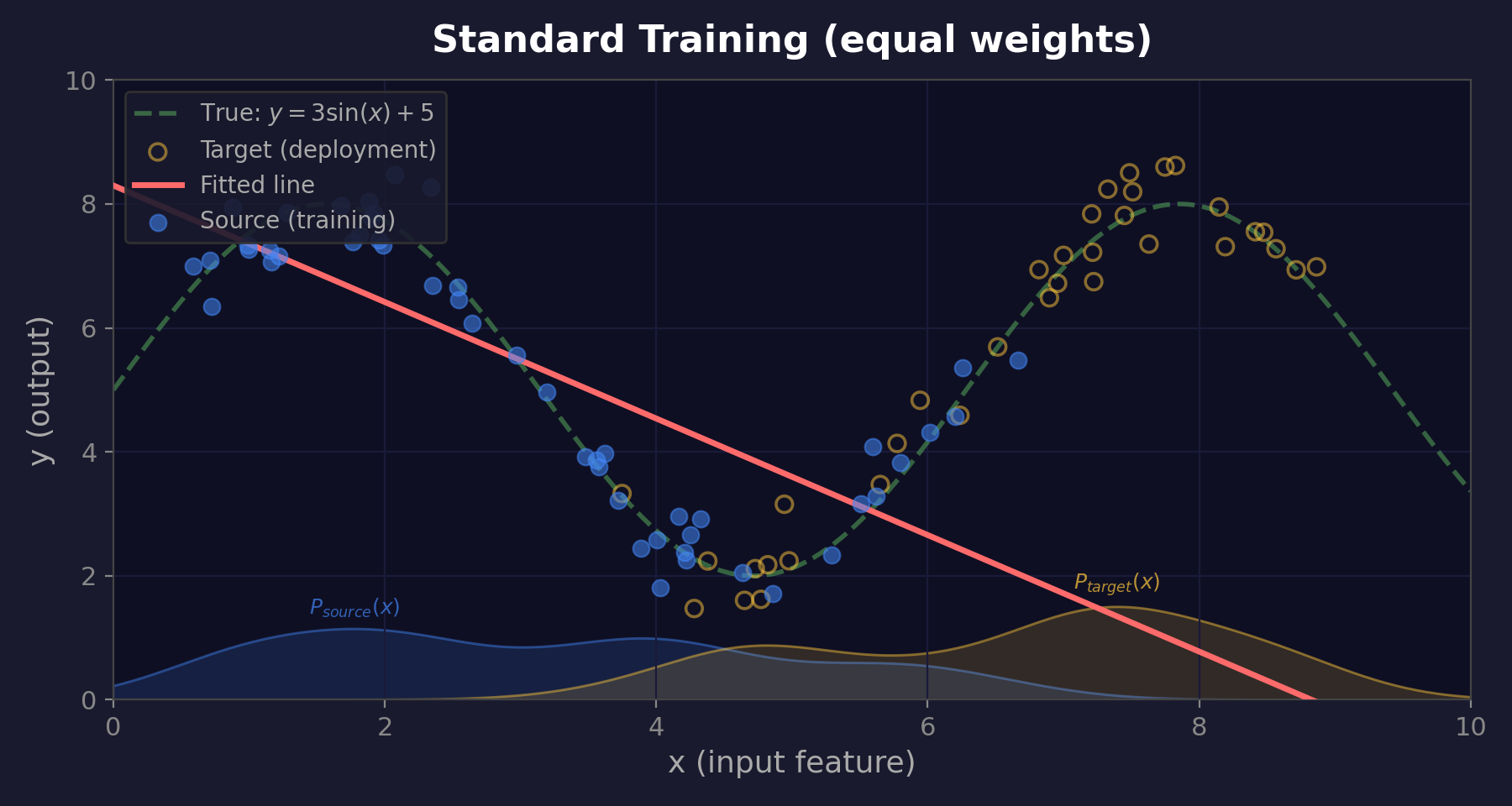

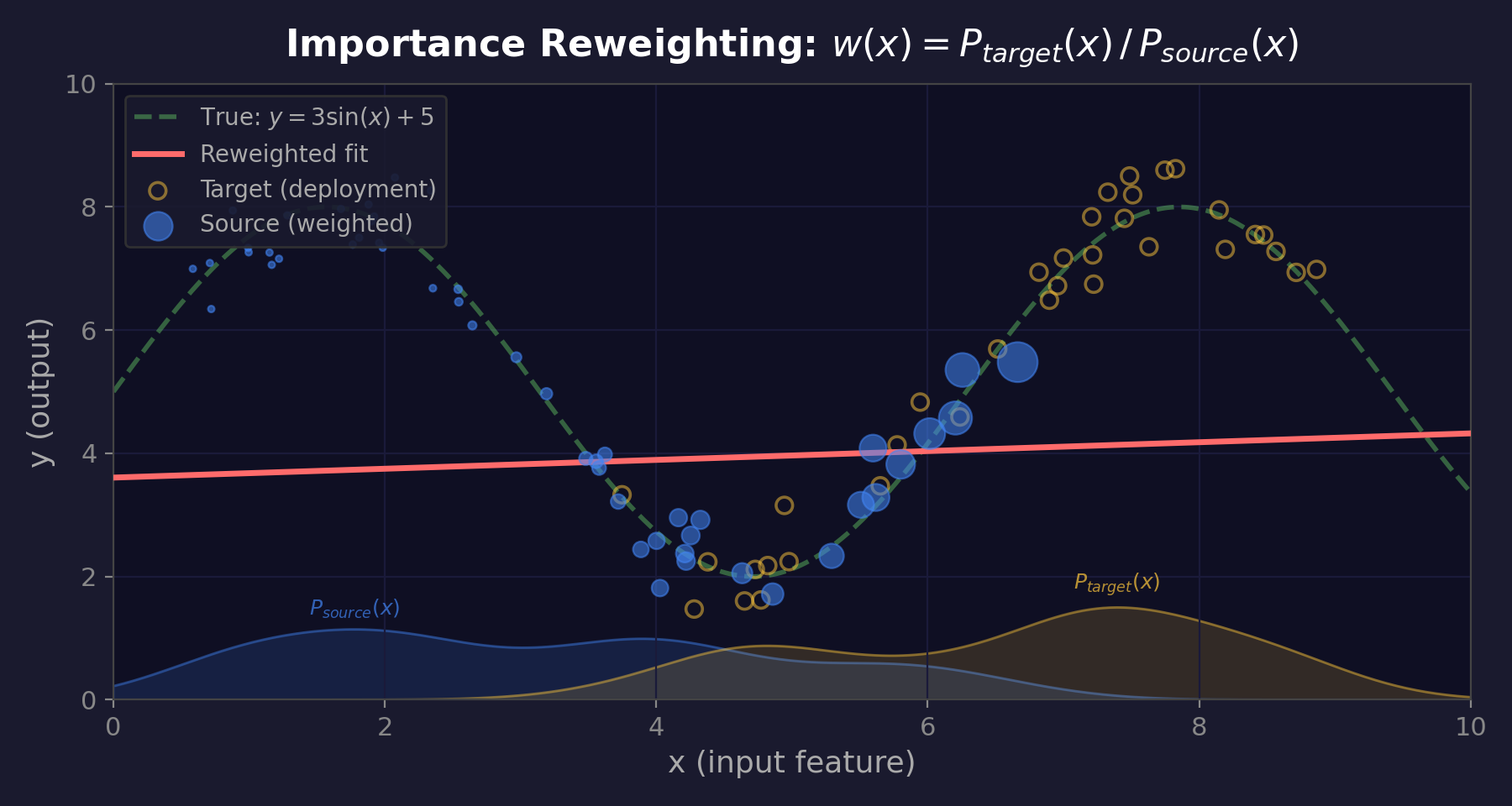

Fixing Covariate Shift

Weight each training example by: \(w(x) = \frac{P_{\text{target}}(x)}{P_{\text{source}}(x)}\)

Common in target → boost | Rare in target → reduce
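
A rough sketch of importance weighting, under strong assumptions: both input densities are known 1-D Gaussians (source \(N(0,1)\), target \(N(1,1)\)), and `loss` is a stand-in for a per-example loss. In practice the density ratio usually has to be estimated; none of these names come from the lecture.

```python
import math
import random

def gaussian_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def importance_weight(x, source=(0.0, 1.0), target=(1.0, 1.0)):
    """w(x) = P_target(x) / P_source(x) for two assumed 1-D Gaussians."""
    return gaussian_pdf(x, *target) / gaussian_pdf(x, *source)

def loss(x):
    """Stand-in per-example loss; any function of x works here."""
    return x * x

rng = random.Random(0)
source_xs = [rng.gauss(0.0, 1.0) for _ in range(20000)]   # what we trained on
target_xs = [rng.gauss(1.0, 1.0) for _ in range(20000)]   # what deployment looks like

weights = [importance_weight(x) for x in source_xs]
unweighted = sum(loss(x) for x in source_xs) / len(source_xs)
# Self-normalized weighted average over SOURCE samples only.
reweighted = sum(w * loss(x) for w, x in zip(weights, source_xs)) / sum(weights)
target_avg = sum(loss(x) for x in target_xs) / len(target_xs)

print(f"unweighted {unweighted:.2f} | reweighted {reweighted:.2f} | target {target_avg:.2f}")
```

The reweighted average uses only source samples, yet it should land much closer to the target average than the unweighted one does.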

Other Fixes (Preview)

Reweighting isn't the only approach:

🔧

Finetuning

Continue training on target data

Deer detector + a few night images

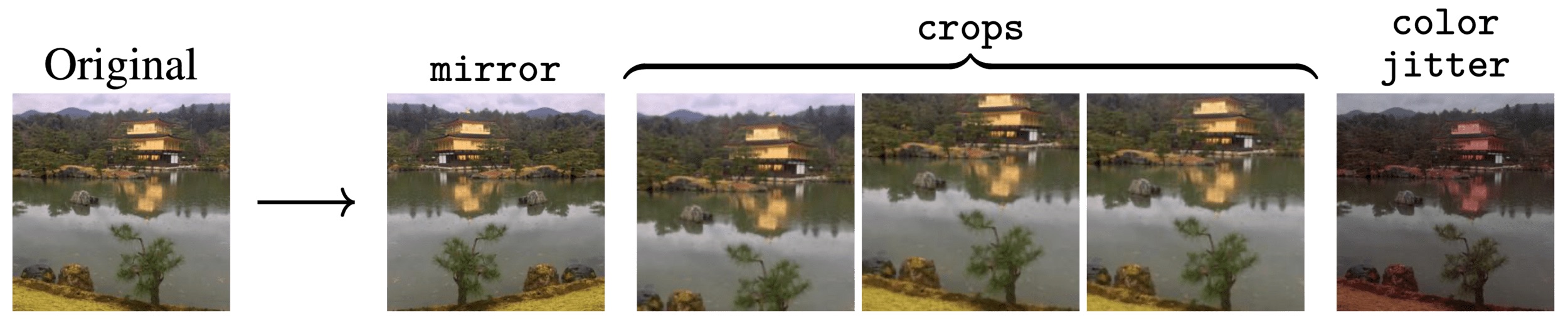

🎲

Data Augmentation

Simulate target conditions during training

Synthetically darken daytime photos

⚡

Test-Time Adaptation

Adapt on-the-fly at deployment

No target labels needed

We'll cover these in detail next lecture.
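
As a small illustration of the augmentation idea (synthetically darkening daytime photos), here is a sketch on a made-up 3x3 grayscale "image" stored as nested lists. The brightness range and function names are assumptions; a real pipeline would use an image library and more realistic transforms.

```python
import random

def darken(image, factor):
    """Scale every pixel of a grayscale image (values 0-255) by `factor` in [0, 1]."""
    return [[int(pixel * factor) for pixel in row] for row in image]

def augment(image, rng, min_factor=0.2, max_factor=1.0):
    """Return a randomly darkened copy; the image's label stays the same."""
    return darken(image, rng.uniform(min_factor, max_factor))

rng = random.Random(0)
day_photo = [[200, 180, 160], [220, 210, 90], [255, 30, 0]]   # made-up pixel values
night_like = augment(day_photo, rng)
print(night_like)
```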

Pattern 2: Label Shift

Semester: 40%, 30%, 15%, 15% | Summer: 10%, 25%, 30%, 35%

\(P(y)\) changed

👩‍💻: studying or fun? Same image, different odds.

Label Shift: Cold Diagnosis

Same cold detection model, different patient populations:

Children's Hospital

Kids get 6–8 colds per year

MGH (adult general hospital)

Adults get 2–3 colds per year

\(P(x|y)\) same — a cold looks the same in kids and adults

\(P(y)\) changed — prevalence is very different

Also called: prior probability shift, target shift

Why the Joint Changes: \(P(y)\) Shifts

\(P(x, y) = P(x|y) \cdot P(y)\)

| | \(P(\text{runny nose} \mid \text{cold})\) | \(P(\text{cold})\) | \(P(\text{runny nose, cold})\) |
|---|---|---|---|
| Children's | 80% | 40% | 32% |
| MGH | 80% | 10% | 8% |

Same conditional × different prior = different joint → \(P_{\text{source}} \neq P_{\text{target}}\)

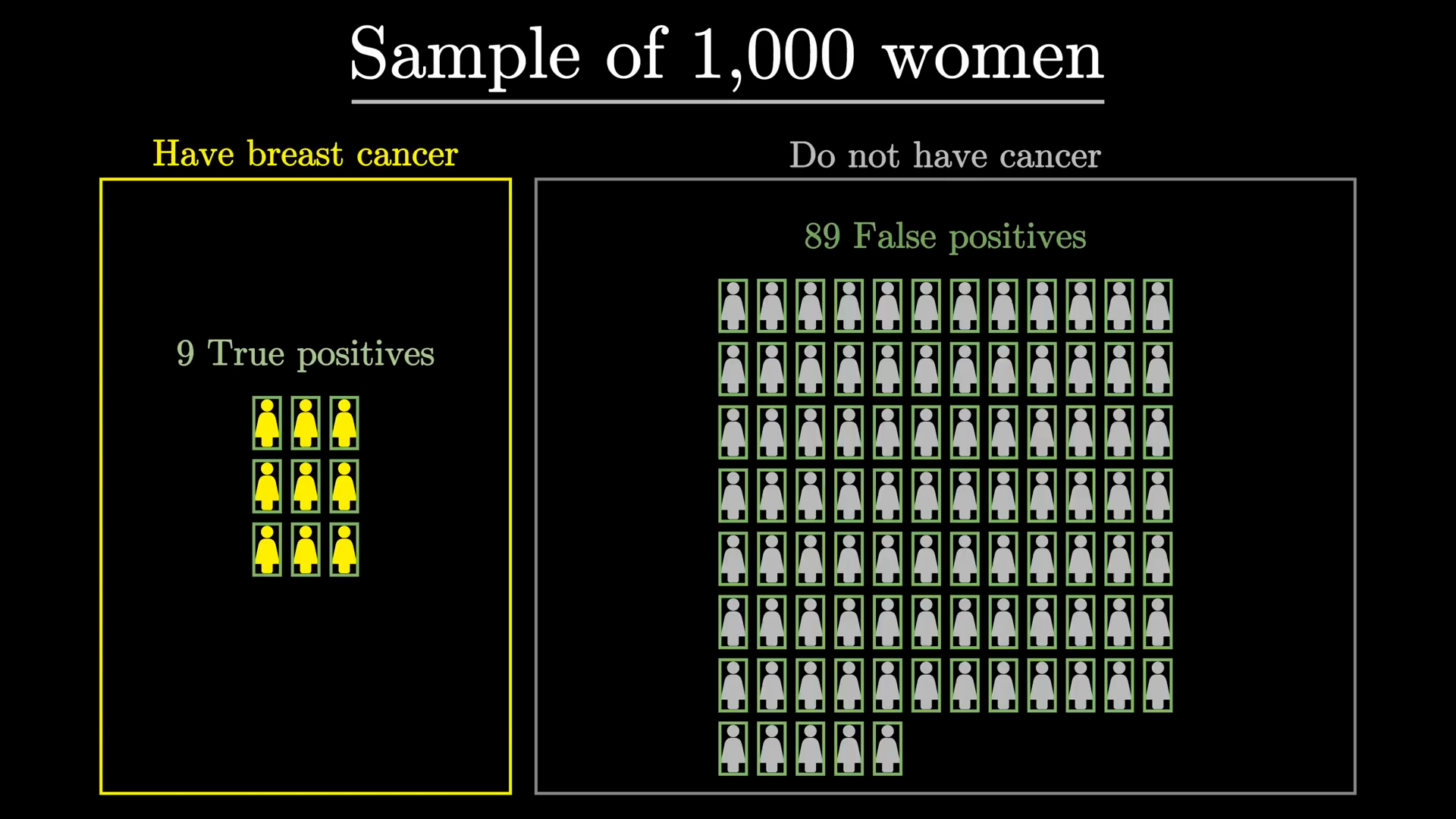

Label Shift as Bayes' Rule

3Blue1Brown, The medical test paradox

Sensitivity = 90%, specificity = 91%. You test positive.

| | \(P(\text{disease})\) | \(P(\text{disease} \mid +)\) |
|---|---|---|
| 10% prevalence | 10% | ~50% |
| 1% prevalence | 1% | ~9% |

10% case: \(\frac{0.9 \cdot 0.10}{0.9 \cdot 0.10 + 0.09 \cdot 0.90} = \frac{0.09}{0.171} \approx 0.53 \approx 50\%\)

1% case: \(\frac{0.9 \cdot 0.01}{0.9 \cdot 0.01 + 0.09 \cdot 0.99} \approx 0.09\)

Same test, same \(P(x|y)\) — the prior \(P(y)\) changes everything.

That's label shift.

\[ P(D|+) = \frac{P(+|D)P(D)}{P(+|D)P(D) + P(+|\neg D)P(\neg D)} \]
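
The two cases can be reproduced with a direct transcription of Bayes' rule. A minimal sketch using the slide's sensitivity (90%) and specificity (91%); the function name is made up.

```python
def posterior(prevalence, sensitivity=0.90, specificity=0.91):
    """P(disease | positive test) via Bayes' rule."""
    true_pos = sensitivity * prevalence                    # P(+ | D) P(D)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)   # P(+ | not D) P(not D)
    return true_pos / (true_pos + false_pos)

for prevalence in (0.10, 0.01):
    print(f"prevalence {prevalence:.0%} -> P(disease | +) = {posterior(prevalence):.3f}")
```

Only the `prevalence` argument changes between the two calls, which is exactly what label shift changes.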

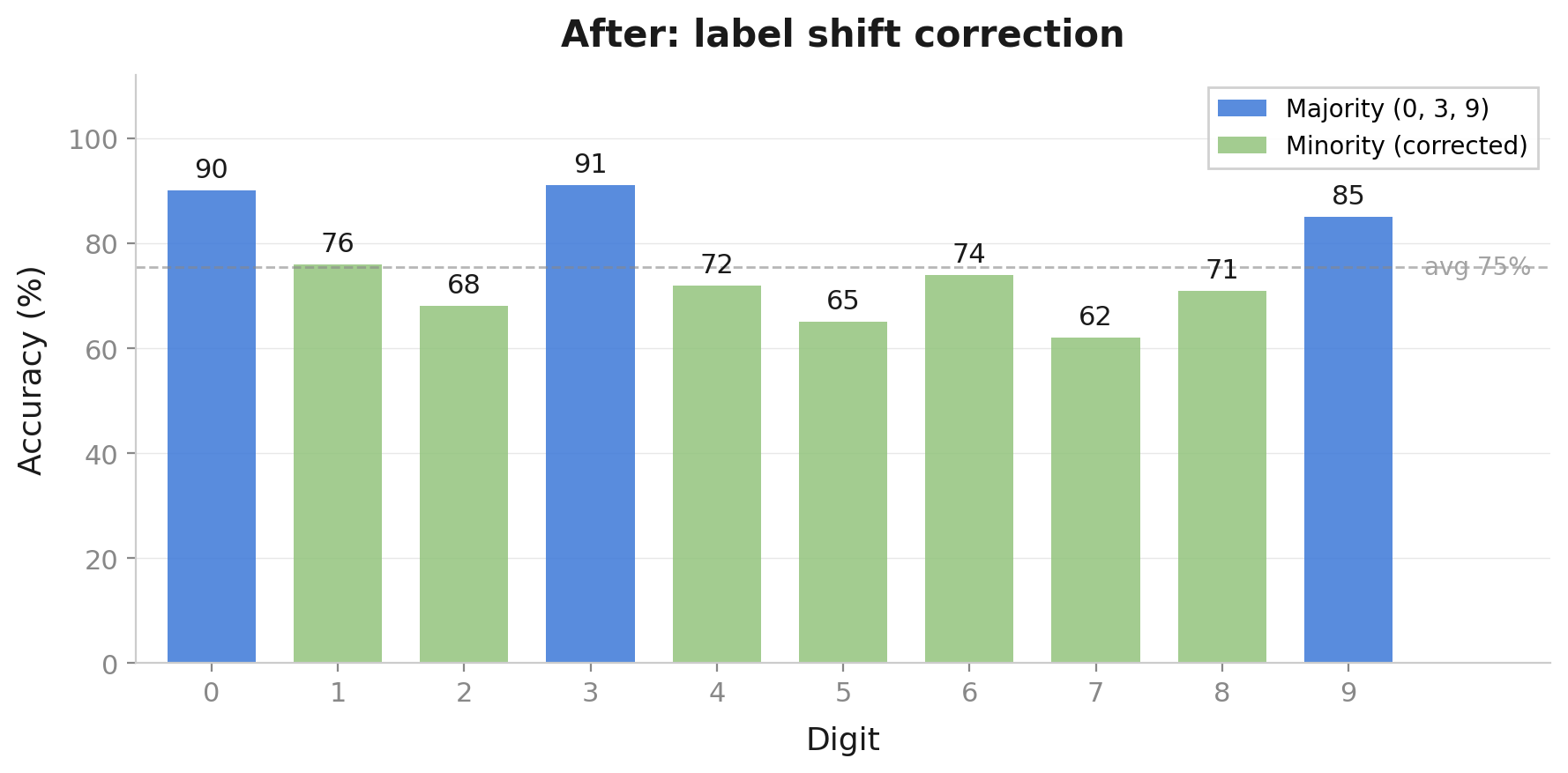

Fixing Label Shift

Adjust predictions: \(P_{\text{target}}(y|x) \propto P_{\text{source}}(y|x) \cdot \frac{P_{\text{target}}(y)}{P_{\text{source}}(y)}\)

Common in target → boost | Rare in target → reduce
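
A sketch of the prior adjustment, assuming both class priors are known. The priors reuse the cold-prevalence numbers from the earlier table (40% vs 10%); the model output of 0.70 is a made-up value for illustration, and the function name is hypothetical.

```python
def adjust_for_label_shift(source_posterior, source_prior, target_prior):
    """Rescale P_source(y|x) by P_target(y) / P_source(y), then renormalize."""
    unnormalized = {
        y: p * target_prior[y] / source_prior[y]
        for y, p in source_posterior.items()
    }
    total = sum(unnormalized.values())
    return {y: v / total for y, v in unnormalized.items()}

source_prior = {"cold": 0.40, "no cold": 0.60}   # where the model was trained
target_prior = {"cold": 0.10, "no cold": 0.90}   # where it is deployed
model_output = {"cold": 0.70, "no cold": 0.30}   # hypothetical P_source(y | x)

adjusted = adjust_for_label_shift(model_output, source_prior, target_prior)
print(adjusted)
```

The renormalization step is what turns the proportionality in the formula into actual probabilities.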

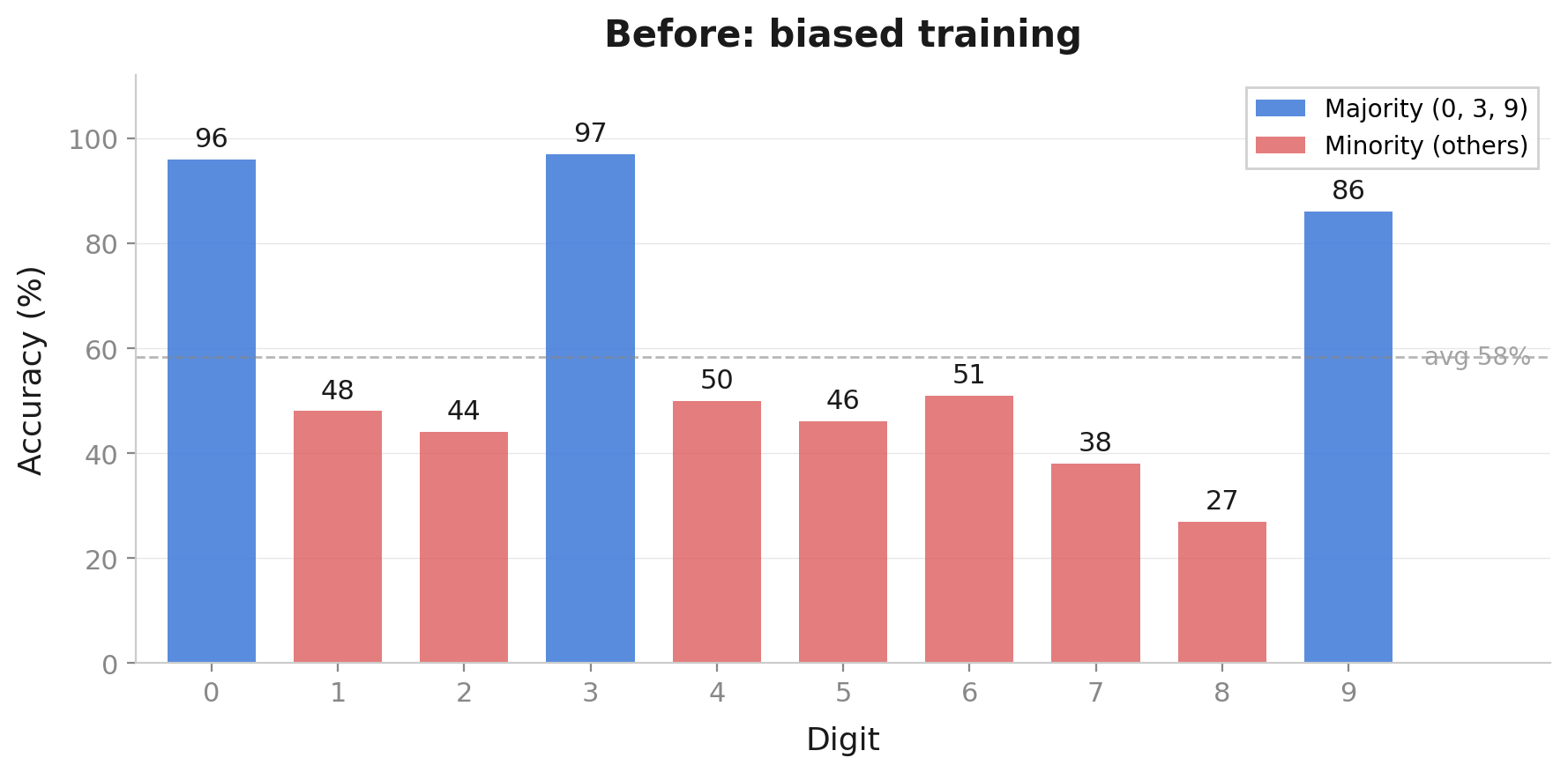

MNIST example demo

Two Patterns, One Framework

Covariate Shift

\(P(x)\) changes

\(P(y|x)\) stays same

World looks different, works the same

e.g. deer detector, sim-to-real, Face ID

Fix: reweight, finetune, augment

Label Shift

\(P(y)\) changes

\(P(x|y)\) stays same

World works the same, mix is different

e.g. cold diagnosis, MNIST class bias

Fix: adjust priors, rebalance

Identifying which pattern → determines the fix.

Which Type of Shift?

Spam filter: trained on Gmail, deployed on corporate email

→ Covariate — different writing style, same spam vs not-spam

Sentiment analysis: trained on restaurant reviews, deployed on electronics reviews

→ Covariate — different vocabulary, same positive/negative meaning

Disease screening: trained in flu season, deployed in summer

→ Label shift — same symptoms, way fewer sick patients

Satellite imagery: trained in France, deployed in India

→ Both! — different terrain (covariate) and different crop mix (label)

Your Turn: Loan Approval Model

Credit scoring model trained at a major bank in New York, deployed at a regional bank in rural Midwest.

What might be different?

- Applicant profiles (income levels, employment types)

- Default rates (different economic conditions)

Both types! — Different applicant features AND different default rates

The Diagnostic Framework

When we encounter a new deployment scenario, ask:

- Do the inputs look different? → Check for covariate shift

- Can a domain classifier separate source vs target? → measurable input shift

Probe: train \(d(x)\) to predict source vs target.

Near chance (~50%) → little detectable input shift; high accuracy → covariate shift signal.

- Are the class frequencies different? → Check for label shift

- Compare class distributions source vs target → class imbalance signals label shift

Probe: compare \(\hat{P}(y)\) across source and target.

Similar frequencies → no label shift; large gaps → label shift signal.

- Did the underlying rules change? → Might need to retrain
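
Both probes above can be sketched on toy data. Everything here is an assumption made for illustration: 1-D Gaussian inputs, a tiny hand-rolled logistic regression as \(d(x)\), total variation distance as the gap measure for \(\hat{P}(y)\), and some labeled target samples being available for the label check.

```python
import math
import random
from collections import Counter

def sigmoid(z):
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logistic(xs, ds, lr=0.5, epochs=200):
    """Full-batch gradient descent for a 1-D logistic regression d(x)."""
    w = b = 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, d in zip(xs, ds):
            err = sigmoid(w * x + b) - d
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

def domain_probe_accuracy(source, target, seed=0):
    """Train d(x) to tell source (0) from target (1); score it on a held-out half."""
    data = [(x, 0) for x in source] + [(x, 1) for x in target]
    random.Random(seed).shuffle(data)
    half = len(data) // 2
    train, held_out = data[:half], data[half:]
    w, b = fit_logistic([x for x, _ in train], [d for _, d in train])
    correct = sum((sigmoid(w * x + b) >= 0.5) == (d == 1) for x, d in held_out)
    return correct / len(held_out)

def label_frequencies(labels):
    counts = Counter(labels)
    return {y: c / len(labels) for y, c in counts.items()}

def total_variation(p, q):
    """Half the summed absolute gap between two class-frequency tables."""
    return 0.5 * sum(abs(p.get(y, 0.0) - q.get(y, 0.0)) for y in set(p) | set(q))

rng = random.Random(42)
source_x = [rng.gauss(0.0, 1.0) for _ in range(1000)]
same_x = [rng.gauss(0.0, 1.0) for _ in range(1000)]      # no input shift
shifted_x = [rng.gauss(2.0, 1.0) for _ in range(1000)]   # input shift

acc_no_shift = domain_probe_accuracy(source_x, same_x)
acc_shift = domain_probe_accuracy(source_x, shifted_x)

source_y = ["cold"] * 40 + ["no cold"] * 60   # made-up label samples
target_y = ["cold"] * 10 + ["no cold"] * 90
label_gap = total_variation(label_frequencies(source_y), label_frequencies(target_y))

print(f"probe accuracy: no shift {acc_no_shift:.2f}, shift {acc_shift:.2f}")
print(f"label frequency gap: {label_gap:.2f}")
```

Scoring the domain classifier on held-out data matters: accuracy on its own training set could look above chance even when the two input distributions are identical.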

Case Study: Waymo Phoenix → SF

Flat, wide, sunny → hills, fog, narrow streets

Diagnosing Waymo's Shift

| Question | Answer | Diagnosis |

|---|---|---|

| Inputs look different? | Yes — fog, hills, narrow streets | Covariate shift |

| Class frequencies different? | Yes — more cyclists, pedestrians | Label shift |

| Rules changed? | No — a stop sign is still a stop sign | No concept shift |

Domain-classifier check: source-vs-target frame classifier performs well → measurable input shift.

Both types — real-world shifts are often mixed.

How Waymo Adapted

- Collected SF data — thousands of miles with safety drivers

- Reweighted — upweight rare SF scenarios (fog, hills) ← covariate shift fix

- Rebalanced — adjust for higher cyclist/pedestrian frequency ← label shift fix

- Finetuned — Phoenix model as starting point + SF data

- Augmented — synthetic fog, hills, unusual traffic

After years of adaptation, Waymo now operates commercially in SF.

Summary

Domain shift is not overfitting — the world changed, not your model's complexity.

Covariate shift (\(P(x)\) changes, \(P(y|x)\) same) is the most common pattern — fix with reweighting, finetuning, or augmentation.

Label shift (\(P(y)\) changes, \(P(x|y)\) same) requires adjusting class priors, not model architecture.

Diagnosis before treatment — identify what changed, then pick the matching fix.

Models are frozen snapshots of one distribution; the world keeps moving, so anticipate and adapt.

Reference: Shift Types

| Shift Type | What Changes? | What Stays Same? | Fix Strategy | Today's Examples |
|---|---|---|---|---|
| Covariate | \(P(x)\) | \(P(y \mid x)\) | Reweight, finetune, augment | Face ID, deer detector, sim-to-real |
| Label | \(P(y)\) | \(P(x \mid y)\) | Adjust priors, rebalance | Cold diagnosis, MNIST class bias |
| Concept (preview) | \(P(y \mid x)\) | Nothing guaranteed | Retrain, continual learning | Next lecture! |

Next Time: Making Models Robust

Now that we can diagnose domain shift... how do we fix it?

AI Educators Pilot - Lecture - Domain Shift

By Shen Shen
